When AI Finds Bugs Humans Missed for 27 Years, Your Governance Playbook Needs to Change

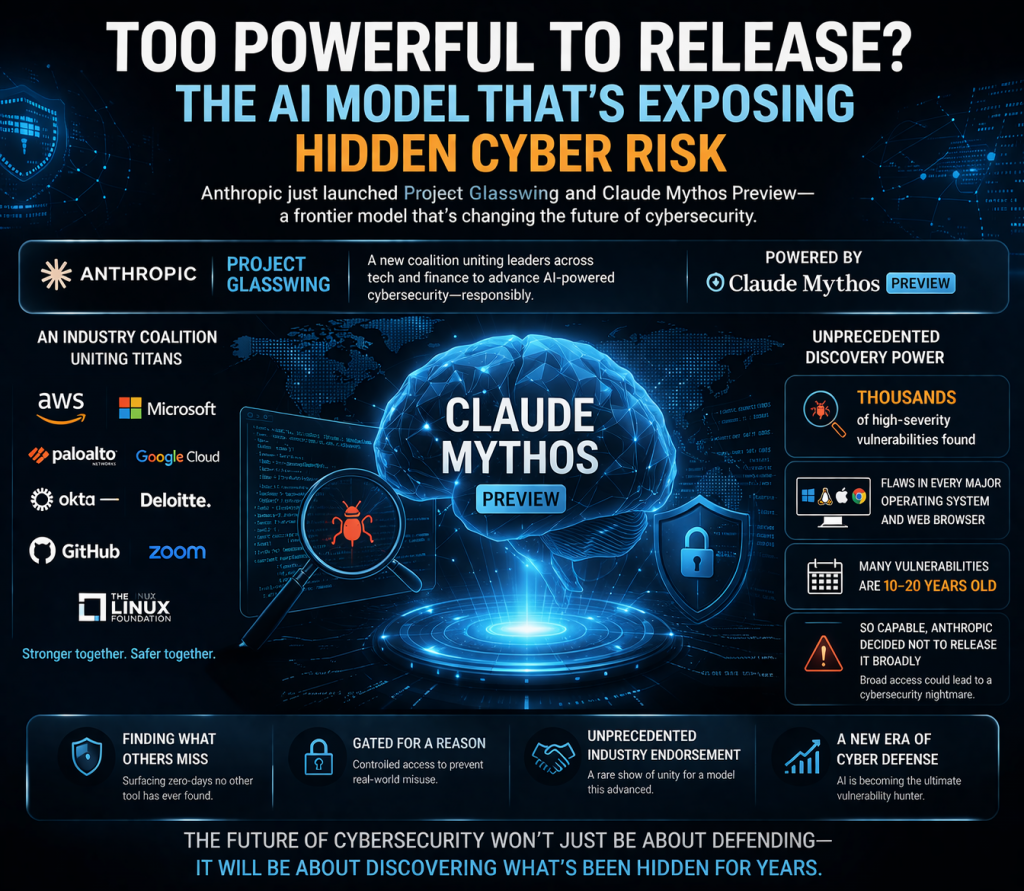

Last month, Anthropic announced Project Glasswing.

Fifty of the most consequential technology and financial institutions on the planet — Apple, Google, Microsoft, AWS, Nvidia, Cisco, Broadcom, Palo Alto Networks, CrowdStrike, JPMorgan Chase, the Linux Foundation, and roughly forty more — are now part of a coordinated effort to use a single AI model, Claude Mythos Preview, to find and fix vulnerabilities in the software the rest of us depend on.

Mozilla has already gone public with results. Firefox 150 shipped with 271 vulnerabilities patched in a single release, surfaced by Mythos in about four weeks of focused work. Among Mythos's other reported finds: a bug that had quietly survived 27 years inside OpenBSD, an operating system whose reputation rests on security.

Read that again. Twenty-seven years of expert human review missed what an AI surfaced in under a month.

That’s the headline. The headline isn’t the story.

The real story is what this does to governance

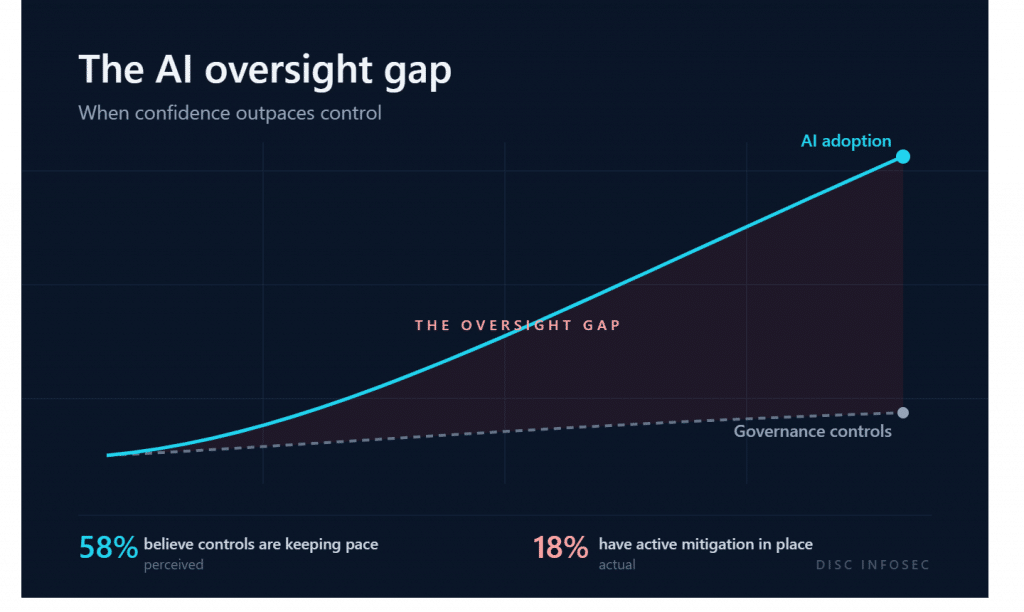

For two decades, the answer to “how do I trust this software?” has been a stack of artifacts: SOC 2 reports, penetration tests, vendor questionnaires, patch cadence policies. Those artifacts assume a world where vulnerability discovery is bottlenecked by human expertise.

That assumption just broke.

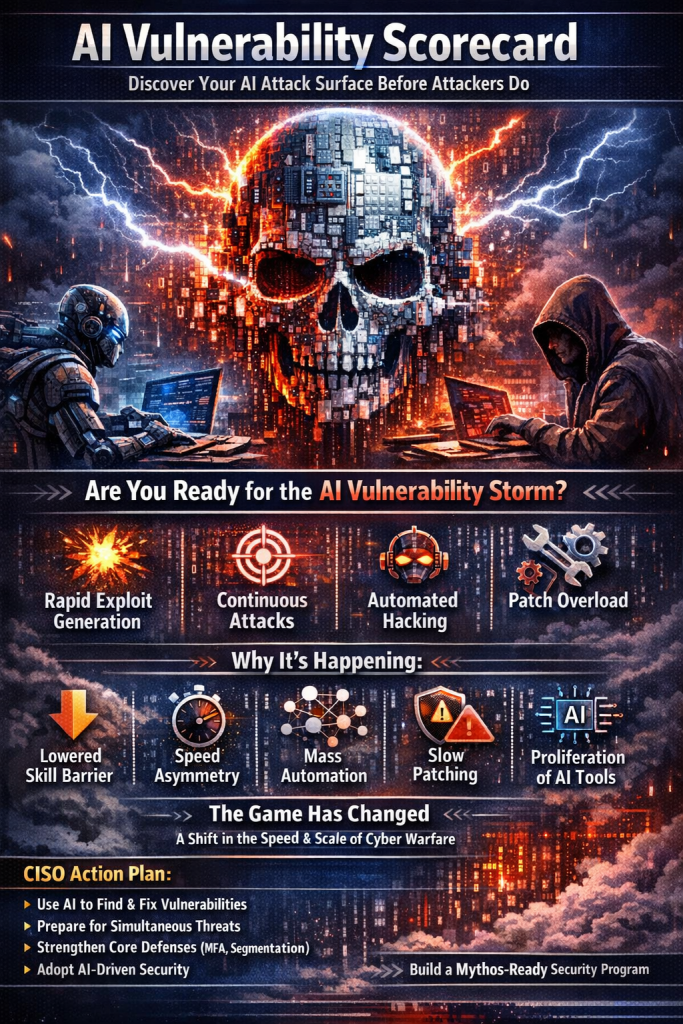

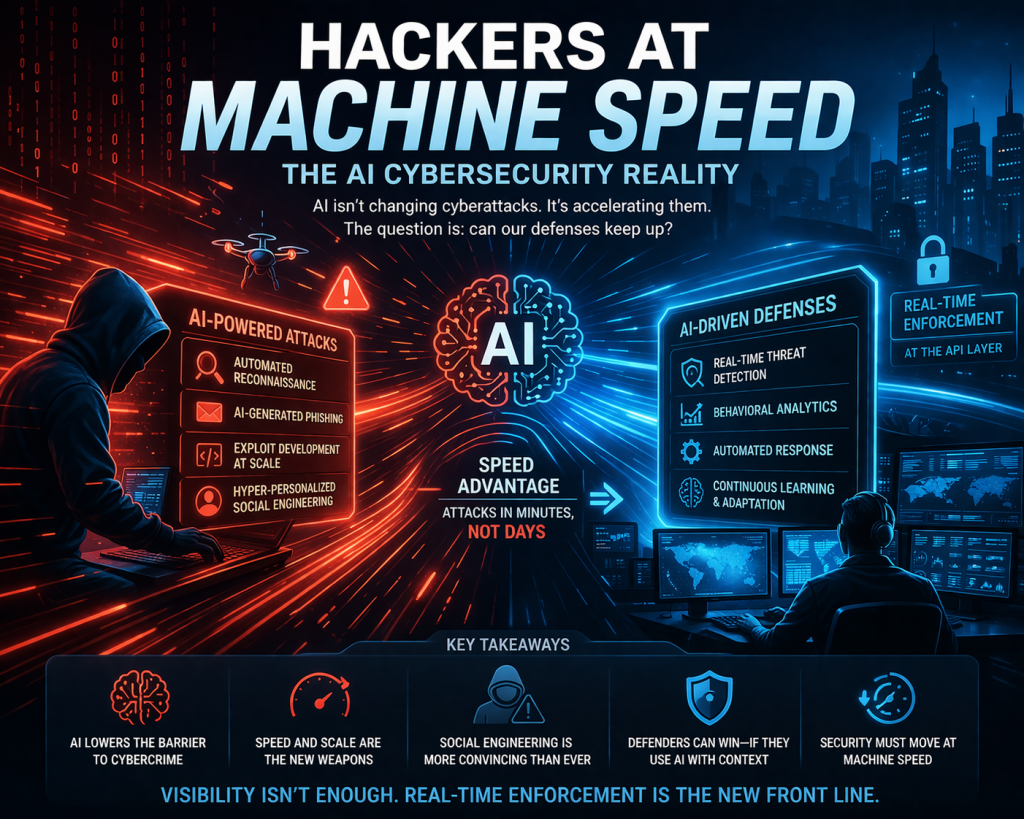

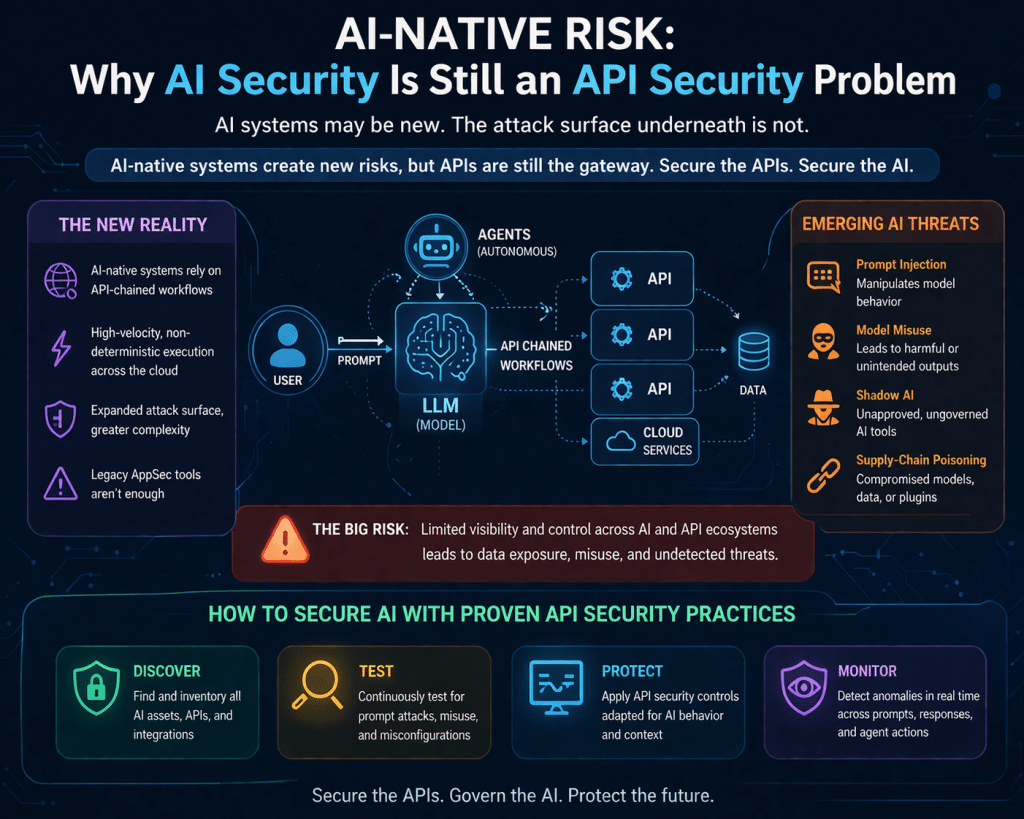

When one AI can audit every line of code in a major operating system and write working exploit code for the bugs it finds, three things move at the same time:

The bar for “reasonable security” shifts. Insurers, regulators, and enterprise buyers will start asking whether vendors use AI-assisted code review. Vendors who can’t answer cleanly will be in a different risk category by 2027.

The window between patch and exploit collapses. Attackers have always reverse-engineered patches. AI compresses that work from days to minutes. If your mean time to patch critical vulnerabilities is north of 30 days, that window is materially more dangerous than it was last year.

Disclosure infrastructure shows its age. CISA’s coordinated disclosure process and the CNA framework were designed for human-paced research. They were not built for a coalition of fifty organizations running a model that finds thousands of zero-days on a quarterly cadence.

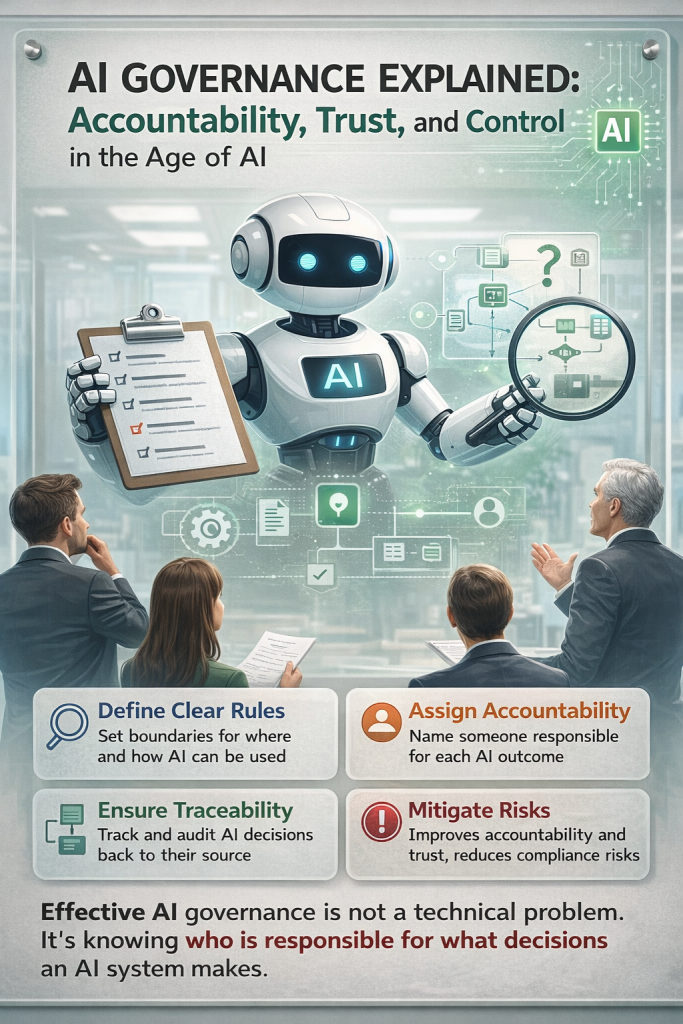

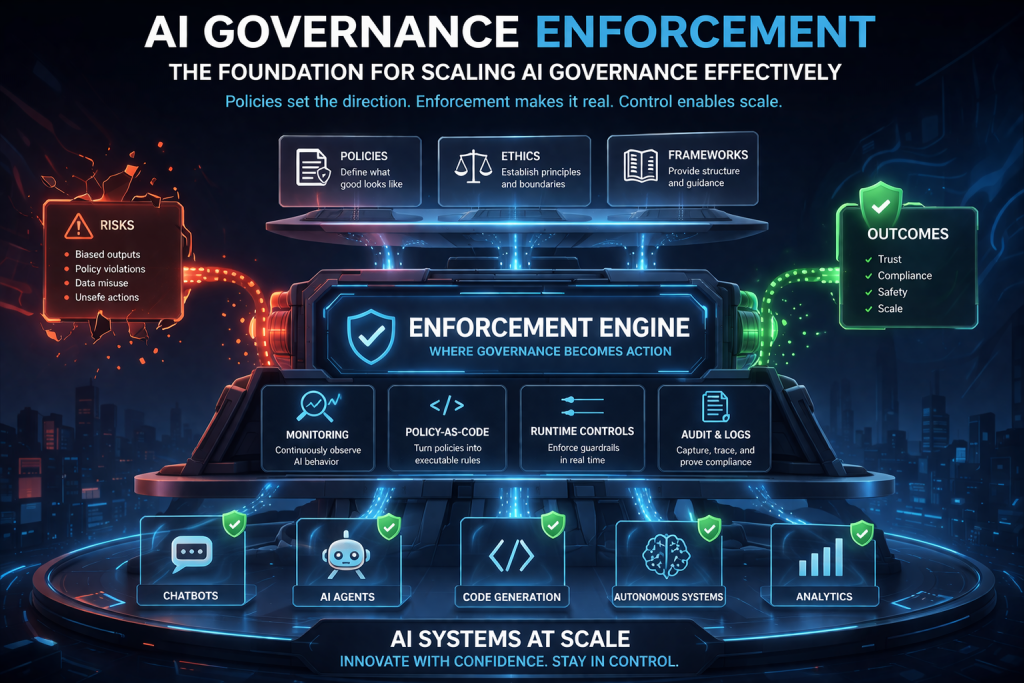

Where AI governance comes in

I’ve spent the last two years implementing ISO/IEC 42001 in production — including taking ShareVault, a virtual data room serving M&A and financial services clients, through a successful Stage 2 audit.

That work taught me one thing clearly: AI governance is not a downstream compliance exercise. It’s the operating layer for trust.

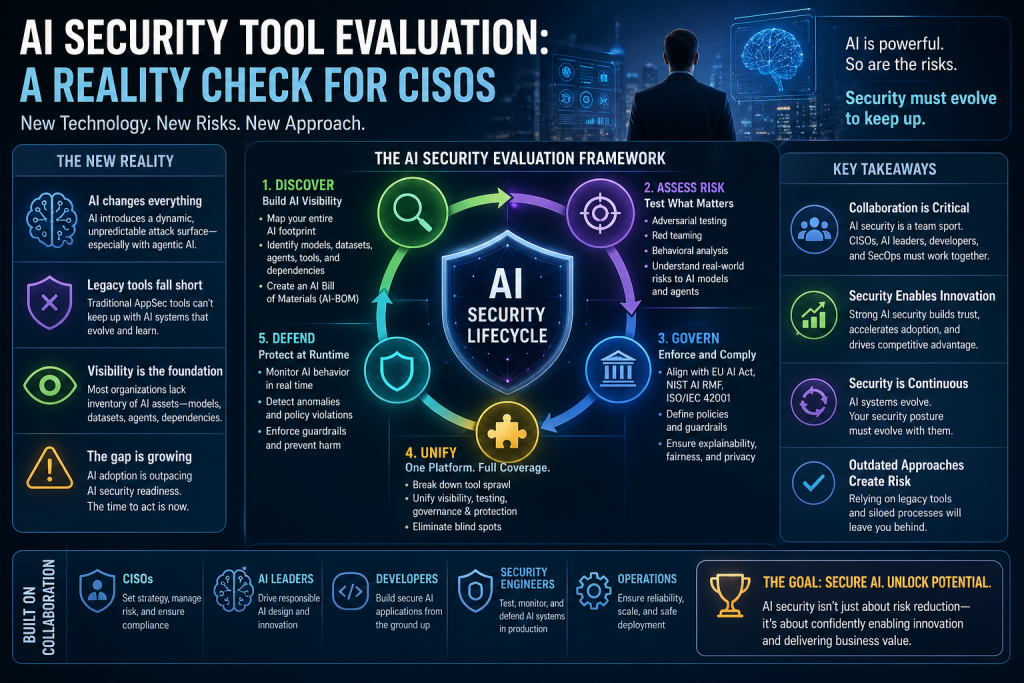

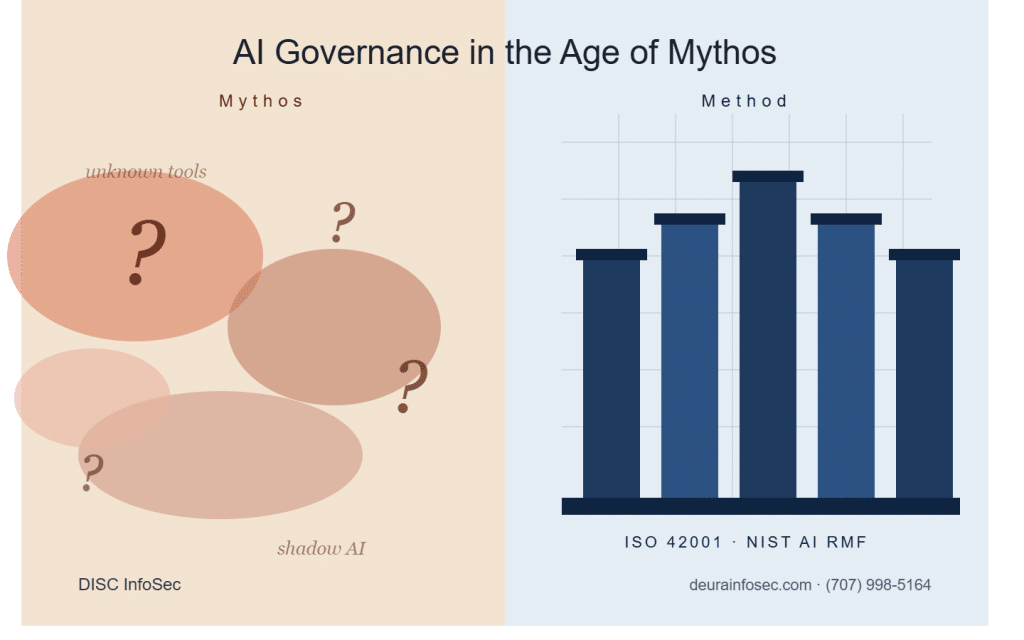

Glasswing is the most visible signal yet that the governance question is moving from “should we use AI in security-critical workflows?” to “what controls govern its use, who has access, and how do we verify the answers?” Those are the questions every framework (ISO 42001, NIST AI RMF, the EU AI Act) was built to answer. Most organizations haven’t actually mapped controls to use cases yet. The ones that do it this quarter will own the conversation in 2027.
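To make "mapping controls to use cases" concrete, here is a minimal sketch of a use-case register with a gap check. Everything in it is an illustrative assumption: the `UseCase` fields, the control IDs (`CTRL-ACCESS`, `CTRL-LOGGING`), and the evidence file name are hypothetical placeholders, not identifiers from ISO 42001, NIST AI RMF, or the EU AI Act.

```python
from dataclasses import dataclass, field


@dataclass
class UseCase:
    """One AI use case, its accountable owner, and the controls mapped to it."""
    name: str
    owner: str
    # Internal control IDs (hypothetical placeholders, not framework clause numbers).
    controls: list[str] = field(default_factory=list)
    # Control ID -> pointer to audit evidence (hypothetical file names).
    evidence: dict[str, str] = field(default_factory=dict)


def find_gaps(use_cases):
    """Return {use case name: [issues]} for unmapped use cases or controls without evidence."""
    gaps = {}
    for uc in use_cases:
        issues = []
        if not uc.controls:
            issues.append("no controls mapped")
        for control in uc.controls:
            if control not in uc.evidence:
                issues.append(f"no evidence for {control}")
        if issues:
            gaps[uc.name] = issues
    return gaps


register = [
    UseCase(
        "AI-assisted code review",
        "Head of Engineering",
        controls=["CTRL-ACCESS", "CTRL-LOGGING"],
        evidence={"CTRL-ACCESS": "access-review.pdf"},
    ),
    UseCase("Support chatbot", "VP Customer Success"),
]

gaps = find_gaps(register)
for name, issues in gaps.items():
    print(f"{name}: {', '.join(issues)}")
```

The point of the structure is that "policy" and "evidence" are separate fields: a control that is mapped but has no evidence pointer still shows up as a gap, which is roughly what an auditor will ask about.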

Your next quarterly agenda

- Update vendor due diligence. Add one question to every security review: “Do you use AI-assisted code review or vulnerability scanning, and what governance controls sit around it?” You don’t need a perfect answer. You need to know who’s thinking about it.

- Validate actual patch performance — not the policy, the numbers. Mean time to patch critical CVEs is now a material disclosure conversation.

- Call your cyber insurance broker and ask whether AI-audited code is showing up in renewal questionnaires yet. If not this year, ask when. Get ahead of the question.

- Map this to your AI governance framework. ISO 42001, NIST AI RMF, and the EU AI Act give you the scaffolding. Skip the mapping exercise and you’ll be re-engineering it under audit pressure.

- Brief your board. Not on the technology. On the shift in what “reasonable security” now means.
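For the patch-performance item above, a rough sketch of "the numbers, not the policy" might look like the following. The records, identifiers, and field layout are made up for illustration; the 30-day threshold is the figure mentioned earlier in this post, and how you define "fix available" versus "deployed" is an assumption you would need to settle for your own environment.

```python
from datetime import date
from statistics import mean

# Hypothetical records: (identifier, date the fix became available, date it was deployed).
patches = [
    ("VULN-A", date(2025, 1, 6), date(2025, 1, 16)),
    ("VULN-B", date(2025, 2, 3), date(2025, 3, 20)),
    ("VULN-C", date(2025, 3, 10), date(2025, 3, 15)),
]

THRESHOLD_DAYS = 30  # the 30-day mark discussed above


def days_to_patch(records):
    """Map each identifier to the number of days between fix availability and deployment."""
    return {vid: (deployed - available).days for vid, available, deployed in records}


durations = days_to_patch(patches)
mttp = mean(durations.values())
over_threshold = sorted(vid for vid, days in durations.items() if days > THRESHOLD_DAYS)

print(f"Mean time to patch: {mttp} days")
print(f"Over {THRESHOLD_DAYS} days: {over_threshold}")
```

Note that in this made-up data the mean comes in under 30 days while one critical fix still took 45. Reporting the outliers alongside the average is the design choice that matters here, because the exposure sits in the slowest patch, not the typical one.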

Where this goes next

Glasswing is a coalition of fifty today. Capabilities like Mythos always proliferate — Anthropic itself estimates comparable models will be broadly available within 6 to 18 months. When that happens, defenders lose their head start.

Here’s where I think the next two years go:

AI governance becomes the language of trust. Procurement, insurance, and audit conversations will increasingly turn on whether you can produce evidence — not policy, evidence — that your AI use is governed. ISO 42001 certifications and EU AI Act conformity assessments will start showing up in RFP scoring criteria the way SOC 2 did a decade ago.

The “vCAIO” role becomes real. Most mid-market companies will not hire a full-time Chief AI Officer in 2026 or 2027. They will retain fractional governance leadership the same way they did with vCISOs after 2015. The work is similar: control mapping, risk register, board reporting, audit readiness.

Tools without process will look like theater. The organizations that come out ahead won’t be the ones that panic-buy an AI security platform. They’ll be the ones that built the governance muscle — control mappings, role definitions, audit evidence, escalation paths — before the capability was everywhere.

Financial data rooms are the hard mode of compliance. If governance works there, it works anywhere. That’s the bar I’ve been holding myself to, and it’s the bar I’d encourage you to hold your vendors to.

One question for you: Do you actually know whether the vendors in your stack use AI in their code review process? If you don’t — that’s the conversation to start this week.

If you’re working through what Glasswing-class capabilities mean for your AI governance program, I’m happy to think it through with you. DM me below.

Disc | Principal Consultant, DISC InfoSec

ISO 42001 & ISO 27001 Lead Implementer | CISSP, CISM | PECB Authorized Training Partner

Lead implementer for ShareVault’s ISO/IEC 42001 certification (passed Stage 2 audit)
