The AI Oversight Gap: When Adoption Outpaces Governance

AI has quietly graduated from pilot project to production infrastructure. It’s writing code, drafting contracts, screening candidates, and processing customer data across functions most organizations couldn’t fully map if asked. The technology has scaled. The governance hasn’t.

New research spanning more than 800 GRC, audit, and IT decision-makers across four countries makes this gap measurable, and the numbers are uncomfortable.

The Visibility Problem

Only 25% of organizations have comprehensive visibility into how their employees are actually using AI. The other 75% are making governance decisions against an incomplete picture: drafting acceptable use policies, sizing risk, briefing boards, and signing vendor contracts without knowing which models touch which data, who’s prompting what, or where the outputs are flowing.

You cannot govern what you cannot see. And in the past twelve months, that blind spot has produced exactly the consequences you’d expect: AI-related data breaches, policy violations, regulatory enforcement actions, and legal claims. These aren’t theoretical risks anymore. They’re line items on incident reports.

The Confidence-Reality Gap

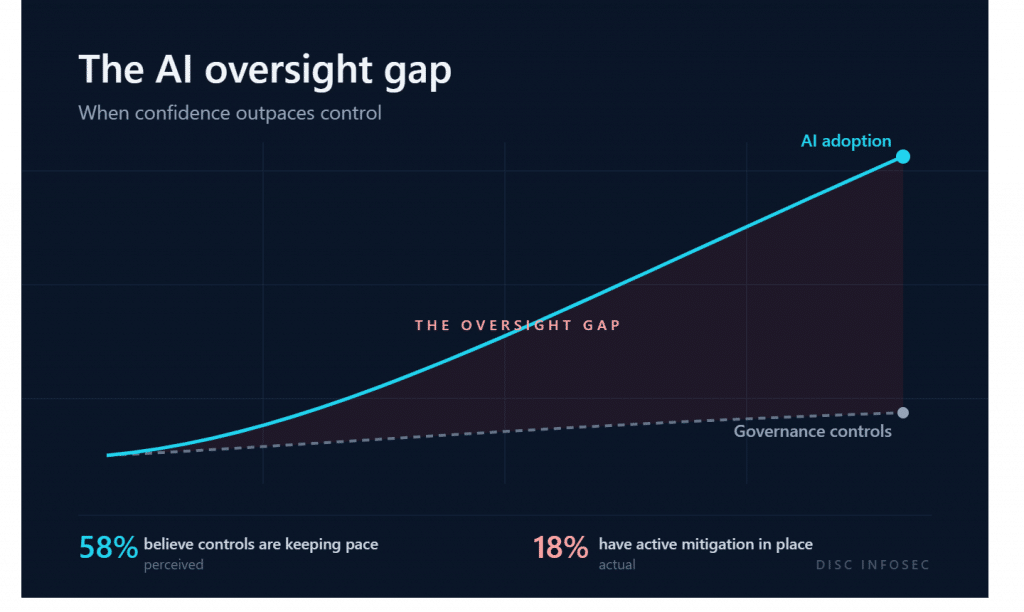

Here’s the finding that should stop every executive committee in its tracks: 58% of leaders believe their governance controls are keeping pace with AI adoption. Only 18% have active mitigation in place.

That’s a 40-point delusion gap. More than half of senior leaders are confident in controls that don’t actually exist, or that exist only on paper with no enforcement behind them. This is the precise pattern that produces front-page incidents, the kind where post-mortems reveal a governance framework that looked complete in the policy binder and was never operationalized.

Confidence without mitigation isn’t governance. It’s vibes.

Why This Is Happening

The honest diagnosis is that AI adoption moves at the speed of a software download, while governance moves at the speed of committee approval. A finance analyst can integrate a new AI tool into their workflow on Monday. The corresponding risk assessment, vendor review, data classification mapping, and policy update can take six months. By then, the analyst’s team has adopted three more tools.

This is the capability-governance gap I see in nearly every organization I work with: layers of capability are being added without the corresponding layers of governance underneath. The visibility deficit isn’t a tooling problem; it’s a structural one. Most organizations built their second and third lines of defense for systems that were procured, deployed, and changed on quarterly cycles. AI doesn’t move on quarterly cycles.

My Perspective: Where We Actually Are

The current state of AI governance is best described as architecturally immature. We have frameworks (ISO 42001, NIST AI RMF, the EU AI Act), we have policies, and we have committees. What we mostly don’t have is the connective tissue: discovery tooling that finds shadow AI, control monitoring that proves policies are working, and clear ownership that survives the gap between IT, legal, risk, and the business.

Frameworks describe the destination. They don’t pave the road.

The Path Forward

The fastest way to close the oversight gap, in my experience implementing ISO 42001 and AI controls in production environments, is to work in this order:

First, get visibility before you write more policy. An AI inventory, however imperfect, beats another control framework you can’t enforce. Discovery tools, network telemetry, and a confidential amnesty window for employees to disclose what they’re actually using will tell you more in two weeks than a year of policy drafting.
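To make the telemetry idea concrete, here is a minimal sketch of a first-pass AI inventory built from proxy logs. Everything specific in it is an assumption for illustration: the log format (`<timestamp> <user> <domain>`), the function name `build_ai_inventory`, and the short `AI_DOMAINS` watch list, which is nowhere near complete. Treat it as a starting point to adapt, not a discovery tool.

```python
from collections import defaultdict

# Assumption: an illustrative, incomplete watch list. Maintain your own.
AI_DOMAINS = {
    "api.openai.com": "OpenAI API",
    "chat.openai.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def build_ai_inventory(log_lines):
    """Map each detected AI service to the sorted list of users who reached it.

    Assumes each log line is '<timestamp> <user> <domain>' separated by
    whitespace (a hypothetical format; adapt the parsing to your proxy).
    """
    inventory = defaultdict(set)
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines rather than guess at their meaning
        _, user, domain = parts
        service = AI_DOMAINS.get(domain.lower())
        if service:
            inventory[service].add(user)
    return {service: sorted(users) for service, users in inventory.items()}


# Hypothetical sample data, not real telemetry.
sample = [
    "2025-01-06T09:14:00Z alice api.openai.com",
    "2025-01-06T09:15:10Z bob claude.ai",
    "2025-01-06T09:16:42Z alice claude.ai",
    "2025-01-06T09:17:03Z carol intranet.example.com",
]

inventory = build_ai_inventory(sample)
for service, users in sorted(inventory.items()):
    print(f"{service}: {', '.join(users)}")
```

Even a crude list like this gives the amnesty window something to reconcile against: services that show up in telemetry but not in disclosures are where to look next.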

Second, operationalize a single control before you scale ten. Pick one high-risk use case, define ownership, instrument monitoring, and prove the control works end-to-end. Then replicate the pattern. Governance theater collapses under audit; working controls don’t.

Third, replace confidence with evidence. The 58% who believe their controls are working should be required to produce the artifact that proves it. If the artifact doesn’t exist, the control doesn’t either.
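The "no artifact, no control" rule can be expressed as a simple check. The sketch below is one hypothetical way to encode it: the `Control` fields, the `is_operational` name, and the 90-day freshness window are all assumptions chosen for illustration, not requirements drawn from ISO 42001 or any other framework.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Control:
    name: str
    owner: Optional[str]            # named owner, or None if unassigned
    last_evidence: Optional[date]   # date of the most recent proof artifact


def is_operational(control: Control, today: date, max_age_days: int = 90) -> bool:
    """Treat a control as real only if it has an owner and fresh evidence.

    The 90-day default is an assumed review cadence; pick your own.
    """
    if control.owner is None or control.last_evidence is None:
        return False
    return today - control.last_evidence <= timedelta(days=max_age_days)


# Hypothetical register entries for illustration.
today = date(2025, 6, 1)
register = [
    Control("Prompt logging for customer data", "risk-team", date(2025, 5, 10)),
    Control("Vendor model review", "legal", date(2024, 11, 1)),
    Control("Acceptable use policy enforcement", None, None),
]

paper_only = [c.name for c in register if not is_operational(c, today)]
print("Controls without current evidence:", paper_only)
```

The design choice worth noting is that a missing owner fails the check just as a missing artifact does, which reflects the ownership point made earlier in this post.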

The organizations that close this gap in 2026 won’t be the ones with the most sophisticated frameworks. They’ll be the ones who treated AI governance as an engineering problem, not a documentation exercise.


DISC InfoSec is an active ISO 42001 implementer and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations.
