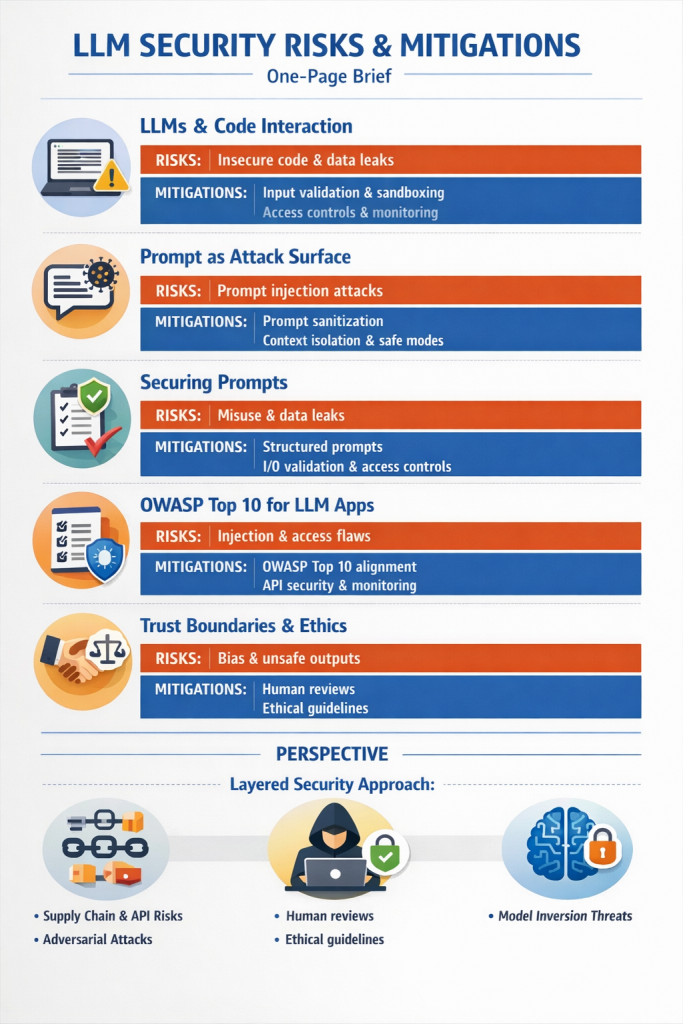

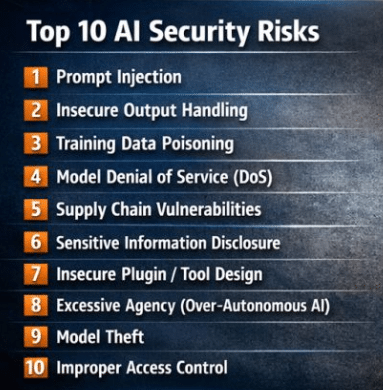

AI security spans a broader attack surface than traditional infosec because the model itself is now part of what you’re defending. The pillars most practitioners converge on:

Data security and integrity. Training, fine-tuning, and RAG data are all attack surfaces. Poisoning, label flipping, and backdoor insertion happen upstream; data lineage, provenance tracking, and integrity controls are the defense. This is also where most privacy obligations land (PII minimization, retention, consent).
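
As one illustration of an integrity control, the sketch below records a SHA-256 digest for every file in a dataset directory and later reports anything missing, added, or modified. It is a minimal example, not a full lineage system; the function names (`build_manifest`, `verify_manifest`) and the directory layout are assumptions made for illustration.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream the file through SHA-256 so large files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's relative path to its digest at a known-good point in time."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def verify_manifest(data_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Return findings; an empty list means the data matches the manifest."""
    current = build_manifest(data_dir)
    findings = []
    for name in sorted(manifest.keys() | current.keys()):
        if name not in current:
            findings.append(f"missing: {name}")
        elif name not in manifest:
            findings.append(f"unexpected: {name}")
        elif current[name] != manifest[name]:
            findings.append(f"modified: {name}")
    return findings
```

In practice the manifest would be stored (and ideally signed) somewhere the training pipeline cannot write to, so that a compromised pipeline cannot also rewrite the reference digests.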

Model security. Protecting the model itself from adversarial inputs (evasion), model extraction/stealing, membership inference, and inversion. Includes hardening against prompt injection and jailbreaks for LLMs, which behave differently from classical adversarial ML threats.

Access and identity. Who can query, fine-tune, deploy, or modify a model — and under what authorization. RBAC/ABAC on inference endpoints, secrets management for API keys, separation of duties between data science, MLOps, and production. Often the weakest link in real-world incidents.
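
A minimal sketch of role-based authorization on model actions, including a separation-of-duties check, might look like the following. The role names, the permission strings, and the rule that nobody should be able to both fine-tune and deploy are hypothetical; a real deployment would source them from the organization's IAM system.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission map, hard-coded here only for illustration.
ROLE_PERMISSIONS: dict[str, frozenset[str]] = {
    "data_scientist": frozenset({"model:query", "model:fine_tune"}),
    "mlops_engineer": frozenset({"model:query", "model:deploy"}),
    "app_service": frozenset({"model:query"}),
}


@dataclass(frozen=True)
class Principal:
    name: str
    roles: frozenset[str]


def is_allowed(principal: Principal, action: str) -> bool:
    """Allow the action if any of the principal's roles grants it."""
    return any(
        action in ROLE_PERMISSIONS.get(role, frozenset())
        for role in principal.roles
    )


def violates_separation_of_duties(principal: Principal) -> bool:
    """Flag principals who can both change a model and push it to production."""
    return is_allowed(principal, "model:fine_tune") and is_allowed(
        principal, "model:deploy"
    )
```

Running `violates_separation_of_duties` across all principals during an access review is one cheap way to surface role accumulation before it shows up in an incident.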

Supply chain. Pre-trained foundation models, open-source libraries, HuggingFace artifacts, datasets, and embedding providers all enter your trust boundary. SBOM-equivalents for ML (model cards, dataset cards, signed artifacts) and vendor due diligence are increasingly non-negotiable.

Infrastructure and MLOps security. The pipelines, notebooks, registries, feature stores, and orchestration layers — most of which were built for velocity, not security. Standard cloud/container hardening applies, plus pipeline-specific concerns like notebook sprawl and unsecured model registries.

Output and content safety. Guardrails against harmful, biased, hallucinated, or leaked outputs. For agentic systems this expands to tool-use safety, sandboxing, and constraining what actions a model can take downstream of a malicious prompt.
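
For agentic systems, constraining downstream actions often starts with a deny-by-default gate between the model's proposed tool call and its execution. The sketch below assumes a hypothetical policy: two read-only tools with fixed argument names, and one side-effecting tool that always needs human approval. The tool names and the three-way verdict are illustrative, not taken from any particular agent framework.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    tool: str
    args: dict


# Hypothetical policy: tool name -> argument names the tool may receive.
ALLOWED_TOOLS: dict[str, set[str]] = {
    "search_docs": {"query"},
    "read_ticket": {"ticket_id"},
}
# Hypothetical side-effecting tools that always require a human decision.
REQUIRES_APPROVAL: set[str] = {"send_email"}


def authorize(call: ToolCall) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for a proposed tool call."""
    if call.tool in REQUIRES_APPROVAL:
        return "needs_approval"
    allowed_args = ALLOWED_TOOLS.get(call.tool)
    if allowed_args is None:
        return "deny"  # deny by default: unknown tools never run
    if set(call.args) - allowed_args:
        return "deny"  # unexpected arguments are treated as suspicious
    return "allow"
```

The point of the design is that the model's output is only ever a request; the decision to act sits in code the prompt cannot rewrite.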

Monitoring, detection, and observability. Drift, anomaly detection on inputs/outputs, abuse pattern detection, and audit logging sufficient to reconstruct an incident. Most orgs underinvest here relative to classical SIEM coverage.
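
One common way to quantify input or output drift is the Population Stability Index (PSI), which compares the binned distribution of a recent sample against a baseline. The sketch below is a plain-Python version under simple assumptions: equal-width bins taken from the baseline range, out-of-range values clamped into the edge bins, and a small floor (`eps`) to avoid taking the log of zero. The bin count and any alerting threshold are choices to tune per system, not fixed rules.

```python
import math


def psi(expected: list[float], actual: list[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `actual` relative to baseline `expected`."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp into edge bins
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A frequently cited rule of thumb treats PSI below roughly 0.1 as stable and above roughly 0.25 as a significant shift, but those cutoffs are conventions and should be validated against each model's own history.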

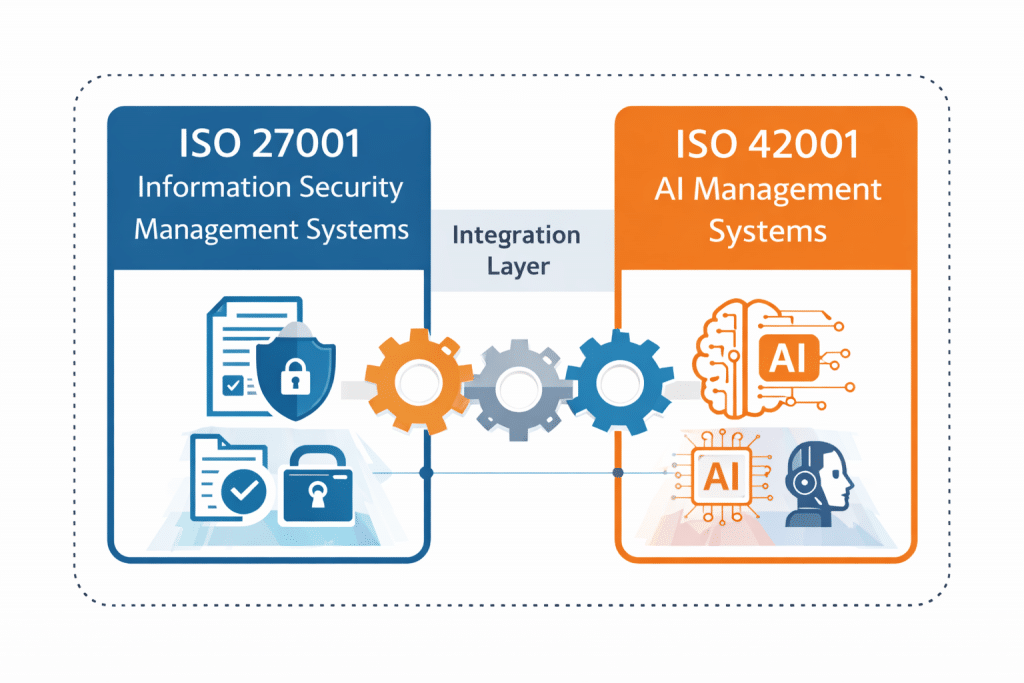

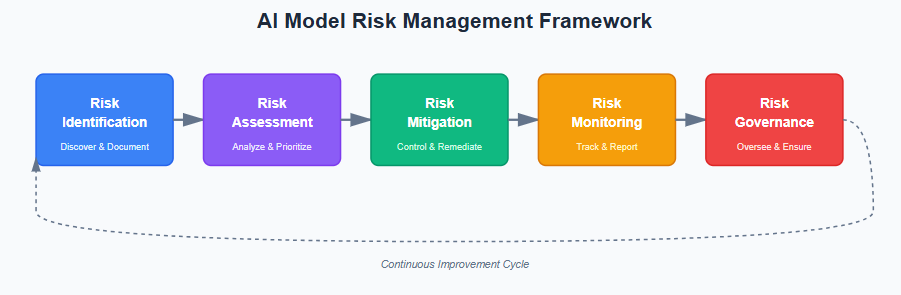

Governance and assurance. The wrapper that makes the rest defensible to auditors, regulators, and customers — ISO 42001, NIST AI RMF, EU AI Act obligations, internal AI use policies, risk registers, and impact assessments. Without this, the technical controls have no organizational accountability behind them.

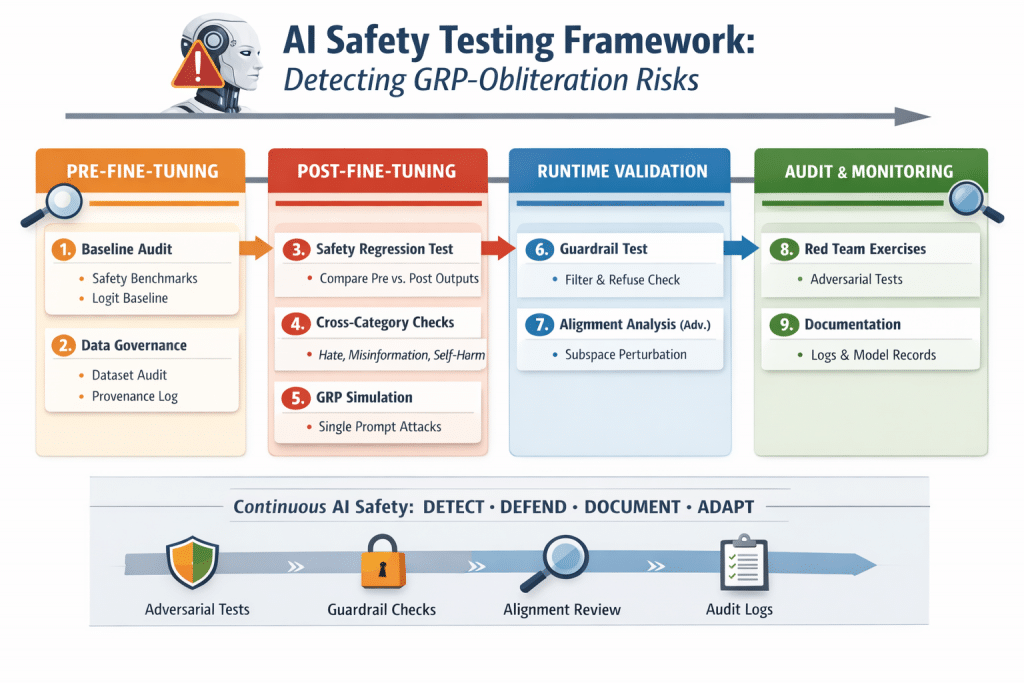

Resilience and incident response. Red-teaming (both classical and AI-specific), tabletop exercises that include model failure modes, rollback capability for compromised models, and IR playbooks that recognize a poisoned model or a prompt-injected agent as a real incident class.

The practitioner shorthand I’d use: classical CIA still applies, but you’ve added a model that can be attacked, a pipeline that can be poisoned, and an output channel that can be weaponized — so you need controls at each of those layers plus the governance to prove the controls exist.


Here’s the same set of pillars reframed as an accountability matrix, then a candid take on what actually works in implementation.

| Pillar | Primary Owner | Oversight Authority | Audit Cadence | Monitoring Cadence |
|---|---|---|---|---|
| Data security & integrity | Data Owner / CDO (with Security as partner) | CISO + DPO; AI Governance Committee for high-risk datasets | Annual formal audit; ad-hoc on schema or source changes; per-release for training data | Continuous integrity checks (hashes, lineage); weekly drift/quality reports |
| Model security | ML/AI Engineering Lead | CISO + AI Governance Committee | Pre-deployment + annual; red-team exercise semi-annually | Continuous adversarial input detection; per-inference logging on high-risk models |
| Access & identity | IAM / IT Security | CISO | Quarterly access reviews; annual privileged-access audit | Continuous (SIEM); real-time alerting on privileged actions |
| Supply chain (models, data, libraries) | Procurement + ML Platform Team | CISO + Legal/Privacy | Annual vendor reassessment; per-onboarding due diligence; per-model-card review | Continuous CVE/vulnerability scanning; weekly dependency checks |
| Infrastructure & MLOps | Platform / DevSecOps | CISO | Annual; per-major-architecture-change | Continuous config monitoring (CSPM/KSPM); daily pipeline integrity checks |
| Output & content safety | AI Product Team + Trust & Safety | AI Ethics / Governance Board | Quarterly red-team + output sampling; annual bias/fairness audit | Continuous guardrail telemetry; weekly sampled human review |
| Monitoring, detection & observability | SecOps / SOC | CISO | Annual control-effectiveness review | Continuous (this pillar is the monitoring); monthly tuning |
| Governance & assurance | CAIO / vCAIO / Compliance Lead | Board / Audit Committee | Annual internal audit + external surveillance (ISO 42001, SOC 2, etc.) | Monthly KPI/KRI dashboard; quarterly risk register review |
| Resilience & incident response | SecOps + AI Engineering | CISO + Executive Crisis Team | Annual IR plan review; semi-annual tabletop incl. AI-specific scenarios | Continuous detection; quarterly drills; post-incident reviews on every Sev-2+ |

A few notes on how to read this matrix in practice. Primary Owner is who builds and runs the control; Oversight Authority is who signs off that it’s working and gets fired if it isn’t — those should never be the same person. Audit cadence is the minimum floor; trigger-based audits (model retraining, vendor change, regulatory update, security incident) almost always matter more than the calendar. Monitoring cadence is calibrated to risk tier — a high-risk EU AI Act system gets continuous output sampling; an internal productivity tool gets weekly.

My perspective on implementation and monitoring

Most orgs get the matrix roughly right on paper and then fail in three predictable ways.

First, ownership ambiguity at the seams. Data security is “owned” by the data team, model security by ML engineering, supply chain by procurement — and the seams between them are where incidents happen. A poisoned third-party dataset is a supply chain failure that becomes a data integrity failure that becomes a model security failure. If you can’t name a single accountable person for cross-pillar incidents (in most orgs, that’s the CAIO or vCAIO function), the matrix is decorative. The fix is a RACI that explicitly forces a single accountable owner per AI system end-to-end, not per pillar.
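
The "single accountable owner per AI system" rule is easy to check mechanically once the RACI lives in a structured form. The sketch below assumes a hypothetical layout in which each AI system maps people to a RACI letter, and it reports every system that does not have exactly one "A".

```python
def accountability_gaps(raci: dict[str, dict[str, str]]) -> dict[str, str]:
    """Return {system: problem} for systems lacking exactly one accountable owner.

    `raci` maps an AI system name to {person: letter}, where the letter is one of
    "R", "A", "C", "I". This layout is an assumption made for illustration.
    """
    gaps = {}
    for system, assignments in raci.items():
        accountable = sorted(
            person for person, letter in assignments.items() if letter == "A"
        )
        if not accountable:
            gaps[system] = "no accountable owner"
        elif len(accountable) > 1:
            gaps[system] = "multiple accountable owners: " + ", ".join(accountable)
    return gaps
```

Running a check like this whenever the RACI changes keeps the seams visible: a system that is "R" for three teams and "A" for nobody is exactly where a cross-pillar incident stalls.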

Second, monitoring theater. Continuous monitoring gets written into every policy and then implemented as a dashboard nobody opens. The pillars where this fails hardest are output safety and model security — both require sampling and human review, not just telemetry. A useful test: if your AI monitoring would not catch a slow drift that degrades outputs over six months, you don’t have monitoring, you have logging. Build at least one human-in-the-loop checkpoint per high-risk system, and treat the sampling rate as a control to be audited.
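
Treating the sampling rate as an auditable control is simpler when selection is deterministic, so an auditor can re-derive which interactions should have been reviewed. The sketch below hashes a request identifier into a number in [0, 1) and selects the request when that number falls below the configured rate. The `request_id` field and the helper names are assumptions for illustration.

```python
import hashlib


def selected_for_review(request_id: str, rate: float) -> bool:
    """Deterministically decide whether a logged request goes to human review."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < rate


def observed_rate(request_ids: list[str], rate: float) -> float:
    """Fraction of requests selected; compare against the policy's stated rate."""
    if not request_ids:
        return 0.0
    hits = sum(selected_for_review(rid, rate) for rid in request_ids)
    return hits / len(request_ids)
```

Because the same identifier always yields the same decision, a gap between the review queue and the recomputed selection is itself evidence that the control was bypassed or silently turned down.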

Third, audit cadence misaligned with model lifecycle. Annual audits are an artifact of financial reporting cycles, not AI risk. Models change faster than audit cycles — a quarterly cadence for high-risk systems is the realistic floor, with trigger-based reassessment on retraining, material data source change, or material behavior change. ISO 42001 surveillance gives you the annual external check; your internal cadence has to be tighter than that to actually catch things between surveillance visits.
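
The combination of a calendar floor and trigger-based reassessment can be expressed as a small rule. In the sketch below, the 90-day interval for high-risk systems reflects the quarterly floor described above; the medium and low intervals, the tier names, and the event labels are assumptions added for illustration.

```python
from datetime import date, timedelta

# Maximum days between internal audits per risk tier.
# "high" follows the quarterly floor; "medium" and "low" are illustrative guesses.
CADENCE_DAYS = {"high": 90, "medium": 180, "low": 365}

# Events that force a reassessment regardless of the calendar.
TRIGGERS = {"retraining", "data_source_change", "behavior_change"}


def audit_due(risk_tier: str, last_audit: date, today: date,
              events_since_audit: list[str]) -> bool:
    """True if a trigger fired or the tier's calendar interval has elapsed."""
    if TRIGGERS & set(events_since_audit):
        return True
    return today - last_audit >= timedelta(days=CADENCE_DAYS[risk_tier])
```

For example, a high-risk system audited on 2025-01-01 is not due on 2025-02-01 by calendar alone, but becomes due immediately if a retraining event has been logged since the last audit.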

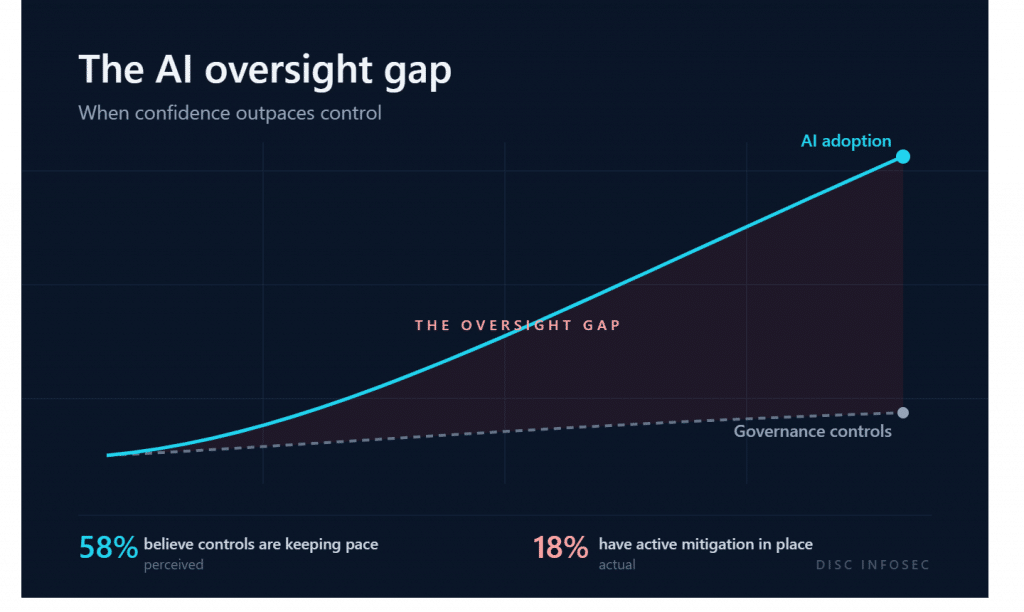

The pillar that’s chronically under-resourced is governance and assurance, and it’s the one that determines whether everything else is defensible. Without a documented risk register, control mapping (NIST AI RMF + ISO 42001 + sector-specific), and board-level reporting, the technical controls exist but can’t be proven to exist — which fails every audit, every customer security questionnaire, and every regulator inquiry. That’s why the practitioner pattern that actually works is: build the governance layer first (even thin), then layer technical controls into it. The reverse — strong technical controls with no governance wrapper — is what we see in most “we have AI security” pitches, and it collapses the first time someone asks for evidence.

The honest summary: technical controls are the easy part; the hard part is sustained ownership, sampling discipline, and auditable evidence. The orgs that pass real ISO 42001 Stage 2 audits aren’t the ones with the fanciest guardrails — they’re the ones that can produce the access review from last Tuesday and the red-team report from last quarter without scrambling.


DISC InfoSec is an active ISO 42001 implementer and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations.
