Earning Cybersecurity Confidence in the Age of Agentic AI — A Practitioner’s Read

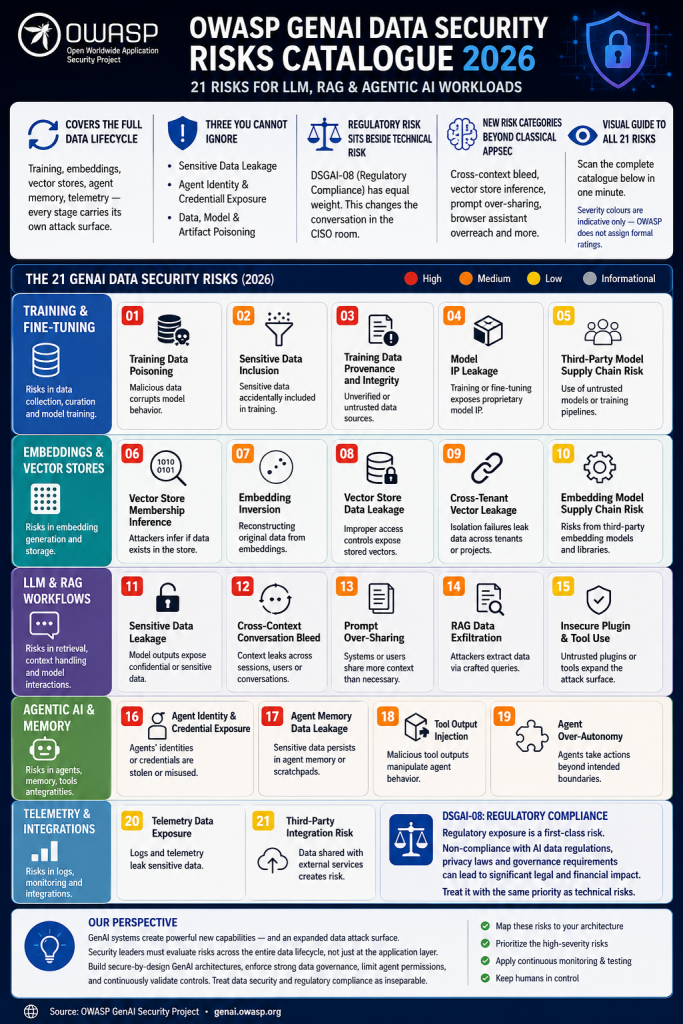

Hrvoje Englman, CISO at Span, used his keynote at the Span Cyber Security Arena to describe a defender’s job that has been rewritten in roughly twenty-four months. Engineering teams are now writing their own software with AI coding assistants, spinning up agents that act on their behalf, and assigning those agents the same access privileges their human creators hold. The boundary between “the user” and “the workload” has effectively collapsed. Identities are over-provisioned by default, and least privilege — long the textbook answer — remains, in his words, an aspiration that is difficult to operationalize once agents start spawning agents inside production.
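One way to make the over-provisioning point concrete is to compare what each agent identity has been granted with what it has actually been observed using. The sketch below is a minimal illustration, not a description of Span's tooling: the agent names, the scope strings, and the `unused_scopes` helper are all hypothetical, and it assumes you can already export granted scopes and observed usage from your identity provider and audit logs.

```python
def unused_scopes(granted, observed):
    """Map each agent to the scopes it holds but was never observed using.

    granted:  dict of agent name -> set of scopes assigned to that agent
    observed: dict of agent name -> set of scopes seen in audit logs
    Both inputs are hypothetical exports; real data sources will differ.
    """
    report = {}
    for agent, scopes in granted.items():
        extra = set(scopes) - set(observed.get(agent, ()))
        if extra:
            report[agent] = sorted(extra)
    return report


# Illustrative data only: an agent that inherited its creator's full access.
granted = {
    "deploy-bot": {"repo:read", "repo:write", "prod:deploy", "billing:read"},
    "triage-bot": {"logs:read"},
}
observed = {
    "deploy-bot": {"repo:read", "prod:deploy"},
    "triage-bot": {"logs:read"},
}

print(unused_scopes(granted, observed))
# {'deploy-bot': ['billing:read', 'repo:write']}
```

Scopes that never appear in the observation window are only candidates for revocation; a rarely used permission may still be legitimate, so the output is a review list rather than an automatic change.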

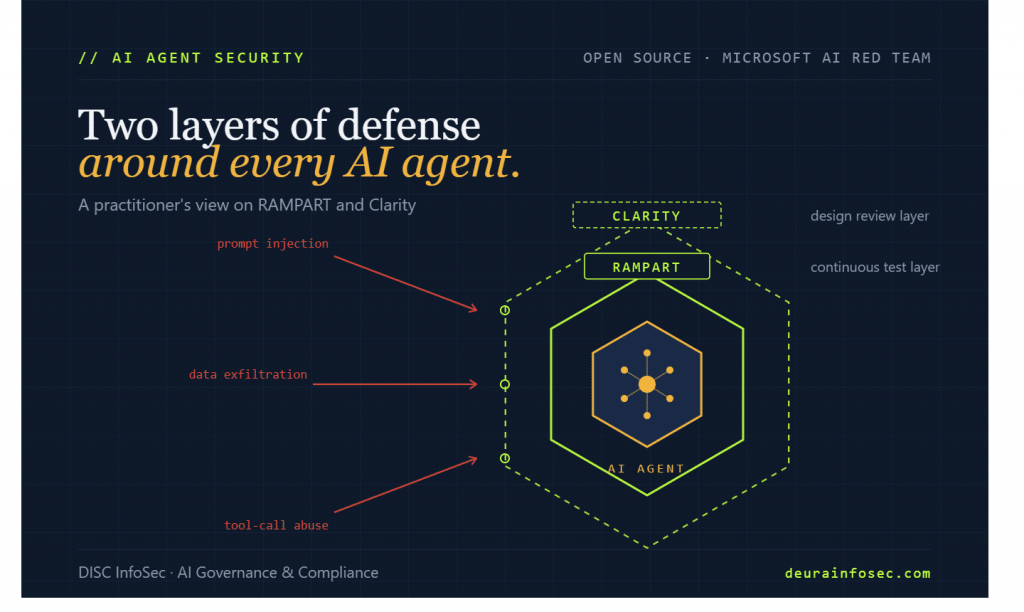

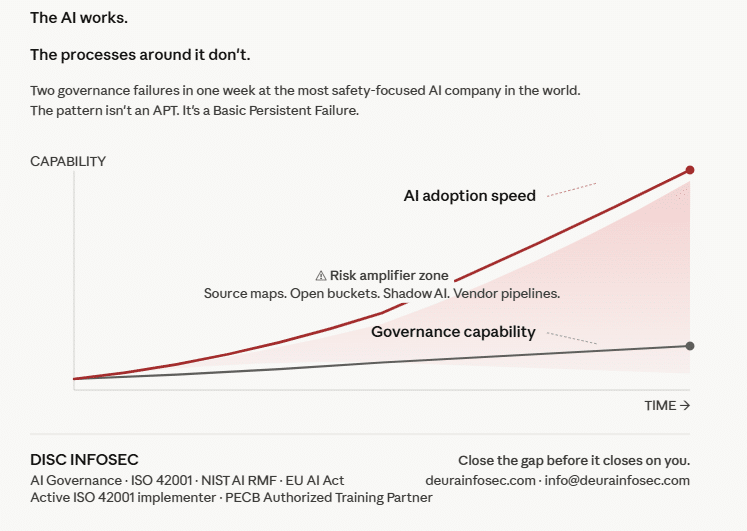

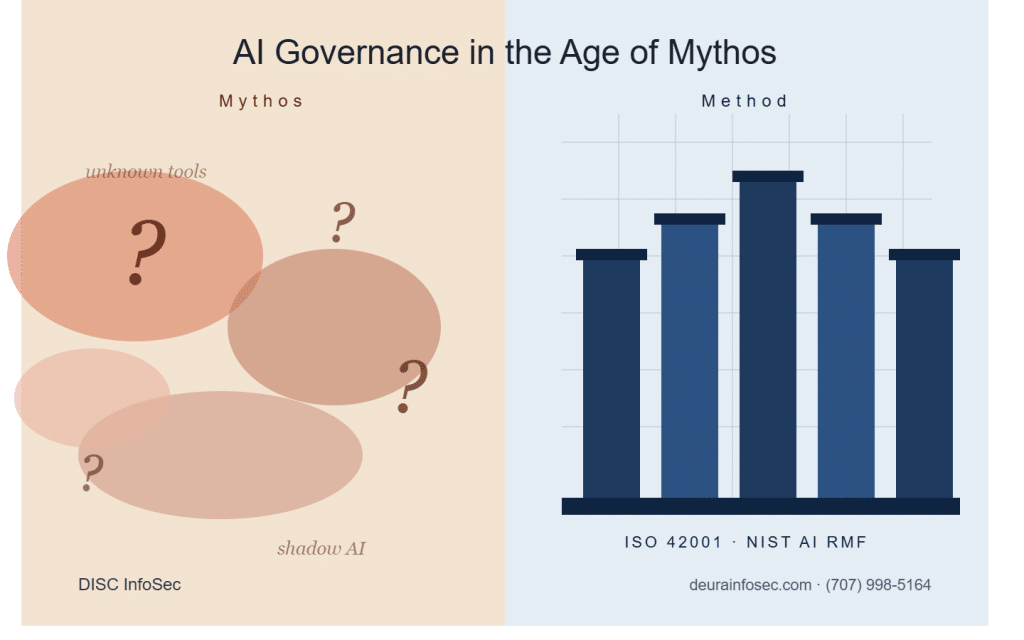

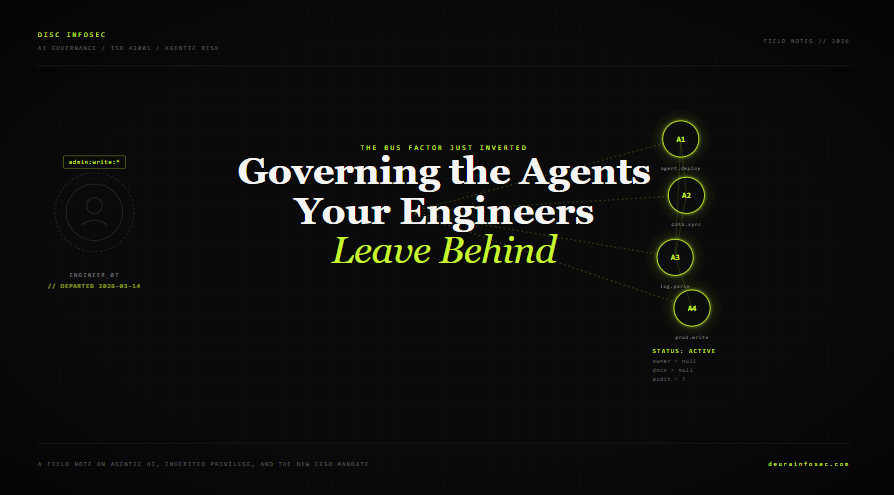

A second-order risk lands on top of that identity sprawl. Englman described what he frames as an inverted bus-factor problem: an engineer automates a workflow with a handful of interacting agents, leaves the company, and the agents keep running with no documentation behind them. The traditional concern was the knowledge gap left by a departing expert. The new concern is the operational system that outlives the expert and continues making business decisions that nobody can fully explain or audit. From a governance standpoint, this is exactly the failure mode ISO/IEC 42001 was written to prevent — and exactly the one most organizations have no inventory for.
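The inventory gap described above can be sketched as a simple check: for every known agent, is there an accountable owner who still works here, and is there any documentation? The example below is a hedged illustration under stated assumptions. The `AgentRecord` fields, the agent names, and the `find_orphaned_agents` helper are hypothetical and are not taken from ISO/IEC 42001 or from Englman's remarks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentRecord:
    name: str
    owner: Optional[str]        # accountable human; None if unknown
    runbook_url: Optional[str]  # link to documentation; None if missing


def find_orphaned_agents(agents, active_employees):
    """Return {agent name: [reasons]} for agents that fail the ownership check.

    An agent is flagged if its owner is unknown or no longer in
    active_employees, or if it has no documentation link.
    """
    findings = {}
    for agent in agents:
        reasons = []
        if agent.owner is None or agent.owner not in active_employees:
            reasons.append("no active owner")
        if not agent.runbook_url:
            reasons.append("no documentation")
        if reasons:
            findings[agent.name] = reasons
    return findings


# Illustrative data only: "bob" has left the company, but his agent still runs.
agents = [
    AgentRecord("invoice-router", "alice", "https://wiki.example/invoice-router"),
    AgentRecord("refund-approver", "bob", None),
]
active_employees = {"alice"}

print(find_orphaned_agents(agents, active_employees))
# {'refund-approver': ['no active owner', 'no documentation']}
```

Running a check like this against the offboarding list is one way to catch agents before they outlive their author, though it only works for agents that were registered in the inventory to begin with.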

Where AI does deliver, Englman is concrete. Log triage that used to consume analyst hours can now be run across hundreds of megabytes of data, with anomalies and pivot points surfaced in minutes. Policy drafting against internal context can collapse a three-day exercise into a single day, and those time savings compound across a workforce. He treats these as defender leverage that is already shipping value, not vendor theater.

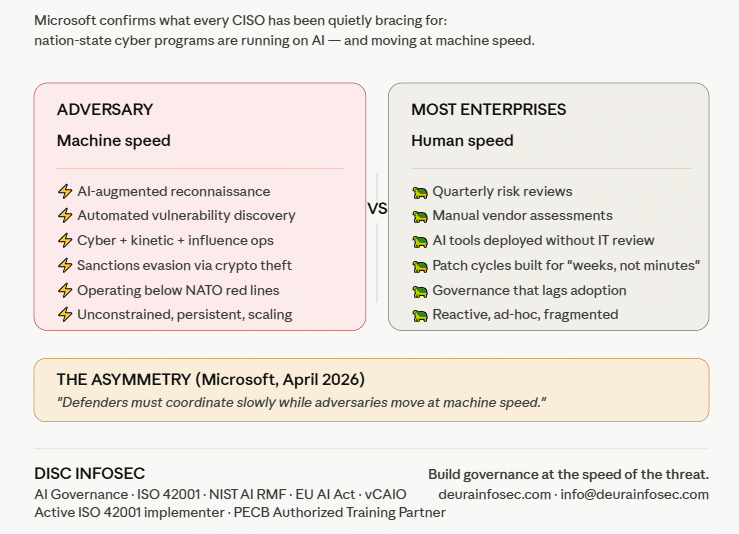

He is far less generous to the marketing around autonomous, AI-driven SOCs. The premise of defensive AI versus offensive AI with no humans in the loop does not survive contact with operational reality. Log ingestion is still the unglamorous bottleneck. Detection engineering still depends on analysts who can articulate why an alert fired and what business process it touches. Englman captured the failure mode plainly: “You get an alert, but your analyst doesn’t understand the alert. And you have two million alerts, and then what?” Autonomous containment also breaks down because the model has no concept of which service is load-bearing for revenue at 2 a.m. — that judgment escalates to humans during real incidents, and it should. He further notes that most large breaches still trace to phishing and credential theft, which means the nation-state framing in vendor decks is solving a smaller slice of the actual loss curve than it implies.

The threat model is sharper still for a security services provider. Span is both a target and a path to its customers, which inverts the calculus a typical end-user organization works with. A normal enterprise can absorb a breach, run the playbook, and recover. For a provider, the incident response itself becomes the product on display — the proof that controls existed, that the blast radius was contained, and that the same operational discipline sold to customers was applied to the provider’s own house. Reputation is the asset, and negligence ends the business. This is the lens every B2B SaaS or managed-services CISO should be borrowing.

On talent, Englman reframes the so-called shortage. Entry-level candidates are plentiful; what is genuinely scarce is the senior practitioner with five-plus years of operational depth, and that bench cannot be conjured through six-week certifications. He worries — correctly, in my view — that the rush to automate junior SOC work is dismantling the apprenticeship pipeline that produces those senior people in the first place. His bar for an analyst is whether they can explain what an alert means and how the triggering conditions came about. Anything short of that is a coin flip dressed up as triage, whether the coin is human or model.

Finally, he discards the piece of conventional wisdom most CISOs still recite reflexively. The line that “humans are the weakest link” is, he argues, lazy and a form of blame culture. The accountability sits with the security function to engineer environments where one bad click does not collapse the business. Brittle defenses that assume perfect human behavior are a design failure dressed up as user awareness.

Source: https://www.helpnetsecurity.com/2026/05/28/hrvoje-englman-span-earning-cybersecurity-confidence/

My perspective — what the CISO is actually selling.

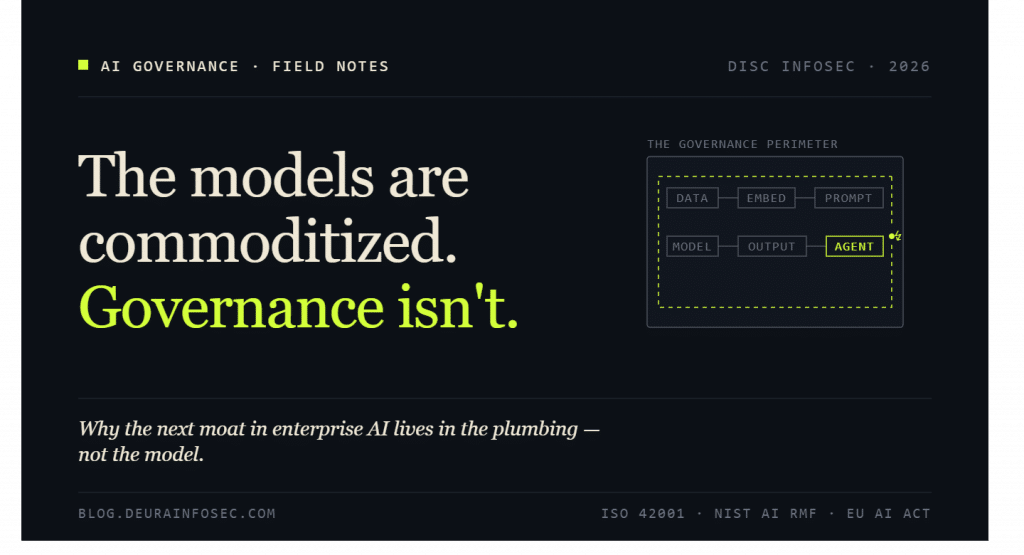

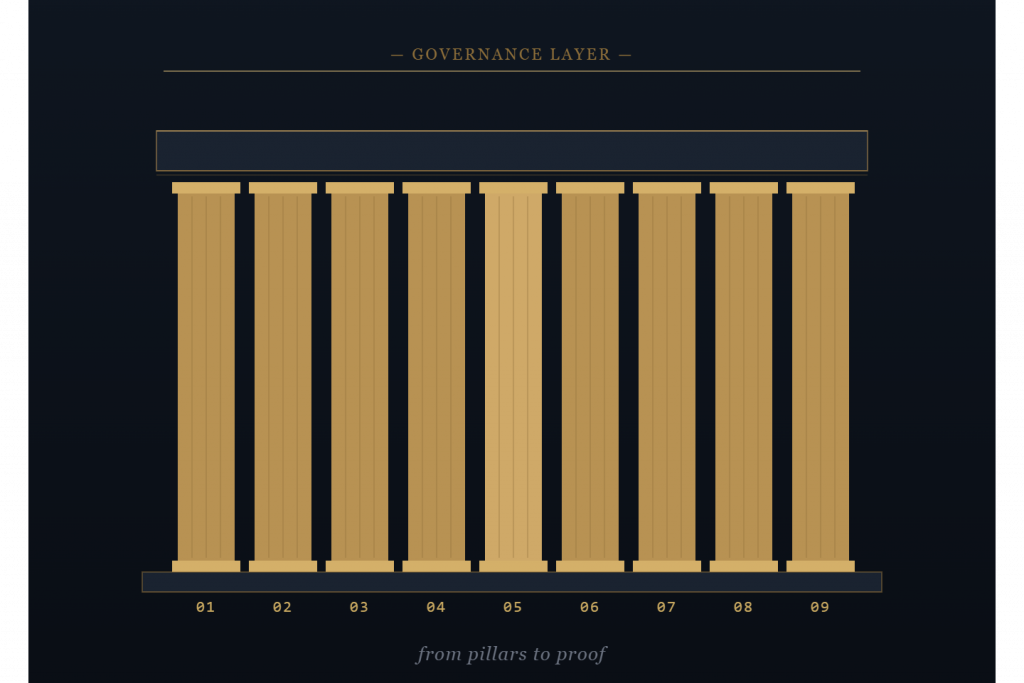

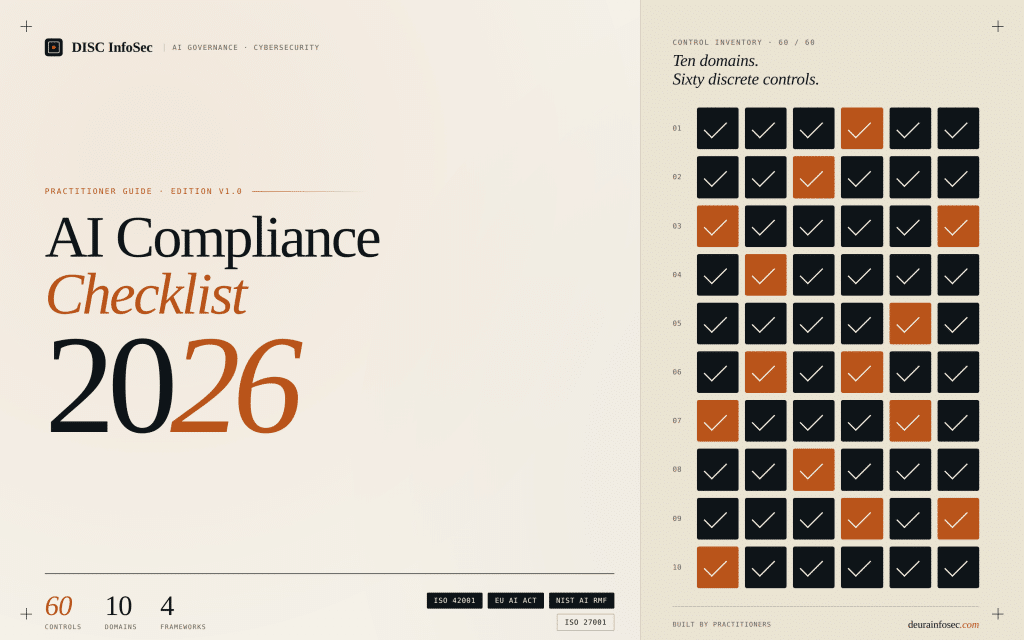

Englman’s interview is, underneath the headlines, a thesis about how to sell confidence in three directions at once: upward to the board, inward to employees, and outward to customers and vendors. None of those audiences is buying a SOC anymore; they are buying the operating discipline behind it.

To the board, confidence comes from being able to show that AI is governed the same way any other production system is governed: a mapped inventory of agents and their identities, a documented owner for each one, evidence that controls were designed in rather than bolted on, and the candor to say which threats your stack actually addresses versus which ones are marketing. ISO 42001, NIST AI RMF, and the EU AI Act each give the CISO a defensible scaffold for that conversation; the failure mode is treating them as paperwork instead of as the board narrative they were designed to be.

To employees, confidence comes from being an enabler rather than a blocker: codifying acceptable AI use, shipping sanctioned tools faster than shadow AI can spread, and treating “the user clicked the link” as a signal to fix architecture, not to publish another phishing scorecard.

To vendors and customers, confidence is demonstrated in how an incident is handled, not promised in how one is prevented. The playbook, the tabletop cadence, the third-party audit evidence, and the time-to-disclose discipline are the product. In a market saturated with breach headlines and autonomous-SOC vaporware, the CISOs who win the trust trade are the ones who can prove governance maturity in plain language, name the limits of their tooling honestly, and let operational evidence, not vendor promises, carry the weight.

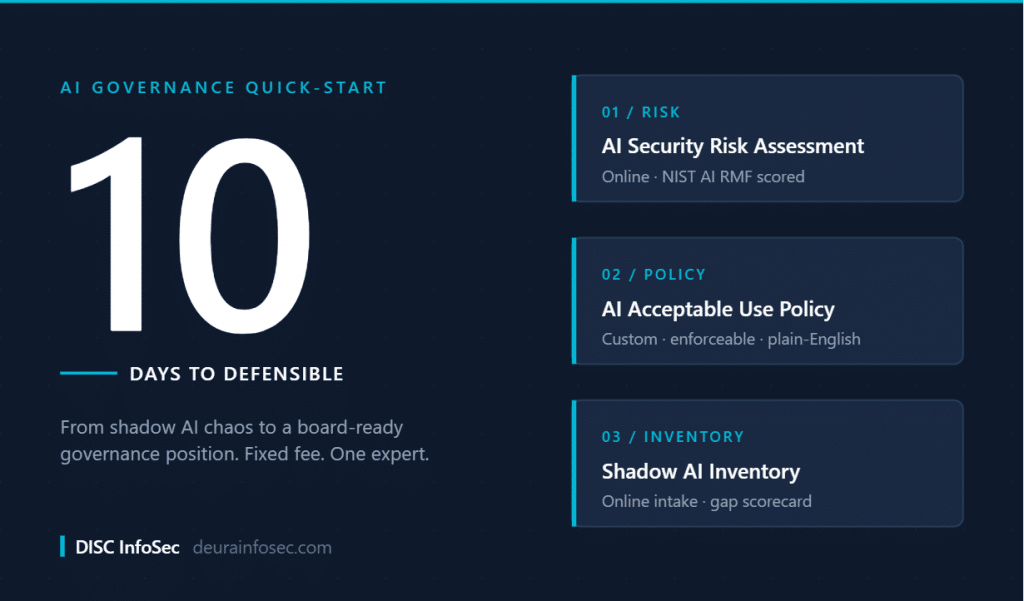

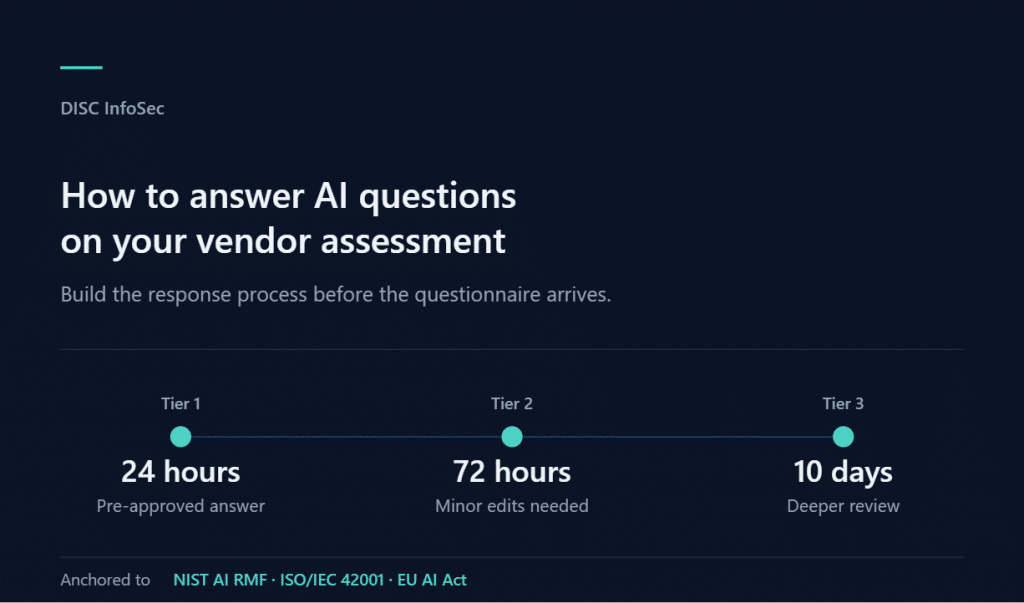


DISC InfoSec is an active ISO 42001 implementer and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations.
