AI Governance That Actually Works: Why Real-Time Enforcement Is the Missing Layer

AI governance is everywhere right now—frameworks, policies, and documentation are rapidly evolving. But there’s a hard truth most organizations are starting to realize:

Governance without enforcement is just intent.

What separates mature AI security programs from the rest is the ability to enforce policies in real time, exactly where AI systems operate—at the API layer.

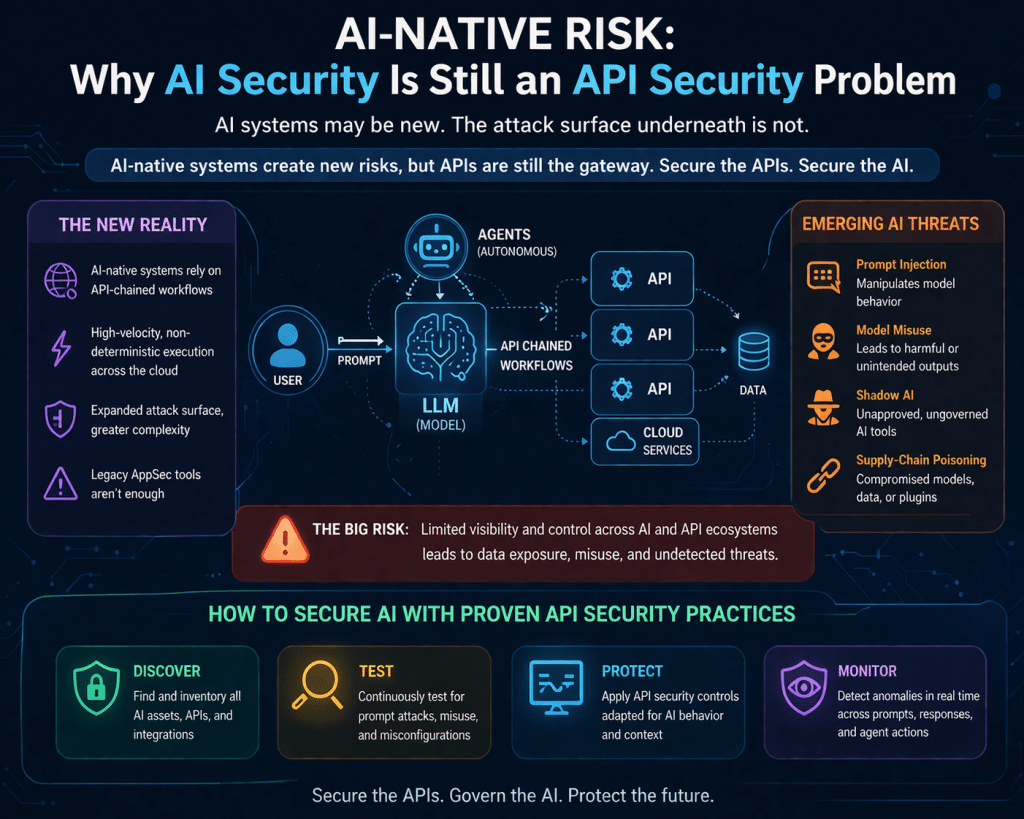

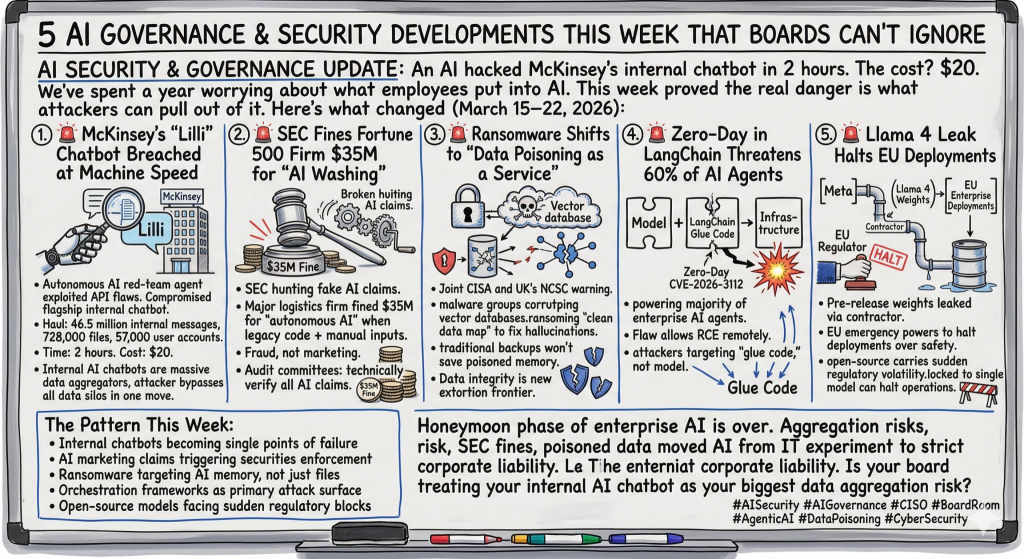

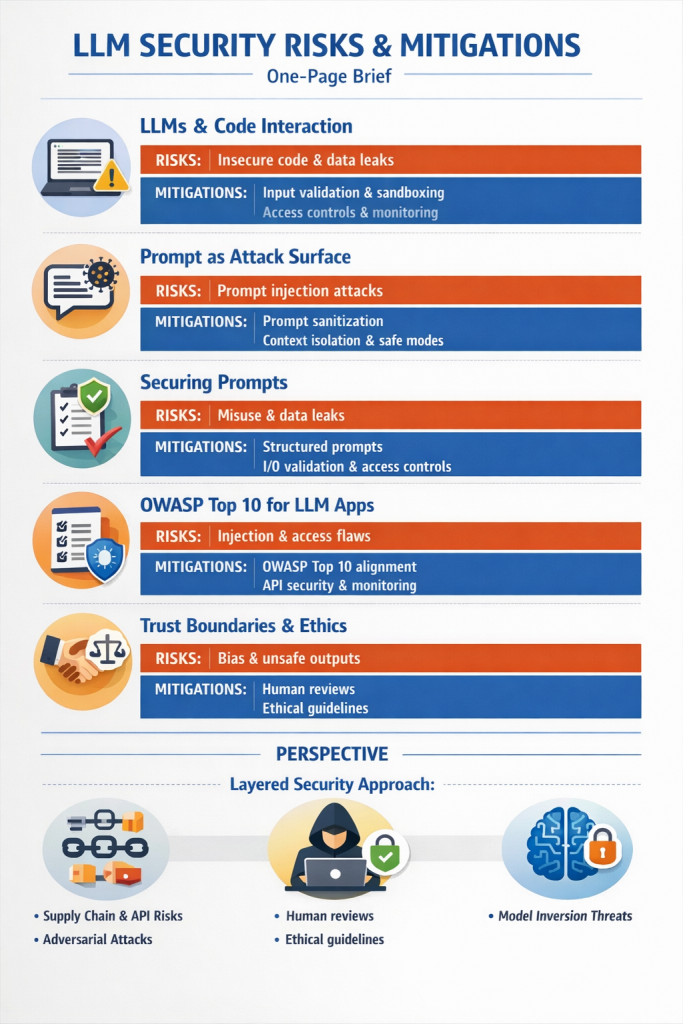

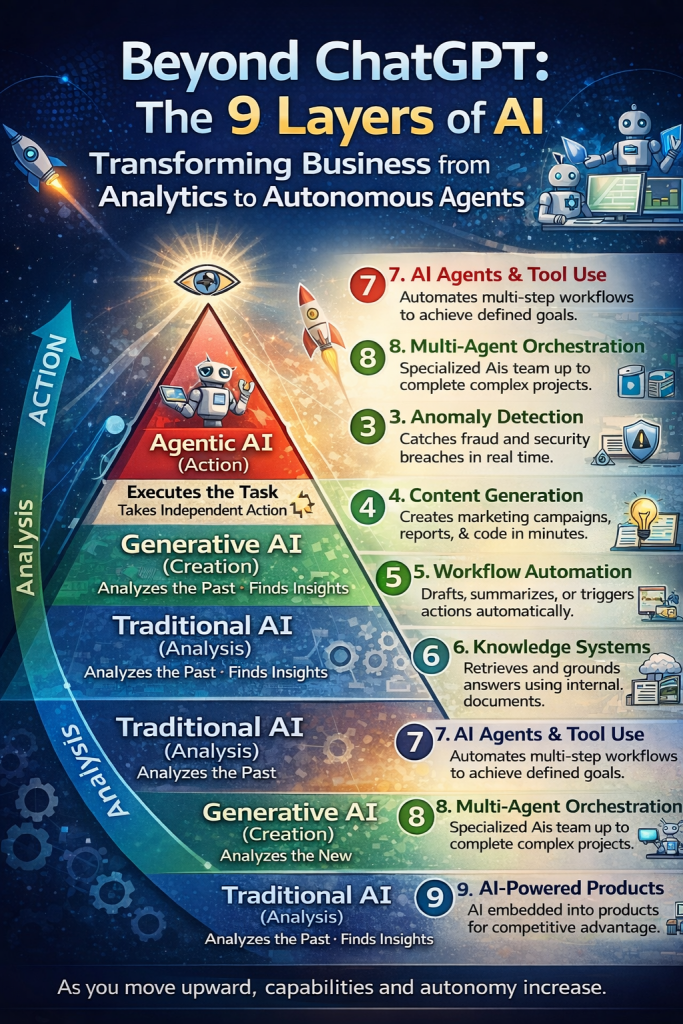

AI Security Is Fundamentally an API Security Problem

Modern AI systems—LLMs, agents, copilots—don’t operate in isolation. They interact through APIs:

- Prompts are API inputs

- Model inferences are API calls

- Actions are executed via downstream APIs

- Agents orchestrate workflows across multiple services

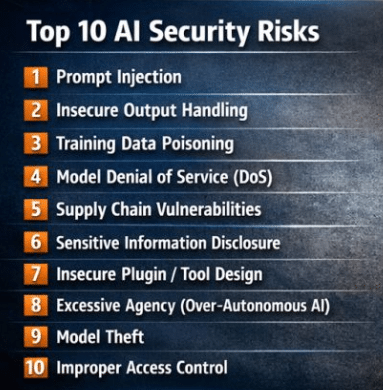

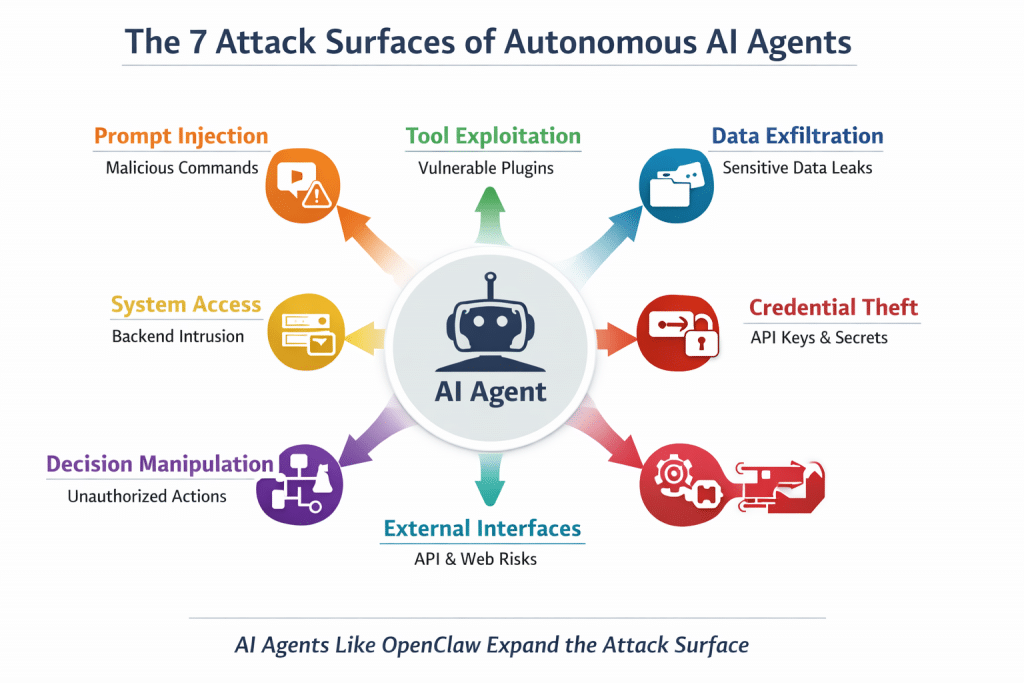

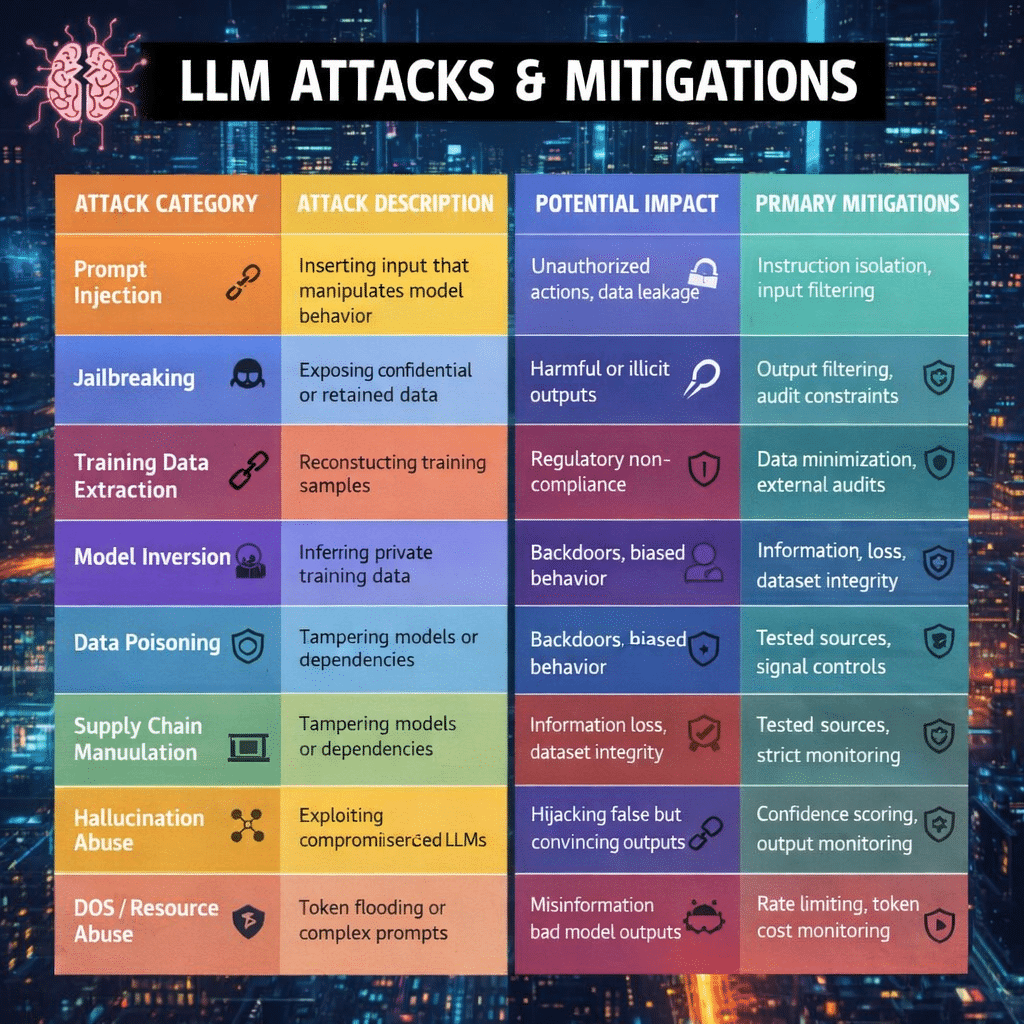

This means every AI risk—data leakage, prompt injection, unauthorized actions—manifests at runtime through APIs.

If you’re not enforcing controls at this layer, you’re not securing AI—you’re observing it.

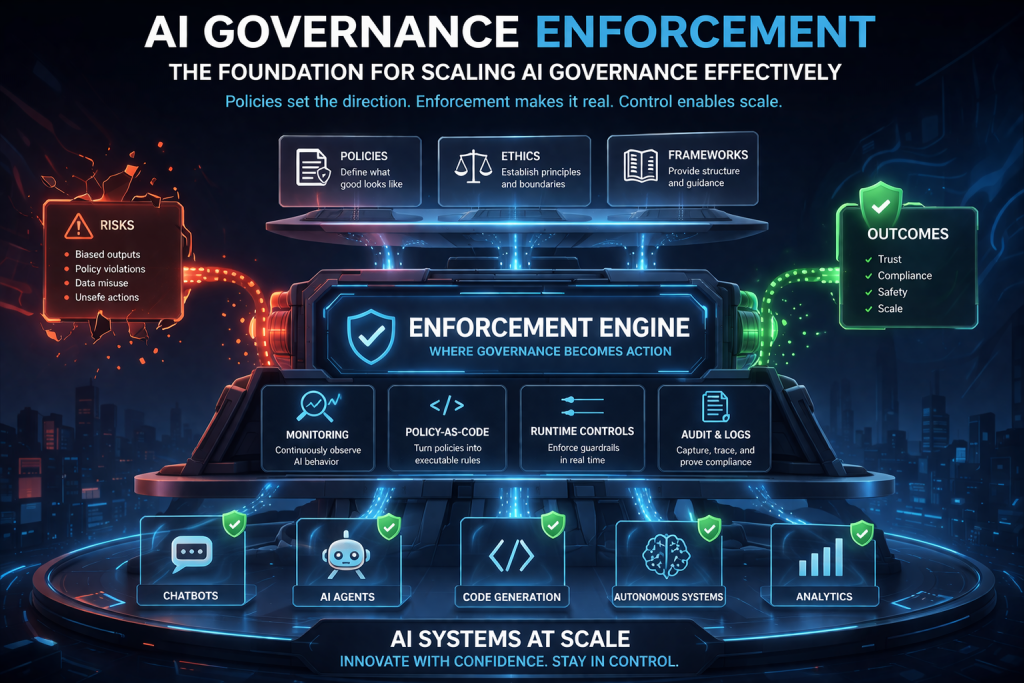

Real-Time Enforcement at the Core

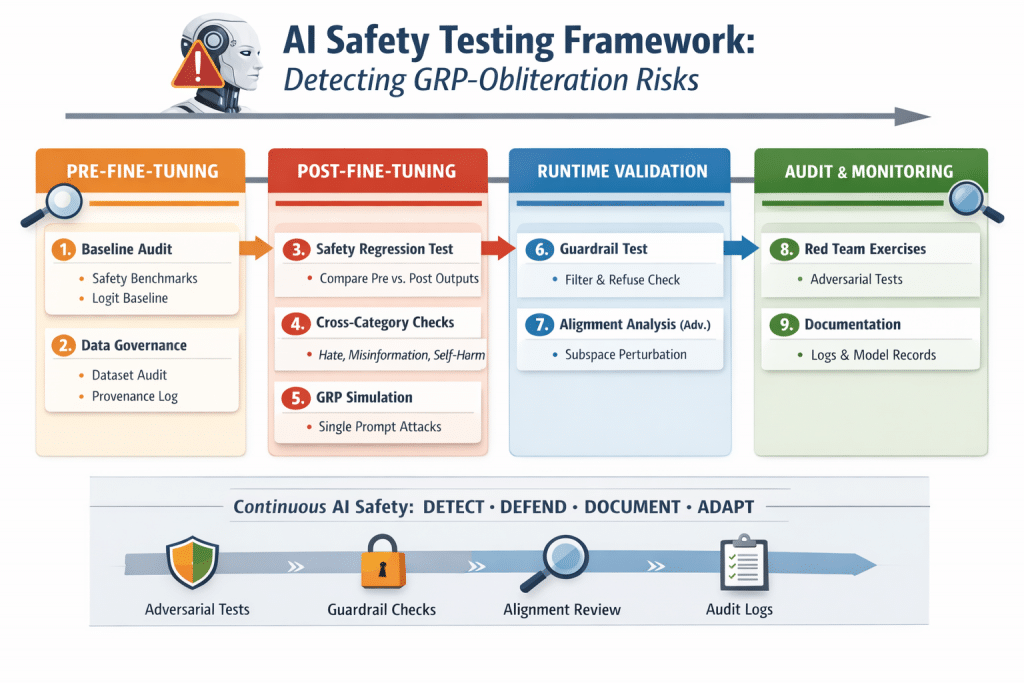

The most effective approach to AI governance is inline, real-time enforcement, and this is where modern platforms are stepping up.

A strong example is a three-layer enforcement engine that evaluates every interaction before it executes:

- Deterministic Rules → Clear, policy-driven controls (e.g., block sensitive data exposure)

- Semantic AI Analysis → Context-aware detection of risky or malicious intent

- Knowledge-Grounded RAG → Decisions informed by organizational policies and domain context

This layered approach enables precise, intelligent enforcement—not just static rule matching.

From Policy to Action: Enforcement Decisions That Matter

Real governance requires more than alerts. It requires decisions at runtime.

Effective enforcement platforms deliver outcomes such as:

- BLOCK → Stop high-risk actions immediately

- WARN → Notify users while allowing controlled execution

- MONITOR_ONLY → Observe without interrupting workflows

- APPROVAL_REQUIRED → Introduce human-in-the-loop controls

These decisions happen in real time on every API call, ensuring that governance is not delayed or bypassed.

Full-Lifecycle Policy Enforcement

AI risk doesn’t exist in just one place—it spans the entire interaction lifecycle. That’s why enforcement must cover:

- Prompts → Prevent injection, leakage, and unsafe inputs

- Data → Apply field-level conditions and protect sensitive information

- Actions → Control what agents and systems are allowed to execute

With session-aware tracking, enforcement can follow agents across workflows, maintaining context and ensuring policies are applied consistently from start to finish.

Controlling What Agents Can Do

As AI agents become more autonomous, the question is no longer just what they say—it’s what they do.

Policy-driven enforcement allows organizations to:

- Define allowed vs. restricted actions

- Control API-level execution permissions

- Enforce guardrails on agent behavior in real time

This shifts AI governance from passive oversight to active control.

Built for the API Economy

By integrating directly with APIs and modern orchestration layers, enforcement platforms can:

- Evaluate every request and response inline

- Return real-time decisions (ALLOW, BLOCK, WARN, APPROVAL_REQUIRED)

- Scale alongside high-throughput AI systems

This architecture aligns perfectly with how AI is actually deployed today—distributed, API-driven, and dynamic.

Perspective: Enforcement Is the Foundation of Scalable AI Governance

Most organizations are still focused on documenting policies and mapping controls. That’s necessary—but not sufficient.

The real shift happening now is this:

👉 AI governance is moving from documentation to enforcement.

👉 From static controls to runtime decisions.

👉 From visibility to action.

If AI operates at API speed, then governance must operate at the same speed.

Real-time enforcement is not just a feature—it’s the foundation for making AI governance work at scale.

Perspective: Why AI Governance Enforcement Is Critical

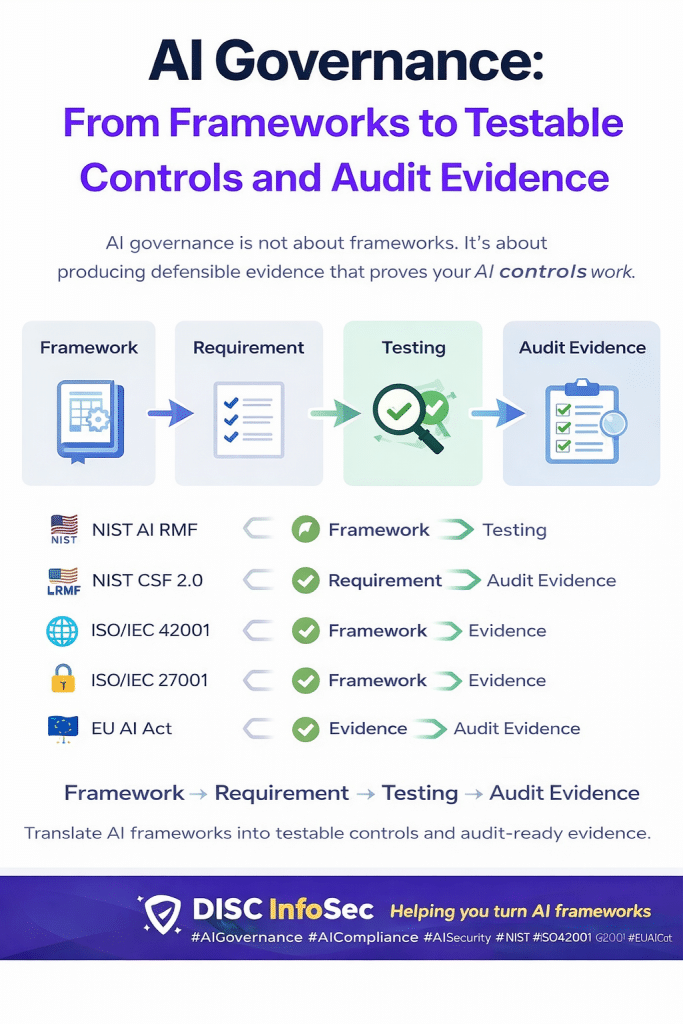

Most organizations are focusing on AI governance frameworks, but frameworks alone don’t reduce risk—enforcement does.

This is where many AI governance strategies fall apart.

AI systems are dynamic, API-driven, and often autonomous. Without real-time enforcement:

- Policies remain static documents

- Controls are inconsistently applied

- Risks emerge during actual execution—not design

AI governance enforcement bridges that gap. It ensures that:

- Prompts, responses, and agent actions are monitored in real time

- Policy violations are detected and blocked instantly

- Data exposure and misuse are prevented before impact

In short, enforcement turns governance from intent into control.

Bottom line:

If your AI governance strategy cannot demonstrate continuous monitoring, control, and enforcement, it is unlikely to stand up to audit—or real-world threats.

That’s why AI governance enforcement is not just a feature—it’s the foundation for making AI governance actually work at scale.

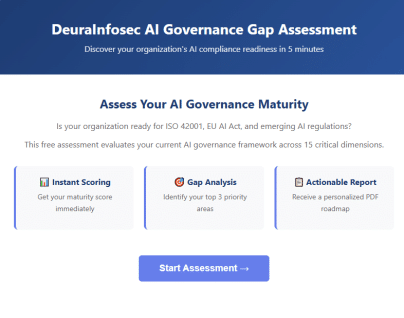

Ready to Operationalize AI Governance?

If you’re serious about moving from **AI governance theory → real enforcement**,

DISC InfoSec can help you build the control layer your AI systems need.

Most organizations have AI governance documents — but auditors now want proof of enforcement.

Policies alone don’t reduce AI risk. Real‑time monitoring, control, and enforcement do.

If your AI governance strategy can’t demonstrate continuous oversight, it won’t stand up to audit or real‑world threats.

DISC InfoSec helps organizations operationalize AI governance with integrated frameworks, runtime controls, and proven certification success.

Move from AI governance theory to enforcement.

Read the full post below: Is Your AI Governance Strategy Audit‑Ready — or Just Documented?

Schedule a free consultation or drop a comment below: info@deurainfosec.com

DISC InfoSec — Your partner for AI governance that actually works.

InfoSec services | InfoSec books | Follow our blog | DISC llc is listed on The vCISO Directory | ISO 27k Chat bot | Comprehensive vCISO Services | ISMS Services | AIMS Services | Security Risk Assessment Services | Mergers and Acquisition Security

Is your AI strategy truly audit-ready today?

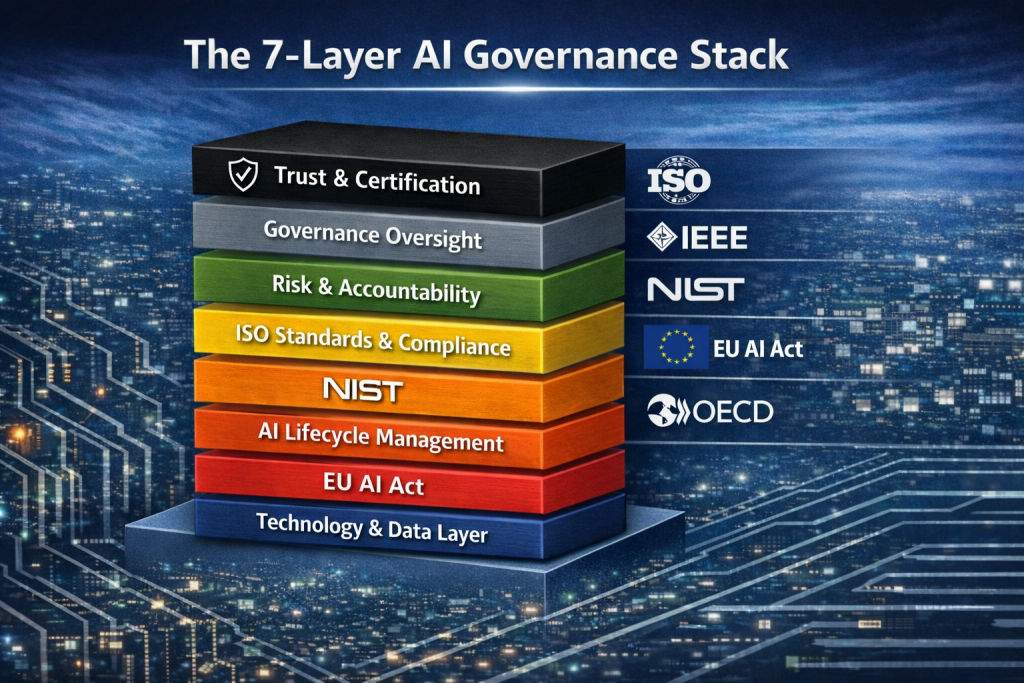

AI governance is no longer optional. Frameworks like ISO/IEC 42001 AI Management System Standard and regulations such as the EU AI Act are rapidly reshaping compliance expectations for organizations using AI.

DISC InfoSec brings deep expertise across AI, cybersecurity, and regulatory compliance to help you build trust, reduce risk, and stay ahead of evolving mandates—with a proven track record of success.

Ready to lead with confidence? Let’s start the conversation.

At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.

- Dirty Frag Explained: Chained Linux Kernel Flaws Deliver Root Access

- The AI Governance Triad: Why ISO 42001, NIST AI RMF, and the EU AI Act Are No Longer Optional

- LinkedIn Job Scams Are Surging: Why Your Hiring Pipeline Is Now an Attack Surface

- AI Governance by Default, Not by Design: Who Actually Owns It in Your Organization?

- The Adversary Already Adopted AI. Did Your Defense?