Who Actually Owns AI Governance? An InfoSec & AI Governance Reading of the IAPP Conversation

The IAPP’s Ashley Casovan, in a recent AdExchanger interview, surfaces what is quickly becoming the most uncomfortable question inside enterprise compliance functions: when an AI tool is deployed, who actually owns the governance of it? Privacy teams have spent years building muscle around data minimization, consent, dark patterns, and children’s data — and now AI is layering on a parallel set of obligations. Crucially, there is no clean line yet between privacy governance and AI governance, which makes the seemingly basic question of accountability surprisingly difficult to answer inside most organizations.

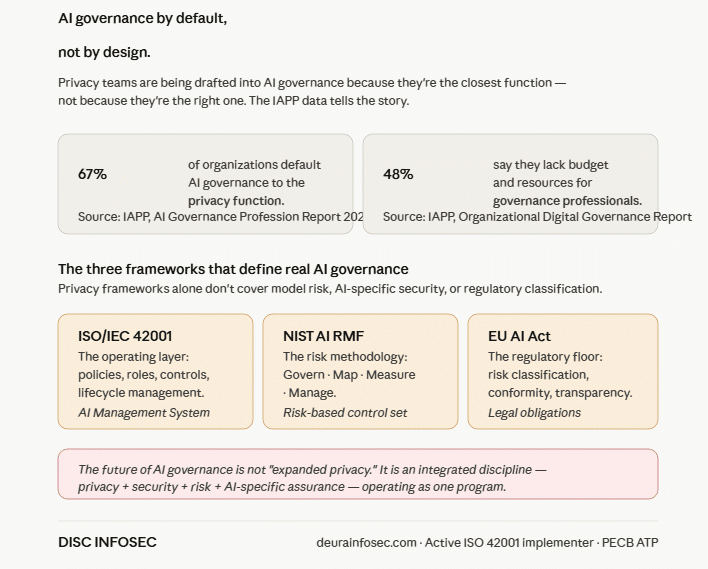

The IAPP’s own research underscores how unsettled this is. Forty-eight percent of organizations report insufficient budget and resources to invest in governance professionals, and sixty-seven percent say primary responsibility for AI governance currently sits inside the privacy function. Casovan is candid that survey-based research conducted with privacy professionals carries some bias, but even accounting for that, the signal is unmistakable: privacy teams are being pulled into AI governance work whether or not they were resourced for it, and the role itself is still being defined organization by organization.

Structurally, there is no consistent operating model. In some organizations, AI governance is simply bolted onto what privacy professionals are already doing. In others, it has evolved into a distinct, near-full-time function — with someone else taking over the residual privacy work. And it is not just privacy teams getting pulled in. Cybersecurity professionals, data governance teams, and increasingly internal audit and assurance functions are being drawn into AI work, with the specific mix dictated by organizational complexity, sector, and size.

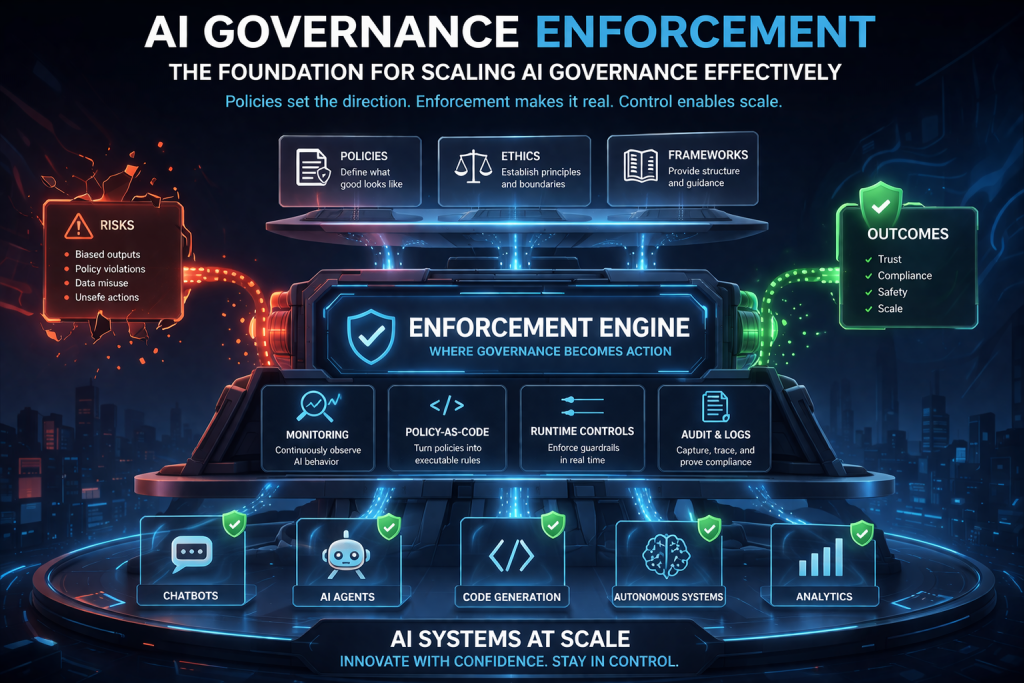

The actual scope of AI governance work is broad, spanning policy, compliance, technical evaluation, and ethics. On the policy side, it means translating high-level principles into concrete rules of use and standing up governance structures — committees, oversight boards, decision rights — so the right people are at the table when AI use cases come forward. On the compliance side, it means implementing and operationalizing frameworks like the NIST AI RMF. On the technical side, it means evaluating systems for bias and assessing the cybersecurity risks introduced through AI components. And layered above all of this is the assurance and ethics work — thinking through downstream impacts and, in regulated sectors, building independent audits and evaluations.

That scope has clear upskilling implications. A regulatory understanding remains foundational, but the modern AI governance role expects practitioners to move beyond a pure compliance lens and engage with technical evaluation methodologies. Casovan specifically flags assurance teams — including accountants and internal auditors — as a population now being asked to review AI systems, raising real questions about what training and tooling those professionals actually have to do that work credibly.

On the regulatory front, Casovan points to California as the bellwether for automated decision-making. The state’s combination of a large, diverse population and its concentration of major tech platforms is producing some of the most substantive and mature AI policy debates in the United States, and what gets resolved in California on automated decision-making is likely to influence other states. On consent, she draws a useful parallel: while advertising-driven AI ecosystems collect significant data passively under questionable consent conditions, more mature domains — pharmaceutical research, medical research — already have well-established guardrails around purpose limitation, downstream use, and data minimization that ad tech and other AI-heavy sectors can learn from.

So what does “good” actually look like today? Casovan lays out a clear sequence: first, know where AI is actually being used in your organization — this is harder than it sounds because AI features are increasingly being injected into existing systems through routine vendor updates (an “agentic AI chatbot” appearing overnight is now a real scenario). Second, define what good means for your organization through policies, standards, and internal principles. Third, stand up a governance mechanism with real decision rights and accountability. Fourth, evaluate potential harms and impacts on real people — not just risk-category checkboxes. Finally, understand jurisdiction-specific compliance obligations, including disclosure and recourse mechanisms. The opportunity, she argues, is for AI governance professionals to move beyond a check-the-box posture and surface the implementation realities the rest of the organization isn’t yet seeing.
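The sequence above can be sketched as a small inventory check. This is a minimal illustration, not Casovan's or the IAPP's method: the `AISystem` fields, the `governance_gaps` helper, and the sample entries (including the policy reference `AI-POL-001`) are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AISystem:
    """One entry in a hypothetical AI inventory (step one: know where AI is used)."""
    name: str
    added_by_vendor_update: bool = False      # AI feature arrived through a routine vendor update
    policy_reference: Optional[str] = None    # step two: internal policy or standard that applies
    owner: Optional[str] = None               # step three: who holds decision rights
    impact_assessed: bool = False             # step four: harms to real people evaluated
    jurisdictions: List[str] = field(default_factory=list)  # step five: where obligations apply


def governance_gaps(system: AISystem) -> List[str]:
    """Return the steps from the sequence that are still open for this system."""
    gaps = []
    if system.policy_reference is None:
        gaps.append("no policy or standard defined")
    if system.owner is None:
        gaps.append("no accountable owner")
    if not system.impact_assessed:
        gaps.append("harms and impacts not evaluated")
    if not system.jurisdictions:
        gaps.append("jurisdictions not identified")
    return gaps


# Hypothetical sample data for illustration only.
inventory = [
    AISystem("support-chatbot", added_by_vendor_update=True),
    AISystem(
        "credit-scoring-model",
        policy_reference="AI-POL-001",
        owner="AI governance committee",
        impact_assessed=True,
        jurisdictions=["California"],
    ),
]

for system in inventory:
    print(system.name, governance_gaps(system))
```

A chatbot that appeared through a vendor update shows up here with every step still open, which is the situation the first step is meant to catch.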

Professional Perspective (InfoSec & AI Governance)

The most important takeaway from Casovan’s interview is one she states almost in passing: AI governance is currently being shaped not by org design, but by org default. Privacy teams are being pulled in because they’re the closest existing function — not because they’re the right one. And while privacy professionals bring real value (data subject rights, regulatory fluency, harm-impact thinking), AI governance done well requires capabilities that extend well beyond the privacy lens: model risk evaluation, AI-specific cybersecurity (data poisoning, prompt injection, model exfiltration), supply-chain assurance for AI vendors, and ML-specific testing methodologies. When 67% of organizations are defaulting AI governance to privacy and 48% lack the budget to staff it properly, what you have is a structural under-resourcing problem disguised as an organizational ambiguity problem.

This is where I would push the conversation further than the interview does. The future state of AI governance is not “expanded privacy,” and it is not “rebadged GRC.” It is an integrated discipline that sits at the intersection of three frameworks that most organizations are still treating as separate: ISO/IEC 42001 for the AI Management System (the operating layer: policies, roles, controls, lifecycle management), NIST AI RMF for the risk methodology (Govern, Map, Measure, Manage), and the EU AI Act for the regulatory floor (risk classification, conformity assessment, transparency obligations). Privacy frameworks like GDPR and CCPA inform the data-handling layer, but they do not, on their own, govern the model itself, the system around the model, or the decisions the model produces. Organizations that try to retrofit AI governance into a privacy program will find the program straining within twelve months.

For practitioners and executives reading this, my recommendation is concrete: stop debating who owns AI governance in the abstract and start operationalizing it. Build an AI inventory mapped to ISO 42001 Annex A controls. Stand up a cross-functional AI governance committee with explicit decision rights, with privacy, security, legal, data governance, and a business sponsor at the table. Define AI-specific vendor assurance that goes beyond a SOC 2 letter. Establish board-level reporting that treats AI adoption velocity as a measurable risk indicator. And invest in upskilling, particularly for the assurance and audit functions that are about to be handed AI review responsibilities they were never trained for. The organizations that get this right won’t necessarily have the most sophisticated AI. They’ll have the operational discipline to defend, in front of a regulator or an enterprise customer, exactly why their AI behaves the way it does. That defensibility is the actual deliverable of AI governance, and it’s the work we do at DISC InfoSec every day.
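One way to make “AI adoption velocity” measurable for a board report is to count how many AI systems are first observed each month and what share has not yet been reviewed. The sketch below is an assumption about how that could be computed; the record layout, the `adoption_velocity` and `unreviewed_ratio` helpers, and the sample dates are hypothetical, not a prescribed metric.

```python
from collections import Counter
from datetime import date


def adoption_velocity(systems):
    """Count AI systems first observed in each calendar month (YYYY-MM)."""
    counts = Counter(s["first_seen"].strftime("%Y-%m") for s in systems)
    return dict(sorted(counts.items()))


def unreviewed_ratio(systems):
    """Share of inventoried systems the governance committee has not reviewed."""
    if not systems:
        return 0.0
    unreviewed = sum(1 for s in systems if not s["reviewed"])
    return unreviewed / len(systems)


# Hypothetical inventory records for illustration only.
systems = [
    {"name": "support-chatbot", "first_seen": date(2025, 1, 14), "reviewed": False},
    {"name": "resume-screener", "first_seen": date(2025, 1, 30), "reviewed": True},
    {"name": "code-assistant", "first_seen": date(2025, 2, 3), "reviewed": False},
    {"name": "fraud-model", "first_seen": date(2025, 2, 20), "reviewed": True},
]

print(adoption_velocity(systems))
print(unreviewed_ratio(systems))
```

A rising monthly count paired with a rising unreviewed share would suggest that adoption is outpacing governance capacity, which is the signal the board indicator is meant to expose.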

DISC InfoSec is an active ISO 42001 implementer (ShareVault / Pandesa Corporation) and PECB Authorized Training Partner specializing in integrated AI governance — ISO 42001, ISO 27001, NIST AI RMF, and EU AI Act — for B2B SaaS and financial services organizations. If “who owns AI governance?” is an open question in your organization, that is the conversation we have. Reach out at info@deurainfosec.com.

