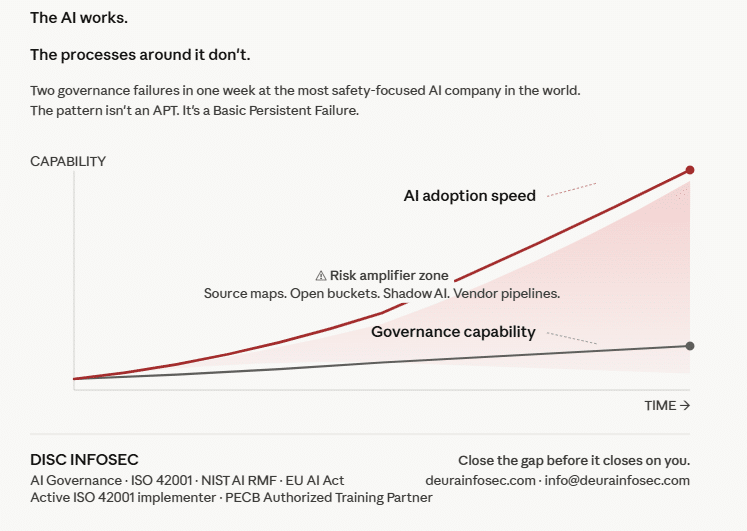

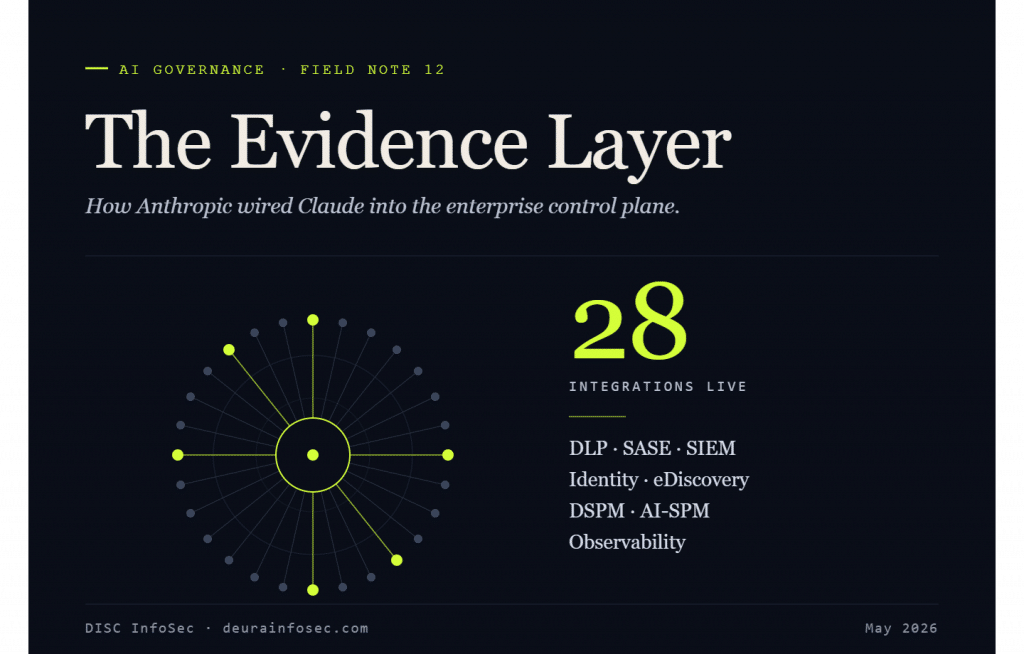

As enterprise adoption of generative AI accelerates, the operational gap between “AI as productivity tool” and “AI as governed enterprise application” has widened. Anthropic has moved to close that gap by introducing 28 integrations with security and compliance tools that allow IT and security teams to manage Claude in the same way they manage other applications in their environments. The announcement reframes Claude from a standalone SaaS product into a workload that fits inside an organization’s existing control plane.

The technical foundation for this is the newly introduced Claude Compliance API, a REST API that gives enterprise IT and security teams programmatic access to Claude activity data. It replaces manual exports and periodic reviews with real-time access to usage data and customer content, which makes continuous monitoring and automated policy enforcement possible. In other words, Anthropic is treating governance signals as first-class telemetry rather than as an after-the-fact audit artifact.
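To make the shift from periodic exports to continuous access concrete, here is a minimal sketch of an incremental pull loop. It is not the documented Compliance API: the page shape (`items`, `next_cursor`), the `fetch_page` callable, and the record IDs are all assumptions, and an in-memory stub stands in for the authenticated HTTP call.

```python
from typing import Callable, Iterator, Optional

# Hypothetical page shape: {"items": [...], "next_cursor": str or None}.
# This is a stand-in, not the real Compliance API schema.
Page = dict
FetchPage = Callable[[Optional[str]], Page]


def iter_records(fetch_page: FetchPage,
                 start_cursor: Optional[str] = None) -> Iterator[dict]:
    """Yield records page by page until the source returns no further cursor."""
    cursor = start_cursor
    while True:
        page = fetch_page(cursor)
        yield from page.get("items", [])
        cursor = page.get("next_cursor")
        if cursor is None:
            break


def fake_fetch(cursor: Optional[str]) -> Page:
    """In-memory stub replacing an authenticated HTTP GET (assumption)."""
    pages = {
        None: {"items": [{"id": "evt_1"}, {"id": "evt_2"}], "next_cursor": "c1"},
        "c1": {"items": [{"id": "evt_3"}], "next_cursor": None},
    }
    return pages[cursor]


record_ids = [record["id"] for record in iter_records(fake_fetch)]
print(record_ids)
```

Persisting the last cursor between runs is what would turn a loop like this into continuous monitoring rather than a repeated full export.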

Two data domains are exposed through the API. The first covers conversation content from Claude Enterprise — chats, uploaded files, and projects — which organizations can pipe into their existing security, monitoring, and data loss prevention pipelines. This is the layer where sensitive data exposure, prompt-side leakage, and content-policy violations get detected.
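As a rough illustration of what detection on the content layer might look like, the sketch below scans a conversation record for a few sensitive-data patterns. The record fields (`conversation_id`, `text`) and the detector set are assumptions made for this example; a commercial DLP engine uses far richer classifiers than three regular expressions.

```python
import re
from dataclasses import dataclass
from typing import List

# Illustrative detectors only (assumption); real DLP policies are much broader.
PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


@dataclass(frozen=True)
class Finding:
    conversation_id: str
    detector: str
    match_count: int  # a count, not the matched value, to avoid copying secrets


def scan_conversation(record: dict) -> List[Finding]:
    """Return one Finding per detector that matched the conversation text."""
    text = record.get("text", "")
    findings = []
    for name, pattern in PATTERNS.items():
        hits = pattern.findall(text)
        if hits:
            findings.append(Finding(record["conversation_id"], name, len(hits)))
    return findings


# Hypothetical record shape, not the real API payload.
sample = {
    "conversation_id": "conv_123",
    "text": "Customer SSN is 123-45-6789, please draft the letter.",
}
findings = scan_conversation(sample)
print(findings)
```

Recording match counts instead of matched strings keeps the findings store from becoming a second copy of the sensitive data it is meant to flag.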

The second domain covers activity events from Claude Enterprise and the Claude Platform, including user logins, administrative actions, and configuration changes. This is the audit-trail layer that satisfies access governance, change management, and forensic reconstruction requirements — the kind of evidence external auditors actually open tickets about.
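The audit-trail use case can be sketched the same way. In the example below, the event type names, the `actor` field, and the sample timestamps are hypothetical and not taken from the API documentation. The point is only to show how administrative and configuration events might be separated from routine logins for review.

```python
from collections import Counter
from typing import Dict, List, Tuple

# Hypothetical event type names; the real API's vocabulary may differ.
HIGH_RISK_TYPES = {
    "admin.role_changed",
    "config.sso_disabled",
    "config.retention_changed",
}


def audit_view(events: List[Dict]) -> Tuple[List[Dict], Counter]:
    """Return the events that need review and a per-actor count of them."""
    flagged = [event for event in events if event["type"] in HIGH_RISK_TYPES]
    per_actor = Counter(event["actor"] for event in flagged)
    return flagged, per_actor


# Sample data invented for illustration.
events = [
    {"type": "user.login", "actor": "alice@example.com", "ts": "2025-01-01T09:00:00Z"},
    {"type": "admin.role_changed", "actor": "bob@example.com", "ts": "2025-01-01T09:05:00Z"},
    {"type": "config.sso_disabled", "actor": "bob@example.com", "ts": "2025-01-01T09:06:00Z"},
]
flagged, per_actor = audit_view(events)
print(len(flagged), dict(per_actor))
```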

The 28 launch partners span a broad swath of the enterprise security stack: DLP, SASE, data security, SIEM, security operations, identity management, eDiscovery, AI security posture management, and observability. The named providers include Cloudflare, Cribl, CrowdStrike, Cyera, Datadog, Forcepoint, Fortinet, Geordie AI, IBM Guardium, Microsoft Purview, Mimecast, Netskope, Okta, Palo Alto Networks, Proofpoint, Relativity, ReliaQuest, Rubrik, SailPoint, Smarsh, Snyk, Sumo Logic, Tenable, Theta Lake, Trellix, Varonis, Wiz, and Zscaler. The breadth signals that Anthropic is meeting enterprises wherever their existing investment already sits.

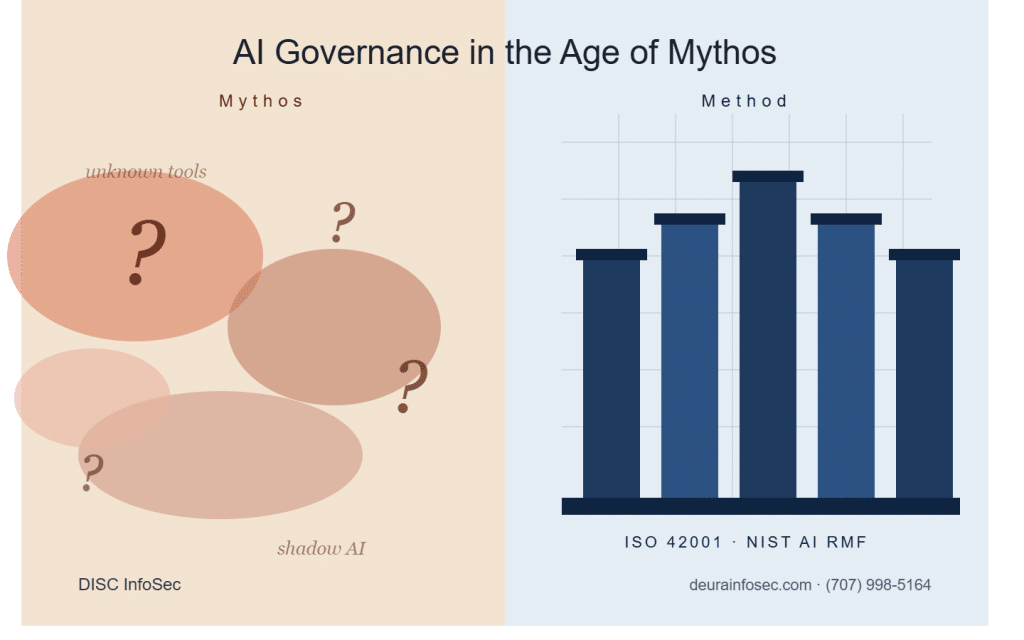

The promised user experience is deliberately undramatic. For organizations already running one of these platforms, enabling coverage over Claude usage involves connecting and configuring the Claude instance so the data flows into the same dashboards and alerting workflows used for everything else. That framing matters: governance friction is a major driver of shadow AI, and “it shows up in your existing SIEM” is a far more compelling story to a CISO than “stand up a parallel monitoring stack for AI.”

Taken together, the move positions Claude as governable infrastructure rather than an unmanaged endpoint. It directly addresses the most common objection raised in enterprise AI risk assessments — that AI usage is opaque, ungoverned, and lives outside the controls that already govern email, file shares, and SaaS. By exposing both content and activity telemetry through a documented API, Anthropic is essentially handing customers the evidence base required to demonstrate operational controls during audits.

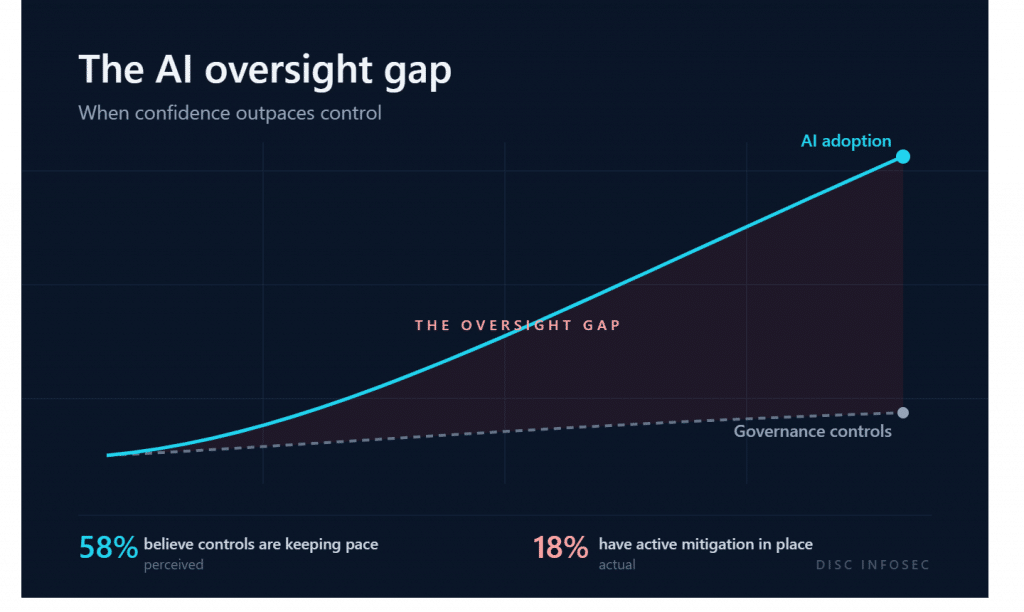

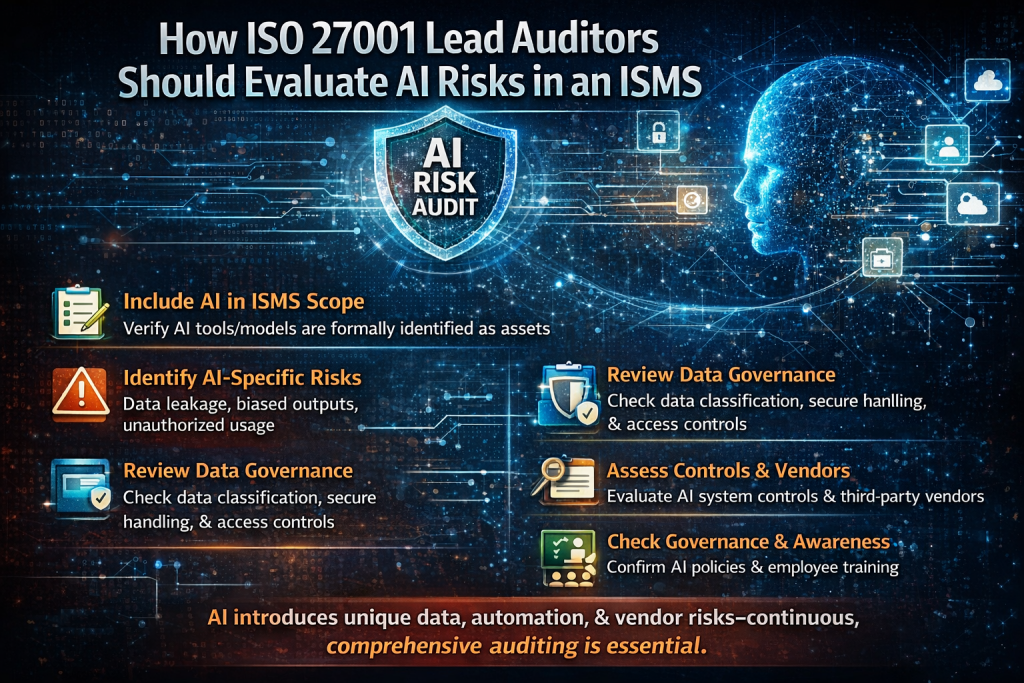

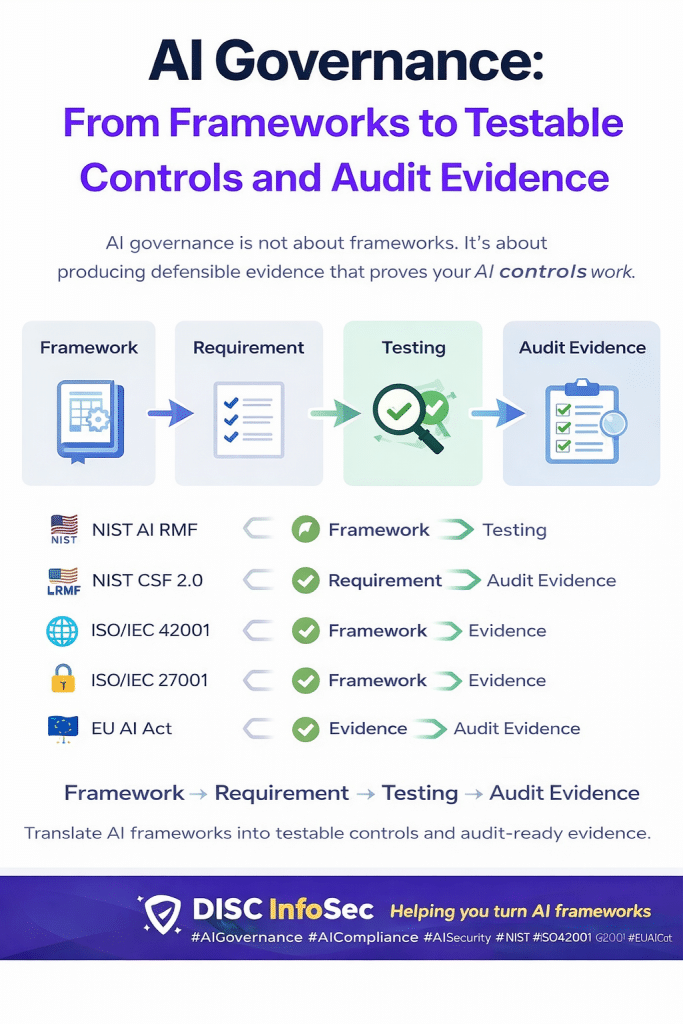

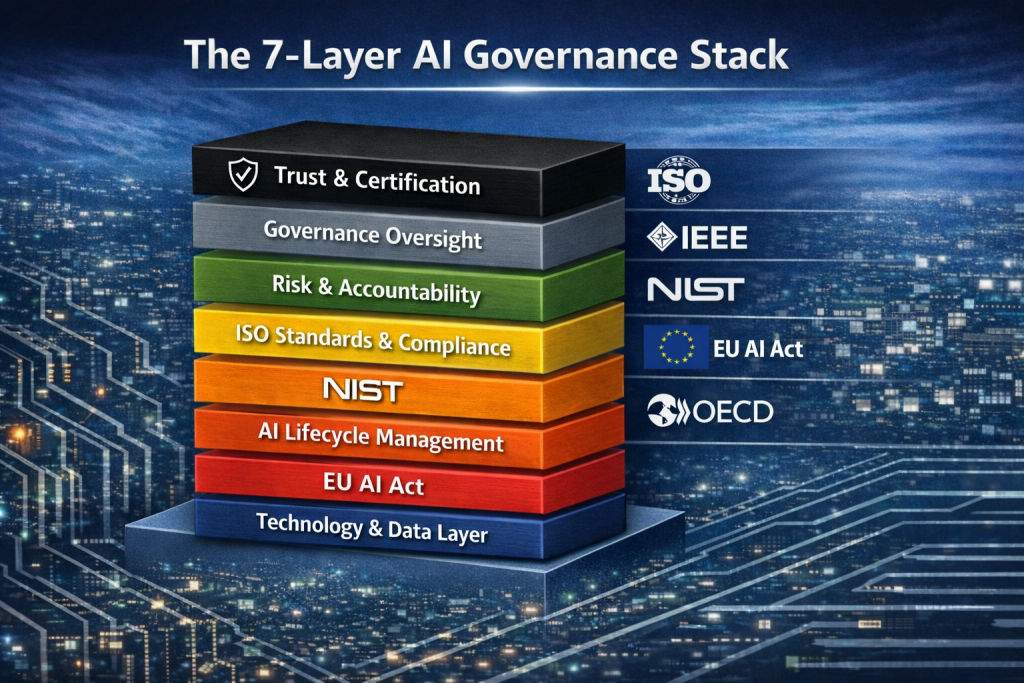

My perspective: this is one of the more consequential governance announcements from a frontier lab to date, and it deserves attention from anyone implementing ISO 42001, NIST AI RMF, or the EU AI Act in practice. Most AI governance programs I see fail not at the policy layer but at the evidence layer. In ISO 42001, Annex A controls A.6.2.6 (AI system operation and monitoring) and A.6.2.8 (AI system recording of event logs), along with Clause 9 (performance evaluation), all require demonstrable, ongoing oversight of AI system use, and until now that evidence has typically been cobbled together from screenshot exports and vendor attestations. A Compliance API that streams content and activity data into Purview, Netskope, or Varonis converts those requirements from aspirational language into something an internal auditor can actually sample. It also collapses the artificial boundary between “AI governance” and “information security governance,” which is the right outcome: AI systems are information systems, and treating them as a separate compliance silo has always been a structural mistake.

That said, two cautions are worth flagging. First, the API gives you the capability to monitor; it does not give you the program. Without a defined AI acceptable use policy, a classified inventory of AI use cases, role-based access boundaries, and a triage workflow for what to do when DLP fires on a Claude conversation, the telemetry just becomes noise in another dashboard. Second, ingesting conversation content into DLP and eDiscovery tools creates new data-protection obligations of its own — privacy impact assessments, retention schedules, and access controls on the captured prompts and outputs themselves. Organizations should plan for the governance of the governance data before turning the firehose on. For practitioners building toward ISO 42001 certification or a Stage 2 audit, this announcement is the kind of vendor-provided control surface that materially shortens the path to demonstrable conformity — provided the management system around it is actually built.
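The second caution above, governing the captured data itself, can be made concrete with a small retention check. This is a sketch under stated assumptions: the record kinds, the retention periods, and the record fields are hypothetical placeholders for whatever an organization's own retention schedule defines.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List

# Hypothetical retention schedule; actual periods are a policy decision.
RETENTION = {
    "raw_conversation": timedelta(days=30),
    "dlp_finding": timedelta(days=365),
}


def expired_ids(records: List[Dict], now: datetime) -> List[str]:
    """Return IDs of captured records held longer than their kind allows."""
    return [
        record["id"]
        for record in records
        if now - record["captured_at"] > RETENTION[record["kind"]]
    ]


# Sample data invented for illustration.
utc = timezone.utc
now = datetime(2025, 6, 1, tzinfo=utc)
records = [
    {"id": "r1", "kind": "raw_conversation", "captured_at": datetime(2025, 4, 1, tzinfo=utc)},
    {"id": "r2", "kind": "dlp_finding", "captured_at": datetime(2025, 1, 1, tzinfo=utc)},
    {"id": "r3", "kind": "raw_conversation", "captured_at": datetime(2025, 5, 20, tzinfo=utc)},
]
to_purge = expired_ids(records, now)
print(to_purge)
```

Keeping raw content on a much shorter clock than derived findings is one possible design choice. It limits how long prompts and outputs sit in a secondary system while preserving the audit evidence.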

DISC InfoSec is an active ISO 42001 implementer and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations.
