API Security — what it is and why it matters

API security is the practice of protecting application programming interfaces (APIs) from unauthorized access, abuse, and data exposure. APIs are the connective tissue between systems: apps, services, partners, and now AI models. Because they expose business logic and sensitive data directly, a single weak API can give attackers a path around traditional perimeter defenses. With some industry estimates putting API calls at over 80% of web traffic, attackers increasingly target APIs to exploit authentication flaws, misconfigurations, and excessive data exposure. In short, if your APIs are vulnerable, your core systems are exposed.

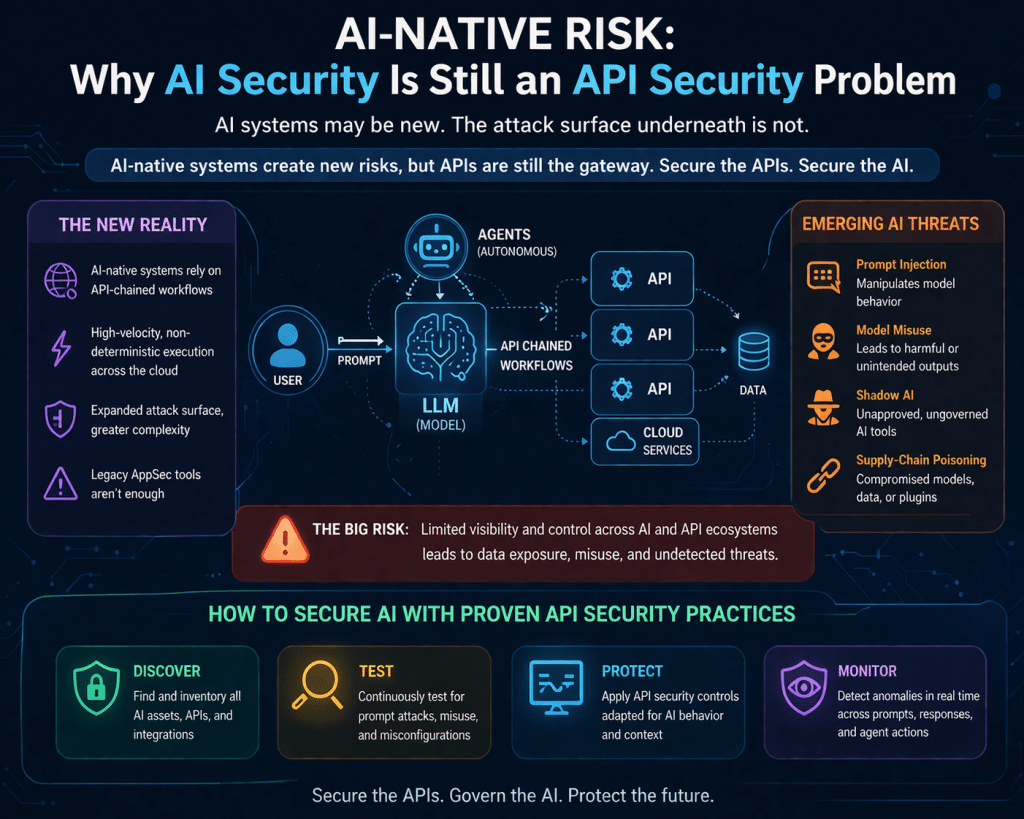

Why API security is critical (even more so with AI in the mix)

If you’re already using AI tools, API security becomes non-negotiable. Most AI systems—LLMs, agents, automation workflows—rely heavily on APIs for data retrieval, decision-making, and action execution. That means every AI capability you deploy expands your API attack surface. A vulnerable API can allow attackers to manipulate inputs to AI models, extract sensitive data, or trigger unintended actions. AI doesn’t reduce risk—it amplifies it if the underlying APIs aren’t secured and tested.
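One common mitigation for the risk of an agent triggering unintended actions is to treat every action it proposes as untrusted input. Each proposed action is checked against an explicit allowlist, and its arguments are validated before any API is called. The sketch below is a minimal illustration under stated assumptions. The tool-call shape (a dict with `action` and `args`), the action names, and the order-ID rule are all hypothetical and do not come from any particular agent framework.

```python
from typing import Any, Callable

Validator = Callable[[dict[str, Any]], bool]


def _order_id_only(args: dict[str, Any]) -> bool:
    """Accept exactly one argument: an alphanumeric order ID of bounded length."""
    order_id = args.get("order_id")
    return (
        set(args) == {"order_id"}
        and isinstance(order_id, str)
        and order_id.isalnum()
        and 0 < len(order_id) <= 20
    )


# Hypothetical allowlist of read-only actions the agent may request.
# Destructive actions (refunds, deletes) are deliberately absent.
ALLOWED_ACTIONS: dict[str, Validator] = {
    "get_order_status": _order_id_only,
    "get_shipping_eta": _order_id_only,
}


def gate_tool_call(call: dict[str, Any]) -> tuple[bool, str]:
    """Decide whether a model-proposed tool call may be forwarded to the API."""
    action = call.get("action")
    args = call.get("args", {})
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return False, f"action not allowlisted: {action!r}"
    if not isinstance(args, dict) or not ALLOWED_ACTIONS[action](args):
        return False, f"invalid arguments for {action}"
    return True, "ok"


if __name__ == "__main__":
    print(gate_tool_call({"action": "get_order_status", "args": {"order_id": "A123"}}))
    print(gate_tool_call({"action": "issue_refund", "args": {"order_id": "A123"}}))
    print(gate_tool_call({"action": "get_order_status", "args": {"order_id": "1; DROP TABLE"}}))
```

The gate refuses by default. A prompt-injected request for an unlisted action such as `issue_refund` therefore never reaches the backend, and adding a capability becomes a deliberate, reviewable change to the allowlist.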

Why API security matters for AI Governance

AI governance is about accountability, control, and trust in how AI systems operate. APIs are the execution layer of AI governance—they enforce (or fail to enforce) policy. If APIs lack proper authentication, authorization, rate limiting, or logging, then governance controls are effectively bypassed. You cannot claim governance if you cannot control who accesses your AI systems, what data they use, and what actions they perform. API security is therefore foundational to enforcing AI policies, auditability, and responsible use.
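The sketch below makes authentication, authorization, rate limiting, and logging concrete. It shows the checks an API gateway or middleware layer might run before a request reaches an AI model. Everything in it is hypothetical and in-memory: the demo keys, the scope names (`model:query`, `model:configure`), and the limit of roughly one request per second with a burst of three. A production system would instead rely on a real identity provider and shared rate-limit state.

```python
import hashlib
import logging
import time
from dataclasses import dataclass, field

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
audit_log = logging.getLogger("api.audit")


def _digest(api_key: str) -> str:
    """Store keys as SHA-256 digests so a leaked key store does not directly reveal keys."""
    return hashlib.sha256(api_key.encode()).hexdigest()


# Hypothetical key store: key digest -> (client id, granted scopes).
API_KEYS: dict[str, tuple[str, set[str]]] = {
    _digest("demo-key-analyst"): ("analyst-bot", {"model:query"}),
    _digest("demo-key-admin"): ("ops-admin", {"model:query", "model:configure"}),
}


@dataclass
class TokenBucket:
    """Token-bucket limiter: refills `rate` tokens per second, holds at most `capacity`."""
    rate: float
    capacity: float
    tokens: float = field(init=False)
    updated: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.updated = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


buckets: dict[str, TokenBucket] = {}


def check_request(api_key: str, required_scope: str) -> int:
    """Return the HTTP status a gateway would send: 200, 401, 403, or 429."""
    entry = API_KEYS.get(_digest(api_key))
    if entry is None:  # authentication: unknown key
        audit_log.info("deny 401 scope=%s", required_scope)
        return 401
    client, scopes = entry
    if required_scope not in scopes:  # authorization: key lacks the scope
        audit_log.info("deny 403 client=%s scope=%s", client, required_scope)
        return 403
    bucket = buckets.get(client)
    if bucket is None:
        bucket = buckets[client] = TokenBucket(rate=1.0, capacity=3.0)
    if not bucket.allow():  # rate limiting: per-client budget exhausted
        audit_log.info("deny 429 client=%s scope=%s", client, required_scope)
        return 429
    audit_log.info("allow 200 client=%s scope=%s", client, required_scope)
    return 200


if __name__ == "__main__":
    print(check_request("wrong-key", "model:query"))
    print(check_request("demo-key-analyst", "model:configure"))
    print([check_request("demo-key-analyst", "model:query") for _ in range(4)])
```

The order of the checks matters. Authentication (401) comes before authorization (403), which comes before throttling (429). Every decision, including each denial, is written to the audit log so governance reviews have a trail to inspect.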

Why API security matters for security, compliance, and privacy

From a security standpoint, APIs are a primary entry point for attacks such as broken authentication, privilege escalation, and data exfiltration. From a compliance perspective (ISO 27001, SOC 2, HIPAA, GDPR, etc.), APIs must enforce access controls, protect sensitive data, and maintain audit trails. From a privacy standpoint, APIs routinely carry personally identifiable information (PII), making them high-risk vectors for breaches. A single vulnerable API can therefore breach the requirements of several frameworks and regulations at once.
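Response-field allowlisting is one practical control that serves security, compliance, and privacy at once. The API returns only the fields a given audience is entitled to see, rather than serializing whole database records and hoping clients ignore the rest. The sketch below assumes a hypothetical user record and two hypothetical audiences (`public` and `self`). It also masks email addresses for audit logs, so the trail exists without storing raw PII.

```python
from typing import Any

# Hypothetical internal record; several fields are PII or secrets.
USER_RECORD: dict[str, Any] = {
    "id": 42,
    "display_name": "jdoe",
    "email": "jdoe@example.com",
    "ssn": "000-00-0000",
    "password_hash": "not-a-real-hash",
    "role": "member",
}

# Per-audience allowlists. Fields not listed are never returned.
RESPONSE_FIELDS: dict[str, set[str]] = {
    "public": {"id", "display_name"},
    "self": {"id", "display_name", "email", "role"},
}


def serialize_user(record: dict[str, Any], audience: str) -> dict[str, Any]:
    """Return only the fields this audience may see; an unknown audience gets nothing."""
    allowed = RESPONSE_FIELDS.get(audience, set())
    return {k: v for k, v in record.items() if k in allowed}


def mask_email(email: str) -> str:
    """Keep the first character and the domain, e.g. for audit-log entries."""
    local, sep, domain = email.partition("@")
    if not sep or not local:
        return "***"
    return f"{local[0]}***@{domain}"


if __name__ == "__main__":
    print(serialize_user(USER_RECORD, "public"))  # {'id': 42, 'display_name': 'jdoe'}
    print(serialize_user(USER_RECORD, "self"))
    print(mask_email(USER_RECORD["email"]))  # j***@example.com
```

An allowlist fails closed: a column added to the table later stays private until someone explicitly exposes it. A denylist would leak the same column by default.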

Context: why your API definition file matters

A 403 Forbidden response when requesting the API definition by URL means access is restricted, which is good. It also highlights a gap: without the OpenAPI/Swagger definition (JSON or YAML), a thorough security assessment cannot be performed. Modern API security testing, especially AI-assisted scanning, depends on structured API definitions to understand endpoints, parameters, authentication flows, and data models. Without one, testing is incomplete and blind to deeper vulnerabilities.
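The sketch below illustrates why the definition file matters by building a basic inventory from an OpenAPI 3.x document. For every operation it lists the parameters and the effective security requirements. It follows the specification's rule that an operation-level `security` list overrides the top-level default. Under that rule, an empty list removes authentication, and an empty requirement object (`{}`) makes authentication optional. The embedded spec is a made-up example. The sketch reads JSON only (YAML would need a parser such as PyYAML) and does not resolve `$ref` parameters.

```python
import json
from typing import Any

HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}

# Made-up OpenAPI 3.0 document used only for this illustration.
SPEC: dict[str, Any] = json.loads("""
{
  "openapi": "3.0.3",
  "info": {"title": "Demo API", "version": "1.0"},
  "components": {"securitySchemes": {"bearerAuth": {"type": "http", "scheme": "bearer"}}},
  "security": [{"bearerAuth": []}],
  "paths": {
    "/users/{id}": {
      "parameters": [{"name": "id", "in": "path", "required": true, "schema": {"type": "string"}}],
      "get": {"responses": {"200": {"description": "User"}}}
    },
    "/health": {
      "get": {"security": [], "responses": {"200": {"description": "OK"}}}
    },
    "/admin/export": {
      "post": {"security": [{}, {"bearerAuth": []}], "responses": {"200": {"description": "Export"}}}
    }
  }
}
""")


def inventory(spec: dict[str, Any]) -> list[dict[str, Any]]:
    """List each operation with its parameter names and effective security."""
    default_security = spec.get("security", [])
    rows = []
    for path, item in spec.get("paths", {}).items():
        path_params = item.get("parameters", [])
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters" or "summary"
            # Operation-level security replaces the top-level default entirely.
            security = op.get("security", default_security)
            params = [p.get("name") or p.get("$ref", "?")
                      for p in path_params + op.get("parameters", [])]
            rows.append({
                "operation": f"{method.upper()} {path}",
                "params": params,
                "schemes": sorted({name for req in security for name in req}),
                # No requirements, or an empty {} alternative, means anonymous access works.
                "unauthenticated": not security or any(not req for req in security),
            })
    return rows


if __name__ == "__main__":
    for row in inventory(SPEC):
        flag = "  <-- callable without credentials" if row["unauthenticated"] else ""
        print(f'{row["operation"]:<20} params={row["params"]} auth={row["schemes"]}{flag}')
```

Even this shallow pass surfaces `POST /admin/export` as callable without credentials. That kind of finding is hard to spot without the definition file.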

Why API vulnerability assessment is imperative

API vulnerabilities are not theoretical: attackers routinely use them for privilege escalation, moving from basic access to administrative control. Given the volume of API traffic and how directly APIs expose business logic, continuous API assessment is essential. It is even more critical when APIs are called by AI systems, where a single flaw can be repeated across automated decisions at scale.
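Privilege escalation through APIs usually comes down to a missing authorization check on the server. The sketch below shows two such checks, using hypothetical names and data. The first is an object-level check (may this caller read this particular invoice?) that blocks horizontal escalation. The second is a field allowlist on profile updates that stops a member from promoting themselves by sending `{"role": "admin"}`. It illustrates the pattern only; a real system would load roles and ownership from an authoritative store.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Caller:
    """Identity established by authentication; never taken from the request body."""
    user_id: int
    role: str  # hypothetical roles: "member" or "admin"


class Forbidden(Exception):
    """Authenticated, but not allowed to perform this operation (maps to HTTP 403)."""


# Hypothetical data: invoice id -> id of the user who owns it.
INVOICE_OWNERS = {1001: 7, 1002: 8}

# Profile fields a regular member may change about themselves.
MEMBER_EDITABLE_FIELDS = {"display_name", "timezone"}


def get_invoice(caller: Caller, invoice_id: int) -> dict[str, Any]:
    """Object-level authorization: the caller must own the invoice or be an admin."""
    owner = INVOICE_OWNERS.get(invoice_id)
    if owner is None:
        raise KeyError(invoice_id)
    if caller.role != "admin" and owner != caller.user_id:
        raise Forbidden(f"user {caller.user_id} may not read invoice {invoice_id}")
    return {"id": invoice_id, "owner": owner}


def update_profile(caller: Caller, profile: dict[str, Any], payload: dict[str, Any]) -> dict[str, Any]:
    """Reject privileged fields (e.g. "role") unless the caller is an admin."""
    disallowed = set(payload) - MEMBER_EDITABLE_FIELDS
    if disallowed and caller.role != "admin":
        raise Forbidden(f"fields not editable: {sorted(disallowed)}")
    return {**profile, **payload}


if __name__ == "__main__":
    alice = Caller(user_id=7, role="member")
    print(get_invoice(alice, 1001))
    for attempt in (lambda: get_invoice(alice, 1002),
                    lambda: update_profile(alice, {"role": "member"}, {"role": "admin"})):
        try:
            attempt()
        except Forbidden as exc:
            print("denied:", exc)
```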

My perspective

API security is no longer a technical subdomain—it’s the control plane of modern digital and AI ecosystems. If your APIs are not fully inventoried, documented, and continuously tested, your security posture is incomplete—regardless of how strong your traditional controls are. In the AI era, API security is governance. It’s where policy meets execution. And without visibility (API definitions) and validation (security testing), you’re operating on trust rather than control—which is exactly where attackers thrive.

Secure APIs: Design, build, and implement

Is your AI strategy truly audit-ready today?

AI governance is no longer optional. Frameworks like ISO/IEC 42001 AI Management System Standard and regulations such as the EU AI Act are rapidly reshaping compliance expectations for organizations using AI.

DISC InfoSec brings deep expertise across AI, cybersecurity, and regulatory compliance to help you build trust, reduce risk, and stay ahead of evolving mandates—with a proven track record of success.

Ready to lead with confidence? Let’s start the conversation.

At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
