Anthropic has expanded access to its AI-driven security capability, Claude Security, moving it into a broader public beta for enterprise users. The solution is designed to help organizations identify vulnerabilities in their codebases and automatically generate remediation fixes, signaling a shift toward AI-assisted secure software development at scale.

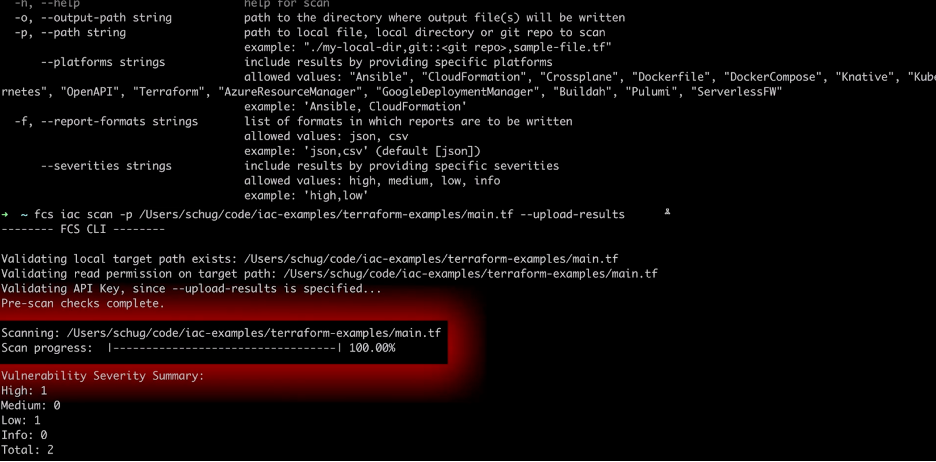

At its core, Claude Security applies advanced AI models to perform continuous code analysis, enabling faster detection of weaknesses that would traditionally require manual secure code review or static analysis tools. The automation of patch generation introduces a new paradigm where remediation is embedded directly into the development lifecycle rather than treated as a downstream activity.

The release comes at a time when AI is increasingly being used by both defenders and attackers. Anthropic positions Claude Security as a defensive countermeasure to the growing risk of AI-powered exploitation, emphasizing that traditional security approaches may not scale effectively against AI-driven threats.

Importantly, the rollout is initially targeted at enterprise environments, suggesting a controlled adoption strategy. By limiting access to organizations with mature security programs, Anthropic appears to be mitigating risks associated with misuse while gathering operational feedback to refine the platform.

The broader context is critical: Anthropic has recently faced scrutiny over internal security lapses, including accidental exposure of large volumes of source code. These incidents highlight the inherent tension between building advanced AI systems and maintaining robust internal security hygiene.

Additionally, emerging frontier models, including Anthropic’s most advanced systems, have demonstrated the capability to uncover vulnerabilities at scale across major platforms, raising dual-use concerns. The same technology that strengthens defense could also accelerate offensive cyber capabilities if misused.

Overall, Claude Security reflects a broader industry trend: embedding AI directly into cybersecurity operations. It represents a move toward autonomous or semi-autonomous security tooling that augments human analysts, reduces remediation time, and integrates security deeper into DevSecOps pipelines.

Professional Perspective (InfoSec & AI Governance)

From an InfoSec and AI Governance standpoint, this is both inevitable and risky.

First, this validates what many of us have been anticipating: AI-native AppSec is becoming the new baseline. SAST/DAST tools and manual code reviews will increasingly be supplemented, or replaced, by AI systems capable of contextual reasoning and automated remediation. This will compress vulnerability management cycles dramatically.

However, governance is lagging behind capability. Tools like Claude Security introduce several non-trivial risks:

- Model trust & explainability: Can you audit why a fix was generated?

- Secure SDLC integrity: Are AI-generated patches introducing hidden logic flaws?

- Data exposure risk: What code or IP is being processed by external AI systems?

- Supply chain implications: AI becomes part of your software assurance pipeline—expanding your attack surface.
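One way to act on these risks is a pre-merge gate that treats every AI-generated patch as untrusted input. The sketch below is illustrative only: the `AIPatch` fields, the `SENSITIVE_PATHS` patterns, and the two-approver rule are assumptions made for this example, not part of Claude Security or any vendor API. It covers explainability (a recorded rationale), SDLC integrity (tests plus human review), and supply chain provenance (a hash of the diff and the tool identifier in the audit record). It does not address data exposure, which depends on what code leaves your environment in the first place.

```python
import hashlib
from dataclasses import dataclass, field
from fnmatch import fnmatch

# Hypothetical policy values; tune these to your own environment.
SENSITIVE_PATHS = ["auth/*", "crypto/*", "*.pem", ".github/workflows/*"]


@dataclass
class AIPatch:
    """An AI-generated fix awaiting merge (all fields are illustrative)."""
    diff: str                 # unified diff text proposed by the tool
    files: list[str]          # paths the diff touches
    rationale: str            # the tool's explanation of the fix
    tool: str                 # identifier of the tool or model that produced it
    human_approvers: list[str] = field(default_factory=list)
    tests_passed: bool = False


def evaluate_patch(patch: AIPatch) -> dict:
    """Return an audit record with a merge decision and the reasons behind it."""
    reasons = []

    # Model trust and explainability: refuse fixes with no recorded rationale.
    if not patch.rationale.strip():
        reasons.append("missing rationale")

    # Secure SDLC integrity: require passing tests and at least one human reviewer.
    if not patch.tests_passed:
        reasons.append("tests not passed")
    if not patch.human_approvers:
        reasons.append("no human approval")

    # Changes to sensitive paths need a second reviewer.
    sensitive = [f for f in patch.files
                 if any(fnmatch(f, pat) for pat in SENSITIVE_PATHS)]
    if sensitive and len(patch.human_approvers) < 2:
        reasons.append("sensitive paths need two approvers")

    # Supply chain provenance: hash the diff and record which tool produced it.
    return {
        "diff_sha256": hashlib.sha256(patch.diff.encode("utf-8")).hexdigest(),
        "tool": patch.tool,
        "sensitive_files": sensitive,
        "approved": not reasons,
        "reasons": reasons,
    }
```

Storing the returned record alongside the merge gives auditors a trail of what was proposed, by which tool, and who approved it. In practice the gate would run in CI and read approvals from the code review system rather than from a hand-built object.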

There’s also a strategic concern: defensive AI is racing against offensive AI. If models can autonomously find and fix vulnerabilities, they can also be repurposed to find and exploit them at scale. This reinforces the need for controlled access, monitoring, and policy enforcement, anchored in AI governance frameworks such as ISO 42001 and the NIST AI RMF.

My bottom line:

This is a major leap forward for DevSecOps efficiency, but without strong governance, it can quickly become a high-speed risk amplifier. Organizations adopting such tools should treat them as critical security infrastructure, not just developer productivity enhancers.

DISC InfoSec is an active ISO 42001 implementer and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations.
