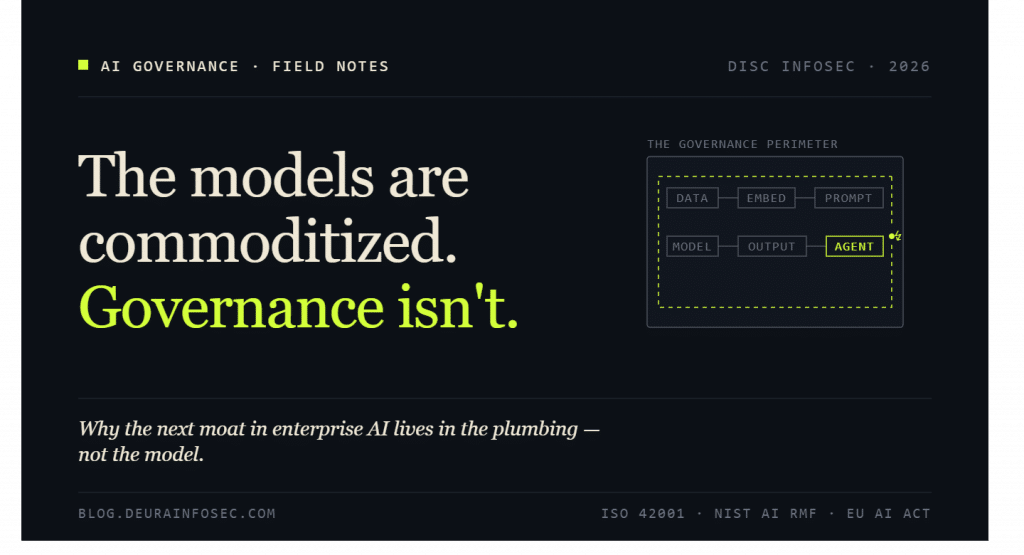

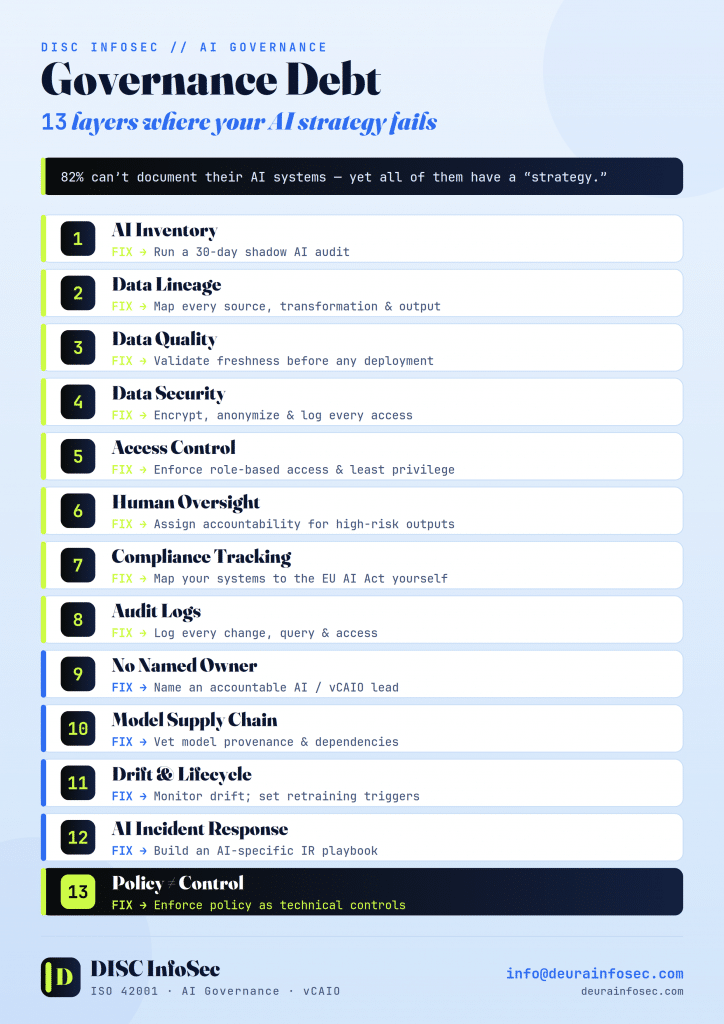

Governance Debt: The 13 Layers Where Your AI Strategy Is Quietly Failing

More than 80% of companies haven’t documented their AI systems.

But every one of them has an “AI strategy.”

That gap has a name. Governance debt. And like technical debt, it doesn’t announce itself. It accrues silently — in the model nobody inventoried, the training data nobody traced, the third-party tool a team adopted over a long lunch. It compounds quietly until an auditor, a regulator, or an incident forces a reckoning. By then the interest is due in full.

Here’s the part executives miss: governance debt isn’t a compliance problem. It’s not a security problem either. It shows up at every layer where AI touches the business, 13 of them, and most leadership teams are exposed at all 13 without knowing it.

The reassuring lie is “someone is handling it.” Usually no one is. Below are the mistakes executives actually make, the fix for each, and then the harder question: how you move from patching debt to running a governance program that matures.

The Eight Layers

1. AI Inventory. Mistake: No one knows which tools exist. Marketing has three copilots, engineering wired an LLM into the build pipeline, and finance is pasting forecasts into a chatbot. None of it is on a list anywhere. Fix: Run a 30-day shadow AI audit. Discovery before policy. You cannot govern what you cannot see, and the inventory is the spine every other control hangs from.
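To make "discovery before policy" concrete, here is a minimal sketch of the comparison a shadow AI audit boils down to: what you found versus what is on the list. The `AITool` record, the tool names, and the idea that discovery results arrive as a simple list are all assumptions for illustration, not a prescribed format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AITool:
    name: str
    team: str
    owner: Optional[str] = None  # None means nobody has claimed accountability


def find_ungoverned(discovered, inventory):
    """Return discovered tools that are missing from the inventory or have no owner."""
    return [t for t in discovered if t.name not in inventory or t.owner is None]


# Hypothetical discovery results (e.g. from expense reports, SSO logs, a survey).
discovered = [
    AITool("marketing-copilot", "marketing", owner="j.doe"),
    AITool("build-pipeline-llm", "engineering"),
    AITool("forecast-chatbot", "finance"),
]
inventory = {"marketing-copilot"}  # names already on the official list

ungoverned = find_ungoverned(discovered, inventory)
for tool in ungoverned:
    print(f"{tool.team}: {tool.name} is not governed")
```

The point of the sketch is the shape of the output: a short, named list of gaps that someone can be assigned to close.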

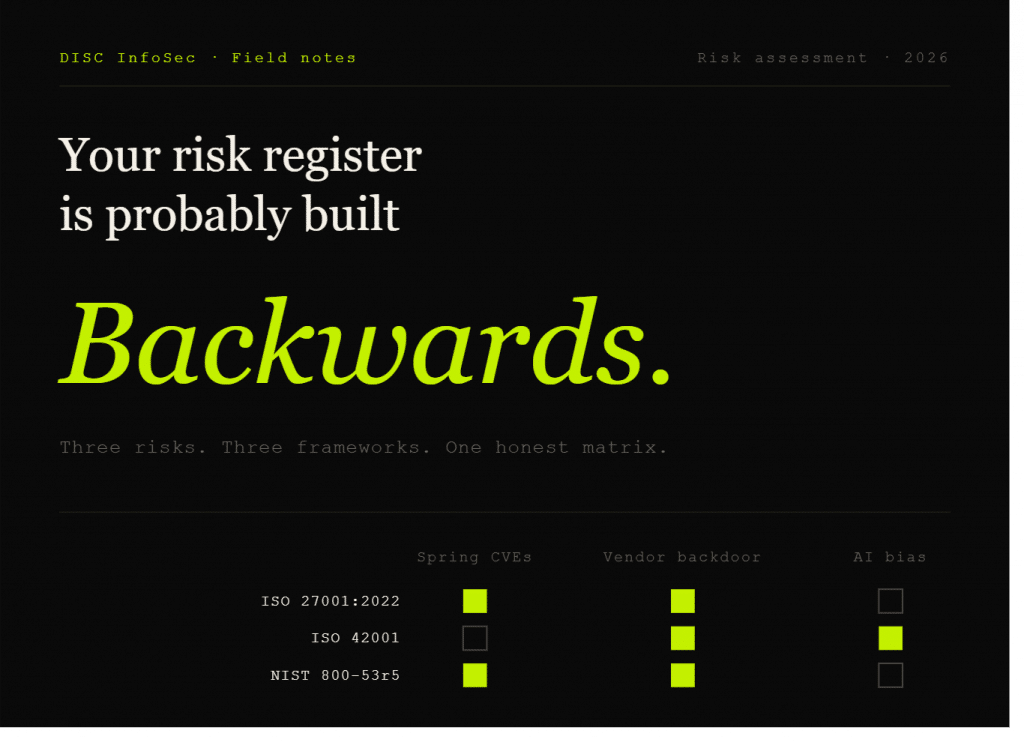

2. Data Lineage. Mistake: Training and input data sources are completely untraceable. When a regulator or customer asks “what was this model trained on,” the honest answer is a shrug. Fix: Map every source, transformation, and output. Lineage is what turns “we think it’s fine” into “here is the evidence.” ISO 42001 and the NIST AI RMF both assume you can produce it.
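One way to picture lineage is as a graph you can walk backwards from a model to its raw sources. The sketch below assumes lineage is stored as a plain dictionary mapping each artifact to its direct inputs; the artifact names are hypothetical, and a real program would more likely pull this from a catalog or pipeline metadata.

```python
# Hypothetical lineage map: each artifact points at its direct inputs.
LINEAGE = {
    "churn-model-v3": ["features.parquet"],
    "features.parquet": ["crm_export.csv", "billing_db.invoices"],
    "crm_export.csv": [],
    "billing_db.invoices": [],
}


def upstream_sources(artifact, graph):
    """Return every root source (a node with no recorded inputs) behind an artifact."""
    seen, roots, stack = set(), set(), [artifact]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        parents = graph.get(node, [])
        if not parents:
            roots.add(node)  # nothing upstream: this is where the data entered
        stack.extend(parents)
    return sorted(roots)


sources = upstream_sources("churn-model-v3", LINEAGE)
print(sources)
```

With a map like this, “what was this model trained on” becomes a lookup rather than an investigation.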

3. Data Quality. Mistake: No validation. The model is confidently wrong, and confidence reads as competence to everyone downstream. Fix: Set freshness and quality checks before deployment, not after a bad decision ships. Stale or skewed data is a silent failure mode that no amount of model tuning fixes.
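A pre-deployment gate for freshness and quality can be very small. The following is a sketch under assumed thresholds (seven days of staleness, 5% of rows with missing values); the function name, the thresholds, and the sample rows are illustrative, not recommendations.

```python
from datetime import datetime, timedelta, timezone


def check_dataset(rows, last_updated, now,
                  max_age=timedelta(days=7), max_null_rate=0.05):
    """Return a list of failure messages; an empty list means the gate passes."""
    failures = []
    if now - last_updated > max_age:
        failures.append("stale: data is older than max_age")
    if not rows:
        failures.append("empty: no rows to validate")
        return failures
    # Count rows where any field is missing.
    null_rows = sum(1 for r in rows if any(v is None for v in r.values()))
    null_rate = null_rows / len(rows)
    if null_rate > max_null_rate:
        failures.append(f"quality: {null_rate:.0%} of rows have missing values")
    return failures


now = datetime(2025, 1, 15, tzinfo=timezone.utc)
rows = [{"revenue": 100.0}, {"revenue": None}, {"revenue": 90.0}, {"revenue": 110.0}]
failures = check_dataset(rows, last_updated=datetime(2025, 1, 1, tzinfo=timezone.utc), now=now)
for f in failures:
    print(f)
```

In this example both checks fail, which is exactly the case where deployment should stop before a bad decision ships.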

4. Data Security. Mistake: Sensitive data leaks into third-party tools. Once it crosses that boundary, you may never get it back, and it may live in someone else’s training set permanently. Fix: Encrypt, anonymize, and log every access. Treat prompts and uploads as data egress, because that’s exactly what they are.

5. Access Control. Mistake: Everyone has admin. Nobody should. Fix: Enforce role-based access and least privilege. The blast radius of a compromised account is defined entirely by what that account was allowed to touch.
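Role-based access with least privilege comes down to a deny-by-default lookup. This sketch assumes three hypothetical roles and a handful of made-up permission strings; the structure, not the names, is what matters.

```python
# Hypothetical role-to-permission map; anything not listed is denied.
ROLE_PERMISSIONS = {
    "viewer": {"model:query"},
    "ml_engineer": {"model:query", "model:deploy"},
    "admin": {"model:query", "model:deploy", "model:delete", "role:assign"},
}


def is_allowed(role, action):
    """Deny by default: unknown roles and unlisted actions are rejected."""
    return action in ROLE_PERMISSIONS.get(role, set())


def blast_radius(role):
    """Everything a compromised account with this role could touch."""
    return sorted(ROLE_PERMISSIONS.get(role, set()))


print(is_allowed("viewer", "model:deploy"))
print(blast_radius("viewer"))
```

Listing the blast radius per role is a quick way to see why “everyone has admin” is the expensive default.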

6. Human Oversight. Mistake: AI runs on autopilot. Nobody reviews the high-stakes outputs, and “the system decided” quietly becomes the org’s risk-acceptance policy by default. Fix: Assign named accountability and validate high-risk outputs. Oversight is a role, not a vibe.

7. Compliance Tracking. Mistake: “Our vendor is compliant” is treated as if it were your compliance. It isn’t. Their certificate doesn’t transfer to your obligations. Fix: Map your systems yourself to the EU AI Act and to whatever else applies to you (Colorado AI Act, sector rules). Vendor attestations are an input to your assessment, not a substitute for it.

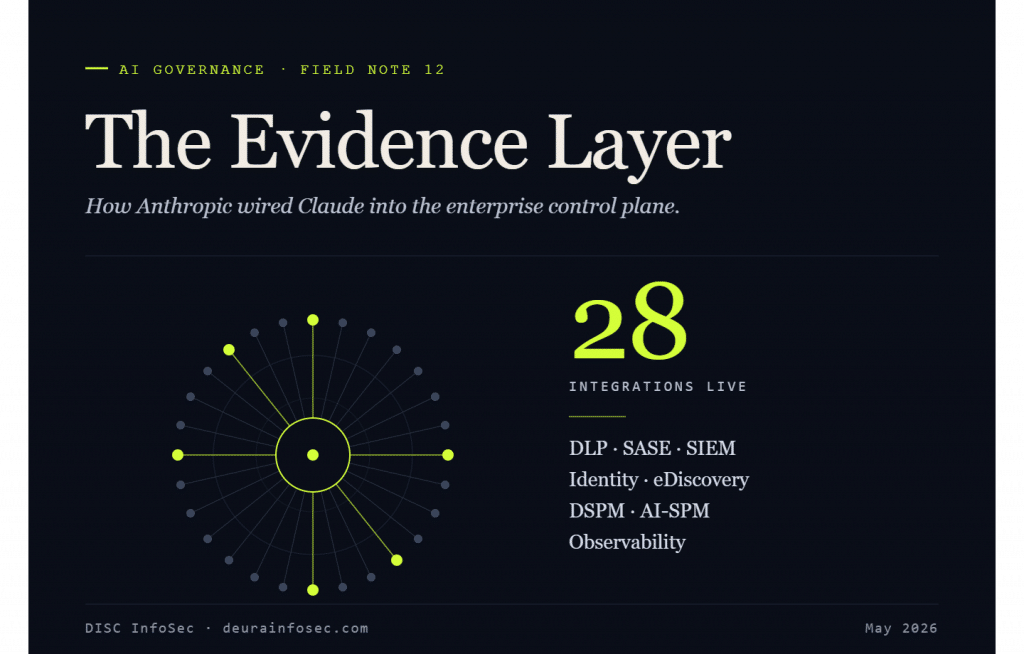

8. Audit Logs. Mistake: An auditor asks a question and you scramble. Evidence is reconstructed under deadline pressure, which is the same as not having it. Fix: Log every change, query, and access as a byproduct of normal operation. Audit-readiness should be a query, not a fire drill.
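“A byproduct of normal operation” can be taken literally: wrap the operations so that calling them writes the record. The sketch below uses an in-memory list as a stand-in for an append-only store, and the function names (`audited`, `query_model`, `update_config`, `who_did`) are hypothetical.

```python
import functools
import json
from datetime import datetime, timezone

AUDIT_LOG = []  # stand-in for an append-only, tamper-evident store


def audited(action):
    """Decorator that records who attempted which action, before it runs."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(user, *args, **kwargs):
            AUDIT_LOG.append({
                "ts": datetime.now(timezone.utc).isoformat(),
                "user": user,
                "action": action,
                "args": json.dumps(args, default=str),
            })
            return func(user, *args, **kwargs)
        return wrapper
    return decorator


@audited("model.query")
def query_model(user, prompt):
    return f"(response to {prompt!r})"  # placeholder for a real model call


@audited("model.update_config")
def update_config(user, key, value):
    return {key: value}


def who_did(action):
    """The audit question, answered as a query over the log."""
    return [entry["user"] for entry in AUDIT_LOG if entry["action"] == action]


query_model("alice", "summarize Q3 forecast")
update_config("bob", "temperature", 0.2)
print(who_did("model.update_config"))
```

Nobody had to remember to log anything; the evidence exists because the work happened.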

Four More Mistakes Executives Make

The eight layers cover the surface. These are the ones I see sink programs that thought they had the basics handled.

9. No Named Owner. AI governance gets distributed across legal, security, data, and IT — which means it belongs to no one. Distributed accountability is the absence of accountability. Fix: Name an owner with real authority — an AI governance lead, a vCAIO, or a chartered committee with a budget. If no single person can be fired for getting it wrong, it isn’t governed.

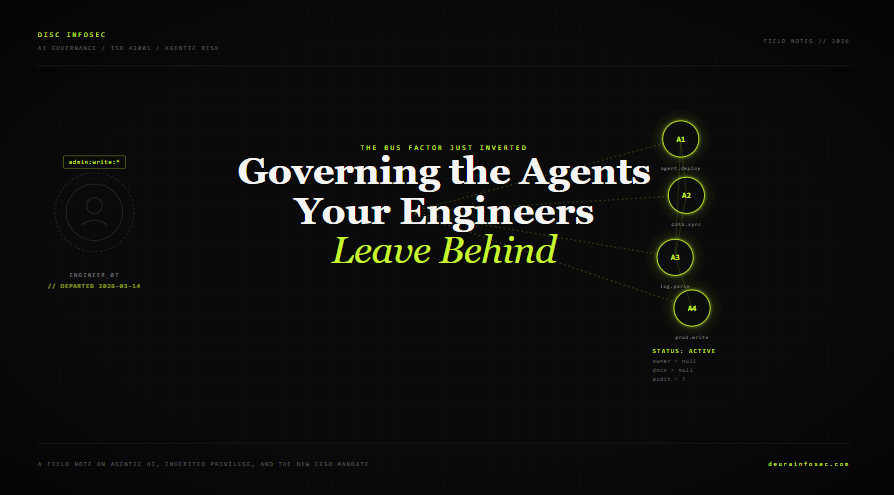

10. Ignoring the Model Supply Chain. Executives obsess over the models they build and ignore the ones they import. Open-weight models, fine-tuned checkpoints, and AI-laden SaaS features arrive with poisoned weights, unvetted dependencies, and license terms nobody read. Fix: Extend third-party risk management to AI components. Vet model provenance the way you’d vet a software dependency — because it is one.

11. No Drift or Lifecycle Monitoring. A model passes review on day one and is assumed safe forever. But data shifts, the world moves, and accuracy quietly decays while everyone trusts the original sign-off. Fix: Monitor for drift continuously, set retraining triggers, and govern models across their full lifecycle — development, validation, deployment, decommission. Approval is a checkpoint, not a permanent license.
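As one illustration of a drift check with a retraining trigger, the sketch below computes a Population Stability Index (PSI) between a baseline sample and current data. PSI is one common drift measure among several, not the only option; the 0.25 threshold is a widely quoted rule of thumb and is treated here as an assumption, as are the sample values.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a current sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


RETRAIN_THRESHOLD = 0.25  # assumed trigger; tune per model and risk appetite

baseline = [i / 100 for i in range(100)]       # values seen at sign-off
current = [0.5 + i / 200 for i in range(100)]  # today's inputs, shifted upward

score = psi(baseline, current)
needs_retraining = score > RETRAIN_THRESHOLD
print(f"PSI={score:.2f}, retrain={needs_retraining}")
```

Run on a schedule, a check like this turns the original sign-off into a checkpoint that has to keep being earned.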

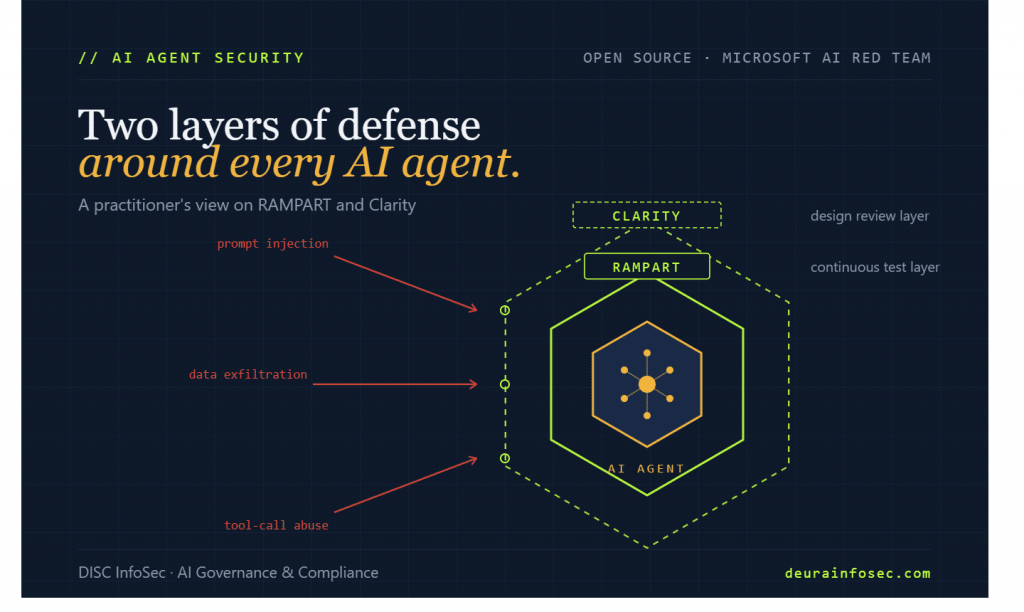

12. No AI Incident Response Plan. Organizations have IR playbooks for ransomware and zero-days, and nothing for a hallucinated legal answer, a prompt-injection exfiltration, or a biased decision at scale. When it happens, they improvise, badly and publicly. Fix: Build AI-specific incident response: defined harm categories, escalation paths, a kill switch, and post-incident review. Decide who can pull a model out of production before you need to.

13. Mistaking a Policy Document for a Control. This is the most seductive failure, so I’ll name it as a bonus. A beautifully written AI policy sitting in a SharePoint folder governs nothing. The gap between what the policy says and what the system actually does is invisible until someone exploits it. Fix: Translate policy into enforced technical controls — approved-model registries, usage guardrails, automated checks in the pipeline. A rule that the infrastructure honors by default beats a rule that lives in a PDF.
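Here is roughly what “a rule that the infrastructure honors by default” can look like: an approved-model registry that the deploy path checks on every call. The registry contents, the `PolicyViolation` exception, and the `deploy` function are hypothetical; in practice the same check might live in a CI step or an admission controller.

```python
# Hypothetical registry of (model name, version) pairs that passed review.
APPROVED_MODELS = {
    ("internal-summarizer", "1.4.0"),
    ("vendor-chat", "2025-01"),
}


class PolicyViolation(Exception):
    """Raised when a deployment would break the written policy."""


def enforce_approved_model(name, version):
    if (name, version) not in APPROVED_MODELS:
        raise PolicyViolation(f"{name}@{version} is not in the approved-model registry")


def deploy(name, version):
    enforce_approved_model(name, version)  # the gate runs on every deploy
    return f"deployed {name}@{version}"


print(deploy("internal-summarizer", "1.4.0"))
try:
    deploy("shadow-model", "0.1")
except PolicyViolation as err:
    print(f"blocked: {err}")
```

The policy sentence and the check say the same thing; only one of them can stop a deployment.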

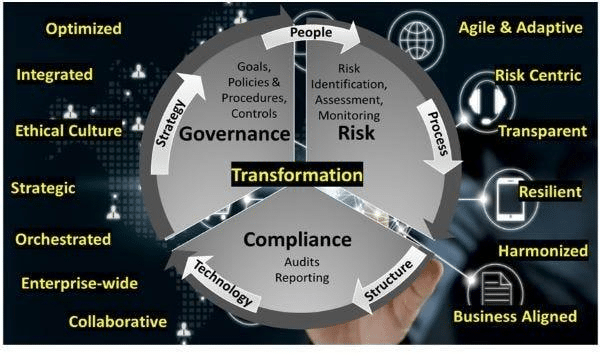

My Perspective: Maturing AI Governance to the Next Level

Fixing the thirteen mistakes above gets you out of debt. It does not give you a mature program. The difference matters, because most organizations stop the moment the immediate pain subsides — and the debt simply starts accruing again.

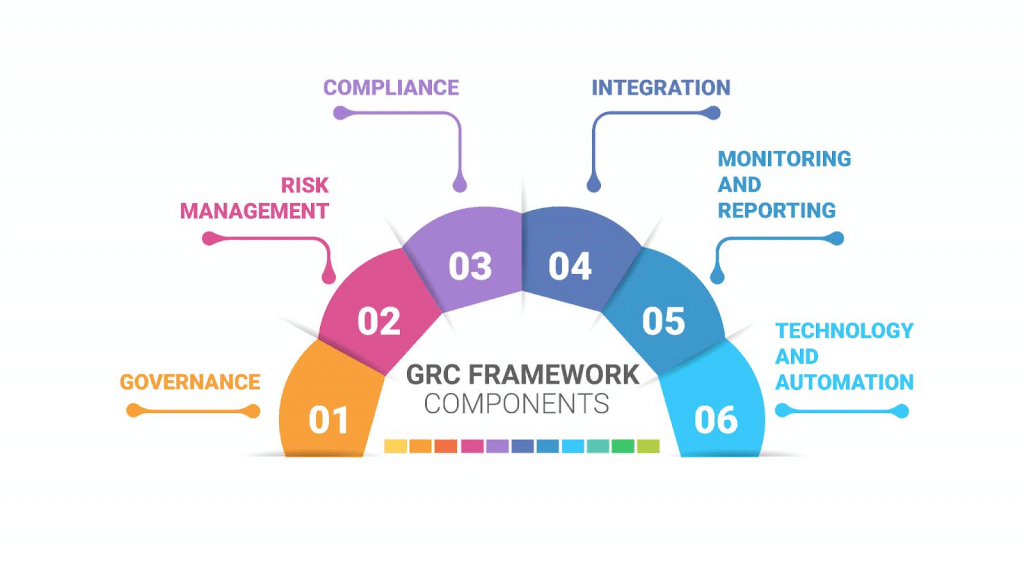

Maturity is a trajectory, and it’s worth being honest about where you are on it. I think about it in five stages, borrowed from the CMMI tradition:

- Level 1 — Ad hoc. Governance happens when something breaks. The shadow AI audit you just ran lives here.

- Level 2 — Documented. Policies exist, an inventory exists, owners are named. This is where the eight layers, done well, land you. It feels like the destination. It’s the starting line.

- Level 3 — Defined. Controls are standardized and repeatable across teams. The same process governs the marketing copilot and the production model. ISO 42001 expects you to operate here.

- Level 4 — Measured. You instrument governance. Continuous control monitoring, live risk telemetry, drift detection feeding dashboards. Evidence is a byproduct, not a project.

- Level 5 — Optimizing. Governance is wired into how you build — versioned, tested, and shipped like any other part of the system. Compliance is a condition of deployment, not a gate at the end.

The leap that separates the mature from the merely compliant is the move from documentation to enforcement — from governance you write down to governance you build. That’s the same shift the cloud forced on the rest of security a decade ago, and AI is forcing it now, faster.

It also changes who the governance professional is. The role used to be custodial: keep the documents, collect the evidence, survive the audit. The next-level program needs something different — a governance engineer who is bilingual, fluent in the language of ISO 42001 clauses and the language of access controls, pipelines, and policy-as-code. The people who can translate a regulatory requirement into an enforced technical control, and explain that control back to an auditor, are the ones who will matter.

One caution, because automation is seductive enough that it is easy to mistake instrumentation for governance: a control can enforce a policy flawlessly and the policy can still be wrong. A dashboard can show green for a control that addresses the wrong risk. The irreducible human work (deciding what matters, accepting or rejecting risk, anticipating novel AI harms no rule yet covers) doesn’t disappear as you mature. It becomes the whole job. Everything else is plumbing.

Governance debt is real, it compounds, and “someone is handling it” is not a strategy. But the goal isn’t to pay the debt down to zero and stop. It’s to build a program that generates assurance continuously — so that the next time an auditor, a regulator, or an incident comes asking, the answer is already a query away.

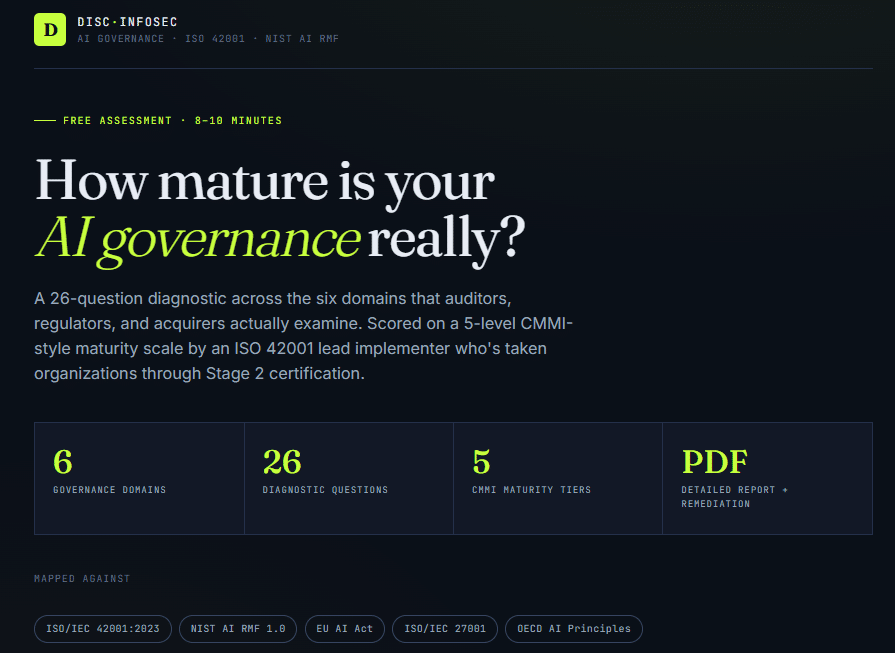

DISC InfoSec helps B2B SaaS and financial services organizations build defensible AI governance — from a 10-day Quick-Start to full ISO 42001 implementation and vCAIO advisory. As an active ISO 42001 implementer and PECB Authorized Training Partner, we’ve stood this up through a live Stage 2 audit, not just on paper.

Find your starting point with our AI Governance Maturity Assessment, or schedule a consultation: calendly.com/hd-deurainfosec

The AI Governance Quick-Start: Defensible in 10 Days, Not 4 Quarters

DISC InfoSec is an active ISO 42001 implementer and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations.

AI Vulnerability Scorecard: Discover Your AI Attack Surface Before Attackers Do

Your Shadow AI Problem Has a Name-And Now It Has a Score

Most AI Security Tools Won’t Pass an Audit. Here’s a 15-Minute Way to Find Out.

InfoSec services | InfoSec books | Follow our blog | DISC llc is listed on The vCISO Directory | ISO 27k Chat bot | Comprehensive vCISO Services | ISMS Services | AIMS Services | Security Risk Assessment Services | Mergers and Acquisition Security

- Your AI Strategy Has a Debt Problem. Here Are the 13 Places It’s Hiding.

- GRC at Machine Speed: Four Anchors Reshaping Governance in the Cloud and AI Era

- AI Can Pentest Your Network Now. That’s Not the Risk You Should Worry About

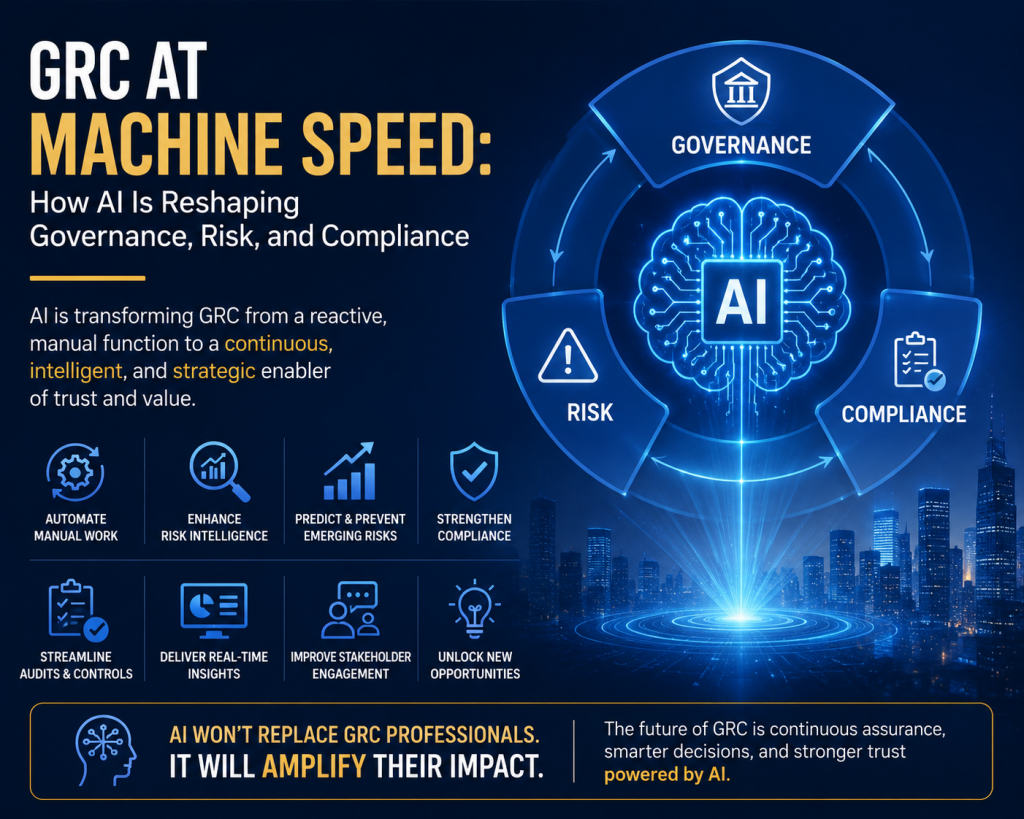

- GRC at Machine Speed: How AI Is Reshaping Governance, Risk, and Compliance

- Building AI Governance That Actually Works: From Ethics to the Exam Room