When the Most Safety-Focused AI Company Misses the Basics: A Governance Wake-Up Call

In the span of a single week, Anthropic — arguably the most safety-conscious AI company in the industry — experienced two back-to-back operational governance failures. Neither was a sophisticated breach. The first involved draft materials for an unreleased model (now public as “Claude Mythos Preview”) sitting in a publicly accessible data store, readable by anyone with the URL. The second was a build configuration that shipped a source map for Claude.ai, exposing the internal module structure and subsystem names of a flagship consumer AI product. Different systems, different mechanisms, same company, same week.

What makes this more revealing is what’s happening on the offensive research side. CISOs running Claude Mythos Preview against their own codebases report that the model surfaces real vulnerabilities, but the patches it generates remain weak and still require human refinement before shipping. AI demonstrates strength on the discovery side; disciplined human process still owns the remediation side. That asymmetry matters for anyone trying to operationalize AI in DevSecOps.

The deeper lesson isn’t about a clever Advanced Persistent Threat. It’s about a Basic Persistent Failure — twice — at one of the most disciplined AI shops in the world. Anthropic publishes ongoing safety research. Their CISO has been openly building toward nation-state-level internal defenses. The intent and investment are real. And yet the boring fundamentals — what files get bundled into a release, what’s exposed at a public URL — slipped through. If the basics can fail there, they can fail anywhere downstream.
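To make "what files get bundled into a release" concrete, here is a minimal sketch of a pre-publish gate that a pipeline could run against its build output. It is an illustration, not a description of Anthropic's pipeline: the function name `find_release_leaks`, the blocked-suffix policy, and the sample file names are all assumptions. The only convention it relies on is the `sourceMappingURL=` comment that JavaScript bundlers append when a source map is referenced.

```python
import tempfile
from pathlib import Path

# Hypothetical policy: file suffixes that should never ship in a public bundle.
BLOCKED_SUFFIXES = {".map"}
# Comment marker that bundlers append to JavaScript when a source map is referenced.
SOURCE_MAP_MARKER = "sourceMappingURL="


def find_release_leaks(dist_dir: Path) -> list[str]:
    """Return one finding per file in dist_dir that should not be published."""
    findings = []
    for path in sorted(dist_dir.rglob("*")):
        if not path.is_file():
            continue
        rel = path.relative_to(dist_dir).as_posix()
        if path.suffix in BLOCKED_SUFFIXES:
            findings.append(f"blocked file type: {rel}")
        elif path.suffix == ".js":
            # A bundle can still point at a map even when the map itself is absent.
            text = path.read_text(encoding="utf-8", errors="ignore")
            if SOURCE_MAP_MARKER in text:
                findings.append(f"source map reference: {rel}")
    return findings


if __name__ == "__main__":
    # Build a throwaway "dist" directory so the sketch runs on its own.
    with tempfile.TemporaryDirectory() as tmp:
        dist = Path(tmp)
        (dist / "app.js").write_text("console.log(1);\n//# sourceMappingURL=app.js.map\n")
        (dist / "app.js.map").write_text("{}")
        (dist / "index.html").write_text("<html></html>")
        for finding in find_release_leaks(dist):
            print(finding)
```

A check like this earns its place only if the release job fails when the findings list is non-empty. A report that nobody reads is the same gap with extra steps.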

This is where most enterprise leaders need to recalibrate. You’re not building AI; you’re buying it — Copilot, ChatGPT Enterprise, AI features quietly bundled into the SaaS platforms your teams already use. You don’t control the underlying plumbing. You’re trusting the vendor’s pipeline, configuration management, and access controls to be sound. If Anthropic — with its resources, talent, and culture — can publish a source map by accident, the question becomes uncomfortable fast: what’s running inside the smaller AI vendors your teams are integrating with this quarter?

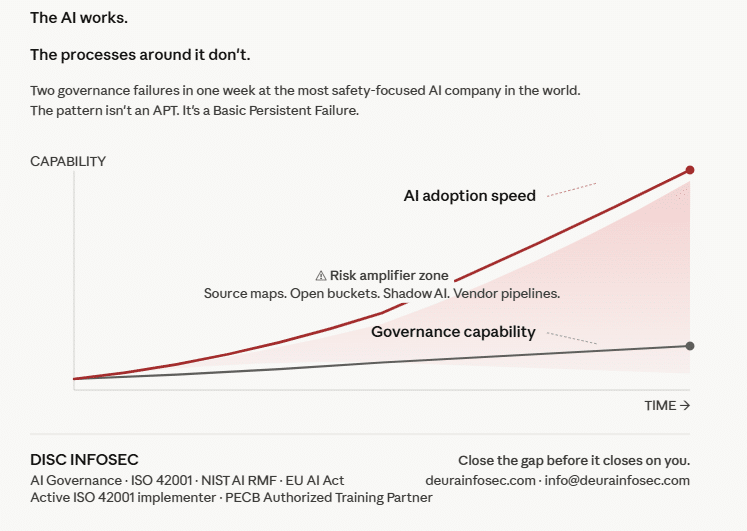

The pattern underneath all of this is a velocity-governance mismatch. Anthropic’s CEO has publicly stated that the majority of the company’s code is now written by Claude itself, with engineers shipping multiple releases per day. The capability is extraordinary; the operational discipline around it didn’t keep pace. Your organization has the same structural gap — not necessarily in software development, but in AI adoption. Employees connect AI assistants to production data. Departments procure AI-powered SaaS without IT or security review. Workflows are being built on AI tools that nobody in compliance knows exist.

There are concrete actions security and governance leaders can take this week. First, ask AI vendors what happens when their system crashes mid-task with your data in memory — if the answer isn’t clear, that’s a finding. Second, audit what AI tools are actually connected to your environment, not just what’s been formally approved; check OAuth integrations, API keys, browser extensions, and Finance’s payment records. Third, review default permissions on every deployed AI tool — most ship wide open to reduce onboarding friction, and if nobody tightened them, you’re operating with unlocked doors. Fourth, update the board-level question from “are we secure?” to “is our AI adoption speed outrunning our ability to govern what we’re adopting?” — and use the moment to make the case for budget and headcount.
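The second and third actions above (find what is actually connected, then check how wide its permissions are) can be sketched as a simple reconciliation. Everything named here is hypothetical: the `Integration` record, the `audit_ai_tools` function, the app names, and especially the scope strings in `BROAD_SCOPES`, which will differ by identity provider. In practice the discovered list would come from exports of OAuth grants, API key registries, browser-extension inventories, and payment records.

```python
from dataclasses import dataclass

# Hypothetical scope names treated as "wide open"; real names depend on the provider.
BROAD_SCOPES = frozenset({"files.read.all", "mail.read", "admin"})


@dataclass(frozen=True)
class Integration:
    app: str
    source: str  # where it was found: "oauth", "api_key", "extension", "payments"
    scopes: frozenset[str] = frozenset()


def audit_ai_tools(discovered: list[Integration], approved: set[str]) -> dict[str, list[str]]:
    """Compare what is connected against what was formally approved."""
    unapproved = sorted({i.app for i in discovered if i.app not in approved})
    broad = sorted({i.app for i in discovered if i.scopes & BROAD_SCOPES})
    return {"unapproved": unapproved, "broad_scopes": broad}


if __name__ == "__main__":
    # Illustrative data only; none of these apps or grants are real.
    found = [
        Integration("NoteTakerAI", "oauth", frozenset({"mail.read"})),
        Integration("ApprovedCopilot", "oauth", frozenset({"profile"})),
        Integration("SummarizeIt", "payments"),
    ]
    report = audit_ai_tools(found, approved={"ApprovedCopilot"})
    print(report["unapproved"])
    print(report["broad_scopes"])
```

Note that an approved tool can still land in the `broad_scopes` list. Approval and least privilege are separate questions, which is why the sketch reports them separately.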

There’s also a forward-looking signal worth attention. Independent researchers at AISLE have reproduced Mythos’s flagship vulnerability-discovery results using small, open-weights models — one of them running at roughly eleven cents per million tokens. The frontier capability is already commoditized; the real moat is the system around the model, not the model itself. Combine that with what Anthropic’s CISO told a private group of cybersecurity leaders — that within two years, shipping a vulnerability will mean immediate, not eventual, exploitation — and patch management programs built for a “weeks between discovery and attack” world are facing a structural redesign.

Professional Perspective (InfoSec & AI Governance)

From where I sit as an AI governance practitioner, this is the most useful incident pair the industry has had in months — precisely because nothing exotic happened. No zero-day. No nation-state. Just two misconfigurations at a company that takes AI safety more seriously than most. That’s the entire point. AI governance failures are rarely about the AI; they’re about the operational hygiene around the AI.

This is exactly why frameworks like ISO 42001 (AI Management Systems), NIST AI RMF, and the EU AI Act are not paperwork exercises. They force organizations to answer the unsexy questions that velocity-driven cultures consistently skip: Who owns this AI system? What data flows through it? What’s the change-management process when the model updates? What’s the incident response playbook when an AI vendor’s pipeline leaks? Anthropic’s week is a public, free case study in why those questions cannot be deferred.

If your organization is adopting AI faster than it is governing it (and the odds are that it is), three things should be on your desk this quarter: (1) an AI inventory and risk classification mapped against ISO 42001 Annex A controls, (2) a vendor AI assurance process that goes beyond a SOC 2 report and asks AI-specific operational questions, and (3) a board-level governance cadence that treats AI adoption velocity as a measurable risk indicator, not a productivity metric. The organizations that get this right won’t be the ones with the smartest models. They’ll be the ones whose process can keep up with what their models, and their vendors’ models, are doing on their behalf.
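As a rough illustration of items (1) and (3), the sketch below keeps a tiny AI inventory, assigns each entry a risk tier, and computes one candidate velocity indicator: the share of adopted AI systems that have never had a governance review. The tiering rules, field names, and the `ungoverned_share` metric are my own assumptions for illustration. They are not taken from ISO 42001, the NIST AI RMF, or the EU AI Act, and a real classification would be mapped to whichever framework you adopt.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AISystem:
    name: str
    owner: Optional[str]              # accountable person or team, if anyone
    handles_sensitive_data: bool
    adopted: date
    reviewed: Optional[date] = None   # date of last governance review, if any


def risk_tier(system: AISystem) -> str:
    """Hypothetical three-tier rule; replace with your framework's criteria."""
    unowned_or_unreviewed = system.owner is None or system.reviewed is None
    if system.handles_sensitive_data and unowned_or_unreviewed:
        return "high"
    if system.handles_sensitive_data or system.reviewed is None:
        return "medium"
    return "low"


def ungoverned_share(inventory: list[AISystem]) -> float:
    """Fraction of inventoried systems with no completed governance review."""
    if not inventory:
        return 0.0
    return sum(1 for s in inventory if s.reviewed is None) / len(inventory)


if __name__ == "__main__":
    # Illustrative entries only.
    inventory = [
        AISystem("CRM assistant", "Sales Ops", True, date(2025, 1, 10), date(2025, 2, 1)),
        AISystem("Meeting summarizer", None, True, date(2025, 3, 5)),
        AISystem("Code helper", "Engineering", False, date(2025, 4, 2)),
    ]
    for s in inventory:
        print(s.name, risk_tier(s))
    print(f"ungoverned share: {ungoverned_share(inventory):.0%}")
```

Tracked quarter over quarter, a rising ungoverned share is one way to show a board that adoption is outrunning review, without arguing about any single tool.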

The AI is working. The real question, for every CISO and every board, is whether the process around it can.

DISC InfoSec is an active ISO 42001 implementer (ShareVault / Pandesa Corporation) and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations. If you’re trying to close the velocity-governance gap before it closes on you, reach out at info@deurainfosec.com.

