AI Security Tool Evaluation: A Reality Check for CISOs

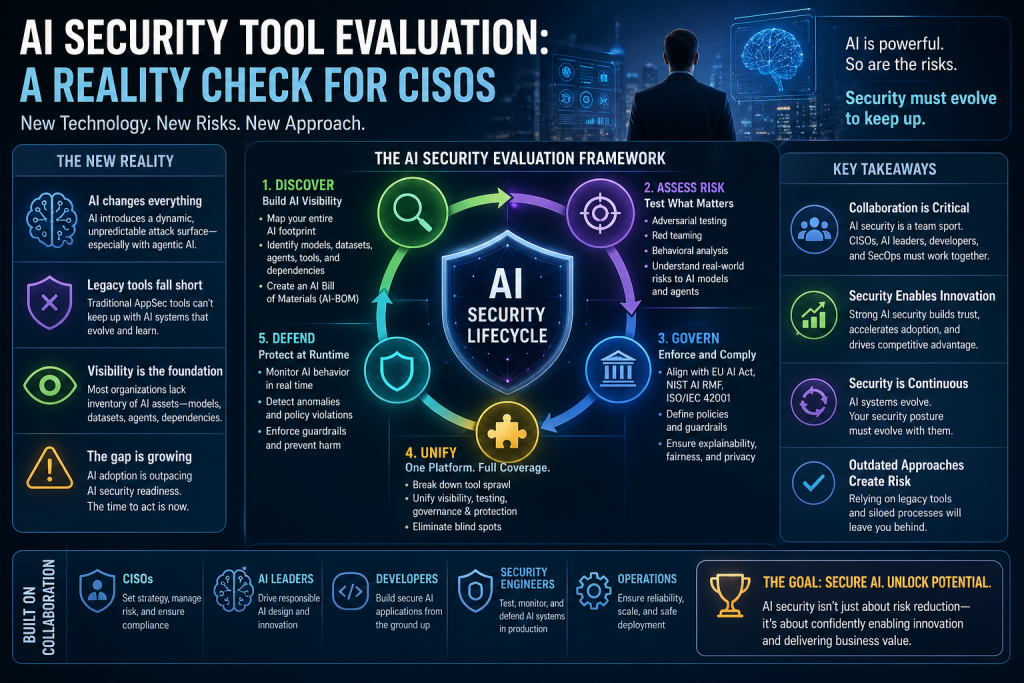

Artificial intelligence is fundamentally reshaping how applications are built, deployed, and attacked. Unlike traditional systems, AI introduces a dynamic and unpredictable attack surface—especially with the rise of agentic AI that can act autonomously. This shift demands a completely new approach to security evaluation.

Most organizations are still relying on legacy application security tools, which were designed for deterministic code. These tools struggle to keep up with AI systems that evolve, learn, and behave differently over time. As a result, CISOs are facing a widening gap between AI adoption and AI security readiness.

The core issue is visibility. Many organizations do not have a clear inventory of their AI assets—models, datasets, agents, and dependencies. Without this foundational understanding, it becomes nearly impossible to secure or govern AI effectively.

To address this, modern AI security evaluation must start with discovery. CISOs need tools that can map the entire AI footprint, including hidden dependencies and third-party integrations. This concept is often referred to as an AI Bill of Materials (AI-BOM), which provides a structured view of the AI supply chain.

Once visibility is established, the next step is risk assessment. AI systems require new testing approaches such as adversarial testing, red teaming, and behavioral analysis. Unlike traditional vulnerability scanning, these methods simulate real-world attacks against AI models and agents to uncover hidden risks.

Governance is another critical pillar. AI security tools must enable organizations to enforce policies aligned with emerging standards like the EU AI Act, NIST AI RMF, and ISO/IEC 42001. Security is no longer just about detection—it must include enforceable controls across the AI lifecycle.

A major shift highlighted in the framework is the need for unified platforms. Fragmented tools create blind spots and operational inefficiencies. Instead, organizations should prioritize integrated solutions that combine visibility, testing, governance, and runtime protection into a single system.

Runtime defense is becoming increasingly important where you may need AI Governance enforcement. AI agents can take actions in real time, interact with external systems, and trigger cascading effects. Security tools must monitor and control these behaviors dynamically, not just during development.

Another key insight is collaboration. AI security is no longer owned by a single team. CISOs, AI leaders, developers, and security engineers must work together to ensure safe adoption. This requires tools and processes that bridge gaps between governance, engineering, and operations.

Ultimately, the goal of AI security tool evaluation is not just to reduce risk but to enable innovation. Organizations that can securely adopt AI will move faster and gain competitive advantage, while those relying on outdated approaches will struggle to keep pace.

Perspective & Recommendations (from a GRC / vCISO lens)

Here’s the blunt truth: most AI security tool evaluations today are feature-driven, not risk-driven.

CISOs are still asking:

- “Does this tool scan prompts?”

- “Does it detect jailbreaks?”

But they should be asking:

- “Can this tool enforce governance?”

- “Can I prove compliance and control effectiveness?”

My perspective:

AI security is quickly becoming a governance problem disguised as a tooling problem.

If you don’t tie tools to:

- Risk scenarios

- Regulatory obligations

- Business impact

…you’re just buying expensive dashboards.

What I recommend (practical + actionable)

1. Start with AI Risk Scenarios, not tools

Define:

- Model misuse

- Data leakage

- Prompt injection

- Autonomous agent abuse

Then evaluate tools against these risks.

2. Demand “control enforcement,” not just detection

Most tools find issues. Few can:

- Block unsafe actions

- Enforce policies

- Provide audit evidence

That’s the gap regulators will focus on.

3. Align evaluation with frameworks early

Map tools to:

- NIST AI RMF

- ISO 42001

- EU AI Act

If a tool can’t map to controls, it won’t survive audit.

4. Prioritize AI asset inventory (non-negotiable)

If you don’t know:

- Where AI is used

- What models exist

- What data flows through them

You don’t have security—you have assumptions.

5. Test tools in real-world scenarios (not demos)

Run:

- Red team exercises

- Abuse cases

- Failure simulations

Because AI breaks in production, not in slide decks.

6. Avoid tool sprawl early

Pick platforms that:

- Integrate into SDLC

- Provide governance + security

- Support runtime controls

Otherwise, you’ll recreate the same AppSec mess.

Final Thought

AI security evaluation is evolving into AI governance maturity assessment.

The winners won’t be the companies with the most tools.

They’ll be the ones who can prove control, enforce policy, and demonstrate trust.

DISC InfoSec is an active ISO 42001 implementer and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations.

AI Vulnerability Scorecard: Discover Your AI Attack Surface Before Attackers Do

Your Shadow AI Problem Has a Name-And Now It Has a Score

Most AI Security Tools Won’t Pass an Audit. Here’s a 15-Minute Way to Find Out.

InfoSec services | InfoSec books | Follow our blog | DISC llc is listed on The vCISO Directory | ISO 27k Chat bot | Comprehensive vCISO Services | ISMS Services | AIMS Services | Security Risk Assessment Services | Mergers and Acquisition Security

- GRC at Machine Speed: Four Anchors Reshaping Governance in the Cloud and AI Era

- AI Can Pentest Your Network Now. That’s Not the Risk You Should Worry About

- GRC at Machine Speed: How AI Is Reshaping Governance, Risk, and Compliance

- Building AI Governance That Actually Works: From Ethics to the Exam Room

- Corporate Visibility as an Attack Surface: Managing Risk in the AI Era