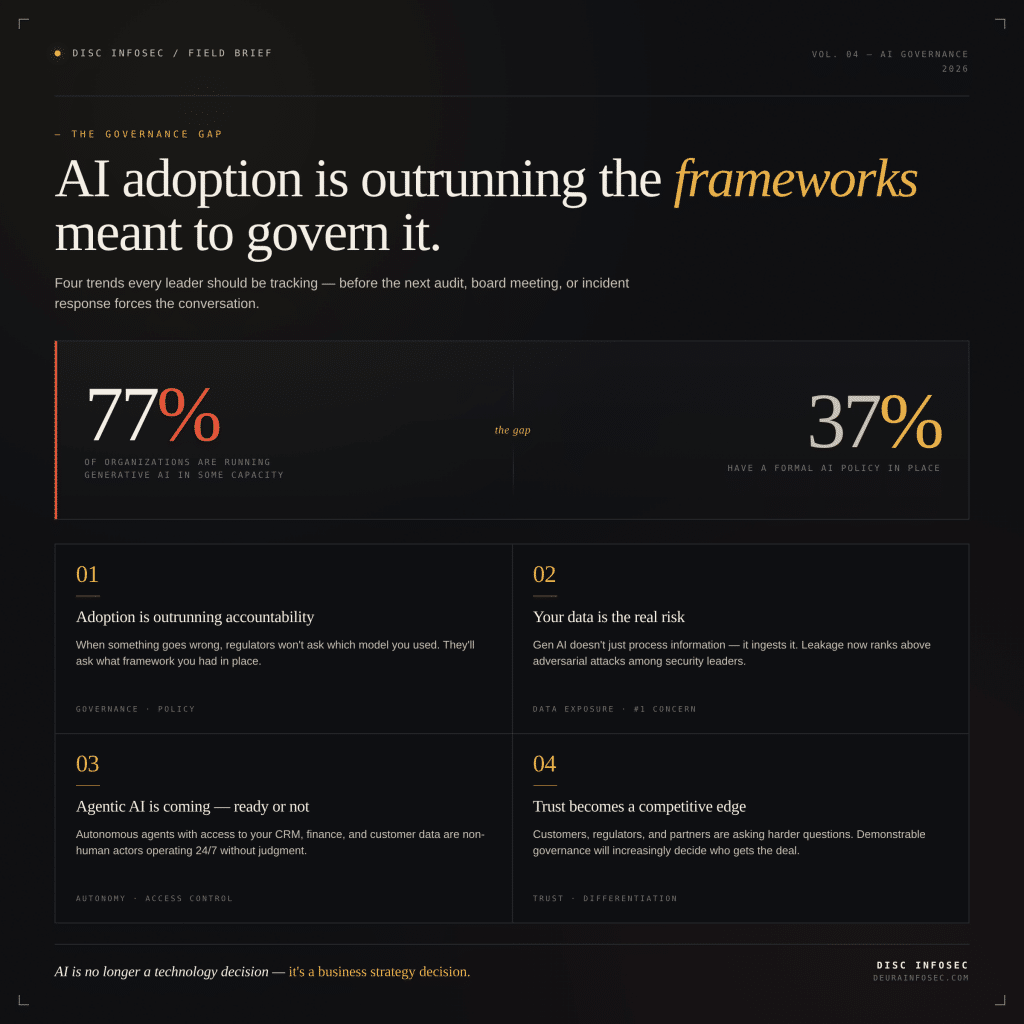

1. Adoption is outrunning accountability

Generative AI is now embedded in 77% of organizations, but only 37% have a formal AI policy guiding how it’s used. That delta isn’t a technology problem — it’s a governance failure waiting to surface. The first time something goes wrong, the absence of a documented framework becomes the story. Regulators, auditors, and boards won’t ask which model you used or how clever the prompt was; they’ll ask what policy, controls, and oversight were in place before the incident. If the answer is “none,” everything that follows gets harder.

2. Your data is the real risk

Generative AI doesn’t just process inputs — it absorbs them. Employees routinely paste customer records, financial data, and proprietary strategy into tools the organization never evaluated, never approved, and often doesn’t even know are in use. Data leakage through gen AI has overtaken adversarial attacks as the top concern among security leaders, and the reason is mundane: the exposure rarely looks like a breach. It looks like a single prompt typed by a well-meaning employee trying to move faster.
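To make the "single prompt" exposure concrete, here is a minimal sketch of a screening step that could sit between employees and an external gen AI tool. Everything in it is an assumption for illustration: the `screen_prompt` function, the pattern names, and the three regular expressions are hypothetical and far from comprehensive. A real control would rely on a proper DLP or data classification service.

```python
from __future__ import annotations

import re

# Illustrative patterns only (assumption): real coverage would be much broader.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def screen_prompt(prompt: str) -> tuple[str, list[str]]:
    """Mask anything matching a sensitive pattern and report which patterns fired."""
    findings: list[str] = []
    redacted = prompt
    for name, pattern in SENSITIVE_PATTERNS.items():
        # subn returns the rewritten text and the number of substitutions made.
        redacted, count = pattern.subn(f"[REDACTED:{name}]", redacted)
        if count:
            findings.append(name)
    return redacted, findings


if __name__ == "__main__":
    text, hits = screen_prompt("Summarize the account for jane.doe@example.com")
    print(text)
    print(hits)
```

The findings list matters as much as the redaction: it is the evidence that a control existed and fired, which is what an auditor will ask for.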

3. Agentic AI is coming — ready or not

Autonomous agents that can reason, take action, and connect to enterprise systems are moving out of pilot phase and into production environments. The capability is real, but the governance around it is largely absent. An agent with credentials into your CRM, finance stack, or customer data isn’t a productivity feature — it’s a non-human actor making decisions 24/7 with no judgment, no accountability layer, and often no audit trail. Most organizations haven’t defined who owns these agents, what they’re permitted to do, or how their actions get reviewed.
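The three missing pieces named above (an owner, a permitted scope, and a reviewable record) can be sketched in a few lines. This is a hedged illustration, not a reference design: `AgentPolicy`, `AgentGateway`, and action names such as `crm.read` are hypothetical, and a production system would persist the log and tie into existing identity and access management.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    owner: str  # the accountable human or team (hypothetical field)
    allowed_actions: frozenset[str]  # explicit allowlist; anything else is denied


@dataclass
class AgentGateway:
    policies: dict[str, AgentPolicy] = field(default_factory=dict)
    audit_log: list[dict] = field(default_factory=list)

    def register(self, policy: AgentPolicy) -> None:
        self.policies[policy.agent_id] = policy

    def authorize(self, agent_id: str, action: str) -> bool:
        """Deny by default, and record every decision, allowed or not."""
        policy = self.policies.get(agent_id)
        allowed = policy is not None and action in policy.allowed_actions
        self.audit_log.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "agent_id": agent_id,
                "owner": policy.owner if policy else None,
                "action": action,
                "allowed": allowed,
            }
        )
        return allowed


if __name__ == "__main__":
    gateway = AgentGateway()
    gateway.register(AgentPolicy("crm-bot", "sales-ops", frozenset({"crm.read"})))
    print(gateway.authorize("crm-bot", "crm.read"))
    print(gateway.authorize("crm-bot", "finance.transfer"))
```

Denials and unregistered agents are logged as well as approvals, since the attempts an agent was not allowed to make are often the most useful part of a review.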

4. Trust is becoming a competitive differentiator

Customers, regulators, and partners are no longer satisfied with vague assurances about “responsible AI.” They’re asking direct questions: how is AI used in your products, where does our data go, who governs the models, and can you prove it? Organizations that can answer with transparency, auditability, and a defensible governance program will win business and pass diligence. Those that can’t will be filtered out — quietly, but consistently — from the deals and partnerships that matter.

Perspective

The common thread across all four points is that the gap isn’t conceptual; it’s operational. Most leaders already understand AI carries risk. What they don’t have is a working AI management system (AIMS): defined ownership, documented policies, mapped controls, evidence of execution, and an audit trail that holds up under external scrutiny. That’s the entire premise behind standards like ISO/IEC 42001 and regulations like the EU AI Act: they push organizations from intent to implementation.

What I’d add is that the window for treating AI governance as optional is closing fast. Twelve months ago, “we’re still figuring it out” was a defensible answer. Today, with the Colorado AI Act 70 days away, regulators issuing guidance, customers writing AI clauses into MSAs, and insurers asking about AI controls during renewal, that answer starts to cost real money in lost deals, failed audits, and incidents that didn’t have to happen. The organizations that move now don’t just reduce risk; they convert governance into a sales asset. The ones that wait will spend the next two years catching up under pressure, which is the most expensive way to build anything.


