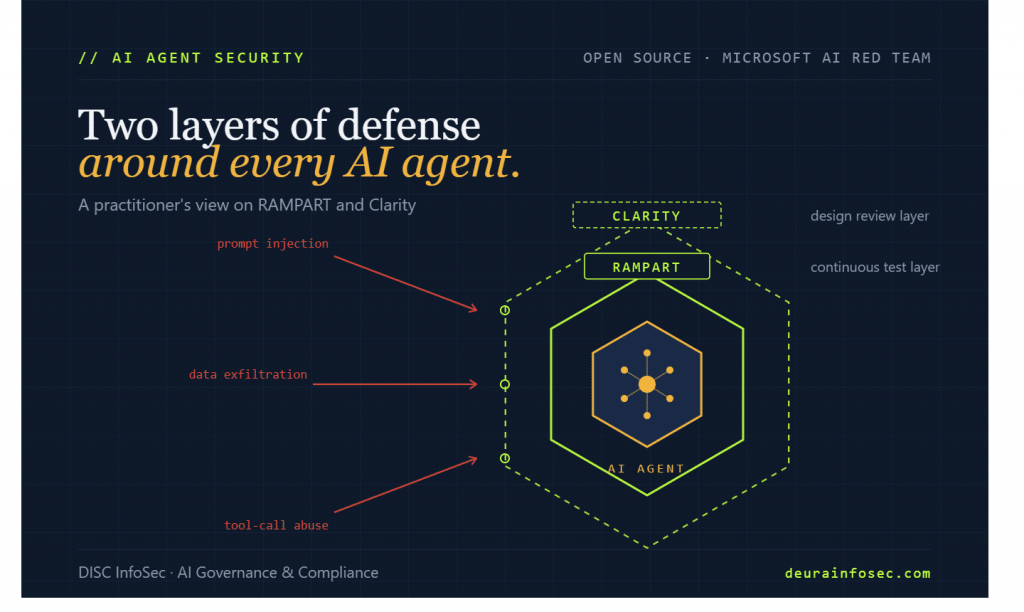

Microsoft Just Open-Sourced the Missing Piece of AI Agent Security: A Practitioner’s Take on RAMPART and Clarity

On May 20, Microsoft’s AI Red Team released two open-source tools that should be on every CISO’s and AI program owner’s reading list this week: RAMPART, a continuous testing framework for AI agents, and Clarity, a structured design-review tool. Both have been battle-tested inside Microsoft before being handed to the community, and together they begin to close one of the most uncomfortable gaps in enterprise AI today — the gap between “we shipped an agent” and “we shipped an agent that holds up under adversarial pressure and audit scrutiny.”

Coming from a practitioner who has spent the last two years implementing ISO 42001 in production environments, my honest reaction: finally. Let me explain why these tools matter, where they fit in a governance program, and where I think organizations will still get this wrong.

What Microsoft Actually Released

RAMPART is a test harness built on top of Microsoft’s existing PyRIT red-teaming library, designed to slot directly into a CI/CD pipeline. Developers write pytest-style tests describing adversarial scenarios — prompt injection, data exfiltration via tool calls, jailbreak attempts — and the framework runs them on every code change. Each test connects through a thin adapter, orchestrates an interaction with the agent, evaluates the outcome, and returns a clear pass/fail signal that can be gated in CI like any other integration test. Because AI systems are probabilistic, RAMPART supports running the same test multiple times and setting a pass threshold rather than demanding deterministic outcomes.

The real-world proof point Microsoft shared is telling: their incident response team took a reported vulnerability, used RAMPART to generate 100 variants of that vulnerability, applied mitigations, and validated each one — collapsing weeks of expert work into hours.

Clarity addresses a different and arguably more expensive failure mode: bad design decisions that become baked into the agent’s architecture. It guides engineers through structured conversations covering problem clarification, solution exploration, failure analysis, and decision tracking. Multiple AI “thinkers” independently examine the proposed system from different angles — security, human factors, adversarial scenarios, operational concerns — and surface the kinds of questions an experienced architect or safety engineer would ask. The output is committed to the repo as human-readable markdown in a .clarity-protocol/ directory, which means design decisions become reviewable artifacts rather than tribal knowledge.

Both tools are available on GitHub now.

Why This Matters for Security Discipline in Agent Development

Most AI agent failures I’ve seen in client environments don’t trace back to model behavior. They trace back to two earlier failures: nobody wrote down the threat model before the agent was built, and nobody set up continuous adversarial testing after it shipped. RAMPART and Clarity address exactly these two gaps — and they do it in a way that maps cleanly onto how engineering teams already work.

Shifting Agent Safety Left — Without Slowing Anyone Down

The defining problem with AI agent security today is that the testing usually happens in the wrong place at the wrong time. Pre-launch red team engagements are expensive, sporadic, and stale within a sprint. Post-incident reviews are valuable but, by definition, too late. RAMPART changes the economics by making adversarial tests behave like unit tests: cheap to run, repeatable, and enforceable through pull request gating. When a developer adds a new tool to the agent — say, the ability to query a customer database — the safety test for that new capability gets added in the same PR. This is what “secure SDLC” actually looks like for AI agents, and it’s something most internal AI programs have been describing in slide decks but failing to implement in code.

Making Design Decisions Auditable

Clarity is the more underrated of the two tools. ISO 42001, the NIST AI RMF, and the EU AI Act all require organizations to demonstrate that they considered foreseeable risks during system design — not just that they ran some tests at the end. Auditors increasingly ask: “Show me the design review record. Show me the failure modes you considered and the decisions you made.” In most organizations, that record doesn’t exist. It lives in someone’s head, a Slack thread, or a Jira ticket that got closed eight sprints ago. Clarity’s commitment to writing design decisions as markdown artifacts inside the code repo is genuinely useful for compliance evidence — it turns ephemeral architectural conversations into the kind of durable, reviewable record that an ISO 42001 internal auditor or an EU AI Act conformity assessment will ask for.

Closing the “Variant Problem” in AI Incident Response

The detail from Microsoft’s writeup that should grab every incident responder is the 100-variant test. When a real vulnerability is reported in a traditional system, you patch the specific exploit and move on. AI agents don’t work that way. The same underlying weakness can be triggered by hundreds of semantically equivalent prompts, and patching one doesn’t patch the others. RAMPART’s ability to generate variants of a reported vulnerability, test mitigations against all of them, and validate the fix is the kind of capability most enterprise security teams have been trying to build in-house with mixed results. Having Microsoft hand this over as open source — battle-tested against real incidents — meaningfully lowers the cost of doing AI incident response properly.

Where Organizations Will Still Get This Wrong

Tools don’t fix governance gaps. Tools amplify whatever discipline already exists. Three predictions about how RAMPART and Clarity get deployed:

1. Teams will adopt RAMPART without adopting a threat model. RAMPART runs the tests you write. If you only write tests for the prompt injection scenarios you happen to think of, you get a false sense of coverage. Organizations that haven’t done the upstream work of mapping their agent’s attack surface — tool calls, retrieval sources, prompt-completion logging, orchestration handoffs — will end up with a green CI pipeline and the same underlying risk.

2. Clarity will be treated as documentation, not governance. The whole point of structured design reviews is that decisions get challenged before they become technical debt. If Clarity outputs become files that nobody reads in code review, the tool fails. The discipline isn’t in running Clarity. It’s in treating its output as a gate.

3. Both tools will live inside the AI team, not the security organization. This is the failure mode I’ve written about repeatedly. AI agents touch sensitive data, call APIs, and make decisions on behalf of users — they are production systems with security blast radius. If RAMPART and Clarity sit only with the ML engineers and never get visibility from the security team, the org has automated the wrong half of the problem. ISO 42001 explicitly requires defined ownership of AI system risk; this is exactly the kind of shared responsibility these tools enable, if the org bothers to set it up.

My Perspective: This Is the Beginning, Not the End

Microsoft’s release is a meaningful contribution to the AI security commons, but it’s important to be clear-eyed about what it does and doesn’t solve. RAMPART and Clarity are excellent at what they do — adversarial testing in CI and structured design review with artifact output — and they bring genuine engineering rigor to two phases of the AI development lifecycle that have been governed mostly by good intentions.

What they don’t do is replace the broader governance program. An organization that runs RAMPART tests on every PR but has no data classification, no model change management policy, no inventory of which agents are touching which data sources, and no defined accountability for AI risk has automated the testing without building the governance underneath it. These tools are most valuable when they slot into an existing AI management system — ISO 42001 or equivalent — that already defines who is accountable, what risks the organization has accepted, and how evidence gets collected for audit. Without that scaffolding, they become another set of green checkmarks in a dashboard nobody trusts.

The trajectory here is also worth watching. We are moving, fast, toward a world where enterprise procurement asks vendors for evidence of AI agent testing the same way it asks for SOC 2 reports today. The organizations that adopt RAMPART and Clarity now — and, more importantly, build the governance program around them — will be the ones that can answer those procurement questions with confidence in 12 months. Everyone else will be scrambling to retrofit security discipline into agents that are already in production, talking to customers, and quietly accumulating risk.

Microsoft just gave the community two of the right tools. The harder question is whether your organization has the governance discipline to use them well. That part doesn’t come from GitHub.

At DISC InfoSec, we help B2B SaaS and financial services organizations build the AI governance scaffolding — ISO 42001, NIST AI RMF, EU AI Act — that makes tools like RAMPART and Clarity actually deliver value. If you’re standing up an AI agent program and want a practitioner’s view of what holds up under audit, let’s talk.

📩 info@deurainfosec.com | 🌐 www.deurainfosec.com | 📝 blog.deurainfosec.com

#AIGovernance #AIAgents #ISO42001 #AIRedTeam #AISecurity #RAMPART #Clarity #Microsoft #SecureSDLC #CISO #vCAIO #NISTAIRMF #EUAIAct #ResponsibleAI #DISCInfoSec