Cybersecurity Investment Strategy: Investing With Intent

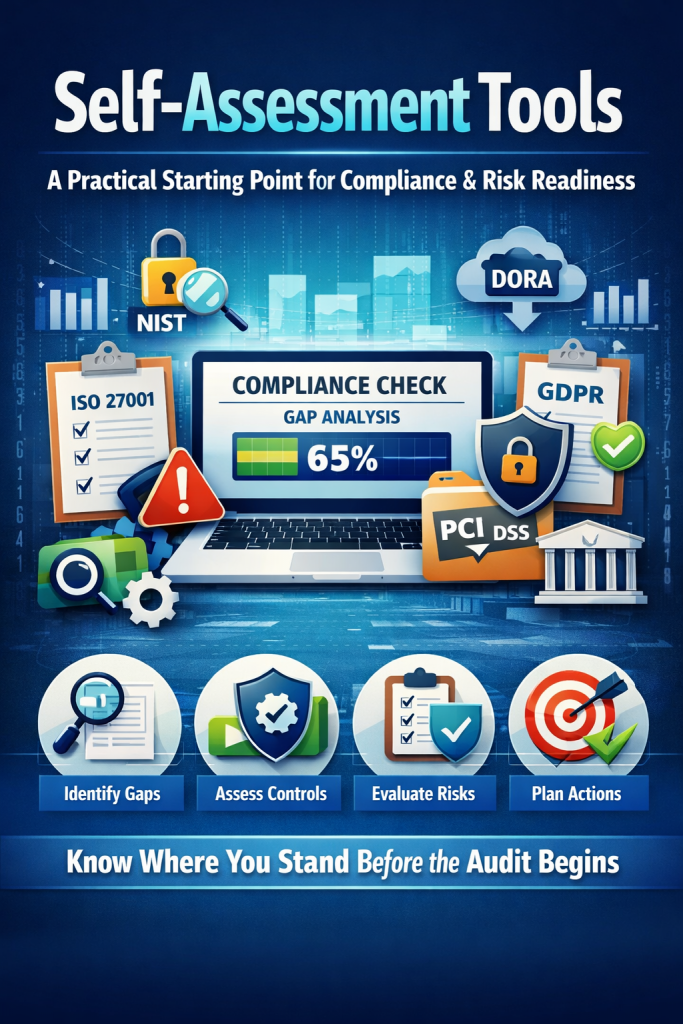

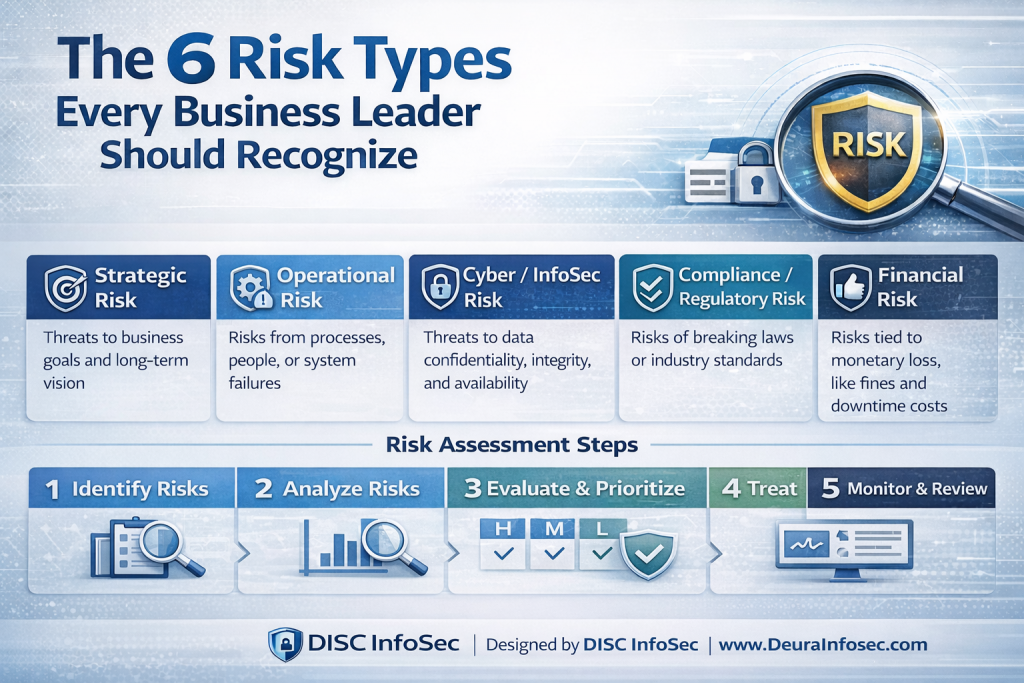

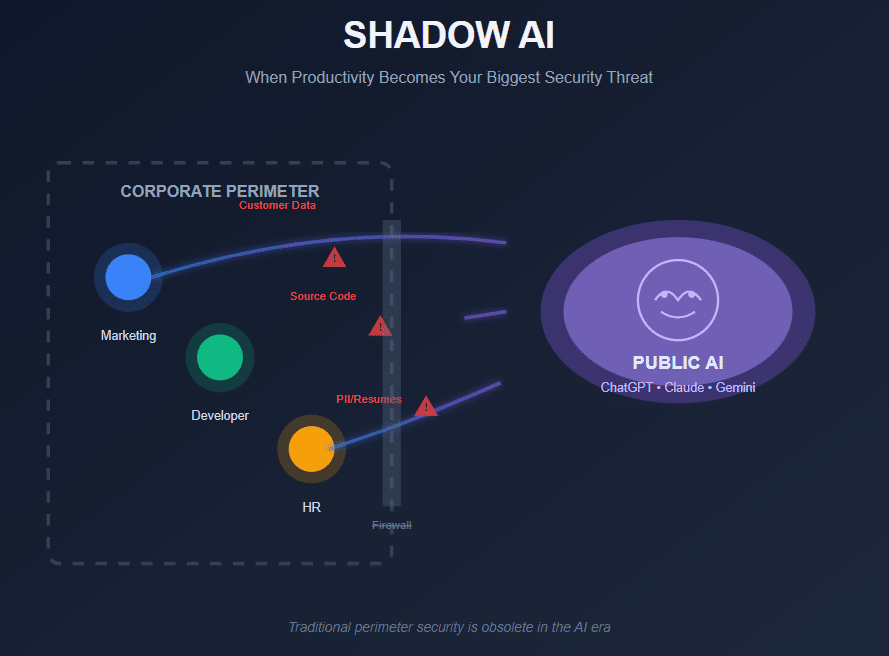

One of the most common mistakes organizations make in cybersecurity is investing in tools and controls without a clear, outcome-driven strategy. Not every security investment delivers value at the same speed, and not all controls produce the same long-term impact. Without prioritization, teams often overspend on complex solutions while leaving foundational gaps exposed.

A smarter approach is to map security initiatives by investment level against time to results. This is where a Cybersecurity Investment Strategy Matrix becomes valuable: it helps leaders see which initiatives deliver immediate risk reduction and which ones compound value over time. The goal is to focus resources on what measurably reduces risk for the business.

Some initiatives provide fast results but require higher investment. Capabilities like EDR/XDR, SIEM and SOAR platforms, incident response readiness, and next-generation firewalls can rapidly improve detection and response. These are often critical for organizations facing active threats or regulatory pressure, but they demand both financial and operational commitment.

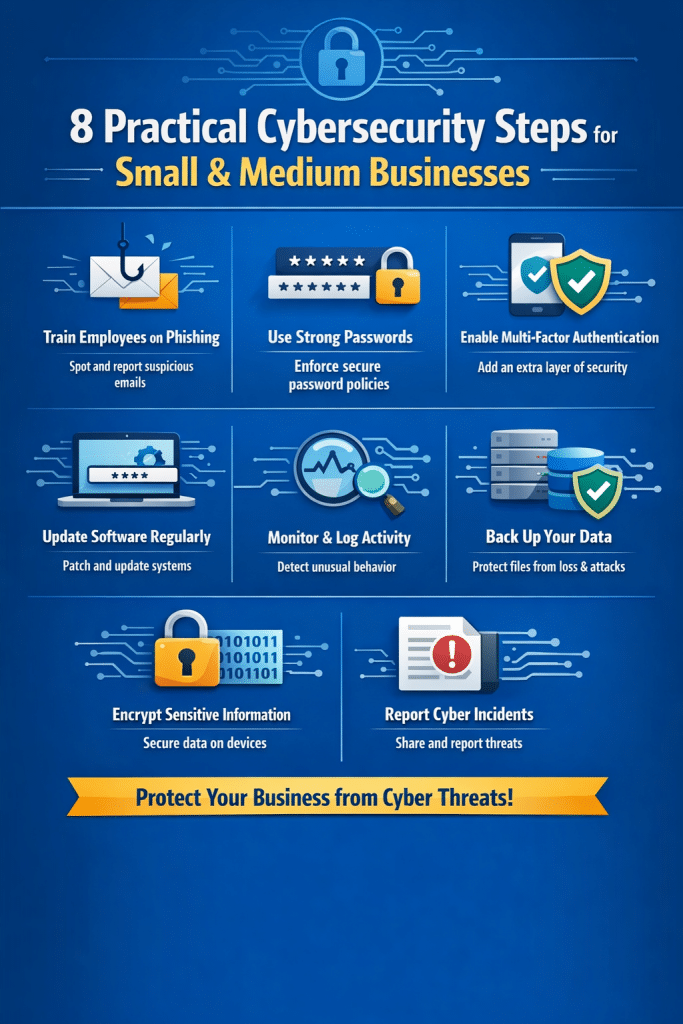

Other controls deliver fast results with relatively low investment. Measures such as multi-factor authentication, password managers, security baselines, and basic network segmentation quickly reduce attack surface and prevent common breaches. These are often high-impact, low-friction wins that should be implemented early.

Certain initiatives are designed for long-term payoff and require significant investment. Zero Trust architectures, DevSecOps, CSPM, identity governance, and DLP programs strengthen security as the organization scales and matures, but they take time, cultural change, and sustained funding to deliver full value.

Finally, there are long-term, low-investment initiatives that quietly build resilience over time. Security awareness training, vulnerability management, penetration testing, security champions programs, strong documentation, meaningful KPIs, and open-source security tools all improve security posture steadily without heavy upfront costs.
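The four quadrants described above can be sketched as a small lookup structure. This is a minimal Python illustration rather than a tool from this post: the `Initiative` type, the enum names, and `build_matrix` are hypothetical, and the placements simply mirror the examples in the text, trimmed to two per quadrant.

```python
from collections import defaultdict
from enum import Enum
from typing import Dict, List, NamedTuple, Tuple


class Investment(Enum):
    LOW = "low"
    HIGH = "high"


class TimeToResults(Enum):
    FAST = "fast"
    LONG_TERM = "long-term"


class Initiative(NamedTuple):
    name: str
    investment: Investment
    time_to_results: TimeToResults


# Hypothetical sample data: placements follow the quadrant descriptions in the text.
INITIATIVES = [
    Initiative("EDR/XDR", Investment.HIGH, TimeToResults.FAST),
    Initiative("SIEM and SOAR", Investment.HIGH, TimeToResults.FAST),
    Initiative("Multi-factor authentication", Investment.LOW, TimeToResults.FAST),
    Initiative("Password managers", Investment.LOW, TimeToResults.FAST),
    Initiative("Zero Trust architecture", Investment.HIGH, TimeToResults.LONG_TERM),
    Initiative("DevSecOps", Investment.HIGH, TimeToResults.LONG_TERM),
    Initiative("Security awareness training", Investment.LOW, TimeToResults.LONG_TERM),
    Initiative("Vulnerability management", Investment.LOW, TimeToResults.LONG_TERM),
]

Quadrant = Tuple[TimeToResults, Investment]


def build_matrix(initiatives: List[Initiative]) -> Dict[Quadrant, List[str]]:
    """Group initiative names by (time to results, investment level)."""
    matrix: Dict[Quadrant, List[str]] = defaultdict(list)
    for item in initiatives:
        matrix[(item.time_to_results, item.investment)].append(item.name)
    return dict(matrix)


matrix = build_matrix(INITIATIVES)
for (speed, cost), names in matrix.items():
    print(f"{speed.value} results / {cost.value} investment: {', '.join(names)}")
```

Keeping the two axes as separate enums means an initiative can be moved to a different quadrant by changing one field, which is useful because placement depends on the organization's size and maturity.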

A well-designed cybersecurity investment strategy balances quick wins with long-term capability building. The real question for leadership isn’t what tools to buy, but which three initiatives, if prioritized today, would reduce the most risk and support the business right now.

My opinion: the best cybersecurity investment strategy is a balanced, risk-driven mix of fast wins and long-term foundations, not an “all-in” bet on any single quadrant.

Here’s why:

- Start with fast results / low investment (mandatory baseline)

This should be non-negotiable. Controls like MFA, security baselines, password managers, and basic network segmentation deliver immediate risk reduction at minimal cost. Skipping these while investing in advanced tools is one of the most common (and expensive) mistakes I see.

- Add 1–2 fast results / high investment controls (situational)

Once the basics are in place, selectively invest in high-impact capabilities like EDR/XDR or incident response readiness, but only if your threat profile, regulatory exposure, or business criticality justifies it. These tools are powerful, but without maturity, they become noisy and underutilized.

- Continuously build long-term / low investment foundations (quiet multiplier)

Security awareness, vulnerability management, documentation, and KPIs don’t look flashy, but they compound over time. These initiatives increase ROI on every other control you deploy and reduce operational friction.

- Delay long-term / high investment initiatives until maturity exists

Zero Trust, DevSecOps, DLP, and identity governance are excellent goals, but pursuing them too early often leads to stalled programs and wasted spend. These work best when governance, ownership, and basic hygiene are already solid.
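The sequencing in the four points above can be expressed as a simple decision sketch. This is only an illustration under stated assumptions: `plan_roadmap`, its boolean inputs, and the `QUADRANTS` labels are hypothetical names, and reducing "maturity" or "threat profile" to a yes/no flag is a deliberate simplification of what would be a judgment call in practice.

```python
from typing import Dict, List

# Hypothetical labels; the entries are the examples named in the text.
QUADRANTS: Dict[str, List[str]] = {
    "fast/low": ["MFA", "Security baselines", "Password managers",
                 "Basic network segmentation"],
    "fast/high": ["EDR/XDR", "Incident response readiness"],
    "long-term/low": ["Security awareness", "Vulnerability management",
                      "Documentation", "KPIs"],
    "long-term/high": ["Zero Trust", "DevSecOps", "DLP", "Identity governance"],
}


def plan_roadmap(
    baseline_done: bool,
    risk_justifies_targeted: bool,
    program_is_mature: bool,
    max_targeted: int = 2,
) -> Dict[str, List[str]]:
    """Sort initiatives into 'now', 'in_parallel', and 'deferred' buckets."""
    plan: Dict[str, List[str]] = {"now": [], "in_parallel": [], "deferred": []}

    # Long-term / low investment foundations run continuously.
    plan["in_parallel"] += QUADRANTS["long-term/low"]

    # The baseline comes first; everything else waits until it is in place.
    if not baseline_done:
        plan["now"] += QUADRANTS["fast/low"]
        plan["deferred"] += QUADRANTS["fast/high"] + QUADRANTS["long-term/high"]
        return plan

    # Add at most `max_targeted` fast / high investment controls, if justified.
    if risk_justifies_targeted:
        plan["now"] += QUADRANTS["fast/high"][:max_targeted]
        plan["deferred"] += QUADRANTS["fast/high"][max_targeted:]
    else:
        plan["deferred"] += QUADRANTS["fast/high"]

    # Long-term / high investment programs wait for governance and hygiene.
    if program_is_mature:
        plan["now"] += QUADRANTS["long-term/high"]
    else:
        plan["deferred"] += QUADRANTS["long-term/high"]
    return plan


# Example: baseline still missing, so only the baseline is scheduled now.
early_plan = plan_roadmap(baseline_done=False, risk_justifies_targeted=True,
                          program_is_mature=False)
print(early_plan["now"])
```

The early return for a missing baseline encodes the "non-negotiable" point directly: no amount of risk justification moves advanced tooling ahead of basic hygiene in this sketch.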

Bottom line:

The best strategy is baseline first → targeted protection next → long-term maturity in parallel.

If I had to simplify it:

Fix what attackers exploit most today, while quietly building the capabilities that prevent tomorrow’s failures.

This approach aligns security spend with business risk, avoids tool sprawl, and delivers both immediate and sustained value.


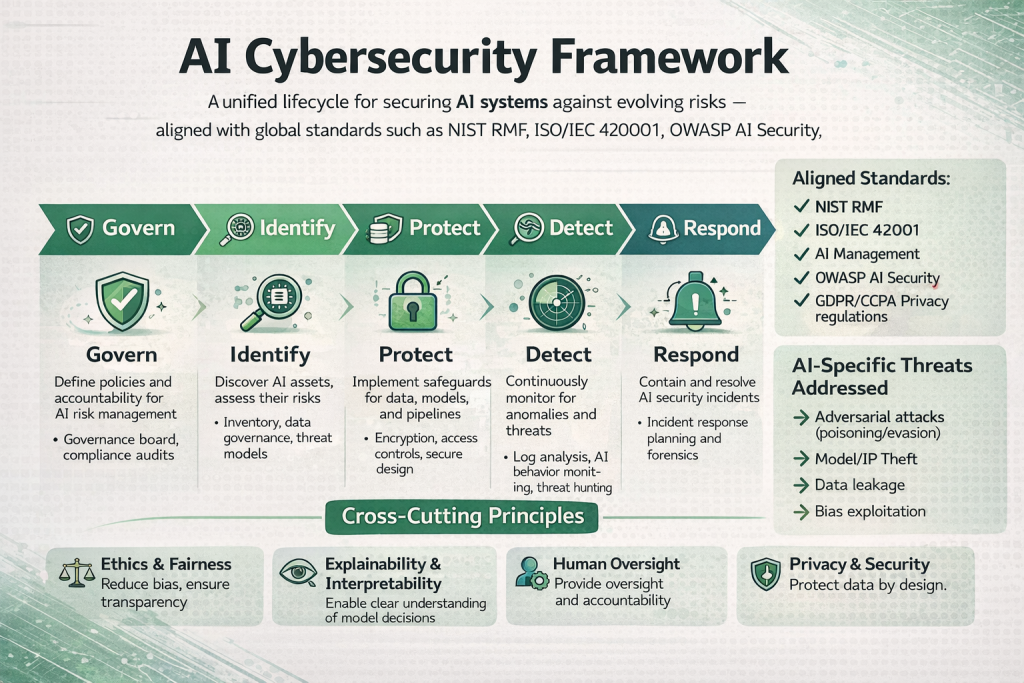
