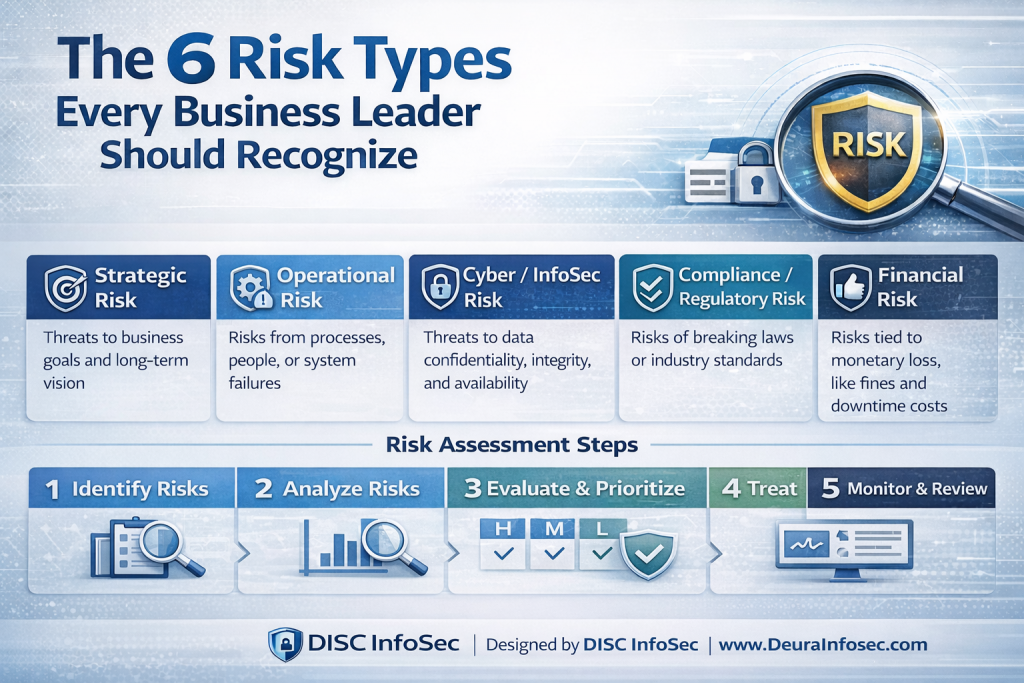

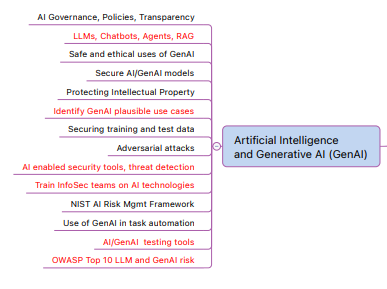

The risk management process is designed to help organizations systematically identify, assess, prioritize, and mitigate risks related to AI systems throughout the entire AI lifecycle. It is part of the broader AI governance capabilities of the GRC platform, which supports compliance with frameworks like ISO 42001, ISO 27001, the EU AI Act, and the NIST AI RMF.

Below is a clear breakdown of the core steps in the GRC platform risk management process.

1. Risk Identification

The process begins by identifying risks across AI projects, models, and vendors. These risks may include issues such as bias in training data, model failures, security vulnerabilities, regulatory non-compliance, or third-party vendor risks.

GRC platform centralizes all identified risks in a unified risk register, which provides a single view of risks across the organization.

Typical information captured includes:

- Risk name and description

- AI lifecycle phase (design, training, deployment, etc.)

- Potential impact

- Risk category

- Assigned owner

This step ensures that AI risks are visible and documented rather than scattered across spreadsheets or emails.
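As a rough illustration of what a unified register could look like, here is a minimal Python sketch. The class names (`RiskEntry`, `RiskRegister`), the field names, and the sample risks are assumptions made for this example; they mirror the fields listed above but are not the platform's actual data model or API.

```python
from dataclasses import dataclass
from enum import Enum


class LifecyclePhase(Enum):
    """Lifecycle phases named in the text; a real register may have more."""
    DESIGN = "design"
    TRAINING = "training"
    DEPLOYMENT = "deployment"


@dataclass
class RiskEntry:
    """One row of the register, using the fields listed above (hypothetical names)."""
    name: str
    description: str
    lifecycle_phase: LifecyclePhase
    potential_impact: str
    category: str
    owner: str


class RiskRegister:
    """A single in-memory list standing in for the centralized register."""

    def __init__(self) -> None:
        self._entries: list[RiskEntry] = []

    def add(self, entry: RiskEntry) -> None:
        self._entries.append(entry)

    def entries(self) -> list[RiskEntry]:
        return list(self._entries)

    def by_owner(self, owner: str) -> list[RiskEntry]:
        """Return every risk assigned to the given owner."""
        return [e for e in self._entries if e.owner == owner]


register = RiskRegister()
register.add(RiskEntry(
    name="Bias in training data",
    description="Training set under-represents some user groups.",
    lifecycle_phase=LifecyclePhase.TRAINING,
    potential_impact="Unfair outcomes for affected users",
    category="Model",
    owner="ml-lead",
))
register.add(RiskEntry(
    name="Third-party API outage",
    description="Vendor-hosted model becomes unavailable.",
    lifecycle_phase=LifecyclePhase.DEPLOYMENT,
    potential_impact="Service interruption",
    category="Vendor",
    owner="vendor-manager",
))

for entry in register.by_owner("ml-lead"):
    print(entry.name, "-", entry.lifecycle_phase.value)
```

Keeping every entry in one structure is what makes queries such as "all risks owned by this person" possible without chasing spreadsheets.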

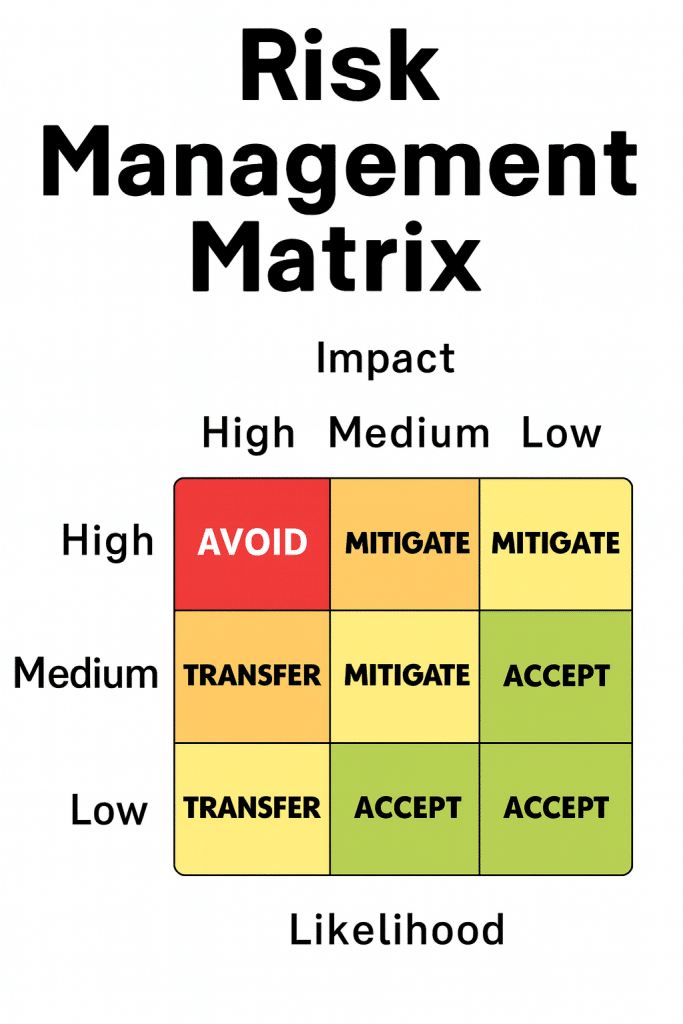

2. Risk Assessment

Once risks are identified, they are evaluated based on likelihood and severity.

GRC platform automatically calculates a risk score using a weighted formula:

Risk Score = (Likelihood × 1) + (Severity × 3)

This method intentionally weights severity three times as heavily as likelihood, ensuring that high-impact risks are prioritized even if they seem unlikely.
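The effect of the weighting is easiest to see with numbers. The sketch below implements the formula as stated; the 1 to 5 rating scale is an assumption for illustration only, since the scale the platform uses is not given here.

```python
LIKELIHOOD_WEIGHT = 1
SEVERITY_WEIGHT = 3


def risk_score(likelihood: int, severity: int) -> int:
    """Risk Score = (Likelihood x 1) + (Severity x 3)."""
    return likelihood * LIKELIHOOD_WEIGHT + severity * SEVERITY_WEIGHT


# Assumed 1-5 scale: an unlikely but severe risk vs. a likely but minor one.
rare_but_severe = risk_score(likelihood=1, severity=5)     # 1 + 15
frequent_but_minor = risk_score(likelihood=5, severity=1)  # 5 + 3

print(rare_but_severe, frequent_but_minor)  # 16 8
```

Under these assumed ratings the rare but severe risk scores twice as high as the frequent but minor one, which is the prioritization behavior described above.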

The resulting score maps to six risk levels:

- No Risk

- Very Low

- Low

- Medium

- High

- Very High

This structured scoring allows organizations to prioritize the most critical AI risks first.
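A score-to-level mapping of this kind can be sketched as a threshold lookup. The six level names come from the list above, but the cut-off values below are purely hypothetical placeholders; the platform's real boundaries are not stated in this article.

```python
from bisect import bisect_right

RISK_LEVELS = ["No Risk", "Very Low", "Low", "Medium", "High", "Very High"]

# HYPOTHETICAL cut-offs, chosen only for illustration:
# score < 1 -> No Risk, < 5 -> Very Low, < 9 -> Low,
# < 13 -> Medium, < 17 -> High, otherwise Very High.
HYPOTHETICAL_CUTOFFS = [1, 5, 9, 13, 17]


def risk_level(score: int, cutoffs=HYPOTHETICAL_CUTOFFS) -> str:
    """Return the level whose band contains the score."""
    return RISK_LEVELS[bisect_right(cutoffs, score)]


def prioritize(scored_risks):
    """Sort (name, score) pairs so the highest score comes first."""
    return sorted(scored_risks, key=lambda item: item[1], reverse=True)


scored = [("Vendor outage", 8), ("Biased training data", 16), ("Stale docs", 4)]
for name, score in prioritize(scored):
    print(f"{name}: {score} ({risk_level(score)})")
```

Passing the cut-offs as a parameter keeps the lookup logic separate from the threshold policy, so the bands can be changed without touching the code.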

3. Risk Classification

GRC platform organizes risks into three main categories to improve governance and traceability:

- Project Risks – Risks related to the AI system or use case itself.

- Model Risks – Risks related to algorithm performance, bias, or failure.

- Vendor Risks – Risks associated with third-party AI tools or providers.

This three-dimensional risk tracking approach allows organizations to understand where risks originate and how they propagate across the AI ecosystem.
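To make the three categories concrete, the following sketch tags a few example risks and groups them by origin. The enum, the function name, and the sample risks are illustrative assumptions, not the platform's schema.

```python
from collections import defaultdict
from enum import Enum


class RiskCategory(Enum):
    PROJECT = "Project"  # the AI system or use case itself
    MODEL = "Model"      # algorithm performance, bias, or failure
    VENDOR = "Vendor"    # third-party AI tools or providers


# Hypothetical sample risks, each tagged with one category.
risks = [
    ("Unclear use-case scope", RiskCategory.PROJECT),
    ("Bias in training data", RiskCategory.MODEL),
    ("Model performance degradation", RiskCategory.MODEL),
    ("Third-party API outage", RiskCategory.VENDOR),
]


def group_by_category(items):
    """Build a {category: [risk names]} view of the register."""
    grouped = defaultdict(list)
    for name, category in items:
        grouped[category].append(name)
    return dict(grouped)


grouped = group_by_category(risks)
for category, names in grouped.items():
    print(category.value, "->", names)
```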

4. Risk Mitigation Planning

After risk evaluation, the next step is to develop a mitigation strategy.

Each risk entry includes:

- Mitigation plan

- Implementation strategy

- Responsible owner

- Target completion date

- Residual risk evaluation

The system tracks mitigation through a structured workflow, ensuring accountability and visibility across teams.
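One way to picture such a mitigation record is a small data class carrying the fields listed above, plus a check for missed target dates. The class name, field names, and the `is_overdue` helper are assumptions for this sketch rather than platform features.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class MitigationPlan:
    """Mitigation fields from the list above (hypothetical names)."""
    risk_name: str
    plan: str
    implementation_strategy: str
    owner: str
    target_date: date
    residual_risk: str
    completed: bool = False

    def is_overdue(self, today: date) -> bool:
        """A plan is overdue if it is unfinished past its target date."""
        return not self.completed and today > self.target_date


plan = MitigationPlan(
    risk_name="Bias in training data",
    plan="Rebalance the training set and add fairness tests",
    implementation_strategy="Add checks to the training pipeline",
    owner="ml-lead",
    target_date=date(2025, 6, 30),
    residual_risk="Low",
)

print(plan.is_overdue(today=date(2025, 7, 15)))  # True
print(plan.is_overdue(today=date(2025, 6, 1)))   # False
```

Passing `today` explicitly, instead of reading the system clock inside the method, keeps the check deterministic and easy to test.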

5. Workflow and Approval Process

GRC platform uses a 7-stage mitigation workflow to track progress:

- Not Started

- In Progress

- Completed

- On Hold

- Deferred

- Cancelled

- Requires Review

This structured workflow ensures that risk remediation activities are tracked, reviewed, and approved rather than forgotten.
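The seven stages can be modeled as an enumeration and summarized for reporting. In the sketch below, the stage names come from the list above; which stages count as "needing attention" is an assumption made for illustration, and the sample items are invented.

```python
from collections import Counter
from enum import Enum


class MitigationStatus(Enum):
    NOT_STARTED = "Not Started"
    IN_PROGRESS = "In Progress"
    COMPLETED = "Completed"
    ON_HOLD = "On Hold"
    DEFERRED = "Deferred"
    CANCELLED = "Cancelled"
    REQUIRES_REVIEW = "Requires Review"


# Assumption: these stages are the ones a reviewer should look at first.
ATTENTION_STATUSES = {MitigationStatus.ON_HOLD, MitigationStatus.REQUIRES_REVIEW}

# Hypothetical mitigation items as (risk name, current status).
items = [
    ("Bias in training data", MitigationStatus.IN_PROGRESS),
    ("Third-party API outage", MitigationStatus.REQUIRES_REVIEW),
    ("Unclear use-case scope", MitigationStatus.COMPLETED),
    ("Model performance degradation", MitigationStatus.ON_HOLD),
]


def status_summary(tracked):
    """Count how many items sit in each workflow stage."""
    return Counter(status for _, status in tracked)


def needs_attention(tracked):
    """Names of items whose stage is in ATTENTION_STATUSES."""
    return [name for name, status in tracked if status in ATTENTION_STATUSES]


summary = status_summary(items)
print({status.value: count for status, count in summary.items()})
print(needs_attention(items))
```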

6. Control and Framework Mapping

Each identified risk can be mapped to regulatory or compliance controls, such as:

- EU AI Act requirements

- ISO 42001 clauses

- ISO 27001 controls

- NIST AI RMF categories

This mapping provides audit-ready traceability, allowing organizations to demonstrate how specific risks are addressed within governance frameworks.
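Traceability of this kind amounts to a two-way lookup between risks and controls. The sketch below uses placeholder control identifiers (such as `CLAUSE-EXAMPLE-1`); they are deliberately not real clause or article numbers, and the function names are assumptions for this example.

```python
from collections import defaultdict

# Hypothetical mapping: risk name -> list of (framework, placeholder control ID).
risk_to_controls = {
    "Bias in training data": [
        ("EU AI Act", "REQ-EXAMPLE-1"),
        ("ISO 42001", "CLAUSE-EXAMPLE-1"),
    ],
    "Third-party API outage": [("ISO 27001", "CONTROL-EXAMPLE-1")],
    "Model performance degradation": [],
}


def controls_to_risks(mapping):
    """Invert the mapping so an auditor can start from a control."""
    inverted = defaultdict(list)
    for risk, controls in mapping.items():
        for control in controls:
            inverted[control].append(risk)
    return dict(inverted)


def unmapped_risks(mapping):
    """Risks with no linked control, i.e. gaps in traceability."""
    return sorted(risk for risk, controls in mapping.items() if not controls)


by_control = controls_to_risks(risk_to_controls)
print(by_control[("ISO 42001", "CLAUSE-EXAMPLE-1")])
print(unmapped_risks(risk_to_controls))
```

The inverted view answers the auditor's question ("which risks does this control address?"), while the unmapped list surfaces risks that still lack a linked control.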

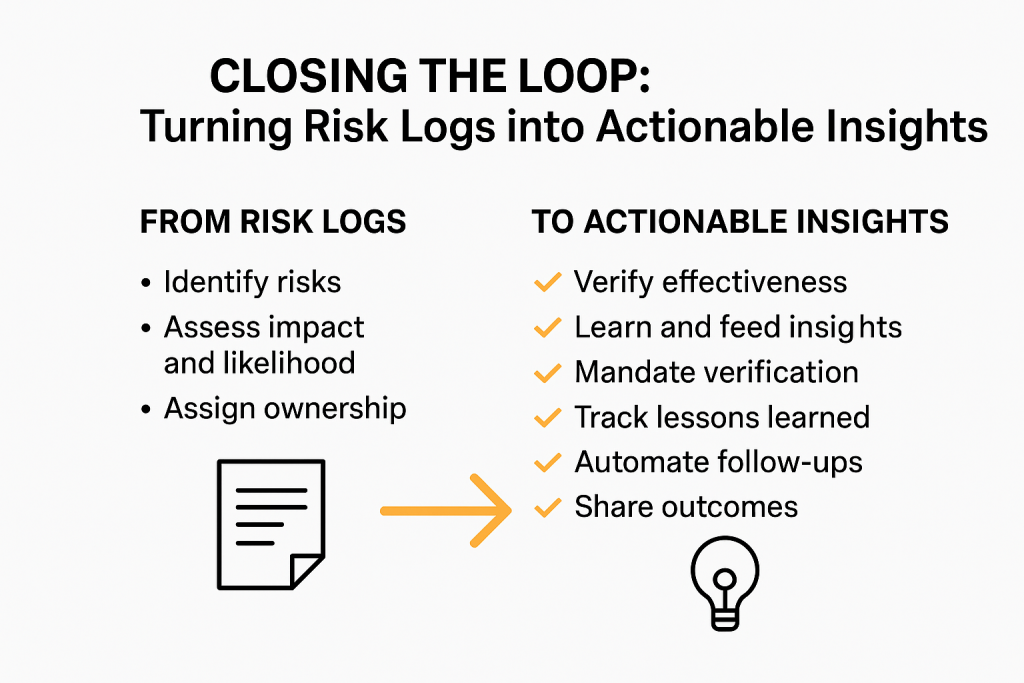

7. Monitoring and Continuous Improvement

Risk management in the GRC platform is continuous rather than a one-time exercise.

The platform provides:

- Historical risk tracking

- Time-series analytics

- Risk posture monitoring over time

Organizations can analyze how risk levels evolve as mitigation actions are implemented, improving governance maturity and transparency.
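A simple way to think about this is a dated series of score snapshots per risk. The snapshot values and function names below are invented for illustration and do not reflect how the platform stores or reports history.

```python
from datetime import date

# Hypothetical score history for one risk: (snapshot date, risk score).
history = [
    (date(2025, 1, 1), 16),
    (date(2025, 2, 1), 13),
    (date(2025, 3, 1), 10),
]


def score_change(snapshots):
    """Latest score minus earliest score; negative means risk went down."""
    ordered = sorted(snapshots)  # tuples sort by date first
    return ordered[-1][1] - ordered[0][1]


def is_improving(snapshots):
    """True if each snapshot's score is no higher than the one before it."""
    scores = [score for _, score in sorted(snapshots)]
    return all(later <= earlier for earlier, later in zip(scores, scores[1:]))


print(score_change(history))  # -6
print(is_improving(history))  # True
```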

✅ Summary of the GRC platform Risk Management Process

- Identify AI risks

- Assess likelihood and severity

- Calculate risk score and classify risk level

- Develop mitigation plans

- Assign ownership and track workflow

- Map risks to compliance frameworks

- Monitor and review risks continuously

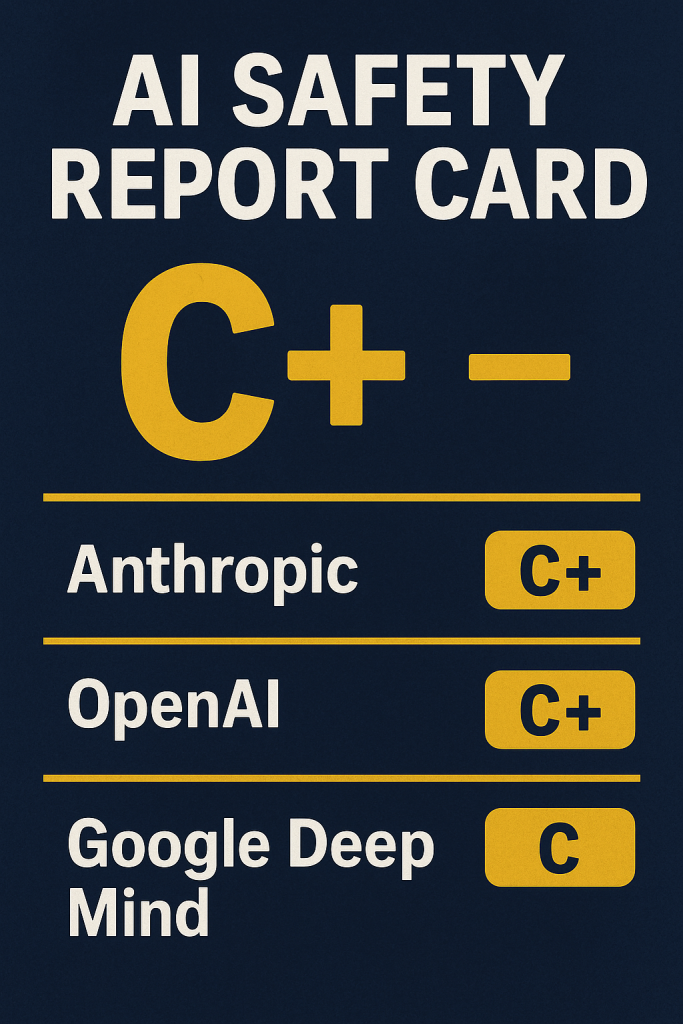

💡 My perspective (from a security and compliance standpoint):

GRC platform essentially applies traditional GRC risk management concepts to AI systems, but with AI-specific risk categories (model, vendor, lifecycle) and framework traceability (ISO 42001, EU AI Act, NIST AI RMF).

The key differentiator is that it treats AI risk as dynamic and lifecycle-based, rather than static like traditional IT risk registers. That approach aligns well with emerging AI governance practices.

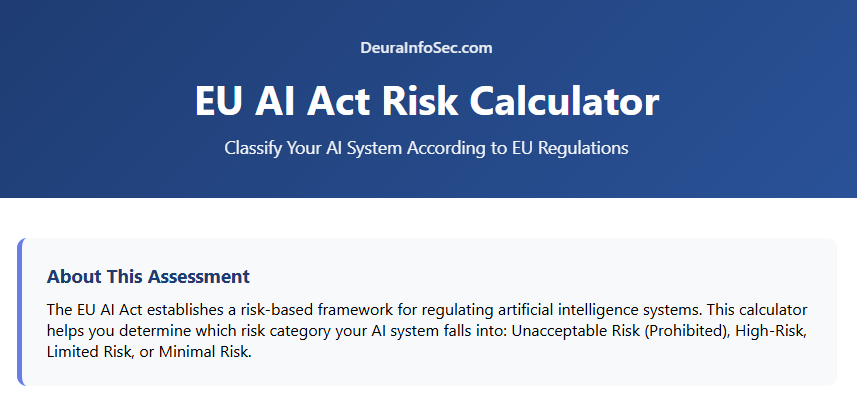

How the risk management process maps to ISO 42001 Clause 6 (Risk & Opportunity Management) and broader AI governance principles, tailored for organizations managing AI systems:

1. Context Establishment (ISO 42001 Clause 6.1.1)

ISO 42001 requirement: Understand internal and external context, including stakeholders, regulatory requirements, and AI objectives, before managing risks.

GRC platform mapping:

- Allows defining AI projects, systems, and stakeholders in a centralized register.

- Captures regulatory requirements like EU AI Act, NIST AI RMF, or state AI laws.

- Provides a holistic view of AI assets, vendors, and models, ensuring all relevant context is captured before risk assessment.

AI governance impact: Ensures that AI governance decisions are context-aware, not ad hoc.

2. Risk & Opportunity Identification (Clause 6.1.2)

ISO 42001 requirement: Identify risks and opportunities that could affect the achievement of AI objectives.

GRC platform mapping:

- Identifies project, model, and vendor risks across the AI lifecycle.

- Risks include bias, security vulnerabilities, regulatory non-compliance, and operational failures.

- Supports opportunity identification by noting areas for model improvement, regulatory alignment, or vendor efficiency.

AI governance impact: Ensures that AI systems are proactively monitored for both threats and improvement areas, aligning with responsible AI principles.

3. Risk Assessment & Evaluation (Clause 6.1.3)

ISO 42001 requirement: Assess likelihood and impact of risks and determine priority.

GRC platform mapping:

- Calculates risk scores using the weighted formula (Likelihood × 1) + (Severity × 3).

- Maps risks to six risk levels (No Risk → Very High).

- Provides a prioritized list of risks based on impact and probability.

AI governance impact: Helps organizations focus governance resources on high-impact AI risks, such as models affecting safety, fairness, or regulatory compliance.

4. Risk Treatment / Mitigation Planning (Clause 6.1.4)

ISO 42001 requirement: Determine actions to mitigate risks or exploit opportunities, assign responsibility, and set deadlines.

GRC platform mapping:

- Each risk entry includes:

  - Mitigation plan

  - Assigned owner

  - Target completion date

  - Residual risk evaluation

- Tracks mitigation through a 7-stage workflow (Not Started → Requires Review).

AI governance impact: Ensures accountability and traceability in AI risk treatment, meeting governance and audit requirements.

5. Integration into AI Governance (Clause 6.2)

ISO 42001 requirement: Embed risk management into overall AI governance, strategy, and operations.

GRC platform mapping:

- Links risks to AI lifecycle phases (design, training, deployment).

- Maps each risk to regulatory or framework controls (ISO 42001 clauses, ISO 27001, NIST AI RMF).

- Supports continuous monitoring and reporting, integrating risk management into AI governance dashboards.

AI governance impact: Makes risk management a core part of AI governance, not an afterthought.

6. Monitoring & Review (Clause 6.3)

ISO 42001 requirement: Monitor risks, evaluate effectiveness of mitigation, and update as needed.

GRC platform mapping:

- Provides time-series analytics and historical tracking of risks.

- Flags changes in risk levels over time.

- Ensures audit-readiness with documented mitigation history.

AI governance impact: Enables dynamic governance that adapts to model updates, new AI deployments, and regulatory changes.

✅ Summary of Mapping

| ISO 42001 Clause | Requirement | GRC platform Feature | AI Governance Benefit |
| --- | --- | --- | --- |
| 6.1.1 Context | Understand context | Stakeholder, AI system, vendor, regulatory registry | Context-aware AI governance |
| 6.1.2 Identification | Identify risks & opportunities | Project/Model/Vendor risk register | Proactive risk & opportunity capture |
| 6.1.3 Assessment | Evaluate risk likelihood & impact | Risk scoring & prioritization | Focus on high-impact AI risks |
| 6.1.4 Treatment | Mitigate risks / assign ownership | Mitigation plans + workflow | Accountability & traceability |
| 6.2 Integration | Embed in AI governance | Lifecycle & control mapping | Risk mgmt part of governance strategy |
| 6.3 Monitoring | Review & update | Analytics + historical tracking | Continuous governance & audit readiness |

💡 Perspective:

GRC platform aligns ISO 42001’s structured risk management approach with AI-specific considerations like bias, model failure, and vendor dependency. By integrating risk scoring, workflow management, and framework mapping, it operationalizes risk-based AI governance—a critical requirement for regulatory compliance and responsible AI deployment.

Feel free to reach out to schedule a demo. We’ll walk you through the GRC platform, show how it supports comprehensive risk management, and answer any questions you have about AI governance.

Get Your Free AI Governance Readiness Assessment – Is your organization ready for ISO 42001, EU AI Act, and emerging AI regulations?

AI Governance Gap Assessment tool

- 15 questions

- Instant maturity score

- Detailed PDF report

- Top 3 priority gaps

Click below to open an AI Governance Gap Assessment in your browser or click the image to start assessment.


Built by AI governance experts. Used by compliance leaders.


At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
