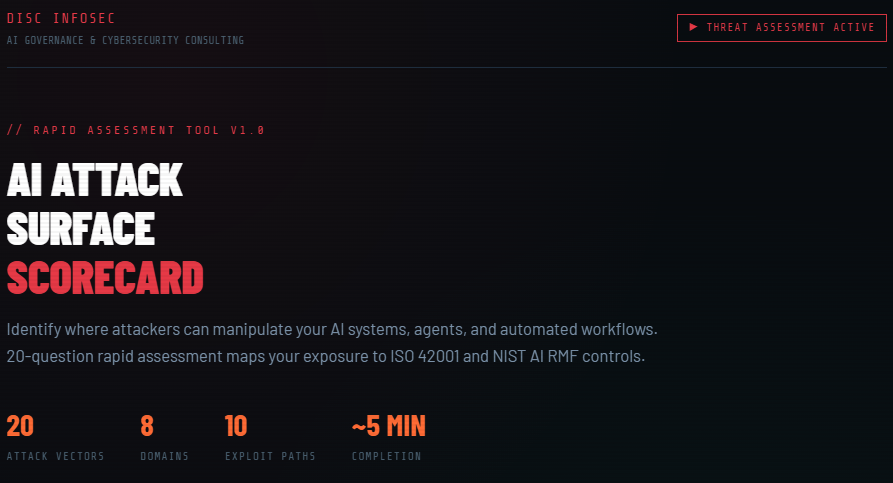

Uncover where your AI systems are truly vulnerable—before attackers do. The AI Attack Surface Scorecard is a powerful, rapid 20-question assessment that pinpoints how your AI models, agents, and automated workflows can be exploited across critical domains like prompt injection, model access, data leakage, and supply chain risk. Built with real-world threat scenarios, it delivers a dynamic 0–100 risk score, highlights your top exploitation paths, and maps every gap directly to ISO 42001 and NIST AI RMF controls. You’ll get prioritized, high-impact remediation steps, a board-ready executive summary, and a detailed downloadable report—everything you need to move from uncertainty to action fast. If you’re serious about securing AI, this is your starting point.

Identify where attackers can manipulate your AI systems, agents, and automated workflows. This rapid 20-question assessment maps your exposure to ISO 42001 and NIST AI RMF controls.


The comprehensive AI Attack Surface Scorecard report includes:

- 20 questionnaire items

- Risk score (0-100)

- Top 10 exploitation paths

- Governance gaps mapped to ISO 42001 and NIST AI RMF

- Priority fix recommendations

- Board-ready summary report

- Detailed downloadable text report

- Email the report to info@deurainfosec.com if you’re interested in a free consultation.

The design follows a cybersecurity-inspired, dark industrial aesthetic: a dark theme, red/orange accents, monospace elements, and a military-grade feel.

The tool's 20 questions span the full AI attack surface (prompt injection, model poisoning, API security, agent autonomy, RAG systems, output validation, supply chain risks, data leakage, jailbreak resistance, and more). They are backed by dynamic scoring, a results dashboard with visualizations, and PDF and text export.

Each question has four risk-weighted options, and every item maps to ISO 42001 and NIST AI RMF controls. The interface uses threat-red indicators, monospace typography for technical elements, and clean data visualization suited to a security-focused audience.
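To illustrate how four risk-weighted options can roll up into a single 0–100 score, here is a minimal sketch. It assumes each option carries a weight from 0 (lowest risk) to 3 (highest risk), that all questions count equally, and that the score is the share of maximum possible risk. The scorecard's actual weights and formula are not published here, so the `Question` type, its fields, and the weight scale are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Question:
    """One assessment item. Field names are hypothetical, for illustration only."""
    qid: str
    domain: str
    option_weights: tuple[int, int, int, int]  # four options; 0 = lowest risk (assumed scale)


def risk_score(questions: list[Question], answers: dict[str, int]) -> int:
    """Return 0-100: the share of the maximum possible risk implied by the answers."""
    earned = 0
    possible = 0
    for q in questions:
        choice = answers[q.qid]  # index (0-3) of the option the respondent selected
        earned += q.option_weights[choice]
        possible += max(q.option_weights)
    return round(100 * earned / possible) if possible else 0


# Hypothetical two-question example using a 0-3 weight scale.
questions = [
    Question("Q1", "Prompt Security", (0, 1, 2, 3)),
    Question("Q2", "Agent Autonomy", (0, 1, 2, 3)),
]
print(risk_score(questions, {"Q1": 3, "Q2": 1}))  # 4 of 6 risk points -> 67
```

Normalizing by the maximum possible weight keeps the score on a 0–100 scale even if individual questions use different weight ranges.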


The AI Attack Surface Scorecard is fully operational. Here’s what’s packed in:

20 Questions across 14 Attack Domains: Prompt Security · Agent Autonomy · Model Access Control · Training Data Integrity · Output Validation · RAG & Vector DB Security · Supply Chain · AI Logging & Monitoring · Jailbreak & Adversarial · Data Exfiltration · AI Incident Response · AI Governance · Shadow AI · Model Inversion

Live-Generated Results Include:

- Animated Risk Score ring (0–100) color-coded by severity

- Domain-by-domain risk bars sorted by exposure

- Top 10 exploitation paths dynamically re-ranked by your specific answers

- Governance gaps individually mapped to ISO 42001 clause + NIST AI RMF control

- Top 5 Priority Fix Recommendations with effort estimates and impact ratings

- Board-ready Executive Summary you can drop straight into a slide deck
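The domain-by-domain risk bars, sorted by exposure, can be approximated by grouping answers by domain and ranking the results. This is a minimal sketch under stated assumptions. Each answer is stored as a hypothetical `(domain, selected weight, maximum weight)` tuple, and ties are broken alphabetically. The tuple layout, the example weights, and the text-bar rendering are illustrative, not the scorecard's actual implementation.

```python
from collections import defaultdict

# Hypothetical answer record: (domain, selected option weight, max weight for that question).
Answer = tuple[str, int, int]


def domain_exposure(answers: list[Answer]) -> list[tuple[str, int]]:
    """Return (domain, 0-100 exposure) pairs, highest exposure first."""
    earned: dict[str, int] = defaultdict(int)
    possible: dict[str, int] = defaultdict(int)
    for domain, weight, max_weight in answers:
        earned[domain] += weight
        possible[domain] += max_weight
    bars = [(d, round(100 * earned[d] / possible[d])) for d in possible if possible[d]]
    # Sort by exposure descending; break ties alphabetically for stable output.
    return sorted(bars, key=lambda item: (-item[1], item[0]))


# Hypothetical answers on a 0-3 weight scale.
answers = [
    ("Prompt Security", 3, 3),
    ("Prompt Security", 1, 3),
    ("Shadow AI", 1, 3),
    ("Supply Chain", 0, 3),
]
for domain, pct in domain_exposure(answers):
    # Render a simple text bar: one '#' per 10 percentage points.
    print(f"{domain:<16} {'#' * (pct // 10):<10} {pct}%")
```

Sorting by descending exposure puts the most exploitable domains at the top, which is the ordering the results dashboard describes.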

Output Actions:

- ⬇ Download Full Report: a detailed .txt file with all controls, remediation steps, gap mappings, and the board summary

- ✉ Email Report: sends the full assessment details to info@deurainfosec.com

- ↺ Retake: resets cleanly for a new client session

Every report footer signs off: www.Deurainfosec.com | info@Deurainfosec.com | (707) 998-5164



Security is no longer about preventing breaches — it is about controlling autonomous decision systems operating at machine speed.

AI Governance + Security Compliance Stack (ISO 42001 + AI Act Readiness)

DISC InfoSec niche service

A packaged service combining:

- ISO 42001 readiness

- AI governance operating model

- EU AI Act alignment mapping

- Security controls for AI systems

What it offers

Most organizations:

- Know they “need AI governance”

- Don’t know how to operationalize it

The service rests on two principles:

- Governance ≠ certification

- Governance = accountability + control mapping

Engagement options:

- $10K–$50K implementation packages

- Annual compliance subscription model


At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec | ISO 27001 | ISO 42001
