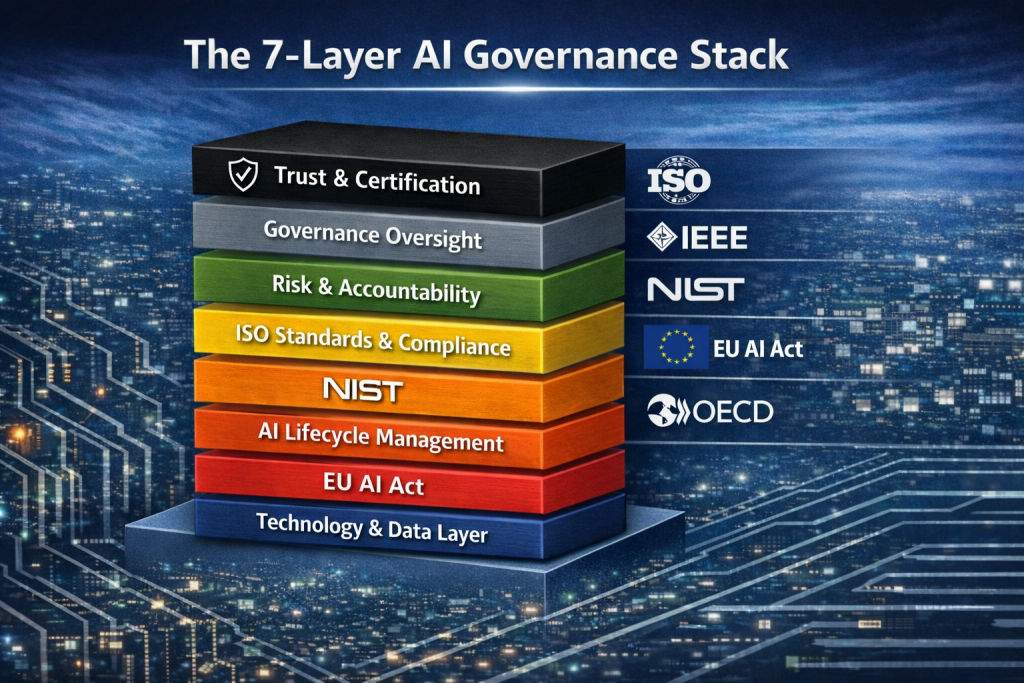

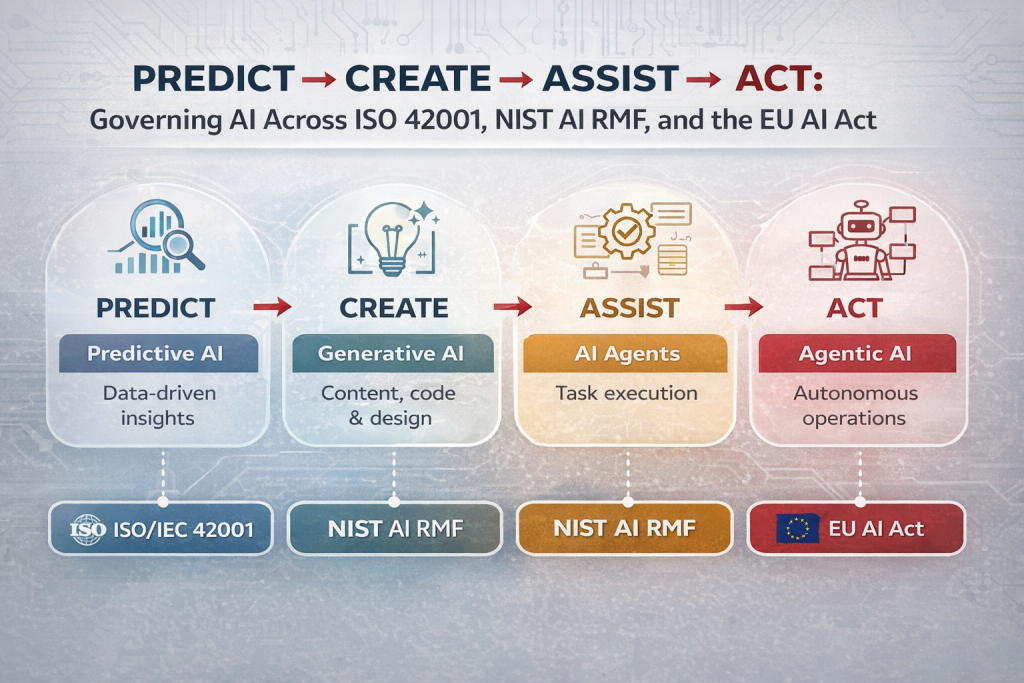

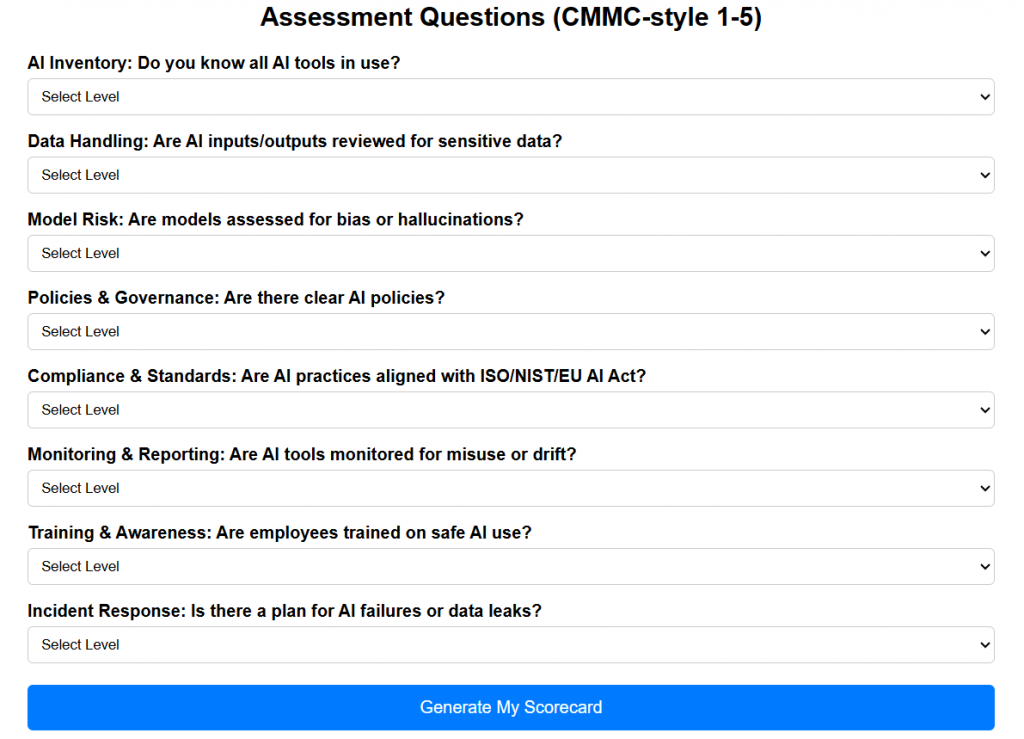

ISO/IEC 42001, the EU AI Act, and the NIST AI Risk Management Framework (AI RMF) represent three distinct but complementary approaches to governing artificial intelligence. ISO 42001 is a formal management system standard designed to institutionalize AI governance within organizations. Its core concept is continuous improvement through structured controls, with a primary focus on embedding AI risk management into business processes. It applies broadly across industries and is certifiable, making it attractive for organizations seeking formal assurance. Its scope covers governance, lifecycle management, and accountability, using a risk-based, auditable approach. Globally, it is emerging as the backbone for standardized AI governance, especially for enterprises seeking international credibility.

The EU AI Act is fundamentally different, operating as a regulatory framework rather than a voluntary standard. Its core concept is risk classification of AI systems into tiers (unacceptable, high, limited, and minimal risk), with a primary focus on protecting individuals’ rights and safety. It applies to any organization that develops, deploys, or offers AI systems within the European Union, regardless of where the company is based. Compliance is mandatory and enforced through legal mechanisms. Rather than offering a voluntary certification, the Act requires high-risk systems to pass a conformity assessment before they are placed on the EU market. Its scope is extensive, covering use cases, data governance, transparency, and human oversight. The risk approach is prescriptive and tiered, and its global impact is significant: it effectively sets a de facto regulatory benchmark for companies operating internationally.

The NIST AI RMF takes a more flexible, guidance-driven approach. Its core concept is trustworthy AI built on principles like fairness, accountability, and transparency. The primary focus is helping organizations identify, assess, and manage AI risks without imposing strict requirements. It is applicable to organizations of all sizes, particularly in the U.S., but is neither certifiable nor legally binding. Its scope spans the AI lifecycle and is organized around four core functions: Govern, Map, Measure, and Manage. The risk approach is adaptive and contextual rather than prescriptive. Globally, it serves as a practical playbook and is widely referenced as a baseline for AI risk discussions.

When compared, ISO 42001 provides structure and certifiability, the EU AI Act enforces legal accountability, and NIST AI RMF offers operational flexibility. ISO 42001 is ideal for organizations that want to operationalize governance programs with measurable controls. The EU AI Act is unavoidable for companies interacting with EU markets, demanding strict adherence to its compliance requirements. NIST AI RMF, meanwhile, is best suited for organizations seeking to mature their AI risk posture without the overhead of certification or regulatory burden.

Together, these frameworks form a layered model of AI governance: NIST AI RMF as the foundation for understanding and managing risk, ISO 42001 as the system for institutionalizing and auditing those practices, and the EU AI Act as the regulatory overlay enforcing accountability. Organizations that align across all three are better positioned to move from reactive compliance to proactive, continuous AI risk management—something that is quickly becoming a competitive differentiator in the global market.

If you’re deciding which framework to adopt first, the answer isn’t “one-size-fits-all”—it depends heavily on where you operate, your regulatory exposure, and how mature your AI usage is. But there is a practical sequencing that works in most real-world scenarios.

🇺🇸 U.S.-based organizations

Start with the NIST AI Risk Management Framework.

The reason is simple: it’s flexible, fast to adopt, and aligns well with how U.S. companies already think about risk (similar to NIST CSF). It gives you an immediate way to structure AI governance without slowing innovation.

From a vCISO or GRC standpoint, this is your “operational foundation”—you can quickly map risks, define controls, and start producing defensible outputs for clients or regulators.

👉 My take: If you skip this step and jump straight into compliance-heavy frameworks, you’ll create “paper governance” without real risk visibility.

🇪🇺 If you touch EU markets (customers, users, or data)

Prioritize the EU AI Act immediately—even before anything else if exposure is high.

This is not optional. If your AI system falls into “high-risk,” you’re dealing with legal obligations, audits, and potential penalties.

👉 My take: This is the “hard boundary” framework. It defines what you must do, not what you should do.

Even U.S. companies often underestimate this—if your product scales, EU rules will reach you faster than expected.

🌍 When you want credibility, scale, or enterprise trust

Adopt ISO/IEC 42001 after you’ve operationalized risk (typically after NIST AI RMF).

ISO 42001 is where governance becomes institutionalized and auditable. It’s especially valuable if you:

- Sell to enterprises

- Need third-party assurance

- Want to productize your AI governance

👉 My take: This is your “trust multiplier.” It turns internal practices into something marketable and defensible.

🔑 Practical adoption sequence (what I recommend)

For most organizations (especially in the U.S.):

- Start with NIST AI RMF → build real risk visibility

- Overlay EU AI Act (if applicable) → ensure regulatory compliance

- Formalize with ISO 42001 → scale, certify, and monetize trust
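The sequencing above is essentially a small decision rule. As a minimal sketch, assuming an organization can be described by three yes/no questions, it might look like this in Python. The `OrgProfile` fields and the `adoption_sequence` function are hypothetical illustrations, not part of NIST AI RMF, the EU AI Act, or ISO/IEC 42001.

```python
from dataclasses import dataclass


@dataclass
class OrgProfile:
    """Hypothetical inputs; field names are illustrative, not from any framework."""
    eu_exposure: bool       # EU customers, users, or data
    eu_exposure_high: bool  # e.g., a likely high-risk AI system offered in the EU
    needs_assurance: bool   # sells to enterprises or needs third-party assurance


def adoption_sequence(profile: OrgProfile) -> list[str]:
    """Return frameworks in the order this post recommends adopting them."""
    # Default starting point: the operational foundation for risk visibility
    steps = ["NIST AI RMF"]
    if profile.eu_exposure:
        if profile.eu_exposure_high:
            # High EU exposure: the legal "hard boundary" comes before anything else
            steps.insert(0, "EU AI Act")
        else:
            # Otherwise, overlay the EU AI Act once risks are visible
            steps.append("EU AI Act")
    if profile.needs_assurance:
        # Formalize last: certify once practices are already operational
        steps.append("ISO/IEC 42001")
    return steps


print(adoption_sequence(OrgProfile(eu_exposure=True, eu_exposure_high=False, needs_assurance=True)))
# ['NIST AI RMF', 'EU AI Act', 'ISO/IEC 42001']
```

In this sketch, an organization with moderate EU exposure that sells to enterprises gets NIST AI RMF, then the EU AI Act, then ISO/IEC 42001. Setting `eu_exposure_high=True` moves the EU AI Act to the front, mirroring the advice to prioritize it when exposure is high.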

💡 My blunt perspective

- If you start with ISO 42001 → you risk over-engineering too early

- If you ignore EU AI Act → you risk legal exposure

- If you skip NIST AI RMF → you risk fake governance (compliance theater)

Comparing ISO 27001 with ISO 42001

ISO/IEC 42001 builds directly on the structure of ISO/IEC 27001, so at first glance the two frameworks look similar in their clauses, risk assessment approach, and use of Annex A controls. However, their intent and scope diverge significantly. ISO 27001 is inward-focused, centered on protecting an organization’s information assets and managing risks that could impact the business. ISO/IEC 42001, in contrast, is outward-looking: it extends accountability beyond the organization to the impacts of AI use, both negative and positive, on society, individuals, and other stakeholders. It also shifts the emphasis from information protection alone to the governance of AI-driven products and services, which in practice makes it closer to a quality management system.

Key differences include the introduction of AI system impact assessments (evaluating societal harms and benefits), a distinct set of AI-specific Annex A controls, and additional guidance annexes. While many governance elements (e.g., audits, nonconformities) remain structurally similar, ISO 42001 requires deeper scrutiny of ethical, societal, and product-level risks. The result is a standard that is broader, more externally accountable, and more closely aligned with AI lifecycle management than ISO 27001.

At DISC InfoSec:

👉 “We move you from AI chaos → risk visibility → compliance → certification”



At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.