Governance, Risk, and Compliance (GRC) — A Practical Summary

What GRC Means in Practice

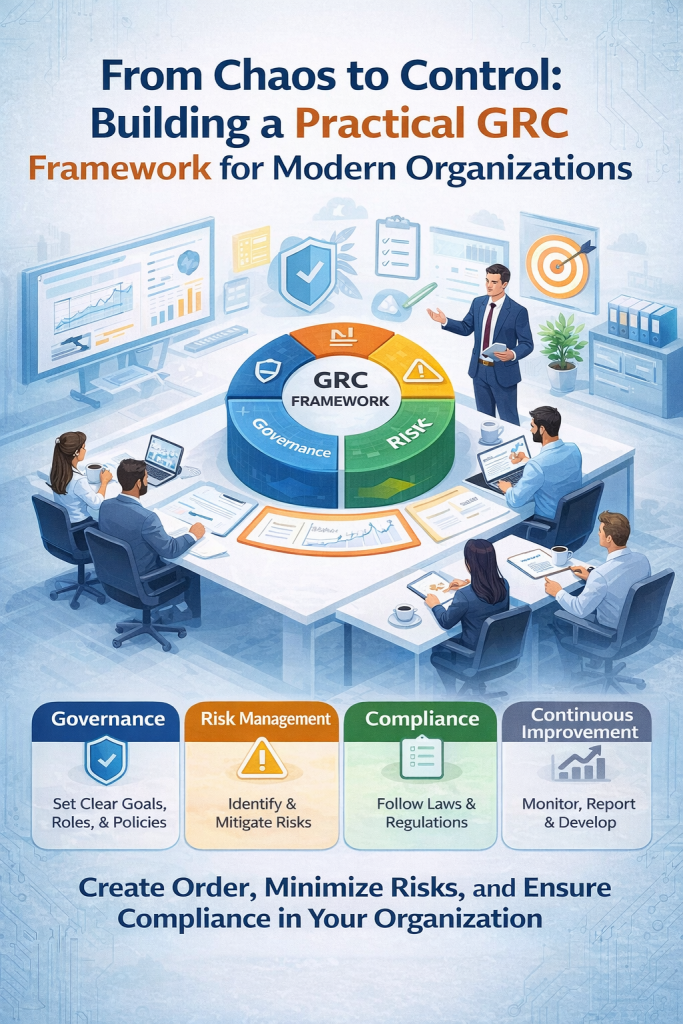

A Governance, Risk, and Compliance (GRC) framework is a structured way to bring order, accountability, and consistency to how an organization manages decisions, risks, and regulatory obligations. Governance sets the direction by defining goals, leadership responsibilities, and policies so everyone understands their role and the company’s priorities. Risk management focuses on identifying threats—such as cyber incidents, operational failures, or legal exposure—and reducing their likelihood or impact. Compliance ensures the organization follows laws, standards, and internal rules. Together, these elements create an integrated system that improves oversight, reduces surprises, and builds trust with stakeholders.

GRC Success Metrics and Value

A mature GRC program improves risk visibility and decision-making by linking priorities to measurable value and protection outcomes. Organizations with effective GRC frameworks typically see stronger alignment between business goals and risk controls, better resource prioritization, and improved protection against operational and regulatory failures. By tracking key performance indicators (KPIs) and simple formulas, such as a risk score (likelihood × impact) and a control effectiveness measure, leaders can quantify how well the organization is managing uncertainty and compliance. This data-driven approach helps convert abstract risk into actionable insights.
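As a rough illustration of the risk scoring formula mentioned above, the sketch below computes likelihood × impact for a single risk. Several details are assumptions rather than anything this summary prescribes: the 1 to 5 rating scales, the definition of control effectiveness as the share of control tests passed, and the residual-risk convention of inherent score × (1 − effectiveness). The names `Risk`, `control_effectiveness`, and `residual_score` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    def inherent_score(self) -> int:
        """Risk score as described in the text: likelihood x impact."""
        for rating in (self.likelihood, self.impact):
            if not 1 <= rating <= 5:
                raise ValueError("ratings must be between 1 and 5")
        return self.likelihood * self.impact


def control_effectiveness(passed_tests: int, total_tests: int) -> float:
    """Assumed definition: fraction of control tests that passed."""
    if total_tests <= 0 or not 0 <= passed_tests <= total_tests:
        raise ValueError("need 0 <= passed_tests <= total_tests and total_tests > 0")
    return passed_tests / total_tests


def residual_score(risk: Risk, effectiveness: float) -> float:
    """Assumed convention: residual = inherent x (1 - effectiveness)."""
    if not 0.0 <= effectiveness <= 1.0:
        raise ValueError("effectiveness must be between 0 and 1")
    return risk.inherent_score() * (1.0 - effectiveness)


if __name__ == "__main__":
    phishing = Risk("Phishing-led credential theft", likelihood=4, impact=5)
    eff = control_effectiveness(passed_tests=9, total_tests=12)
    print(phishing.inherent_score(), eff, residual_score(phishing, eff))
```

A multiplicative score is easy to explain to leadership, but it treats a frequent minor risk and a rare severe one as equal whenever the products match, so many teams review the two ratings alongside the score.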

Step-by-Step GRC Framework

Building a GRC framework follows a logical progression. It starts with establishing a governance structure and charter that defines authority and accountability. Next comes defining risk appetite—how much risk the organization is willing to accept. A policy framework is then developed to translate strategy into practical rules. Regulatory mapping ensures all legal and industry requirements are addressed. Risk identification and assessment help prioritize threats, followed by implementing appropriate controls. Continuous monitoring through key risk indicators (KRIs), reporting dashboards, and feedback loops supports ongoing improvement. The process is cyclical: document, monitor, and refine regularly to keep the framework relevant.
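The continuous-monitoring step can be pictured as comparing each key risk indicator against agreed thresholds. The sketch below is one possible shape, assuming that higher KRI values are worse and that each KRI has a warning and a critical threshold derived from the organization's risk appetite. The `KRI` class, the green/amber/red labels, and the sample indicators are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KRI:
    name: str
    value: float     # latest measurement
    warning: float   # assumed: amber at or above this level
    critical: float  # assumed: red at or above this level


def kri_status(kri: KRI) -> str:
    """Classify a KRI, assuming higher values mean more risk."""
    if kri.warning > kri.critical:
        raise ValueError("warning threshold must not exceed critical threshold")
    if kri.value >= kri.critical:
        return "red"
    if kri.value >= kri.warning:
        return "amber"
    return "green"


def breaches(kris: list[KRI]) -> list[tuple[str, str]]:
    """Return (name, status) for every KRI that is not green."""
    return [(k.name, kri_status(k)) for k in kris if kri_status(k) != "green"]


if __name__ == "__main__":
    dashboard = [
        KRI("Overdue critical patches", value=12, warning=5, critical=10),
        KRI("Open audit findings", value=3, warning=5, critical=10),
        KRI("Policy exceptions granted", value=7, warning=5, critical=10),
    ]
    for name, status in breaches(dashboard):
        print(f"{status.upper()}: {name}")
```

Indicators where a lower value is worse (for example, training completion rate) would need the comparison inverted; that case is left out to keep the sketch short.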

Core GRC Components

At the center of a GRC framework is an integrated system that connects governance, risk management, and compliance activities. Core components include strategy and governance oversight, risk assessment and management processes, compliance tracking, internal controls, and audit and assurance functions. Supporting artifacts—such as a GRC charter, risk register, policy library, control matrix, compliance tracker, and audit reports—provide the documentation backbone. Together, these components ensure that risks are systematically identified, controls are enforced, and compliance is continuously validated.
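One way to see how these artifacts connect is to treat the risk register and the control matrix as linked tables and check that every registered risk is addressed by at least one control. The sketch below assumes a register keyed by risk ID and a matrix that maps each control ID to the set of risk IDs it mitigates. The identifiers, field layout, and function names are illustrative assumptions, not a standard schema.

```python
def uncovered_risks(
    risk_register: dict[str, str],
    control_matrix: dict[str, set[str]],
) -> list[str]:
    """Risk IDs in the register that no control in the matrix claims to mitigate."""
    covered: set[str] = set().union(*control_matrix.values())
    return sorted(rid for rid in risk_register if rid not in covered)


def orphan_references(
    risk_register: dict[str, str],
    control_matrix: dict[str, set[str]],
) -> dict[str, list[str]]:
    """Controls that reference risk IDs missing from the register."""
    result: dict[str, list[str]] = {}
    for control_id, risk_ids in control_matrix.items():
        unknown = sorted(risk_ids - risk_register.keys())
        if unknown:
            result[control_id] = unknown
    return result


if __name__ == "__main__":
    # Hypothetical register: risk ID -> description
    register = {
        "R-001": "Ransomware outage",
        "R-002": "Vendor data breach",
        "R-003": "Missed regulatory filing",
    }
    # Hypothetical control matrix: control ID -> risks it mitigates
    matrix = {
        "C-010": {"R-001"},
        "C-011": {"R-001", "R-002"},
        "C-012": {"R-009"},  # points at a risk that is not registered
    }
    print("Uncovered:", uncovered_risks(register, matrix))
    print("Orphans:", orphan_references(register, matrix))
```

Checks like these are the kind of cross-referencing an audit and assurance function might run periodically; a real GRC tool would typically also track owners, review dates, and evidence.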

Essential Formulas, KPIs, and Documentation

Effective GRC relies on measurable indicators and structured documentation. KPIs and formulas for performance, risk exposure, and control effectiveness allow leaders to monitor progress objectively. Essential document outputs, such as risk registers, policy libraries, and control matrices, create transparency and consistency. A clear approval workflow (draft → review → approval → implementation → monitoring → improvement) ensures accountability and continuous oversight. These mechanisms transform GRC from a theoretical model into an operational discipline.
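The approval workflow above can be modeled as a small state machine in which a document moves one stage at a time and each transition is recorded for accountability. In the sketch below, the wrap-around from improvement back to draft is an assumption that reflects the cyclical nature of the process, and `Stage`, `PolicyDocument`, and the actor names are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    REVIEW = "review"
    APPROVAL = "approval"
    IMPLEMENTATION = "implementation"
    MONITORING = "monitoring"
    IMPROVEMENT = "improvement"


# Enum members iterate in definition order, which matches the workflow order.
ORDER = list(Stage)


def next_stage(current: Stage) -> Stage:
    """Return the following stage; improvement loops back to draft (assumed)."""
    return ORDER[(ORDER.index(current) + 1) % len(ORDER)]


@dataclass
class PolicyDocument:
    title: str
    stage: Stage = Stage.DRAFT
    # Audit trail of (from_stage, to_stage, actor) for each transition.
    history: list[tuple[Stage, Stage, str]] = field(default_factory=list)

    def advance(self, actor: str) -> Stage:
        """Move to the next stage and record who triggered the transition."""
        if not actor:
            raise ValueError("an actor is required for accountability")
        new_stage = next_stage(self.stage)
        self.history.append((self.stage, new_stage, actor))
        self.stage = new_stage
        return new_stage


if __name__ == "__main__":
    policy = PolicyDocument("Access Control Policy")
    policy.advance("policy.author")
    policy.advance("risk.reviewer")
    for before, after, actor in policy.history:
        print(f"{before.value} -> {after.value} by {actor}")
```

Allowing only single forward steps keeps the audit trail simple; a fuller model would probably let a reviewer send a document back to draft.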

Common GRC Mistakes

Many organizations struggle with GRC because of cultural and structural gaps. Weak leadership commitment, unclear risk appetite, inconsistent policy enforcement, and lack of continuous monitoring are common pitfalls. Without executive support and regular review, frameworks become paperwork exercises rather than living systems. Avoiding these mistakes requires strong tone at the top, simple and well-documented processes, and frequent reassessment.

Final Perspective

A well-designed GRC framework acts as a stabilizing force for an organization. It clarifies governance, reduces risk exposure, strengthens compliance posture, and supports sustainable performance. By keeping the framework simple, documented, and continuously reviewed, companies can transform GRC into a practical operating system that guides everyday decisions rather than a one-time compliance project.
