PCAA

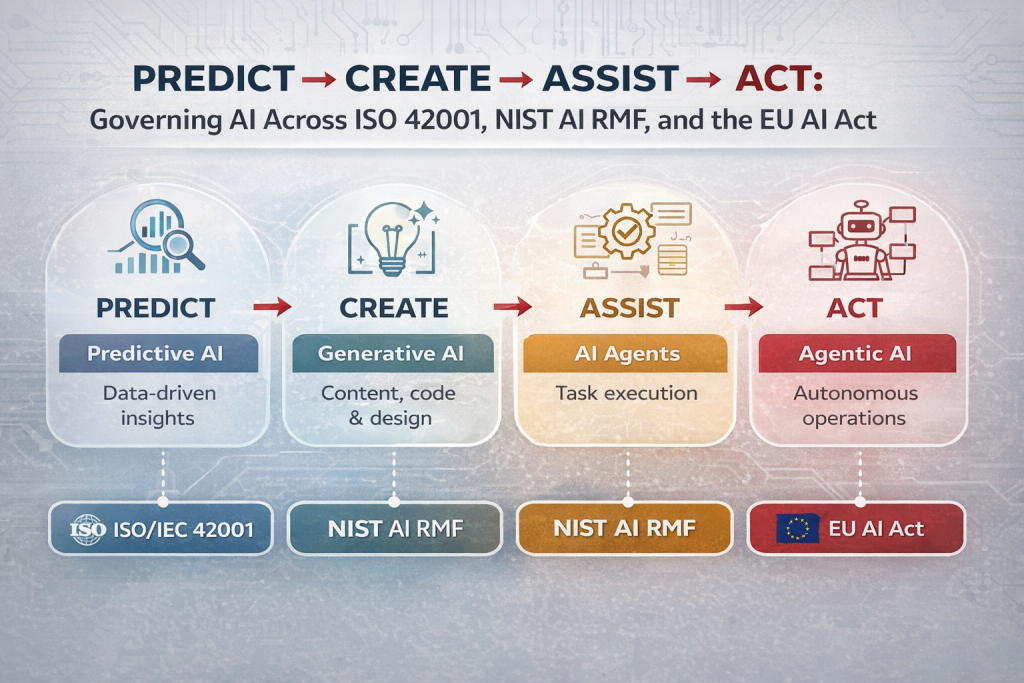

1️⃣ Predictive AI – Predict

Predictive AI is the most mature and widely adopted form of AI. It analyzes historical data to identify patterns and forecast what is likely to happen next. Organizations use it to anticipate customer demand, detect fraud, identify anomalies, and support risk-based decisions. The goal isn’t automation for its own sake, but faster and more accurate decision-making, with humans still in control of final actions.

2️⃣ Generative AI – Create

Generative AI goes beyond prediction and focuses on creation. It generates text, code, images, designs, and insights based on prompts. Rather than replacing people, it amplifies human productivity, helping teams draft content, write software, analyze information, and communicate faster. Its core value lies in increasing output velocity while keeping humans responsible for judgment and accountability.

3️⃣ AI Agents – Assist

AI Agents add execution to intelligence. These systems are connected to enterprise tools, applications, and internal data sources. Instead of only suggesting actions, they can perform tasks such as retrieving data, updating systems, responding to requests, or coordinating workflows. AI Agents expand human capacity by handling repetitive or multi-step tasks, giving teams faster access to knowledge and offloading routine work at scale.

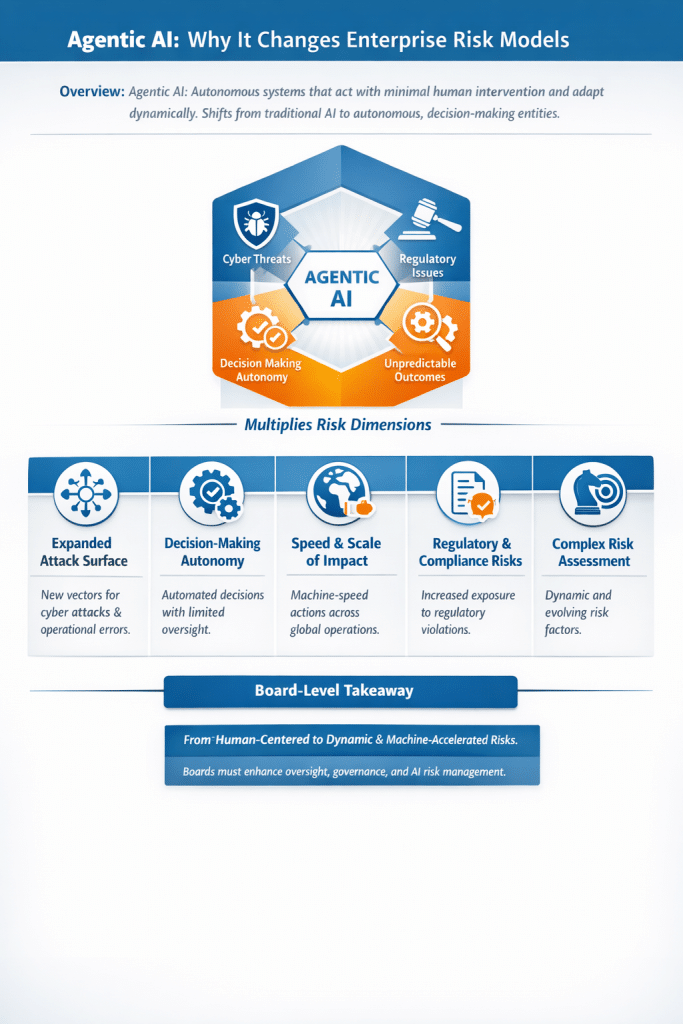

4️⃣ Agentic AI – Act

Agentic AI represents the frontier of AI adoption. It orchestrates multiple agents to run workflows end-to-end with minimal human intervention. These systems can plan, delegate, verify, and complete complex processes across tools and teams. At this stage, AI evolves from a tool into a digital team member, enabling true process transformation, not just efficiency gains.

Simple decision framework

- Need faster decisions? → Predictive AI

- Need more output? → Generative AI

- Need task execution and assistance? → AI Agents

- Need end-to-end transformation? → Agentic AI
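
For teams that want this framework in an intake form or triage script, it can be written as a simple lookup. The sketch below is illustrative only: the key names (`faster_decisions`, `more_output`, and so on) and the `recommend` function are hypothetical labels for the four needs listed above, not part of any standard.

```python
from enum import Enum


class AIType(Enum):
    PREDICTIVE = "Predictive AI"
    GENERATIVE = "Generative AI"
    AGENTS = "AI Agents"
    AGENTIC = "Agentic AI"


# Hypothetical keys naming the four needs from the decision framework.
NEED_TO_AI_TYPE = {
    "faster_decisions": AIType.PREDICTIVE,
    "more_output": AIType.GENERATIVE,
    "task_execution": AIType.AGENTS,
    "end_to_end_transformation": AIType.AGENTIC,
}


def recommend(need: str) -> AIType:
    """Return the AI type the framework pairs with a given business need."""
    try:
        return NEED_TO_AI_TYPE[need]
    except KeyError:
        # Unknown needs are rejected rather than guessed at.
        raise ValueError(f"Unknown need: {need!r}") from None


if __name__ == "__main__":
    print(recommend("more_output").value)
```

In practice a single initiative often has more than one need, so a real intake process would likely return several types rather than one.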

The four AI types (Predict → Create → Assist → Act) map to ISO/IEC 42001, the NIST AI RMF, and the EU AI Act as follows.

The mapping is intended for direct reuse in AI governance decks, risk registers, and client assessments.

AI Types Mapped to ISO 42001, NIST AI RMF & EU AI Act

1️⃣ Predictive AI (Predict)

Forecasting, scoring, classification, anomaly detection

ISO/IEC 42001 (AI Management System)

- Clause 4–5: Organizational context, leadership accountability for AI outcomes

- Clause 6: AI risk assessment (bias, drift, fairness)

- Clause 8: Operational controls for model lifecycle management

- Clause 9: Performance evaluation and monitoring

👉 Focus: Data quality, bias management, model drift, transparency

NIST AI RMF

- Govern: Define risk tolerance for AI-assisted decisions

- Map: Identify intended use and impact of predictions

- Measure: Test bias, accuracy, robustness

- Manage: Monitor and correct model drift

👉 Predictive AI is primarily a Measure + Manage problem.
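
To make the "monitor and correct model drift" step concrete, here is a minimal sketch of one common drift check, the Population Stability Index (PSI). PSI is our choice for illustration; neither ISO/IEC 42001 nor the NIST AI RMF prescribes a specific metric. The function name `psi`, the bin count, and the sample data are assumptions.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between baseline scores and recent scores.

    Assumes both samples are non-empty. Bins are equal-width over the
    baseline range; recent values outside that range go to the edge bins.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]          # scores at validation time
    recent = [min(x + 0.3, 0.99) for x in baseline]   # scores after a shift
    print(round(psi(baseline, recent), 3))
```

A widely used rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating. Those cut-offs are conventions, not requirements of any framework, and should be set against the risk tolerance defined under Govern.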

EU AI Act

- Often classified as High-Risk AI if used in:

  - Credit scoring

  - Hiring & HR decisions

  - Insurance, healthcare, or public services

Key obligations:

- Data governance and bias mitigation

- Human oversight

- Accuracy, robustness, and documentation

2️⃣ Generative AI (Create)

Text, code, image, design, content generation

ISO/IEC 42001

- Clause 5: AI policy and responsible AI principles

- Clause 6: Risk treatment for misuse and data leakage

- Clause 8: Controls for prompt handling and output management

- Annex A: Transparency and explainability controls

👉 Focus: Responsible use, content risk, data leakage

NIST AI RMF

- Govern: Acceptable use and ethical guidelines

- Map: Identify misuse scenarios (prompt injection, hallucinations)

- Measure: Output quality, harmful content, data exposure

- Manage: Guardrails, monitoring, user training

👉 Generative AI heavily stresses Govern + Map.
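
One guardrail for the data exposure risk above is to redact obvious sensitive values from a prompt before it leaves the organization. The sketch below is a minimal illustration, not a complete data loss prevention control: the two regular expressions and the `redact` function are assumptions, and a real deployment would need a vetted and much broader pattern set.

```python
import re
from typing import List, Tuple

# Illustrative patterns only (assumption): email addresses and 13 to 16 digit card-like numbers.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(prompt: str) -> Tuple[str, List[str]]:
    """Replace matches with a placeholder and report which categories were found."""
    findings = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            findings.append(label)
    return prompt, findings


if __name__ == "__main__":
    text, found = redact("Contact jane.doe@example.com, card 4111 1111 1111 1111.")
    print(text)
    print(found)
```

Returning the list of findings alongside the cleaned prompt lets the same check feed monitoring and user training, both of which sit under Manage.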

EU AI Act

- The underlying models are typically regulated as General-Purpose AI (GPAI) models, or as GPAI models with systemic risk

Key obligations:

- Transparency (AI-generated content disclosure)

- Training data summaries

- Risk mitigation for downstream use

⚠️ Stricter rules apply if used in regulated decision-making contexts.

3️⃣ AI Agents (Assist)

Task execution, tool usage, system updates

ISO/IEC 42001

- Clause 6: Expanded risk assessment for automated actions

- Clause 7: Competence and awareness (human oversight)

- Clause 8: Operational boundaries and authority controls

👉 Focus: Authority limits, access control, traceability

NIST AI RMF

- Govern: Define scope of agent autonomy

- Map: Identify systems, APIs, and data agents can access

- Measure: Monitor behavior, execution accuracy

- Manage: Kill switches, rollback, escalation paths

👉 AI Agents sit squarely in Manage territory.
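
The authority limits, kill switch, and escalation path described above can be pictured as a single gate that every agent action passes through. This is a minimal sketch under stated assumptions: the `ActionGate` class, its field names, and the example action names are hypothetical, and rollback is left out for brevity.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Set


@dataclass
class ActionGate:
    allowed_actions: Set[str]     # actions the agent may run on its own
    escalate_actions: Set[str]    # actions that need a human decision first
    halted: bool = False          # kill switch: when True, nothing runs
    audit_log: List[dict] = field(default_factory=list)

    def decide(self, agent_id: str, action: str) -> str:
        """Return 'allowed', 'escalated', or 'blocked' and record the decision."""
        if self.halted:
            verdict = "blocked"
        elif action in self.allowed_actions:
            verdict = "allowed"
        elif action in self.escalate_actions:
            verdict = "escalated"
        else:
            verdict = "blocked"   # default-deny for anything not listed
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "action": action,
            "verdict": verdict,
        })
        return verdict


if __name__ == "__main__":
    gate = ActionGate({"read_ticket"}, {"issue_refund"})
    print(gate.decide("agent-1", "read_ticket"))
    print(gate.decide("agent-1", "issue_refund"))
```

The default-deny branch is the important design choice: an action the governance team never considered is blocked and logged rather than silently executed, which also supports the logging and traceability expectations discussed for the EU AI Act.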

EU AI Act

- Risk classification depends on what the agent does, not the technology itself.

Agents are more likely to be treated as High-Risk AI when, within a use case the Act lists as high-risk, they:

- Modify records

- Trigger transactions

- Influence regulated decisions

Key obligations:

- Human oversight

- Logging and traceability

- Risk controls on automation scope

4️⃣ Agentic AI (Act)

End-to-end workflows, autonomous decision chains

ISO/IEC 42001

- Clause 5: Top management accountability

- Clause 6: Enterprise-level AI risk management

- Clause 8: Strong operational guardrails

- Clause 10: Continuous improvement and corrective action

👉 Focus: Autonomy governance, accountability, systemic risk

NIST AI RMF

- Govern: Board-level AI risk ownership

- Map: End-to-end workflow impact analysis

- Measure: Continuous monitoring of outcomes

- Manage: Fail-safe mechanisms and incident response

👉 Agentic AI requires full-lifecycle RMF maturity.
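
A fail-safe for an end-to-end workflow can be as simple as verifying each step before the next one starts and stopping the chain on the first failed check. The sketch below assumes each agent step is a plain function over a shared state dictionary. `Step`, `run_workflow`, and the example step names are hypothetical and do not come from any particular orchestration framework.

```python
from typing import Callable, List, NamedTuple


class Step(NamedTuple):
    name: str
    run: Callable[[dict], dict]      # hypothetical agent call: takes and returns workflow state
    verify: Callable[[dict], bool]   # independent check on the step's result


def run_workflow(steps: List[Step], state: dict) -> dict:
    """Run steps in order; halt and report as soon as a verification fails."""
    completed = []
    for step in steps:
        candidate = step.run(dict(state))   # work on a copy so a bad step cannot alter accepted state
        if not step.verify(candidate):
            # Fail-safe: stop the chain and hand the last good state to a human.
            return {"status": "halted", "failed_step": step.name,
                    "completed": completed, "state": state}
        state = candidate
        completed.append(step.name)
    return {"status": "done", "failed_step": None,
            "completed": completed, "state": state}


if __name__ == "__main__":
    steps = [
        Step("draft_invoice", lambda s: {**s, "amount": 5000}, lambda s: "amount" in s),
        Step("approve_payment", lambda s: {**s, "approved": True},
             lambda s: s["amount"] <= 1000),   # example policy limit (assumption)
        Step("send_payment", lambda s: {**s, "sent": True}, lambda s: s["sent"]),
    ]
    print(run_workflow(steps, {}))
```

The returned record of which step failed and which steps completed is the starting point for incident response, and it gives continuous monitoring something concrete to count.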

EU AI Act

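- Frequently High-Risk AI when the production workflows it runs fall within the Act's high-risk use cases. Classification still depends on the use case, not on the degree of autonomy.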

Strict requirements:

- Human-in-command oversight

- Full documentation and auditability

- Robustness, cybersecurity, and post-market monitoring

🚨 Highest regulatory exposure across all AI types.

Executive Summary (Board-Ready)

| AI Type | Governance Intensity | Regulatory Exposure |
|---|---|---|
| Predictive AI | Medium | Medium–High |
| Generative AI | Medium | Medium |
| AI Agents | High | High |
| Agentic AI | Very High | Very High |

Rule of thumb:

As AI moves from insight to action, governance must move from IT control to enterprise risk management.

📚 Training References – Learn Generative AI (Free)

Microsoft offers one of the strongest beginner-to-builder GenAI learning paths:

- Intro to Generative AI & LLMs: https://lnkd.in/g_4ENCvC

- Choosing the Right LLM: https://lnkd.in/gU2HU8JT

- Responsible AI: https://lnkd.in/g9EEfdnE


At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
