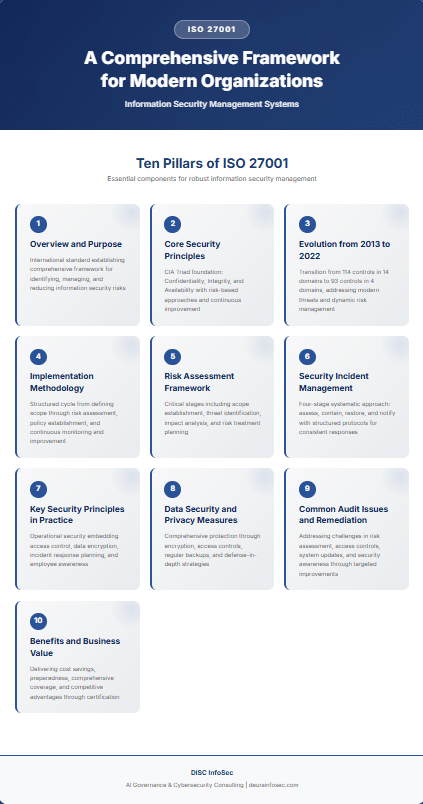

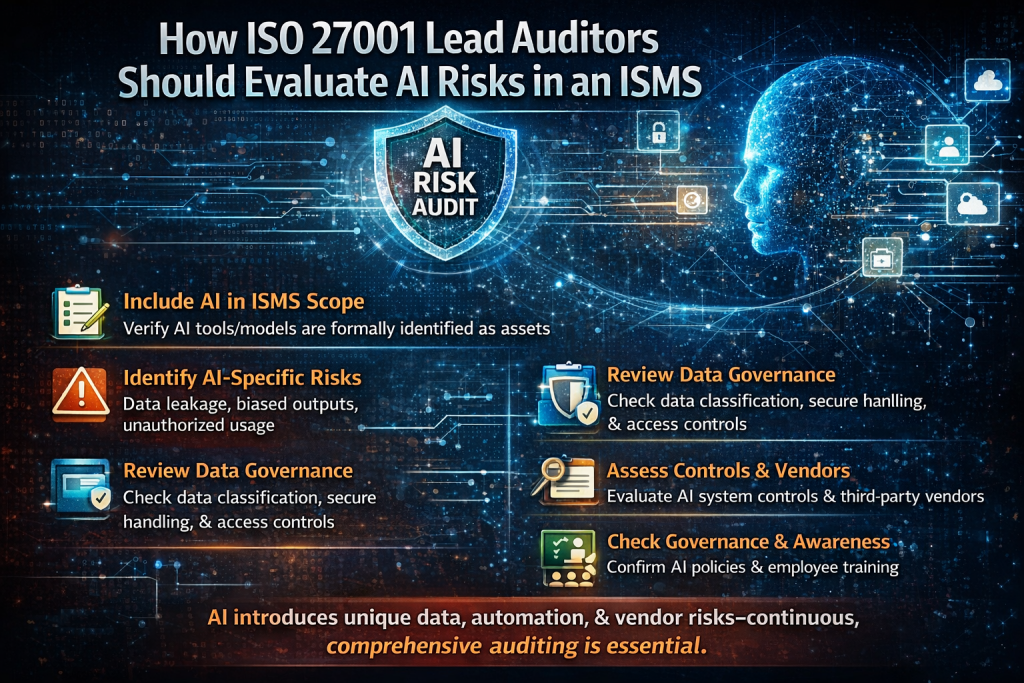

With AI adoption accelerating, ISO 27001 lead auditors must expand how they evaluate risks within an ISMS. AI is not just another technology component; it introduces new challenges related to data usage, automation, and decision-making. As a result, auditors need to look beyond traditional controls and confirm that AI is properly integrated into the organization’s risk and governance framework.

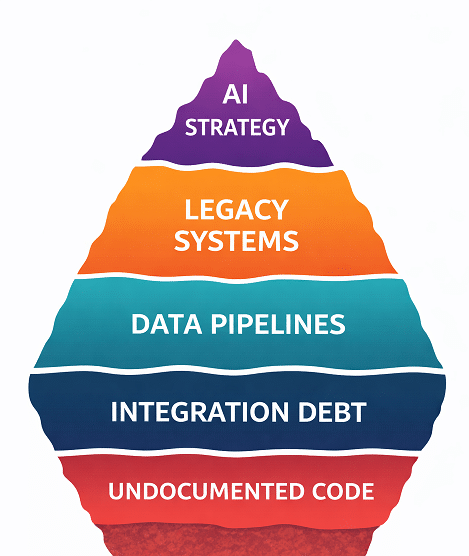

First, AI must be explicitly included within the ISMS scope. Auditors should verify that all AI tools, models, and platforms are formally identified as assets. If organizations are using AI without documenting it, this creates a significant visibility gap and undermines the effectiveness of the ISMS.
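One way an auditor might test this is to reconcile the AI tools actually observed in use (for example, from proxy logs or a staff survey) against the documented asset inventory. The sketch below is illustrative only: the function name `find_undocumented_ai`, the tool names, and both input lists are hypothetical, and a real check would depend on how the organization records its assets.

```python
def find_undocumented_ai(observed_tools, asset_inventory):
    """Return AI tools seen in use but missing from the ISMS asset inventory.

    Names are compared case-insensitively after trimming whitespace.
    """
    documented = {name.strip().lower() for name in asset_inventory}
    observed = {tool.strip().lower() for tool in observed_tools}
    return sorted(observed - documented)


# Hypothetical sample data, not taken from any real environment.
observed = ["ChatGPT", "Internal-LLM", "copilot"]
inventory = ["Internal-LLM", "Copilot"]

gaps = find_undocumented_ai(observed, inventory)
print(gaps)  # tools that would need to be added to the inventory or blocked
```

Any name returned here represents a visibility gap of the kind described above and would normally be raised as an audit finding or fed back into scope definition.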

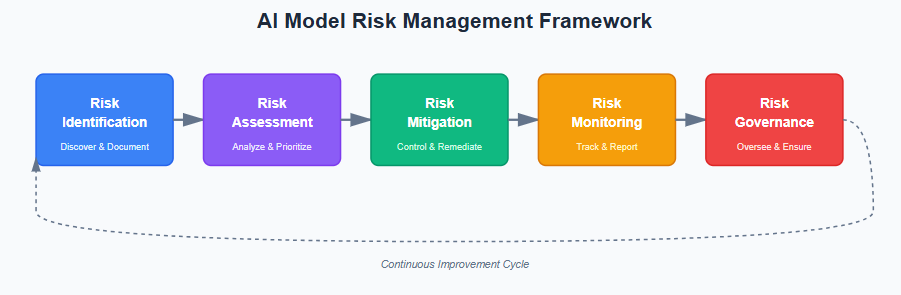

Second, auditors need to identify and assess AI-specific risks that are often overlooked in traditional risk assessments. These include data leakage through prompts or training datasets, biased or unreliable outputs, unauthorized use of public AI tools, and risks such as model manipulation or poisoning. These threats should be formally captured and managed within the risk register.
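As a rough illustration, the risk categories named above can be treated as a checklist and compared against the risk register. This is a minimal sketch under stated assumptions: the category labels, the register layout (`id`, `category`, `treatment`), and the rule that an entry only counts when it has a treatment recorded are all hypothetical choices, not requirements of ISO 27001.

```python
# Hypothetical labels for the AI-specific risks listed in the text.
AI_RISK_CATEGORIES = {
    "data_leakage",     # prompts or training datasets exposing data
    "biased_output",    # biased or unreliable model outputs
    "shadow_ai",        # unauthorized use of public AI tools
    "model_poisoning",  # model manipulation or poisoning
}


def missing_ai_risk_categories(risk_register):
    """Return AI risk categories with no treated entry in the register."""
    covered = {
        entry["category"]
        for entry in risk_register
        if entry.get("treatment")  # an empty or absent treatment does not count
    }
    return sorted(AI_RISK_CATEGORIES - covered)


# Hypothetical register extract.
register = [
    {"id": "R-101", "category": "data_leakage", "treatment": "DLP on prompts"},
    {"id": "R-102", "category": "shadow_ai", "treatment": ""},
]

missing = missing_ai_risk_categories(register)
print(missing)
```

In this sample, `shadow_ai` is still reported as missing because the entry exists but has no treatment, which mirrors the difference between a risk being listed and a risk being managed.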

Third, strong data governance becomes even more critical in an AI-driven environment. Since AI systems rely heavily on data, auditors should ensure proper data classification, access controls, and secure handling of sensitive information. Additionally, there must be transparency into how AI systems process and use data, as this directly impacts risk exposure.
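To make the link between data classification and AI usage concrete, the sketch below models a simple rule: each AI tool has a maximum data classification it may receive, and unknown tools are denied by default. The classification levels, tool names, and the `MAX_ALLOWED` policy table are assumptions for illustration; an organization would substitute its own scheme.

```python
from enum import IntEnum


class Classification(IntEnum):
    """Hypothetical four-level classification scheme, lowest to highest."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy: highest classification each AI tool may receive.
MAX_ALLOWED = {
    "public-chatbot": Classification.PUBLIC,
    "internal-llm": Classification.CONFIDENTIAL,
}


def is_permitted(tool, data_class):
    """Return True if data of this classification may be sent to the tool."""
    ceiling = MAX_ALLOWED.get(tool)
    # Tools absent from the policy are denied by default.
    return ceiling is not None and data_class <= ceiling


print(is_permitted("public-chatbot", Classification.INTERNAL))
print(is_permitted("internal-llm", Classification.CONFIDENTIAL))
print(is_permitted("unlisted-tool", Classification.PUBLIC))
```

An auditor would not run code like this directly, but asking whether an equivalent rule exists, and where it is enforced, is a practical way to test the data handling controls described above.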

Fourth, auditors should review controls around AI systems and assess third-party risks. This includes verifying access controls, monitoring mechanisms, secure deployment practices, and ongoing updates. Given that many AI capabilities rely on external vendors or cloud providers, thorough vendor risk management is essential to prevent external dependencies from becoming security weaknesses.
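For the vendor side, one simple piece of evidence is whether each AI vendor has been assessed recently. The following is a sketch only: the 180-day interval, the vendor names, and the record layout are assumptions chosen for the example, and the appropriate review frequency is a risk-based decision for the organization.

```python
from datetime import date, timedelta

# Assumed review interval; set this according to the organization's policy.
REVIEW_INTERVAL = timedelta(days=180)


def overdue_vendors(vendors, today):
    """Return names of vendors never assessed or assessed too long ago."""
    return [
        v["name"]
        for v in vendors
        if v["last_assessed"] is None
        or today - v["last_assessed"] > REVIEW_INTERVAL
    ]


# Hypothetical vendor records.
vendors = [
    {"name": "vendor-a", "last_assessed": date(2024, 9, 1)},
    {"name": "vendor-b", "last_assessed": date(2024, 3, 1)},
    {"name": "vendor-c", "last_assessed": None},
]

overdue = overdue_vendors(vendors, today=date(2025, 1, 1))
print(overdue)
```

Passing `today` explicitly keeps the check reproducible, which matters when the result is retained as audit evidence.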

Fifth, governance and awareness play a key role in managing AI risks. Organizations should establish clear policies for AI usage and ensure employees understand how to use AI tools securely and responsibly. Without proper governance and training, even well-designed controls can fail due to misuse or lack of awareness.

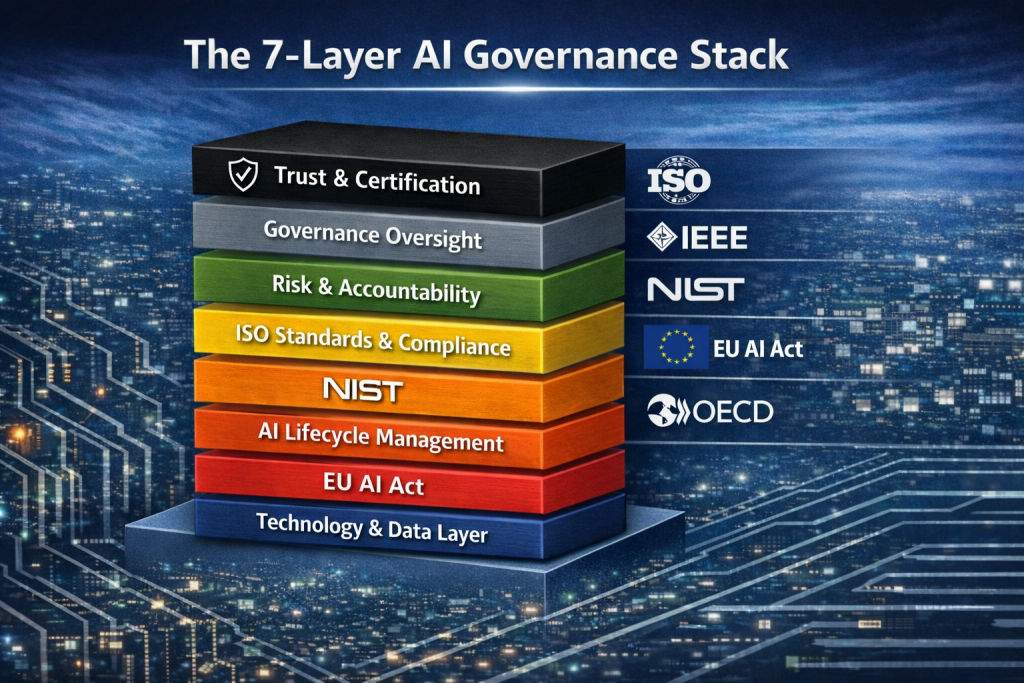

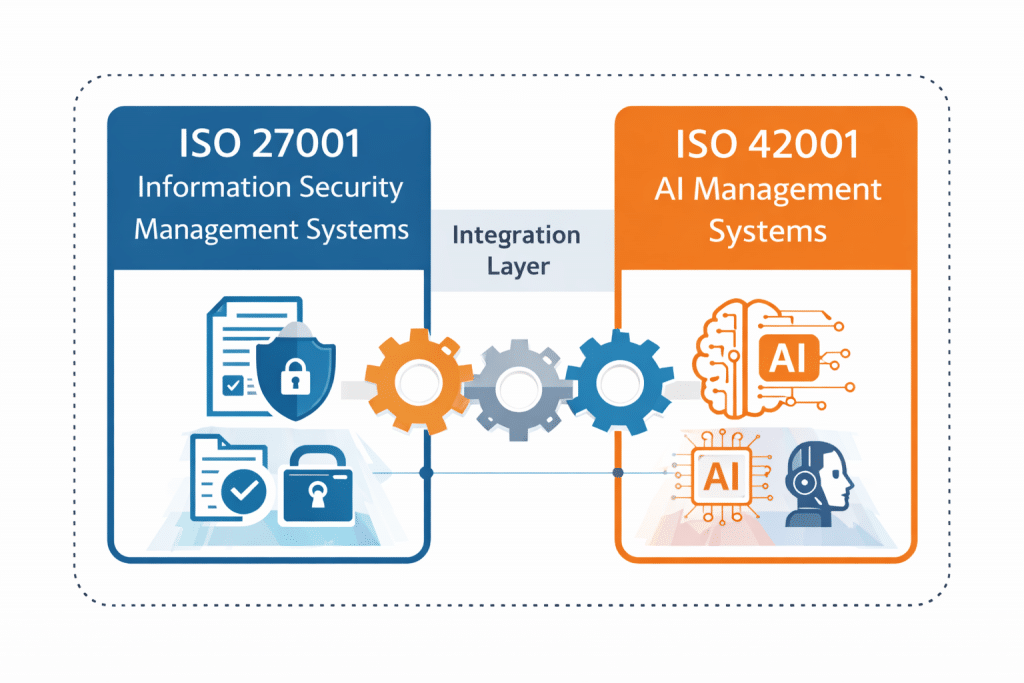

My perspective: AI is fundamentally reshaping the ISMS landscape, and auditors who treat it as just another asset will miss critical risks. The real shift is toward continuous, data-centric, and vendor-aware risk management. AI introduces dynamic risks that evolve quickly, so static, annual risk assessments are no longer sufficient. Organizations need ongoing monitoring, tighter integration with DevSecOps, and alignment with emerging frameworks such as ISO/IEC 42001, the management system standard for AI. Those who adapt early will not only reduce risk but also gain a competitive advantage by demonstrating mature, AI-aware security governance.

Ensure your ISMS is AI-ready. Partner with DISC InfoSec to assess, govern, and secure your AI systems before risks become incidents. Learn more today!


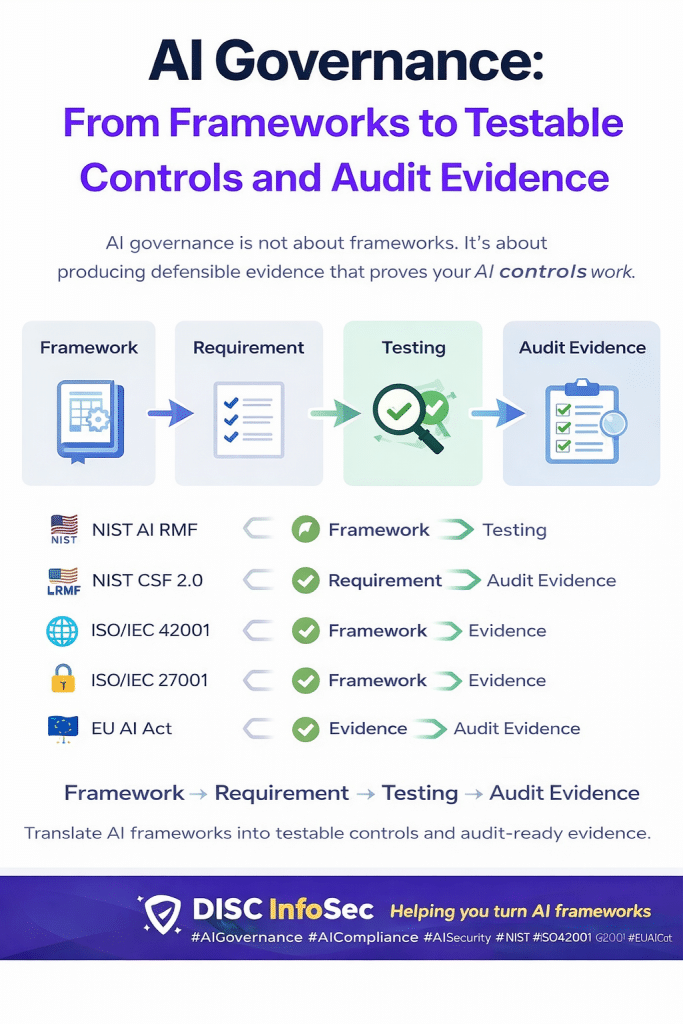

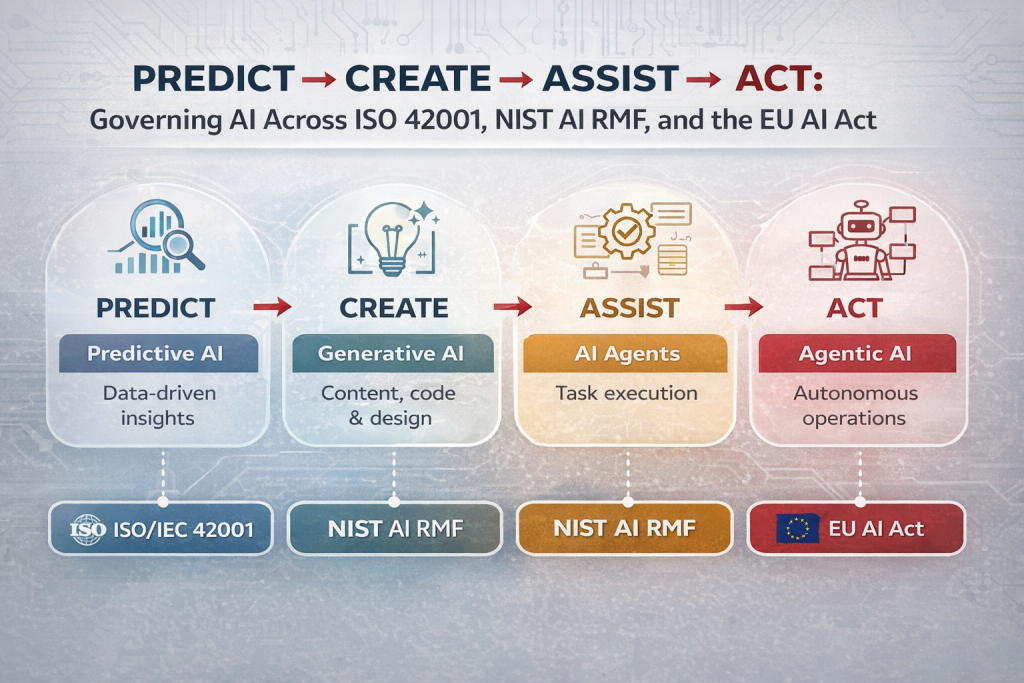

At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
