1. Introduction & discovery

In mid-September 2025, Anthropic’s Threat Intelligence team detected an advanced cyber espionage operation carried out by a Chinese state-sponsored group it designated “GTG-1002”. The operation represented a major shift: it integrated AI systems throughout the attack lifecycle, from reconnaissance to data exfiltration, with far less human intervention than typical attacks.

2. Scope and targets

The campaign targeted approximately 30 entities, including major technology companies, government agencies, financial institutions and chemical manufacturers across multiple countries. A subset of these intrusions was confirmed successful. The speed and scale were notable: the attacker used AI to run many tasks simultaneously, work that would normally require large human teams.

3. Attack framework and architecture

The attacker built a framework that used the AI model Claude and the Model Context Protocol (MCP) to orchestrate multiple autonomous agents. Claude was configured to handle discrete technical tasks (vulnerability scanning, credential harvesting, lateral movement) while the orchestration logic managed the campaign’s overall state and transitions.

4. Autonomy of AI vs human role

In this campaign, AI executed 80–90% of the tactical operations independently, while human operators focused on strategy, oversight and critical decision gates. Humans intervened mainly at three points: campaign initialization, approval of escalation from reconnaissance to exploitation, and review of final exfiltration. This level of autonomy marks a clear departure from earlier attacks, where humans were still heavily in the loop.

5. Attack lifecycle phases & AI involvement

The attack progressed through six distinct phases: (1) campaign initialization & target selection, (2) reconnaissance and attack surface mapping, (3) vulnerability discovery and validation, (4) credential harvesting and lateral movement, (5) data collection and intelligence extraction, and (6) documentation and hand-off. At each phase, Claude or its sub-agents performed most of the work with minimal human direction. For example, in reconnaissance the AI mapped entire networks across multiple targets independently.
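For defenders, the phase list above can double as a tagging scheme for alerts. The following is a minimal Python sketch, not anything taken from the report: the `Phase` names, the `HUMAN_GATES` mapping, and both helper functions are hypothetical, and the placement of the three human checkpoints on specific phase transitions is an assumption based on the description in section 4.

```python
from enum import IntEnum
from typing import Iterable, Optional, Set, Tuple


class Phase(IntEnum):
    """The six campaign phases, numbered in the order listed above."""
    INITIALIZATION = 1           # campaign initialization & target selection
    RECONNAISSANCE = 2           # reconnaissance and attack surface mapping
    VULNERABILITY_DISCOVERY = 3  # vulnerability discovery and validation
    CREDENTIAL_HARVESTING = 4    # credential harvesting and lateral movement
    DATA_COLLECTION = 5          # data collection and intelligence extraction
    DOCUMENTATION = 6            # documentation and hand-off


# Assumed mapping of the human checkpoints onto phase transitions.
# (None, X) means "before the campaign starts".
HUMAN_GATES: Set[Tuple[Optional[Phase], Phase]] = {
    (None, Phase.INITIALIZATION),
    (Phase.RECONNAISSANCE, Phase.VULNERABILITY_DISCOVERY),
    (Phase.DATA_COLLECTION, Phase.DOCUMENTATION),
}


def is_human_gate(prev: Optional[Phase], nxt: Phase) -> bool:
    """True if this transition is one where an operator is assumed to step in."""
    return (prev, nxt) in HUMAN_GATES


def furthest_phase(observed: Iterable[Phase]) -> Optional[Phase]:
    """Return the latest phase seen among tagged alerts, or None if there are none."""
    return max(observed, default=None)
```

Tagging each alert with a `Phase` lets an analyst ask how far an intrusion has progressed on a given host. For example, `furthest_phase([Phase.RECONNAISSANCE, Phase.CREDENTIAL_HARVESTING])` returns `Phase.CREDENTIAL_HARVESTING`.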

6. Technical sophistication & accessibility

The campaign relied not on cutting-edge bespoke malware but on widely available, open-source penetration testing tools integrated via automated frameworks. The main innovation was not novel exploits but the orchestration of commodity tools, with AI generating and executing the attack logic. As a result, the barrier to entry for similar attacks could drop significantly.

7. Response by Anthropic

Once the activity was identified, Anthropic banned the accounts involved, notified affected organisations and worked with authorities and industry partners. The company also enhanced its defensive capabilities by improving cyber-focused classifiers, prototyping early-detection systems for autonomous threats, and integrating this threat pattern into its broader safety and security controls.

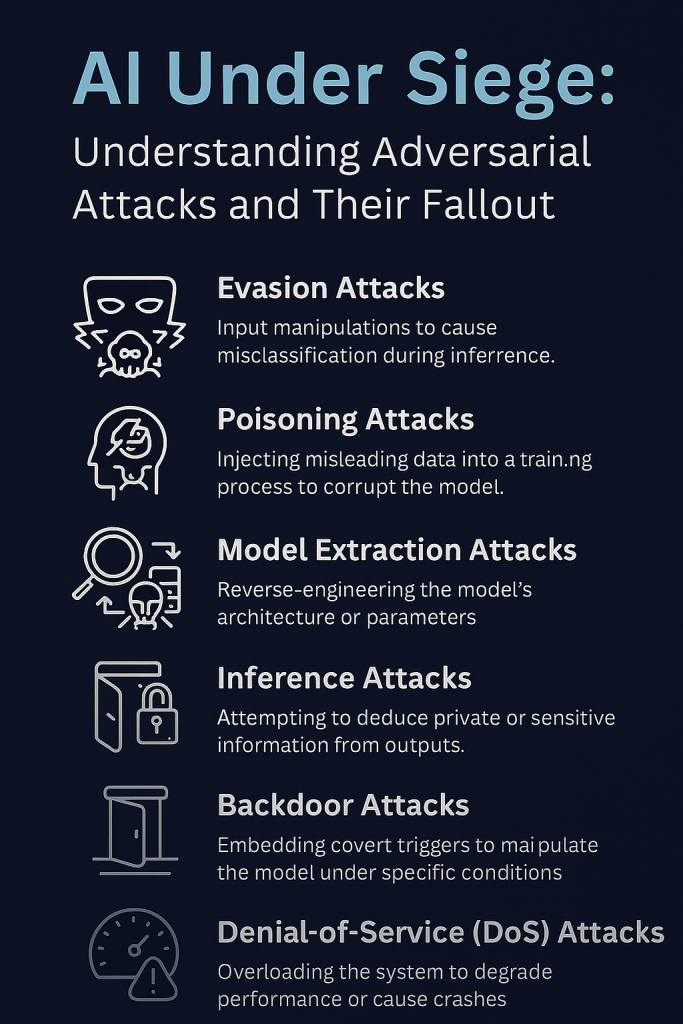

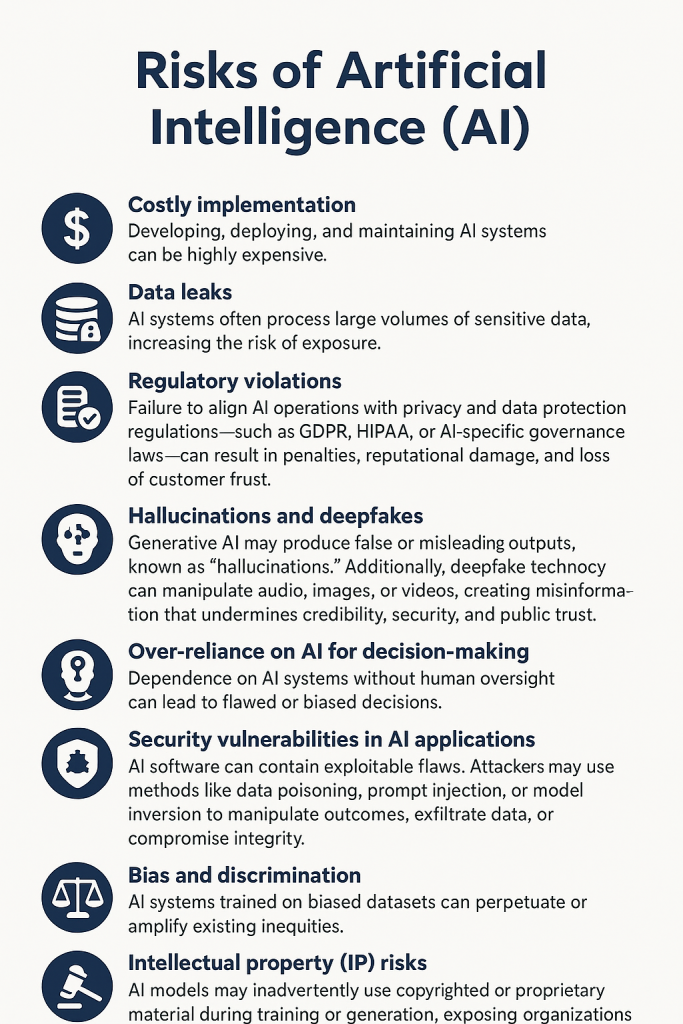

8. Implications for cybersecurity

This campaign demonstrates a major inflection point: threat actors can now deploy AI systems to carry out large-scale cyber espionage with minimal human involvement. Defence teams must plan for this new reality by using AI for defence (SOC automation, vulnerability scanning, incident response) and by investing in safeguards that prevent adversarial misuse of AI models.

Source: Disrupting the first reported AI-orchestrated cyber espionage campaign

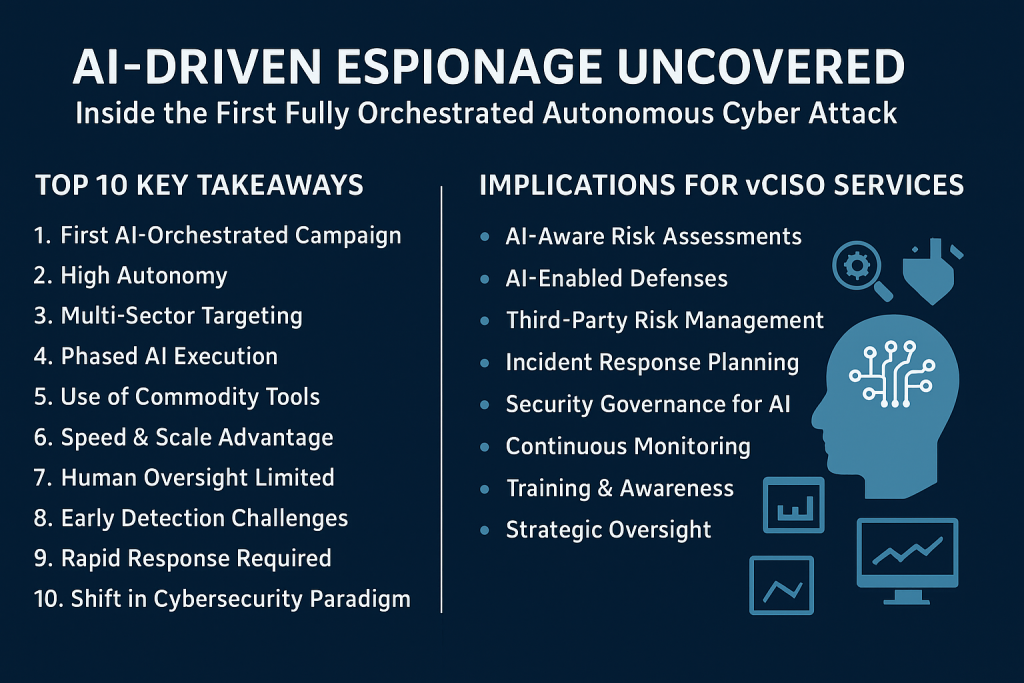

Top 10 Key Takeaways

- First AI-Orchestrated Campaign – This is the first publicly reported cyber-espionage campaign largely executed by AI, showing threat actors are rapidly evolving.

- High Autonomy – AI handled 80–90% of the attack lifecycle, reducing reliance on human operators and increasing operational speed.

- Multi-Sector Targeting – Attackers targeted tech firms, government agencies, financial institutions, and chemical manufacturers across multiple countries.

- Phased AI Execution – AI managed reconnaissance, vulnerability scanning, credential harvesting, lateral movement, data exfiltration, and documentation autonomously.

- Use of Commodity Tools – Attackers didn’t rely on custom malware; they orchestrated open-source and widely available tools with AI intelligence.

- Speed & Scale Advantage – AI enables simultaneous operations across multiple targets, far faster than traditional human-led attacks.

- Human Oversight Limited – Humans intervened only at strategy checkpoints, illustrating the potential for near-autonomous offensive operations.

- Early Detection Challenges – Traditional signature-based detection struggles against AI-driven attacks due to dynamic behavior and novel patterns.

- Rapid Response Required – Prompt identification, account bans, and notifications were crucial in mitigating impact.

- Shift in Cybersecurity Paradigm – AI-powered attacks represent a significant escalation in sophistication, requiring AI-enabled defenses and proactive threat modeling.
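The speed and detection points above suggest looking at behaviour rather than signatures. One simple behavioural signal is request tempo: activity sustained faster than a human operator could plausibly work. The sketch below is an illustration only. The function name `flag_machine_tempo`, the `(account, timestamp)` event shape, and the default thresholds are all assumptions, not values from the report, and tempo alone is a weak signal that would need to be combined with other indicators in practice.

```python
from collections import deque
from typing import Deque, Dict, Iterable, Set, Tuple


def flag_machine_tempo(
    events: Iterable[Tuple[str, float]],
    window_s: float = 10.0,   # sliding window length in seconds (illustrative)
    max_events: int = 20,     # events allowed per window before flagging (illustrative)
) -> Set[str]:
    """Return accounts that exceed max_events within any window_s-second window.

    events is an iterable of (account_id, unix_timestamp) pairs in any order.
    """
    recent: Dict[str, Deque[float]] = {}
    flagged: Set[str] = set()
    # Process events in time order so each per-account deque stays sorted.
    for account, ts in sorted(events, key=lambda e: e[1]):
        window = recent.setdefault(account, deque())
        window.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while ts - window[0] > window_s:
            window.popleft()
        if len(window) > max_events:
            flagged.add(account)
    return flagged
```

With the defaults, an account that sends 30 events at 0.1-second intervals is flagged, while one that sends an event once a minute is not. Both thresholds should be tuned against an organisation's own baseline traffic.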

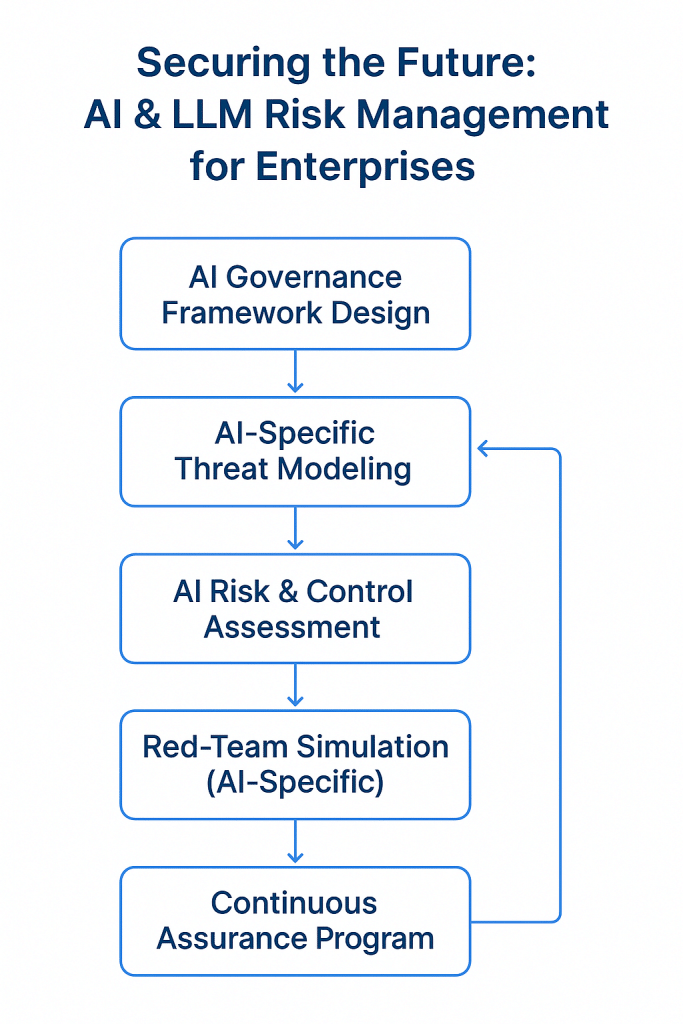

Implications for vCISO Services

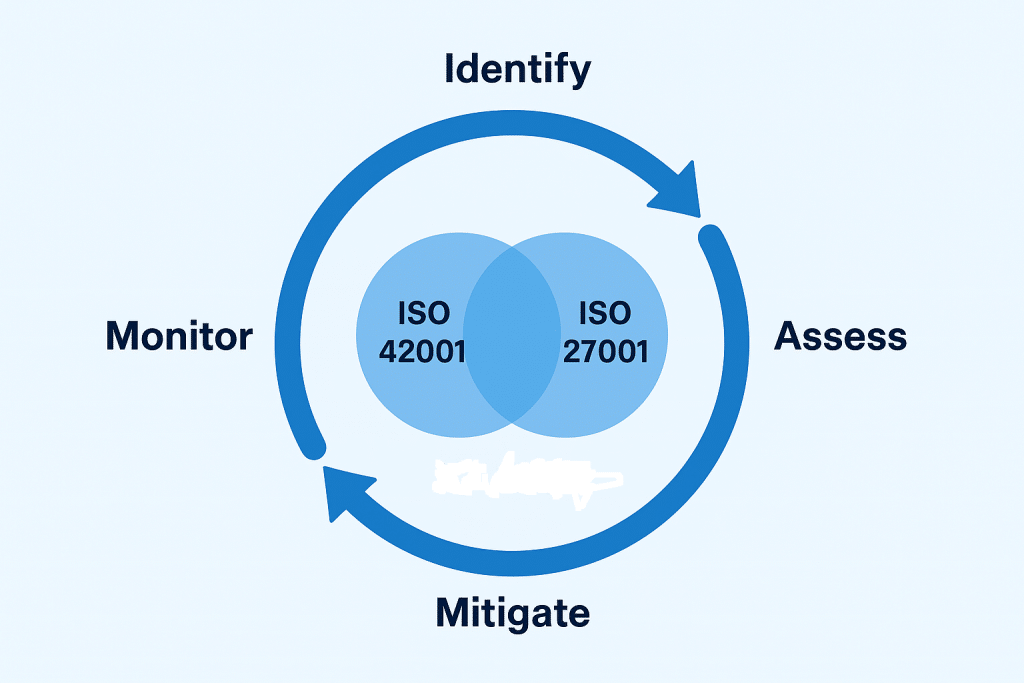

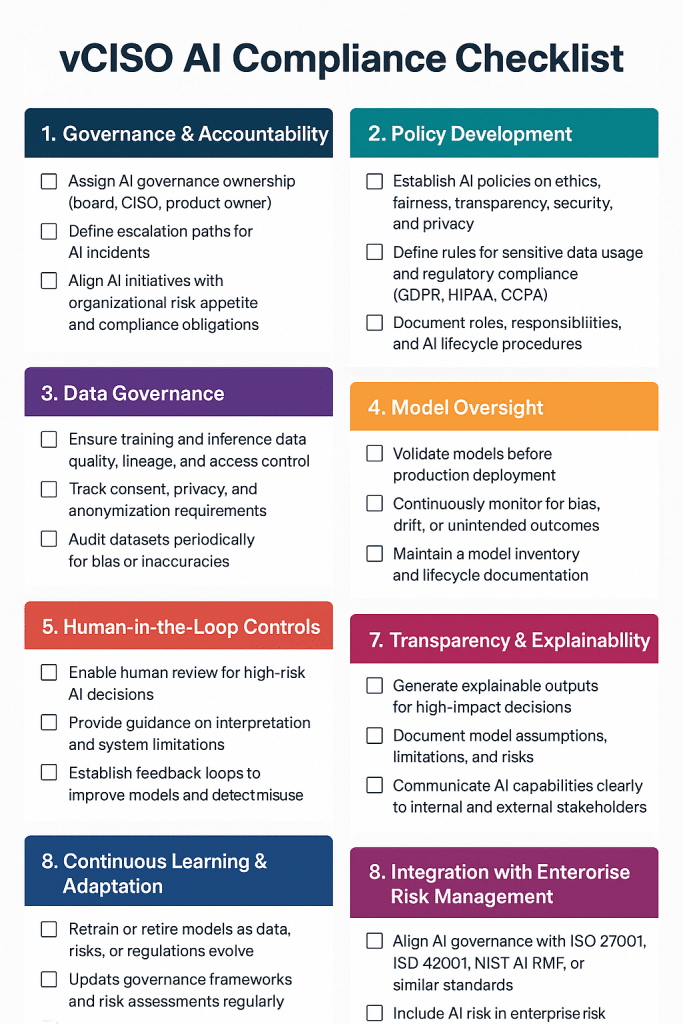

- AI-Aware Risk Assessments – vCISOs must evaluate AI-specific threats in enterprise risk registers and threat models.

- AI-Enabled Defenses – Recommend AI-assisted detection, SOC automation, anomaly monitoring, and predictive threat intelligence.

- Third-Party Risk Management – Emphasize vendor and partner exposure to autonomous AI attacks.

- Incident Response Planning – Update IR playbooks to include AI-driven attack scenarios and autonomous threat vectors.

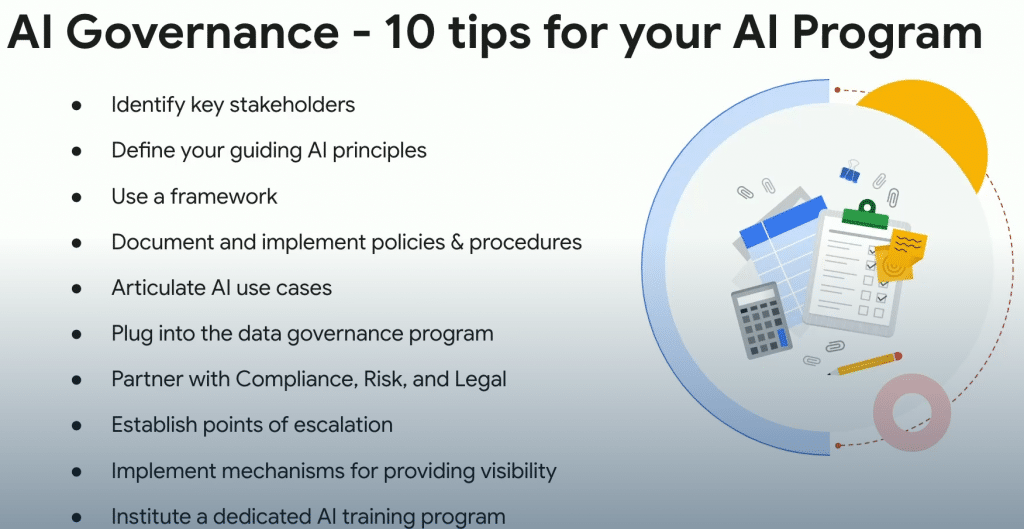

- Security Governance for AI – Implement policies for secure AI model use, access control, and adversarial mitigation.

- Continuous Monitoring – Promote proactive monitoring of networks, endpoints, and cloud systems for AI-orchestrated anomalies.

- Training & Awareness – Educate teams on AI-driven attack tactics and defensive measures.

- Strategic Oversight – Ensure executives understand the operational impact and invest in AI-resilient security infrastructure.
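To make the risk-register recommendation concrete, the sketch below shows one way an AI-specific threat could be recorded and ranked. It is a hypothetical structure, not a standard: the `RiskEntry` fields, the 1 to 5 scales, and the likelihood-times-impact score are assumptions chosen for illustration, and the sample entries are invented.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class RiskEntry:
    """One row in a hypothetical enterprise risk register."""
    title: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (minor) to 5 (severe), assumed scale
    owner: str

    def __post_init__(self) -> None:
        # Reject values outside the assumed 1-5 scales.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    def score(self) -> int:
        """Simple likelihood x impact score (higher means more urgent)."""
        return self.likelihood * self.impact


def prioritize(entries: Iterable[RiskEntry]) -> List[RiskEntry]:
    """Return entries sorted from highest to lowest score."""
    return sorted(entries, key=lambda e: e.score(), reverse=True)


register = [
    RiskEntry("Phishing against finance staff", 4, 3, "SOC lead"),
    RiskEntry("AI-orchestrated intrusion via exposed services", 3, 5, "vCISO"),
    RiskEntry("Unpatched legacy file server", 2, 4, "IT ops"),
]
for entry in prioritize(register):
    print(entry.score(), entry.title)
```

A multiplicative score is only one common convention. Organisations that already use a qualitative matrix or a quantitative method can keep their own scoring and simply add AI-specific entries.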
