Too Powerful to Release? The AI Model That’s Exposing Hidden Cyber Risk

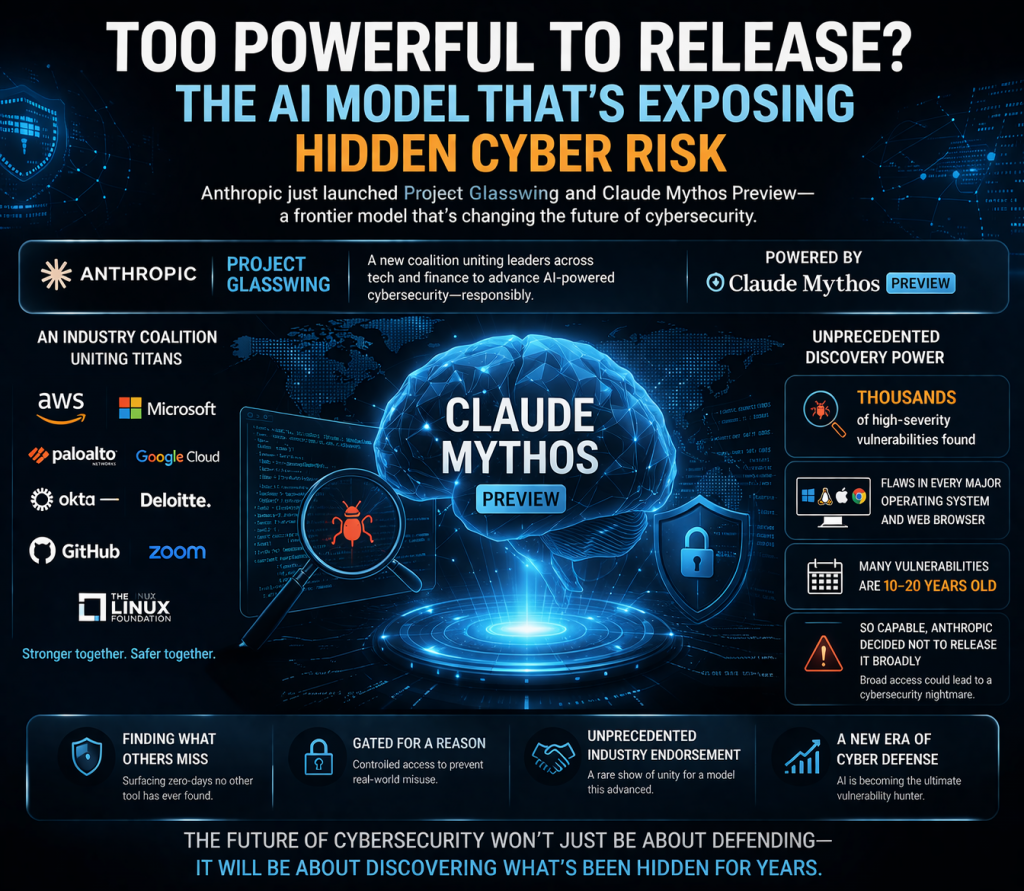

This development deserves close attention. Anthropic has introduced Project Glasswing, a new industry coalition that brings together major players across technology and financial services. At the center of this initiative is a highly advanced frontier model known as Claude Mythos Preview, signaling a significant shift in how AI intersects with cybersecurity.

Project Glasswing is not just another AI release—it represents a coordinated effort between leading organizations to explore the implications of next-generation AI capabilities. By aligning multiple sectors, the initiative highlights that the impact of such models extends far beyond research labs into critical infrastructure and global enterprise environments.

What sets Claude Mythos apart is its demonstrated ability to identify high-severity vulnerabilities at scale. According to the announcement, the model has already uncovered thousands of serious security flaws, including weaknesses across major operating systems and widely used web browsers. This level of discovery suggests a step-change in automated vulnerability research.

Even more striking is the nature of the vulnerabilities being found. Many of them are not newly introduced issues but long-standing flaws—some dating back one to two decades. This indicates that existing tools and methods have been unable to fully surface or prioritize these risks, leaving hidden exposure in foundational technologies.

The implications for cybersecurity are profound. A model capable of uncovering such deeply embedded vulnerabilities challenges long-held assumptions about the maturity and completeness of current security practices. It suggests that the attack surface is not only larger than expected, but also less understood than previously believed.

Recognizing the potential risks, Anthropic has chosen not to release the model broadly. Instead, access is being tightly controlled through the Glasswing coalition. The company has explicitly stated that unrestricted availability could lead to a cybersecurity crisis, as malicious actors could leverage the same capabilities to discover and exploit vulnerabilities at unprecedented speed.

This decision marks a notable departure from the typical AI release cycle, where rapid deployment and widespread access are often prioritized. In this case, restraint reflects an acknowledgment that capability has outpaced control, and that governance must evolve alongside technical progress.

It is also significant that a relatively young company like Anthropic has secured broad industry backing for such a cautious approach. The participation and endorsement of established cybersecurity and financial institutions signal a shared recognition of both the opportunity and the risk presented by models like Mythos.

Another critical point is that Mythos is reportedly identifying zero-day vulnerabilities that other tools have missed entirely. If validated at scale, this positions AI not just as a support tool for security teams, but as a primary engine for vulnerability discovery, fundamentally changing how organizations approach risk identification and remediation.

Perspective:

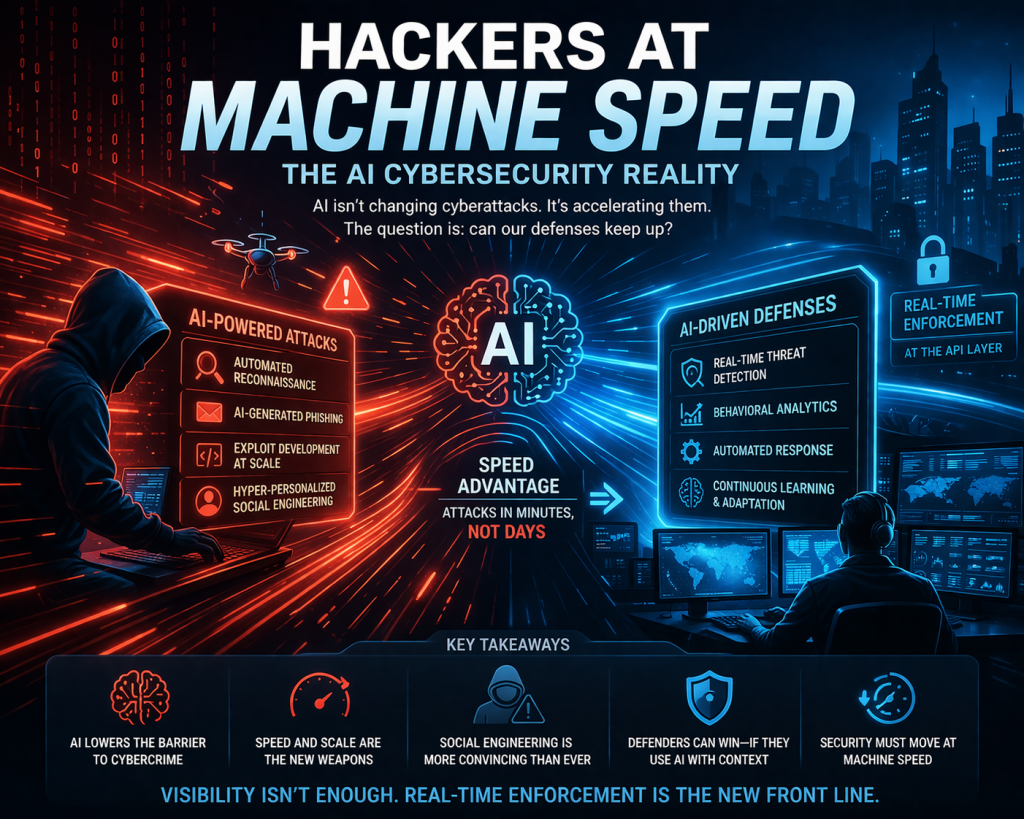

This moment feels like an inflection point for cybersecurity. What we’re seeing is the emergence of AI systems that can outpace traditional security processes by a wide margin rather than incrementally. The real issue is no longer whether vulnerabilities exist; it is how quickly they can be discovered and exploited.

This reinforces a critical shift: cybersecurity must move from periodic testing and reactive patching to continuous, real-time control. If AI can find vulnerabilities at scale, attackers will eventually gain access to similar capabilities. The only viable response is to implement runtime enforcement and API-level controls that can mitigate risk even when unknown vulnerabilities exist.

In short, AI is forcing the industry to confront a new reality—you can’t patch fast enough, so you must control behavior in real time.
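To make the idea of runtime, API-level enforcement more concrete, here is a minimal sketch of a default-deny request check. It is an illustration under stated assumptions, not a description of any specific product: the `Rule` type, the `ALLOWED` table, the endpoint paths, and the size limits are all hypothetical. The point it shows is that a request is blocked unless it matches an explicitly permitted behavior, which limits exposure even when the underlying flaw is unknown.

```python
from dataclasses import dataclass


# Hypothetical policy model: every name, path, and limit below is an
# illustrative assumption, not taken from any real product or standard.
@dataclass(frozen=True)
class Rule:
    method: str          # HTTP method the rule permits
    path_prefix: str     # exact path or parent path the rule covers
    max_body_bytes: int  # largest request body the rule accepts


ALLOWED = (
    Rule("GET", "/v1/reports", 0),
    Rule("POST", "/v1/chat", 8_192),
)


def enforce(method: str, path: str, body: bytes = b"") -> tuple[bool, str]:
    """Return (allowed, reason) for one request; deny unless a rule matches."""
    for rule in ALLOWED:
        path_ok = path == rule.path_prefix or path.startswith(rule.path_prefix + "/")
        if method.upper() == rule.method and path_ok:
            if len(body) > rule.max_body_bytes:
                return False, "body exceeds limit"
            return True, "matched allowlist rule"
    # Anything not explicitly permitted is rejected.
    return False, "no matching rule (default deny)"
```

In this sketch, a call such as `enforce("DELETE", "/v1/chat")` is rejected simply because no rule permits it. A real deployment would sit in a gateway or proxy and would also need authentication, logging, and rate limiting, which are omitted here.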

Bottom line:

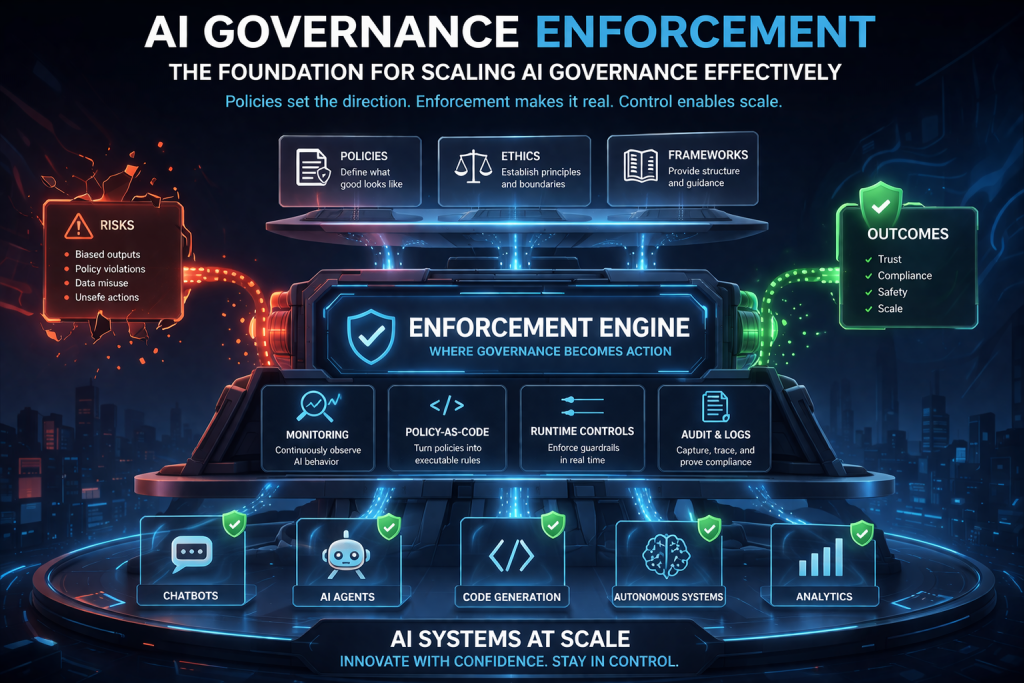

If your AI governance strategy cannot demonstrate continuous monitoring, control, and enforcement, it is unlikely to stand up to audit—or real-world threats.

That’s why AI governance enforcement is not just a feature—it’s the foundation for making AI governance actually work at scale.

Ready to Operationalize AI Governance?

If you’re serious about moving from **AI governance theory → real enforcement**, DISC InfoSec can help you build the control layer your AI systems need.

Most organizations have AI governance documents — but auditors now want proof of enforcement.

Policies alone don’t reduce AI risk. Real‑time monitoring, control, and enforcement do.

DISC InfoSec helps organizations operationalize AI governance with integrated frameworks, runtime controls, and proven certification success.

Move from AI governance theory to enforcement.

Read the full post below: Is Your AI Governance Strategy Audit‑Ready — or Just Documented?

Schedule a consultation or drop a note below: info@deurainfosec.com

Is your AI strategy truly audit-ready today?

AI governance is no longer optional. Frameworks like the ISO/IEC 42001 AI management system standard and regulations such as the EU AI Act are rapidly reshaping compliance expectations for organizations using AI.

DISC InfoSec brings deep expertise across AI, cybersecurity, and regulatory compliance to help you build trust, reduce risk, and stay ahead of evolving mandates—with a proven track record of success.

Ready to lead with confidence? Let’s start the conversation.

At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.

- Claude Mythos and the Future of Cybersecurity: Powerful—and Potentially Dangerous

- Hackers at Machine Speed: The AI Cybersecurity Reality

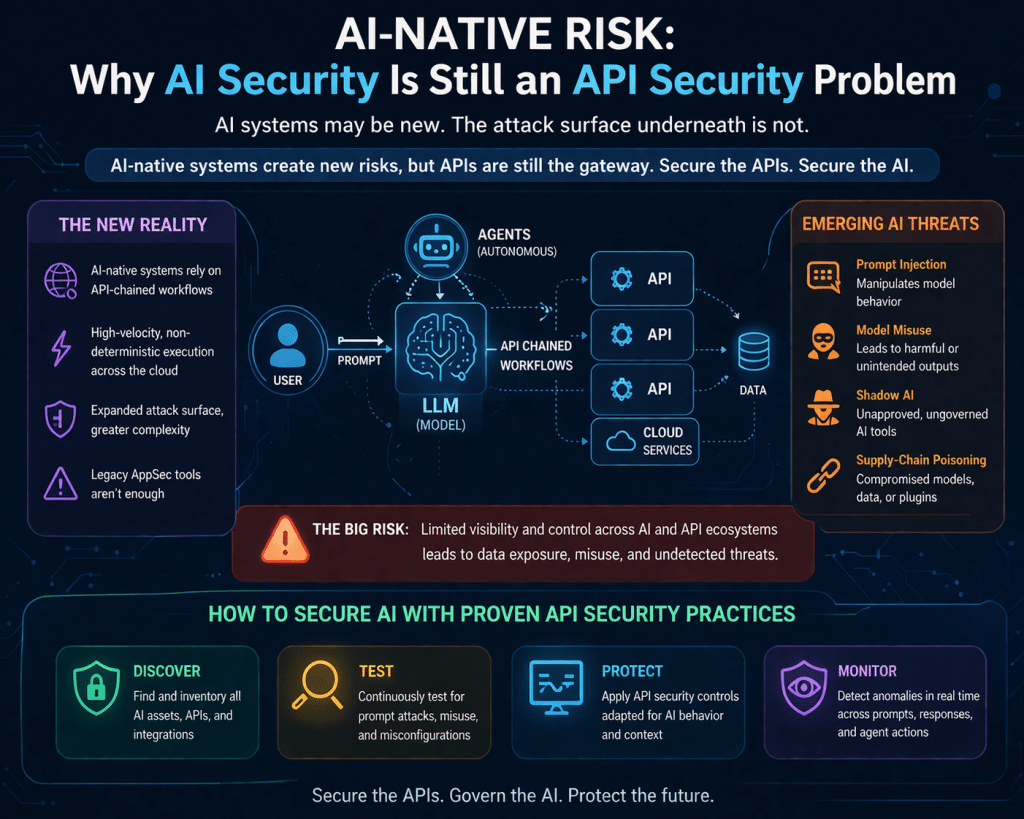

- AI Security = API Security: The Case for Real-Time Enforcement

- Is Your AI Governance Strategy Audit-Ready—or Just Documented?

- AI-Native Risk: Why AI Security Is Still an API Security Problem