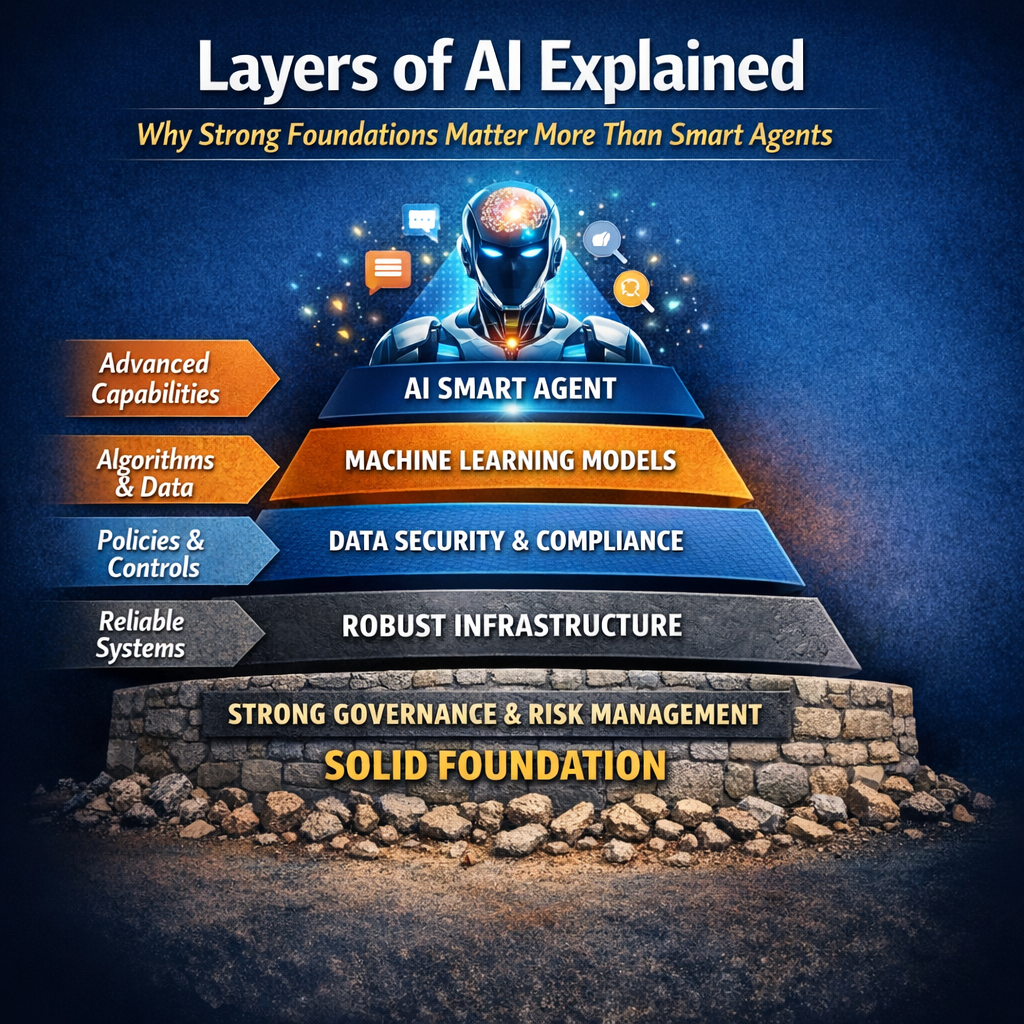

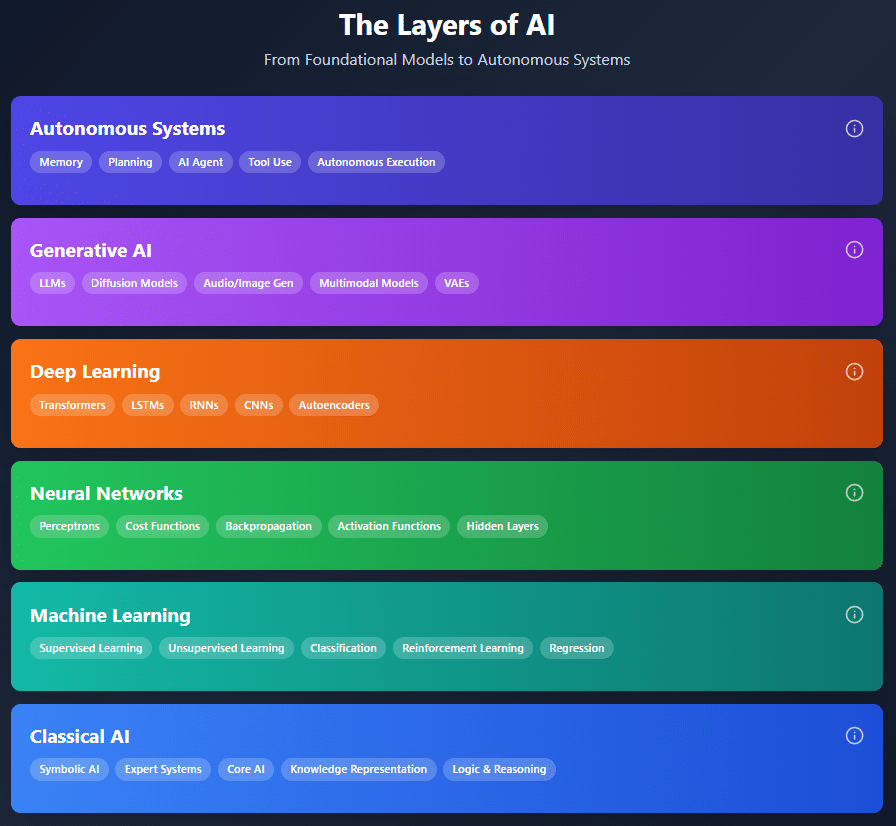

Understanding the Layers of AI

The “Layers of AI” model helps explain how artificial intelligence evolves from simple rule-based logic into autonomous, goal-driven systems. Each layer builds on the capabilities of the one beneath it, adding complexity, adaptability, and decision-making power. Understanding these layers is essential for grasping not just how AI works technically, but also where risks, governance needs, and human oversight must be applied as systems move closer to autonomy.

Classical AI: The Rule-Based Foundation

Classical AI represents the earliest form of artificial intelligence, relying on explicit rules, logic, and symbolic representations of knowledge. Systems such as expert systems and logic-based reasoning engines operate deterministically: the same inputs always produce the same outputs, because every decision follows a rule someone wrote down. While limited in flexibility, Classical AI laid the groundwork for structured reasoning, decision trees, and formal problem-solving that still influence modern systems.
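To make the rule-based idea concrete, here is a minimal sketch of forward chaining, one common way an expert system derives conclusions from facts. The rule names and facts are invented for illustration and do not come from any real system.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new facts can be derived."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if conclusion not in derived and premises <= derived:
                derived.add(conclusion)
                changed = True
    return derived


# Hypothetical rules: (set of premises, conclusion).
RULES = [
    (frozenset({"has_fever", "has_cough"}), "possible_flu"),
    (frozenset({"possible_flu", "short_of_breath"}), "refer_to_doctor"),
]

result = forward_chain({"has_fever", "has_cough", "short_of_breath"}, RULES)
```

Running it twice on the same facts gives the same conclusions, which is exactly the deterministic behavior described above.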

Machine Learning: Learning from Data

Machine Learning marked a shift from hard-coded rules to systems that learn patterns from data. Techniques such as supervised, unsupervised, and reinforcement learning allow models to improve performance over time without explicit reprogramming. Tasks like classification, regression, and prediction became scalable, enabling AI to adapt to real-world variability rather than relying solely on predefined logic.
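As a small illustration of learning a pattern from data instead of coding it by hand, the sketch below fits a straight line with ordinary least squares, a basic supervised regression technique. The data points are made up for the example.

```python
def fit_line(xs, ys):
    """Estimate slope and intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Illustrative training data that follows y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)
prediction = slope * 5.0 + intercept  # predict for an unseen input
```

Nobody wrote the rule "multiply by two and add one" into the program. The parameters come from the examples, and feeding in different data yields a different model without changing the code.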

Neural Networks: Mimicking the Brain

Neural Networks introduced architectures inspired by the human brain, using interconnected layers of artificial neurons. Concepts such as perceptrons, activation functions, cost functions, and backpropagation allow these systems to learn complex representations. This layer enables non-linear problem solving and forms the structural backbone for more advanced AI capabilities.
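The sketch below trains a single artificial neuron with a sigmoid activation to learn the logical AND function. It is a deliberately tiny example: with only one neuron, the gradient update is the one-layer special case of backpropagation, and the epoch count and learning rate are arbitrary choices for the illustration.

```python
import math


def sigmoid(z):
    """Activation function that squashes any value into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, inputs):
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def train_neuron(samples, epochs=2000, learning_rate=0.5):
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            # For a sigmoid output with cross-entropy cost, the gradient
            # with respect to the pre-activation is (prediction - target).
            error = predict(weights, bias, inputs) - target
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias -= learning_rate * error
    return weights, bias


AND_SAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train_neuron(AND_SAMPLES)
```

A single neuron can only separate classes with a straight line. The non-linear problem solving mentioned above comes from combining many such neurons across layers.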

Deep Learning: Scaling Intelligence

Deep Learning extends neural networks by stacking many hidden layers, allowing models to extract increasingly abstract features from raw data. Architectures such as CNNs, RNNs, LSTMs, transformers, and autoencoders power breakthroughs in vision, speech, language, and pattern recognition. This layer made AI practical at scale, especially with large datasets and high-performance computing.
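Stacking layers can be sketched as a loop in which each layer transforms the output of the previous one. The weights below are hand-picked for illustration rather than learned, and the two-layer network is far smaller than any real deep model.

```python
def relu(values):
    """Rectified linear unit: negative values become zero."""
    return [max(0.0, v) for v in values]


def dense(weights, biases, inputs):
    """One fully connected layer: a weighted sum plus bias per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def forward(layers, inputs):
    """Pass the input through each layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = relu(dense(weights, biases, activations))
    return activations


# Hypothetical fixed weights: a hidden layer with two units, then one output.
LAYERS = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0]], [-1.0]),
]

output = forward(LAYERS, [2.0, 1.0])
```

Adding depth means appending more entries to `LAYERS`. In practice the weights are learned from data, and specialized architectures such as CNNs or transformers replace the plain dense layer.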

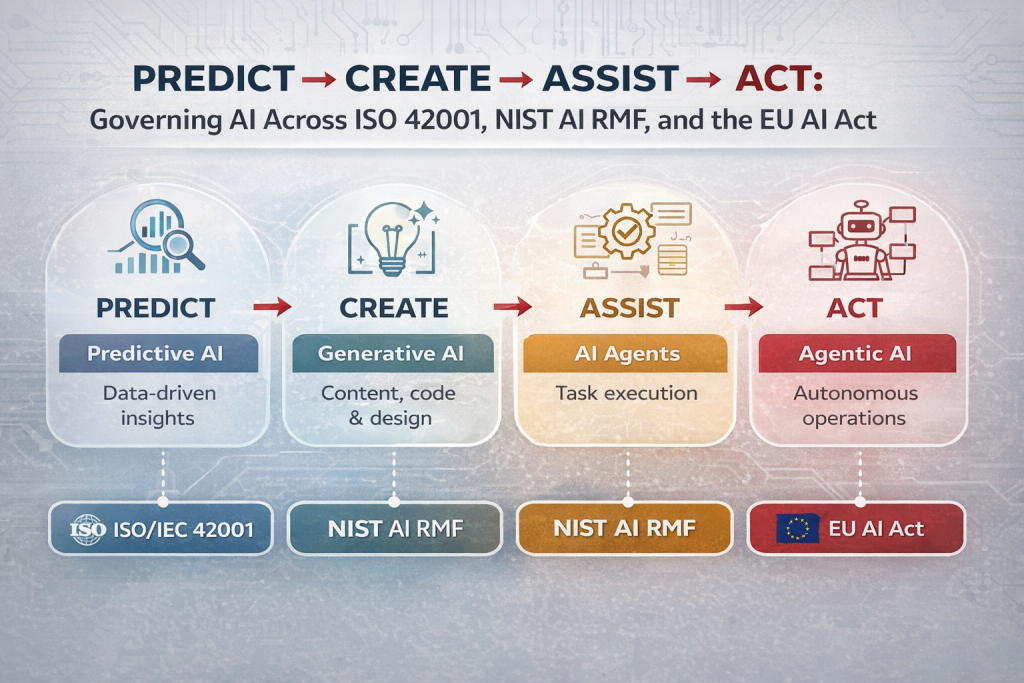

Generative AI: Creating New Content

Generative AI focuses on producing new data rather than simply analyzing existing information. Large Language Models (LLMs), diffusion models, VAEs, and multimodal systems can generate text, images, audio, video, and code. Because these models produce output by sampling from learned probability distributions, the same prompt can yield different results. That is the source of their creative range, and it also raises concerns around hallucinations, bias, intellectual property, and trustworthiness.
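A toy way to see "generation as sampling" is a bigram model, which picks each next word at random from the words that followed the current word in its training text. This is a drastically simplified stand-in and not how LLMs or diffusion models work internally. The corpus is invented for the example.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Record, for every word, the words observed immediately after it."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, length, rng):
    """Sample a word sequence by repeatedly choosing a plausible next word."""
    output = [start]
    for _ in range(length - 1):
        candidates = table.get(output[-1])
        if not candidates:  # no known continuation, so stop early
            break
        output.append(rng.choice(candidates))
    return output


CORPUS = "the model reads the data and the model writes the text"
table = train_bigrams(CORPUS)
sample = generate(table, "the", 6, random.Random(0))
```

Changing the seed changes the output, and the model can produce word sequences that never appeared in the corpus. It has no notion of whether those sequences are true, which is a small-scale view of why fluent output is not the same as accurate output.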

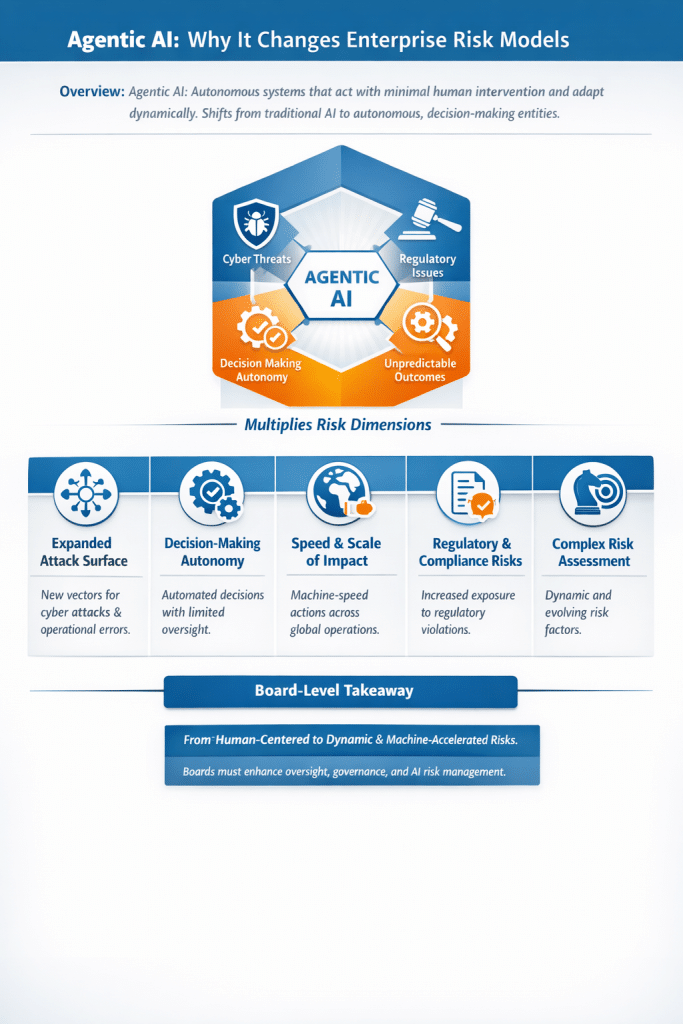

Agentic AI: Acting with Purpose

Agentic AI adds decision-making and goal-oriented behavior on top of generative models. These systems can plan tasks, retain memory, use tools, and take actions autonomously across environments. Rather than responding to a single prompt, agentic systems work through multi-step tasks, which makes them powerful but also significantly harder to govern, audit, and control.
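The plan, memory, and tool-use loop can be sketched as follows. Everything here is hypothetical: the tool names, the plan format, and the step budget are assumptions for illustration, and the plan is hard-coded where a real agent would obtain it from a model.

```python
# Hypothetical tools the agent is allowed to call.
TOOLS = {
    "add": lambda a, b: a + b,
    "multiply": lambda a, b: a * b,
}


def run_agent(plan, tools, max_steps=10):
    """Execute plan steps in order, storing results in memory and a log."""
    memory = {}
    log = []
    for step_number, (tool_name, args, result_key) in enumerate(plan):
        if step_number >= max_steps:
            log.append(("stopped", "step budget exhausted", None))
            break
        if tool_name not in tools:
            log.append(("stopped", "unknown tool: " + tool_name, None))
            break
        # String arguments refer to earlier results held in memory.
        resolved = [memory[a] if isinstance(a, str) else a for a in args]
        result = tools[tool_name](*resolved)
        memory[result_key] = result
        log.append((tool_name, resolved, result))
    return memory, log


# Illustrative plan: compute (2 + 3) * 4 in two tool calls.
PLAN = [
    ("add", [2, 3], "sum"),
    ("multiply", ["sum", 4], "product"),
]

memory, log = run_agent(PLAN, TOOLS)
```

The tool allowlist, the step budget, and the action log are the kinds of hooks that make such a loop auditable. Without them there is little to inspect after the fact.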

Autonomous Execution: AI Without Constant Human Input

At the highest layer, AI systems can execute tasks independently with minimal human intervention. Autonomous execution combines planning, tool use, feedback loops, and adaptive behavior to operate in real-world conditions. This layer blurs the line between software and decision-maker, raising critical questions about accountability, safety, alignment, and ethical boundaries.
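One way to keep a human in the loop at this layer is an approval gate that lets low-risk actions run automatically and routes the rest to a reviewer. The sketch below assumes each action already carries a risk score between 0 and 1. The scores, the threshold, and the action names are all hypothetical.

```python
def run_with_oversight(actions, approve, risk_threshold=0.5):
    """Run low-risk actions directly; ask a human before anything riskier."""
    executed = []
    blocked = []
    for name, risk in actions:
        if risk < risk_threshold or approve(name):
            executed.append(name)
        else:
            blocked.append(name)
    return executed, blocked


# Hypothetical actions with illustrative risk scores.
ACTIONS = [
    ("summarize_report", 0.1),
    ("send_email", 0.4),
    ("delete_records", 0.9),
]

# Stand-in for a human reviewer who declines every escalated action.
executed, blocked = run_with_oversight(ACTIONS, approve=lambda name: False)
```

Lowering `risk_threshold` sends more actions to the reviewer, which is one simple dial for trading autonomy against oversight.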

My Opinion: From Foundations to Autonomy

The layered model of AI is useful because it makes one thing clear: autonomy is not a single leap but an accumulation of capabilities. Each layer introduces new power and new risk. While organizations are eager to adopt agentic and autonomous AI, many still lack maturity in governing the foundational layers beneath them. In my view, responsible AI adoption must follow the same layered discipline: strong foundations, clear controls at each level, and escalating governance as systems gain autonomy. Skipping layers in governance while accelerating layers in capability is where most AI risk emerges.


At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
