As Jack Jones, co-founder of RiskLens, tells the story, he started down the road to creating the FAIR™ model for cyber risk quantification because of “two questions and two lame answers.” As CISO at Nationwide Insurance, he presented his pitch for cybersecurity investment and was asked:

“How much risk do we have?”

“How much less risk will we have if we spend the millions of dollars you’re asking for?”

To which Jack could only answer “Lots” and “Less.”

“If he had asked me to talk more about the ‘vulnerabilities’ we had or the threats we faced, I could have talked all day,” he recalled in the FAIR book, Measuring and Managing Information Risk.

In that moment, Jack saw the need for a way that cybersecurity teams could communicate risk to senior executives and boards of directors in the language of business: dollars and cents.

Some CISOs are still in the position of Jack pre-quantification – talking all day and delivering lame answers, from the board’s point of view. Here’s a short guide to what they’re not saying – and how RiskLens, the analytics platform built on FAIR, can provide the right answers.

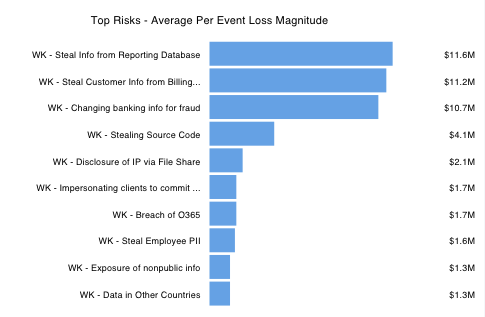

1. I don’t really know what our top risks are.

I can ask a group of subject matter experts in the company to vote on a top risks list based on their opinions, but that’s as close as I can get.

Top Risks is the first report that many new RiskLens users run, and it takes only minutes using the Rapid Risk Assessment capability of the RiskLens platform. The platform guides you through properly defining a set of risks (say, from your risk register) for quantitative analysis according to the FAIR standard. To speed the process, the platform draws on data from pre-populated loss tables. The resulting analysis quickly stack-ranks the risks by probable size of loss, in dollar terms, across several parameters.
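To make the idea of stack-ranking by probable loss concrete, here is a minimal sketch of a FAIR-style simulation. It is not the RiskLens implementation: the scenario names, the frequency and loss figures, and the choice of Poisson event counts with triangular loss magnitudes are all assumptions made for illustration.

```python
import math
import random
import statistics

# Hypothetical scenarios: (events per year, min loss, most likely loss, max loss) in dollars.
SCENARIOS = {
    "Ransomware on file servers": (0.3, 200_000, 800_000, 5_000_000),
    "Phishing-led wire fraud": (2.0, 10_000, 50_000, 300_000),
    "Lost or stolen laptop": (4.0, 1_000, 5_000, 20_000),
}


def poisson(rate, rng):
    """Draw an event count from a Poisson distribution (Knuth's method, fine for small rates)."""
    limit = math.exp(-rate)
    count, product = 0, rng.random()
    while product > limit:
        count += 1
        product *= rng.random()
    return count


def simulate_annual_losses(freq, low, mode, high, rng, trials):
    """Return one simulated total loss per trial year."""
    losses = []
    for _ in range(trials):
        events = poisson(freq, rng)
        # Each loss event draws its magnitude from a triangular distribution.
        losses.append(sum(rng.triangular(low, high, mode) for _ in range(events)))
    return losses


def rank_scenarios(scenarios, trials=10_000, seed=0):
    """Rank scenarios by mean simulated annual loss, highest first."""
    rng = random.Random(seed)
    rows = []
    for name, (freq, low, mode, high) in scenarios.items():
        losses = sorted(simulate_annual_losses(freq, low, mode, high, rng, trials))
        rows.append({
            "scenario": name,
            "mean": statistics.fmean(losses),
            "p90": losses[int(0.9 * trials)],  # loss in the 90th-percentile year
        })
    return sorted(rows, key=lambda row: row["mean"], reverse=True)


for row in rank_scenarios(SCENARIOS):
    print(f'{row["scenario"]:<28} mean ${row["mean"]:>12,.0f}   p90 ${row["p90"]:>12,.0f}')
```

Ranking by the mean is only one option; sorting on the 90th-percentile figure instead would surface scenarios with rare but severe losses.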

2. I can’t give you an ROI on the money you give me to invest in cybersecurity.

You see, cybersecurity is different from other programs you’re asked to invest in – it’s constantly changing and never-ending. You never really hit a point of success; you just chip away at the problem.

With Top Risks in hand, RiskLens clients can dig deeper into individual scenarios by running a Detailed Analysis that exposes the drivers of risk: for instance, which types of threat actors account for the highest frequency of attacks, or which classes of assets account for the highest probable losses. They can then use the platform’s Risk Treatment Analysis capability to evaluate controls by their ROI in risk reduction.

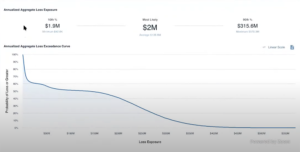
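The ROI arithmetic behind comparing controls can be sketched in a few lines. This is an illustrative calculation, not the platform's Risk Treatment Analysis: the control names, loss figures, costs, and the function `risk_reduction_roi` are hypothetical, and ROI is assumed here to mean net annual benefit divided by annual cost.

```python
def risk_reduction_roi(loss_before, loss_after, annual_cost):
    """ROI = (reduction in expected annual loss - annual cost) / annual cost."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return (loss_before - loss_after - annual_cost) / annual_cost


# Hypothetical controls: (expected annual loss before, after, annual cost of control).
CONTROLS = {
    "Multi-factor authentication": (600_000, 250_000, 100_000),
    "Endpoint detection and response": (600_000, 400_000, 150_000),
}

# Sort controls by ROI, best first.
by_roi = sorted(
    ((name, risk_reduction_roi(*figures)) for name, figures in CONTROLS.items()),
    key=lambda item: item[1],
    reverse=True,
)

for name, roi in by_roi:
    print(f"{name}: ROI {roi:.0%}")
```

With these assumed figures, the first control returns 2.5 times its cost in net risk reduction, while the second returns about a third of its cost.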

3. I can’t really tell you if things are getting better on cyber risk.

I can show you our progress with compliance checklists and maturity scales, and I hope you’ll assume that’s reducing risk.

While compliance with NIST CSF, CIS Controls, etc. is good and useful, these frameworks don’t measure performance outcomes in reducing risk – that takes a quantitative approach. The RiskLens platform can aggregate risk scenarios to generate risk assessment reports showing risk across the enterprise or by business unit, in dollar terms – and to show risk exposure over time. It’s easy to update and re-run risk assessments, thanks to the platform’s Data Helpers that store risk data for re-use. Update a Data Helper, and all the related risk scenarios update at the same time – and so do the aggregated risk assessments.

4. I can’t help you set a risk appetite.

I don’t really know how much risk we have and am pretty much operating on the principle that no risk is acceptable.

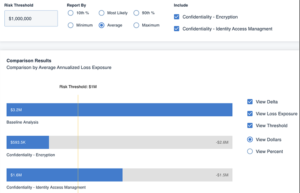

Boards should have a strong sense of their appetite for risk in cyber as in all fields, but qualitative (high-medium-low) cyber risk analysis only supports vague appetite statements that are difficult to follow in practice. On the RiskLens platform, a CISO can input a dollar figure for “risk threshold” as a hypothetical, and run the analyses to rank how the various risk scenarios stack up against that limit, making risk appetite a practical target.

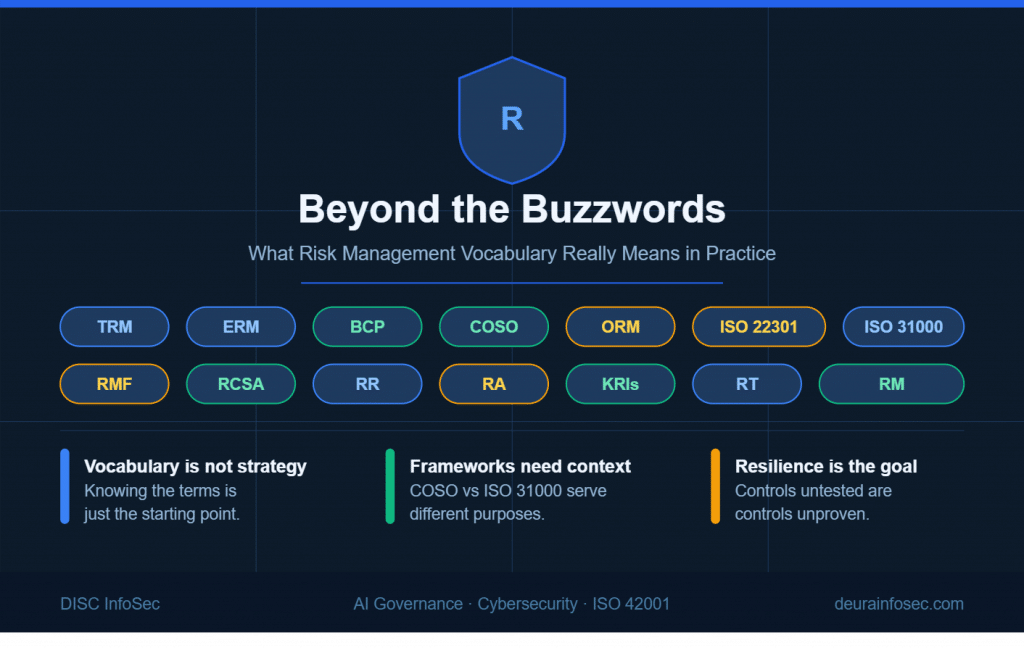
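One way to picture testing scenarios against a dollar threshold is to estimate how often each scenario's simulated annual loss exceeds it. The sketch below is an assumption-laden illustration, not how RiskLens computes its results: the scenario names, the lognormal loss model, its parameters, and the $1 million threshold are all hypothetical.

```python
import math
import random


def exceedance_probability(annual_losses, threshold):
    """Fraction of simulated years in which the loss exceeds the threshold."""
    return sum(loss > threshold for loss in annual_losses) / len(annual_losses)


def sample_annual_losses(median, sigma, rng, trials=10_000):
    """Assumed model: annual loss is lognormal with the given median and log-scale spread."""
    return [rng.lognormvariate(math.log(median), sigma) for _ in range(trials)]


RISK_THRESHOLD = 1_000_000  # hypothetical risk appetite, in dollars per year

rng = random.Random(0)
SIMULATED = {
    "Customer data breach": sample_annual_losses(500_000, 1.0, rng),
    "Payment system outage": sample_annual_losses(100_000, 0.8, rng),
}

# Rank scenarios by how likely they are to breach the threshold, worst first.
ranked = sorted(
    ((name, exceedance_probability(losses, RISK_THRESHOLD))
     for name, losses in SIMULATED.items()),
    key=lambda item: item[1],
    reverse=True,
)

for name, probability in ranked:
    print(f"{name}: {probability:.1%} chance of exceeding ${RISK_THRESHOLD:,}")
```

Changing `RISK_THRESHOLD` and re-running shows which scenarios fall inside or outside a proposed appetite.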

5. I don’t know how to align cyber risk management with the other forms of risk management we do.

Enterprise risk, operational risk, market risk, financial risk—I’ve heard their board presentations in quantitative terms. But cyber is just different.

Quantification is the answer – reporting on cyber risk in the same financial terms that the rest of enterprise risk management programs employ finally gives the board what it wants to hear on cyber risk management. ISACA, the National Association of Corporate Directors and the COSO ERM framework have all recommended FAIR for board reporting. As an ISACA white paper said,

“The more a risk-management measurement resembles the financial statements and income projections that the board typically sees, the easier it is for board members to manage cybersecurity risk… FAIR can enable the economic representation of cybersecurity risk that is sorely missing in the boardroom, but can illuminate cybersecurity exposure.”