Phishing is old, but AI just gave it new life

Different Tricks, Smarter Clicks: AI-Powered Phishing and the New Era of Enterprise Resilience.

1. Old Threat, New Tools

Phishing is a well-worn tactic, but artificial intelligence has given it new potency. A recent report from Comcast, based on the analysis of 34.6 billion security events, shows attackers are combining scale with sophistication to slip past conventional defenses.

2. Parallel Campaigns: Loud and Silent

Modern attackers don’t just pick between noisy mass attacks and stealthy targeted ones — they run both in tandem. Automated phishing campaigns generate high volumes of noise, while expert threat actors probe networks quietly, trying to avoid detection.

3. AI as a Force Multiplier

Generative AI lets even low-skilled threat actors craft very convincing phishing messages and malware. On the defender side, AI-powered systems are essential for anomaly detection and triage. But automation alone isn’t enough — human analysts remain crucial for interpreting signals, making strategic judgments, and orchestrating responses.

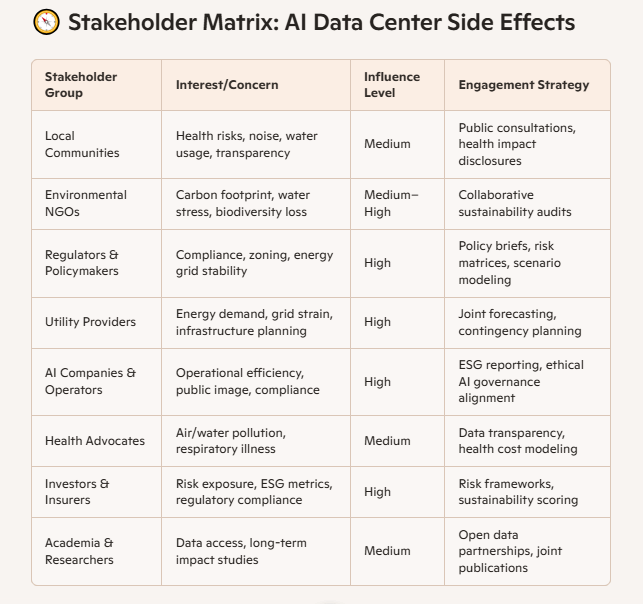

4. Shadow AI & Expanded Attack Surface

One emerging risk is “shadow AI” — when employees use unauthorized AI tools. This behavior expands the attack surface and introduces non-human identities (bots, agents, service accounts) that need to be secured, monitored, and governed.

5. Alert Fatigue & Resource Pressure

Security teams are already under heavy load. They face constant alerts, redundant tasks, and a flood of background noise, which makes it easy for real threats to be missed. Meanwhile, regular users remain the weakest link, and a single click can undo several layers of defense.
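One common way to cut down on alert noise is to collapse duplicate alerts before an analyst sees them. The sketch below is only an illustration of that idea, not something taken from the Comcast report: the `Alert` fields, the 1 to 5 severity scale, and the `triage` function are all hypothetical names chosen for this example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    rule: str      # name of the detection rule that fired (hypothetical field)
    host: str      # machine the alert came from (hypothetical field)
    severity: int  # assumed scale: 1 (low) to 5 (critical)


def triage(alerts):
    """Group identical (rule, host) alerts and rank the groups.

    Returns one summary dict per group, highest severity first,
    with ties broken by how many times the alert repeated.
    """
    groups = defaultdict(list)
    for alert in alerts:
        groups[(alert.rule, alert.host)].append(alert)

    summary = [
        {
            "rule": rule,
            "host": host,
            "count": len(items),
            "severity": max(item.severity for item in items),
        }
        for (rule, host), items in groups.items()
    ]
    summary.sort(key=lambda g: (-g["severity"], -g["count"]))
    return summary


if __name__ == "__main__":
    raw = [Alert("port-scan", "h1", 2)] * 3 + [Alert("phish-click", "h2", 5)]
    for group in triage(raw):
        print(group)
```

In this toy run, four raw alerts shrink to two lines, and the single high-severity phishing click rises above the repeated low-severity scan instead of being buried under it.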

6. Proxy Abuse & Eroding Trust Signals

Attackers are increasingly using compromised home and business devices to act as proxy relays, making malicious traffic look benign. This undermines traditional trust cues like IP geolocation or blocklists. As a result, defenders must lean more heavily on behavioral analysis and zero-trust models.
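To make the shift from IP reputation to behavior concrete, here is a minimal sketch of a login risk score that still works when the source IP looks clean. Everything in it is an assumption made for illustration: the `LoginEvent` fields, the `risk_score` function, and the weights (3, 2, 1) are hypothetical and would need tuning against real data.

```python
from dataclasses import dataclass


@dataclass
class LoginEvent:
    user: str
    device_id: str
    hour: int              # local hour of day, 0-23
    ip_on_blocklist: bool  # traditional trust signal


def risk_score(event, known_devices, usual_hours):
    """Combine an IP signal with two behavioral signals.

    known_devices: dict mapping user -> set of device IDs seen before
    usual_hours:   dict mapping user -> set of hours the user normally logs in
    The weights are illustrative assumptions, not recommended values.
    """
    score = 0
    if event.ip_on_blocklist:
        score += 3
    if event.device_id not in known_devices.get(event.user, set()):
        score += 2  # unfamiliar device
    if event.hour not in usual_hours.get(event.user, set()):
        score += 1  # unusual time of day
    return score


if __name__ == "__main__":
    devices = {"alice": {"work-laptop"}}
    hours = {"alice": set(range(8, 19))}
    # Login relayed through a compromised home router: the IP looks benign.
    proxied = LoginEvent("alice", "new-laptop", 3, ip_on_blocklist=False)
    print(risk_score(proxied, devices, hours))
```

The proxied login never touches a blocklist, yet the unfamiliar device and the odd hour still raise its score, which is the kind of signal a blocklist alone would miss.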

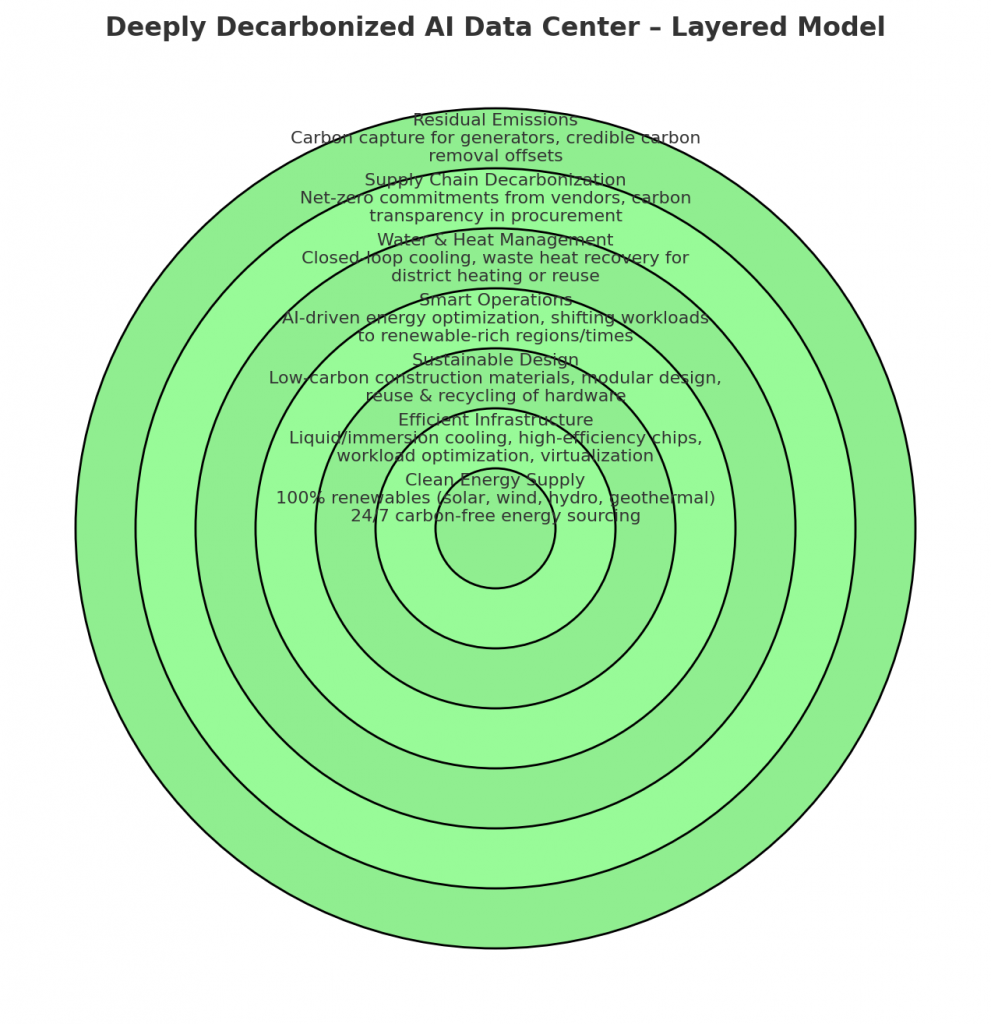

7. Building a Layered, Resilient Approach

Given that no single barrier is perfect, organizations must adopt layered defenses. That includes the basics (patching, multi-factor authentication, secure gateways) plus adaptive capabilities like threat hunting, AI-driven detection, and resilient governance of both human and machine identities.

8. The Balance of Innovation and Risk

Threats are growing in both scale and stealth. But there’s also opportunity: as attackers adopt AI, defenders can too. The key lies in combining intelligent automation with human insight, and turning innovation into resilience. As Noopur Davis (Comcast’s EVP & CISO) noted, this is a transformative moment for cyber defense.

My opinion

This article highlights a critical turning point: AI is not only a tool for attackers, but also a necessity for defenders. The evolving threat landscape means that relying solely on traditional rules-based systems is insufficient. What stands out to me is that human judgment and strategy still matter greatly: automation can help filter and flag, but it cannot replace human intuition, experience, or oversight. The organizations that pull ahead will be those that master the orchestration of AI systems while nurturing security-aware people and processes. In short, the future of cybersecurity is hybrid, combining the speed and scale of automation with the wisdom and flexibility of humans.
