Large Language Models (LLMs) are revolutionizing the way developers interact with code, automating tasks from code generation to debugging. While this boosts productivity, it also introduces new security risks. For example, maliciously crafted prompts or inputs can trick an LLM into producing insecure code or leaking sensitive data. Countermeasures include rigorous input validation, sandboxing generated code, and implementing access controls to prevent execution of untrusted outputs. Continuous monitoring and testing of LLM outputs is also essential to catch anomalies before they escalate into vulnerabilities.

The prompt itself has become a critical component of the attack surface. Prompt injection attacks—where attackers manipulate input to influence the model’s behavior—pose a novel security threat. Risks include unauthorized data exfiltration, execution of harmful instructions, or bypassing model safety mechanisms. Effective countermeasures involve prompt sanitization, context isolation, and using “safe mode” configurations in LLMs that limit the scope of model responses. Organizations must treat prompt security with the same seriousness as traditional code security.

Securing the code alone is no longer sufficient. Organizations must also focus on securing prompts, as they now represent a vector through which attacks can propagate. Insecure prompt handling can allow attackers to manipulate outputs, expose confidential information, or perform unintended actions. Countermeasures include designing prompts with strict templates, implementing input/output validation, and logging prompt interactions to detect anomalies. Additionally, access controls and role-based permissions can reduce the risk of malicious or accidental misuse.

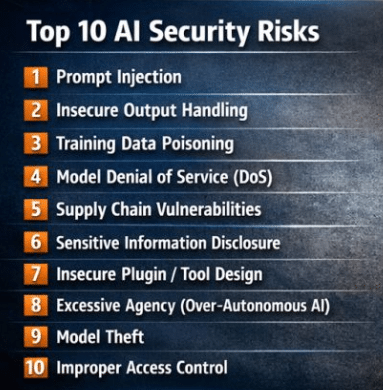

Understanding the OWASP Top 10 for LLM-powered applications is crucial for identifying and mitigating security risks. These risks range from injection attacks and data leakage to model misuse and broken access control. Awareness of these threats allows organizations to implement targeted countermeasures, such as secure coding practices for generated code, API rate limiting, proper authentication and authorization, and robust monitoring of model behavior. Mapping LLM-specific risks to established security frameworks helps ensure a comprehensive approach to security.

Building trust boundaries and practicing ethical research are essential as we navigate this emerging cybersecurity frontier. Risks include model bias, unintentional harm through unsafe outputs, and misuse of generated information. Countermeasures involve clearly defining trust boundaries between users and models, implementing human-in-the-loop review processes, conducting regular audits of model outputs, and following ethical guidelines for data handling and AI experimentation. Transparency with stakeholders and responsible disclosure practices further strengthen trust.

From my perspective, while these areas cover the most immediate LLM security challenges, organizations should also consider supply chain risks (like vulnerabilities in model weights or third-party APIs), adversarial attacks on training data, and model inversion risks where sensitive information can be inferred from outputs. A proactive, layered approach combining technical controls, governance, and continuous monitoring is critical to safely leverage LLMs in production environments.

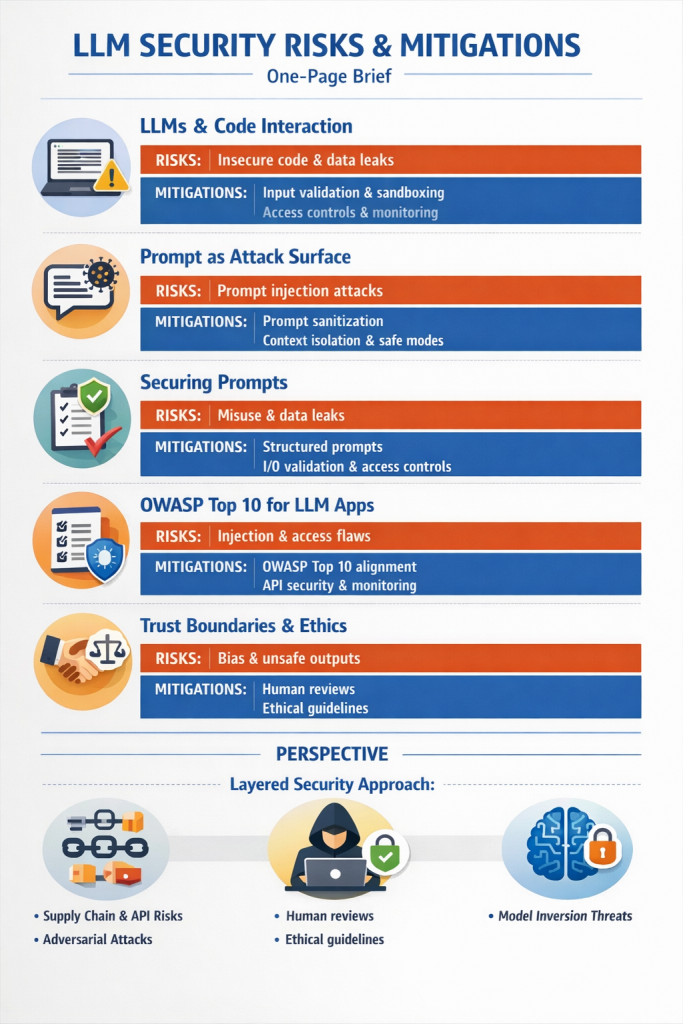

Here’s a concise one-page visual brief version of the LLM security risks and mitigations.

LLM Security Risks & Mitigations: One-Page Brief

1. LLMs and Code Interaction

- Risk: LLMs can generate insecure code, leak secrets, or introduce vulnerabilities.

- Countermeasures:

- Input validation on user prompts

- Sandbox execution for generated code

- Access controls and monitoring outputs

2. Prompt as an Attack Surface

- Risk: Prompt injection can manipulate the model to exfiltrate data or bypass safety mechanisms.

- Countermeasures:

- Prompt sanitization and template enforcement

- Context isolation to limit exposure

- Safe-mode configurations to restrict outputs

3. Securing Prompts

- Risk: Insecure prompt handling can allow misuse, data leaks, or unintended actions.

- Countermeasures:

- Structured prompt templates

- Input/output validation

- Logging and monitoring prompt interactions

- Role-based access control for sensitive prompts

4. OWASP Top 10 for LLM Apps

- Risk: Injection attacks, broken access control, data leakage, and model misuse.

- Countermeasures:

- Map LLM risks to OWASP Top 10 framework

- Secure coding for generated code

- API rate limiting and authentication

- Continuous behavior monitoring

5. Trust Boundaries & Ethical Practices

- Risk: Model bias, unsafe outputs, misuse of information.

- Countermeasures:

- Define trust boundaries between users and LLMs

- Human-in-the-loop review

- Ethical AI guidelines and audits

- Transparency with stakeholders

Perspective

- LLM security requires a layered approach: technical controls, governance, and continuous monitoring.

- Additional risks to consider:

- Supply chain vulnerabilities (third-party models, APIs)

- Adversarial attacks on training data

- Model inversion and data inference attacks

- Organizations must treat prompts as first-class security artifacts alongside traditional code.

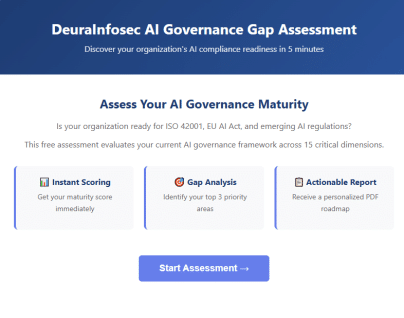

Get Your Free AI Governance Readiness Assessment – Is your organization ready for ISO 42001, EU AI Act, and emerging AI regulations?

AI Governance Gap Assessment tool

- 15 questions

- Instant maturity score

- Detailed PDF report

- Top 3 priority gaps

Click below to open an AI Governance Gap Assessment in your browser or click the image to start assessment.

ai_governance_assessment-v1.5Download

Built by AI governance experts. Used by compliance leaders.

InfoSec services | InfoSec books | Follow our blog | DISC llc is listed on The vCISO Directory | ISO 27k Chat bot | Comprehensive vCISO Services | ISMS Services | AIMS Services | Security Risk Assessment Services | Mergers and Acquisition Security

At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.

- MITRE ATT&CK: Turning Blind Spots into Real-World Cyber Defense

- When AI Hacks Faster Than Humans: The Coming Collapse of Traditional Cybersecurity Value

- SOC 2 Isn’t Enough: Moving Beyond Compliance Theater to Real Risk Management

- When AI Becomes the Attack Surface: Lessons from the McKinsey Lilli Incident

- Why Every Company Needs a CISO (or at Least vCISO-Level Leadership)