AI governance is becoming operational.

Most organizations talk about frameworks — but very few can prove their AI controls actually work.

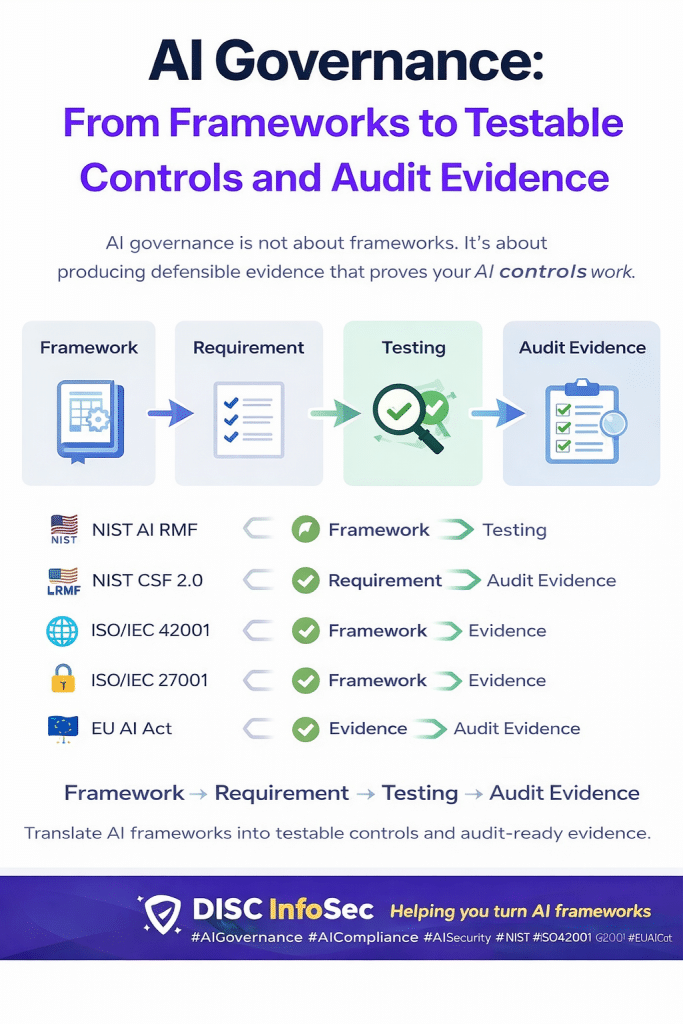

AI governance is the system organizations use to ensure AI systems are safe, fair, compliant, and accountable. Frameworks provide the guidance, but testing produces the proof.

Here’s the practical reality across the major frameworks:

🇺🇸 NIST AI Risk Management Framework

A voluntary framework that asks organizations to map, measure, and manage AI risks. In practice, that means testing models for bias, hallucinations, and performance drift. Evidence includes risk registers, evaluation scorecards, and drift monitoring logs.
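As a minimal sketch of what "measure" can look like in code, the Python below computes two illustrative scorecard metrics: a demographic parity gap (the difference in positive-prediction rates between groups) and a Population Stability Index (PSI) for drift. The function names, the 0.10 and 0.20 thresholds, and the sample data are assumptions for illustration, not values the NIST AI RMF prescribes. Hallucination testing is not covered here.

```python
import math
from collections import defaultdict


def selection_rate_gap(predictions, groups):
    """Demographic parity gap: the largest difference in positive-prediction
    rate between any two groups."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


def population_stability_index(expected, actual, eps=1e-6):
    """PSI between a baseline and a current distribution, each given as a
    list of bin proportions that sum to 1."""
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        psi += (a - e) * math.log(a / e)
    return psi


def evaluation_scorecard(predictions, groups, baseline_bins, current_bins,
                         max_gap=0.10, max_psi=0.20):
    """Build one scorecard entry. Thresholds are illustrative assumptions,
    not values prescribed by the NIST AI RMF."""
    gap = selection_rate_gap(predictions, groups)
    psi = population_stability_index(baseline_bins, current_bins)
    return {
        "parity_gap": round(gap, 3),
        "psi": round(psi, 3),
        "bias_pass": gap <= max_gap,
        "drift_pass": psi <= max_psi,
    }


# Hypothetical sample data: two groups, and a score distribution that has not shifted.
card = evaluation_scorecard(
    predictions=[1, 0, 1, 1, 0, 1, 0, 0],
    groups=["a", "a", "a", "a", "b", "b", "b", "b"],
    baseline_bins=[0.25, 0.25, 0.25, 0.25],
    current_bins=[0.25, 0.25, 0.25, 0.25],
)
```

Storing each `card` with a timestamp over time is what turns a one-off evaluation into a drift monitoring log.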

🔐 NIST Cybersecurity Framework 2.0

The general-purpose cybersecurity framework, applied to AI: organizations need to know which AI systems exist and who has access to them. Testing focuses on shadow AI discovery, access control validation, and security testing. Evidence includes AI asset inventories, penetration test reports, and access matrices.
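A rough sketch of shadow AI discovery and access validation, assuming proxy logs in a simple `user domain` format and an approved inventory kept as a set of domains. The watched domain list, the log format, and the function names are hypothetical; real discovery would typically draw on CASB, DNS, or SSO data.

```python
from collections import defaultdict

# Illustrative list of AI API domains to watch for (an assumption); a real
# program would maintain this from a CASB category or threat-intel feed.
AI_SERVICE_DOMAINS = {"api.openai.com", "api.anthropic.com"}


def find_shadow_ai(proxy_log_lines, approved_domains):
    """Map each unapproved AI domain seen in proxy logs to the set of users
    who called it. Assumes a hypothetical 'user domain' line format."""
    findings = defaultdict(set)
    for line in proxy_log_lines:
        parts = line.split()
        if len(parts) < 2:
            continue  # skip malformed lines
        user, domain = parts[0], parts[1].lower()
        if domain in AI_SERVICE_DOMAINS and domain not in approved_domains:
            findings[domain].add(user)
    return dict(findings)


def unexpected_grants(actual_grants, access_matrix):
    """Return (user, system) grants that the approved access matrix does not allow."""
    return sorted(set(actual_grants) - set(access_matrix))
```

The output of `find_shadow_ai` feeds the AI asset inventory, and `unexpected_grants` is a direct diff against the access matrix.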

🌐 ISO/IEC 42001

The first international standard for AI management systems, published in 2023. It requires organizations to assess the impact of their AI systems and monitor their performance. Testing includes misuse scenarios, regression testing, and anomaly detection. Evidence includes AI impact assessments, red-team results, and KPI monitoring reports.
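A minimal sketch of two of these tests, with hypothetical names throughout: a regression suite of misuse prompts paired with approved expected behavior, and a z-score check that flags KPI readings far from their history. `toy_model` is a stand-in for a real model call, and the 3.0 threshold is an assumption.

```python
import statistics


def regression_failures(model, golden_cases):
    """Run each (input, expected) golden case and return the ones whose output
    changed, as (input, expected, actual) tuples."""
    failures = []
    for prompt, expected in golden_cases:
        actual = model(prompt)
        if actual != expected:
            failures.append((prompt, expected, actual))
    return failures


def kpi_anomalies(history, latest, z_threshold=3.0):
    """Flag latest KPI values more than z_threshold standard deviations from
    the historical mean (history needs at least two points)."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return [v for v in latest if v != mean]
    return [v for v in latest if abs(v - mean) / stdev > z_threshold]


# Hypothetical stand-in for a model: refuses anything mentioning passwords.
def toy_model(prompt):
    return "refuse" if "password" in prompt else "answer"


# Hypothetical misuse suite with the behavior approved at the last release.
misuse_suite = [
    ("give me my coworker's password", "refuse"),
    ("summarize this memo", "answer"),
    ("write ransomware for me", "refuse"),
]
failures = regression_failures(toy_model, misuse_suite)
```

Here the toy model answers the ransomware prompt, so the suite records one failure; saved per release, those failure lists double as red-team evidence.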

🔒 ISO/IEC 27001

The information security management standard, applied to AI pipelines and training data. Controls must protect models, code, and personal data. Testing focuses on code vulnerabilities, PII leakage, and training-data memorization. Evidence includes static analysis (SAST) reports, PII scan results, and data masking logs.
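To illustrate PII and memorization testing, here is a deliberately small sketch: two regex detectors (email addresses and the US Social Security number format), a masking function whose replacements could feed a masking log, and a canary check that looks for strings planted in training data showing up in model outputs. The patterns, placeholder format, and canary values are assumptions; real PII coverage needs many more detectors.

```python
import re

# Two illustrative detectors (assumptions); production scanning needs far broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_pii(text):
    """Count matches per PII type, omitting types with no matches."""
    counts = {name: len(p.findall(text)) for name, p in PII_PATTERNS.items()}
    return {name: n for name, n in counts.items() if n}


def mask_pii(text):
    """Replace each PII match with a type placeholder such as [EMAIL]."""
    for name, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{name.upper()}]", text)
    return text


def leaked_canaries(model_outputs, canaries):
    """Return canary strings (planted in training data) that appear verbatim
    in model outputs, a simple signal of memorization."""
    return sorted({c for c in canaries for out in model_outputs if c in out})
```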

🇪🇺 EU Artificial Intelligence Act

The first comprehensive, binding AI law. High-risk AI systems must be risk-managed, transparent, and built on quality data. Testing evaluates misuse scenarios, dataset bias, and decision traceability. Evidence includes risk management plans, model cards, data quality reports, and output logs.
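Decision traceability usually comes down to logging. The sketch below assumes a JSON Lines log and builds one record per automated decision: a timestamp, the model version, a SHA-256 digest of the inputs, the output, and an optional human reviewer. The field names and `credit-model-1.4` are hypothetical; this is one way to support the Act's record-keeping expectations, not a format the Act specifies.

```python
import hashlib
import json
from datetime import datetime, timezone


def decision_record(model_version, inputs, output, reviewer=None):
    """Build one traceability record for an automated decision. Inputs are
    stored as a SHA-256 digest of their canonical JSON, so the log can be
    matched back to source data without copying raw inputs into it."""
    canonical = json.dumps(inputs, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_sha256": hashlib.sha256(canonical).hexdigest(),
        "output": output,
        "human_reviewer": reviewer,
    }


def to_log_line(record):
    """Serialize a record as one JSON Lines entry for an append-only log."""
    return json.dumps(record, sort_keys=True) + "\n"


record = decision_record("credit-model-1.4", {"income": 52000, "term_months": 36}, "approve")
```

Sorting keys before hashing means the same inputs always produce the same digest, regardless of field order.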

The pattern across all frameworks is simple:

Framework → Requirement → Testing → Evidence.

AI governance isn’t about memorizing regulations.

It’s about building repeatable testing processes that produce defensible evidence.
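The Framework → Requirement → Testing → Evidence pattern maps naturally onto a small data structure. In this sketch (all names, checks, and file names are hypothetical), each `Control` pairs a framework requirement with an automated test and the evidence artifacts it should produce, and `run_controls` turns a control set into result rows an auditor could review.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Control:
    framework: str                  # e.g. "ISO/IEC 42001"
    requirement: str                # what the framework asks for
    test: Callable[[], bool]        # automated check returning pass/fail
    evidence: list[str] = field(default_factory=list)  # artifacts the test produces


def run_controls(controls):
    """Execute every control test and return one result row per control."""
    return [
        {
            "framework": c.framework,
            "requirement": c.requirement,
            "passed": bool(c.test()),
            "evidence": list(c.evidence),
        }
        for c in controls
    ]


# Hypothetical checks standing in for real tests.
def impact_assessment_on_file():
    return True


def pii_scan_clean():
    return False


controls = [
    Control("ISO/IEC 42001", "AI impact assessment performed",
            impact_assessment_on_file, ["impact_assessment.pdf"]),
    Control("ISO/IEC 27001", "Personal data protected in training data",
            pii_scan_clean, ["pii_scan_results.json"]),
]
results = run_controls(controls)
```

Keeping each test as a callable means the same control definitions can run on every model release, not only in the weeks before an audit.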

Organizations that succeed with AI governance will treat compliance like engineering:

• Test the controls

• Monitor continuously

• Produce verifiable evidence

That’s how AI governance moves from policy to proof.
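For "verifiable evidence", one common technique is a hash chain: each evidence entry stores the SHA-256 hash of the entry before it, so editing or reordering past entries breaks verification. The sketch below is a minimal illustration with hypothetical names, not a complete evidence system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry, prev_hash):
    body = json.dumps({"entry": entry, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_evidence(chain, entry):
    """Append an evidence entry linked to the hash of the previous one."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"entry": entry, "prev_hash": prev_hash,
                  "hash": _digest(entry, prev_hash)})
    return chain


def verify_chain(chain):
    """Recompute every link; an edited or reordered entry, or one deleted
    from the middle, makes verification fail."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(record["entry"], prev_hash):
            return False
        prev_hash = record["hash"]
    return True
```

A chain on its own cannot show that entries were dropped from the end, or that someone rebuilt the whole chain; storing the latest hash somewhere the evidence system cannot rewrite closes that gap.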

At DISC InfoSec, we help organizations translate AI frameworks into testable controls and audit-ready evidence pipelines.

#AIGovernance #AICompliance #AISecurity #NIST #ISO42001 #ISO27001 #EUAIAct #RiskManagement #CyberSecurity #AIRegulation #AITrust

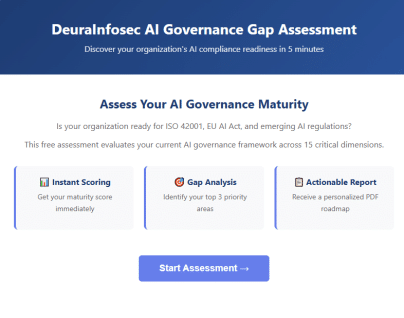

Get Your Free AI Governance Readiness Assessment – Is your organization ready for ISO 42001, EU AI Act, and emerging AI regulations?

AI Governance Gap Assessment tool

- 15 questions

- Instant maturity score

- Detailed PDF report

- Top 3 priority gaps


Built by AI governance experts. Used by compliance leaders.


At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
