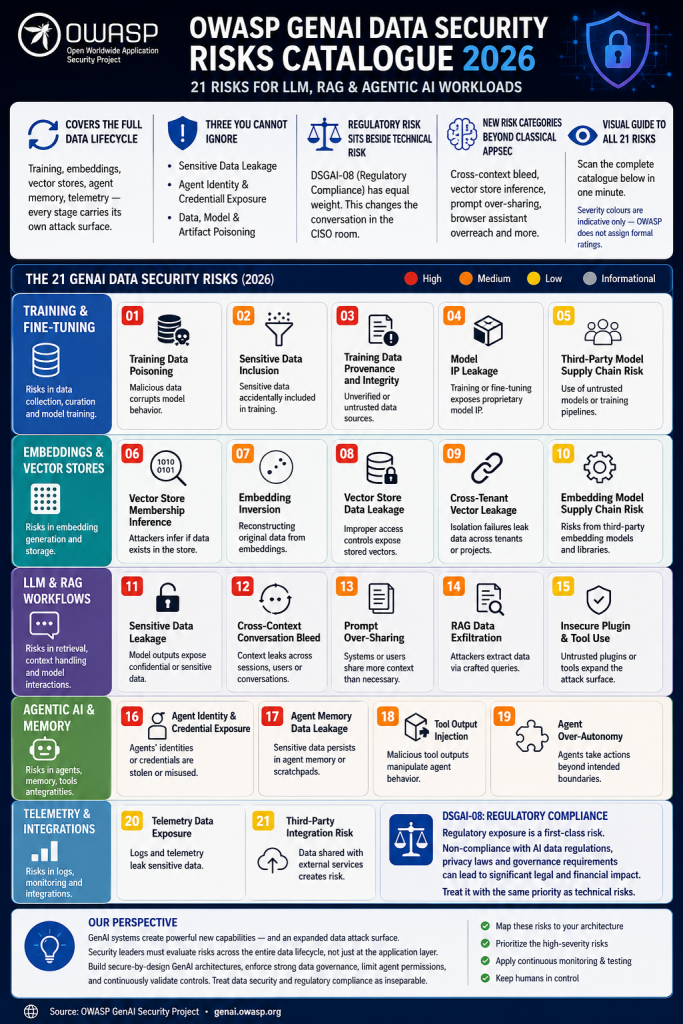

The newly released 2026 OWASP catalogue of GenAI data security risks highlights how rapidly the security landscape is evolving for organizations that deploy LLMs, retrieval-augmented generation (RAG) pipelines, and agentic AI systems. Unlike traditional application security frameworks, the catalogue focuses specifically on the ways AI systems process, store, retrieve, and expose data across increasingly autonomous workflows. Its release signals that AI security is no longer a niche concern but a central governance issue for enterprise technology leaders.

One of the most important themes in the catalogue is that AI risk spans the entire data lifecycle. Security exposure is not limited to the model itself; vulnerabilities can emerge during training, embedding generation, vector storage, inference, telemetry collection, and long-term memory retention. This broader attack surface means organizations must evaluate security controls across every stage of AI operations rather than relying on conventional perimeter-based protections.

OWASP emphasizes several high-priority risks that security leaders should treat as foundational concerns during architecture reviews. Sensitive Data Leakage remains one of the most immediate threats, especially when models unintentionally reveal confidential information through prompts, retrieval systems, logs, or generated outputs. Because GenAI systems often aggregate large volumes of internal and external data, the likelihood of accidental disclosure increases significantly without strong governance controls.
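As a concrete illustration of one such governance control, here is a minimal Python sketch of pattern-based redaction applied once, before a model response is either returned to the user or written to a log, so both paths see the same sanitized text. The patterns and the `redact` and `emit` helpers are hypothetical examples, not something the OWASP catalogue prescribes, and regular expressions alone will miss many forms of sensitive data.

```python
import re
from typing import Dict, List, Pattern

# Hypothetical patterns for illustration only; a real deployment would tune
# these to its own data classes (and likely use a dedicated DLP service).
REDACTION_PATTERNS: Dict[str, Pattern[str]] = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
}


def redact(text: str) -> str:
    """Replace every match of a known sensitive pattern with a typed placeholder."""
    for label, pattern in REDACTION_PATTERNS.items():
        text = pattern.sub(f"[REDACTED_{label}]", text)
    return text


def emit(model_output: str, log: List[str]) -> str:
    """Redact once, then use the same sanitized text for the user and the log."""
    safe = redact(model_output)
    log.append(safe)
    return safe


if __name__ == "__main__":
    audit_log: List[str] = []
    reply = emit("Contact jane.doe@example.com, SSN 123-45-6789.", audit_log)
    print(reply)  # Contact [REDACTED_EMAIL], SSN [REDACTED_US_SSN].
```

Redacting at a single choke point is the design choice here: if responses and logs were sanitized separately, one path could easily drift out of sync with the other.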

Another major concern is Agent Identity and Credential Exposure. Agentic AI systems increasingly interact with APIs, enterprise applications, browsers, and cloud environments using privileged credentials. If these identities are compromised, attackers may gain broad access to systems and sensitive resources. This risk becomes especially critical as organizations adopt autonomous agents capable of performing multi-step actions with limited human oversight.
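One widely used way to limit the blast radius of a compromised agent identity is to give each task a narrowly scoped, short-lived credential and to check it before every tool call. The sketch below assumes a hypothetical in-process `AgentCredential` type and illustrative scope names such as `crm:read`. It is not any vendor's API, and a production system would obtain credentials from an identity provider rather than minting them locally.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import FrozenSet, Optional, Set


@dataclass(frozen=True)
class AgentCredential:
    # Hypothetical credential type for illustration; not a real vendor API.
    agent_id: str
    scopes: FrozenSet[str]
    expires_at: float  # Unix timestamp after which the credential is invalid
    # Random bearer value, excluded from repr so it is not leaked into logs.
    token: str = field(default_factory=lambda: secrets.token_urlsafe(32), repr=False)


def issue(agent_id: str, scopes: Set[str], ttl_seconds: int = 300) -> AgentCredential:
    """Mint a credential limited to the listed scopes and a short lifetime."""
    return AgentCredential(agent_id, frozenset(scopes), time.time() + ttl_seconds)


def authorize(cred: AgentCredential, required_scope: str, now: Optional[float] = None) -> bool:
    """Allow a tool call only if the credential is unexpired and holds the scope."""
    current = time.time() if now is None else now
    return current < cred.expires_at and required_scope in cred.scopes


if __name__ == "__main__":
    cred = issue("invoice-agent", {"crm:read"})
    print(authorize(cred, "crm:read"))   # True: scope granted, not expired
    print(authorize(cred, "crm:write"))  # False: scope never granted
```

If such a credential leaks, an attacker holds only the granted scopes, and only until the short expiry passes, rather than the broad standing access the paragraph above warns about.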

The catalogue also highlights Data, Model, and Artifact Poisoning as a core threat category. Malicious actors may manipulate training datasets, embeddings, vector databases, prompts, or model artifacts to influence AI behavior or corrupt outputs. Because AI systems rely heavily on probabilistic reasoning and external context retrieval, poisoning attacks can be subtle, persistent, and difficult to detect through traditional security monitoring approaches.
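Conventional integrity checks still cover one narrow slice of artifact poisoning: tampering with model weights, embedding files, or other artifacts after they have been reviewed. The sketch below assumes a hypothetical manifest that maps artifact file names to SHA-256 digests recorded at review time. It detects later modification only and offers no protection against data that was already poisoned before the manifest was pinned.

```python
import hashlib
import hmac
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large model weights need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifacts(manifest: Dict[str, str], root: Path) -> List[str]:
    """Return names of artifacts that are missing or whose hash differs from the manifest."""
    failures: List[str] = []
    for name, expected in manifest.items():
        path = root / name
        if not path.is_file():
            failures.append(name)
        # Constant-time comparison of the hex digests.
        elif not hmac.compare_digest(sha256_of(path), expected):
            failures.append(name)
    return failures
```

A loader would refuse to start whenever `verify_artifacts` returns a non-empty list. The manifest itself must be stored and signed separately from the artifacts; otherwise an attacker who can modify the files can also update the expected hashes.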

A notable shift in the OWASP framework is that it treats regulatory exposure as a risk on par with technical vulnerabilities. The inclusion of DSGAI 08, the catalogue's regulatory and compliance entry, reflects growing recognition that compliance failures, privacy violations, and governance gaps can create business risk comparable to direct cyberattacks. This reshapes executive and board-level security discussions, where AI governance is increasingly tied to legal accountability, auditability, and reputational protection.

The report also introduces several threat categories that have little precedent in classical application security. Risks such as cross-context conversation bleed, vector store membership inference, prompt over-sharing, and browser assistant overreach illustrate how AI systems create entirely new modes of data exposure. These are not simply extensions of existing AppSec problems; they emerge from the contextual reasoning, memory persistence, and autonomous behavior that define modern AI architectures.

Overall, the OWASP catalogue demonstrates that GenAI security requires a dedicated discipline rather than incremental updates to traditional cybersecurity programs. Organizations deploying AI at scale must rethink identity management, data governance, monitoring, retrieval security, and compliance frameworks together. The report serves as both a warning and a roadmap for enterprises integrating AI into critical business operations.

From my perspective, the most important takeaway is that AI security is shifting from a “model risk” conversation to a “systemic operational risk” conversation. The danger no longer comes only from what the model knows, but from how interconnected AI systems interact with data, memory, tools, users, and external environments. Many companies are still treating GenAI deployments like standard SaaS integrations, when in reality they behave more like dynamic decision-making ecosystems. The organizations that succeed will be the ones that build AI governance and security into architecture decisions from the beginning rather than attempting to retrofit controls after deployment.

Source: OWASP GenAI Security Project · genai.owasp.org

DISC InfoSec is an active ISO 42001 implementer and PECB Authorized Training Partner specializing in AI governance for B2B SaaS and financial services organizations.