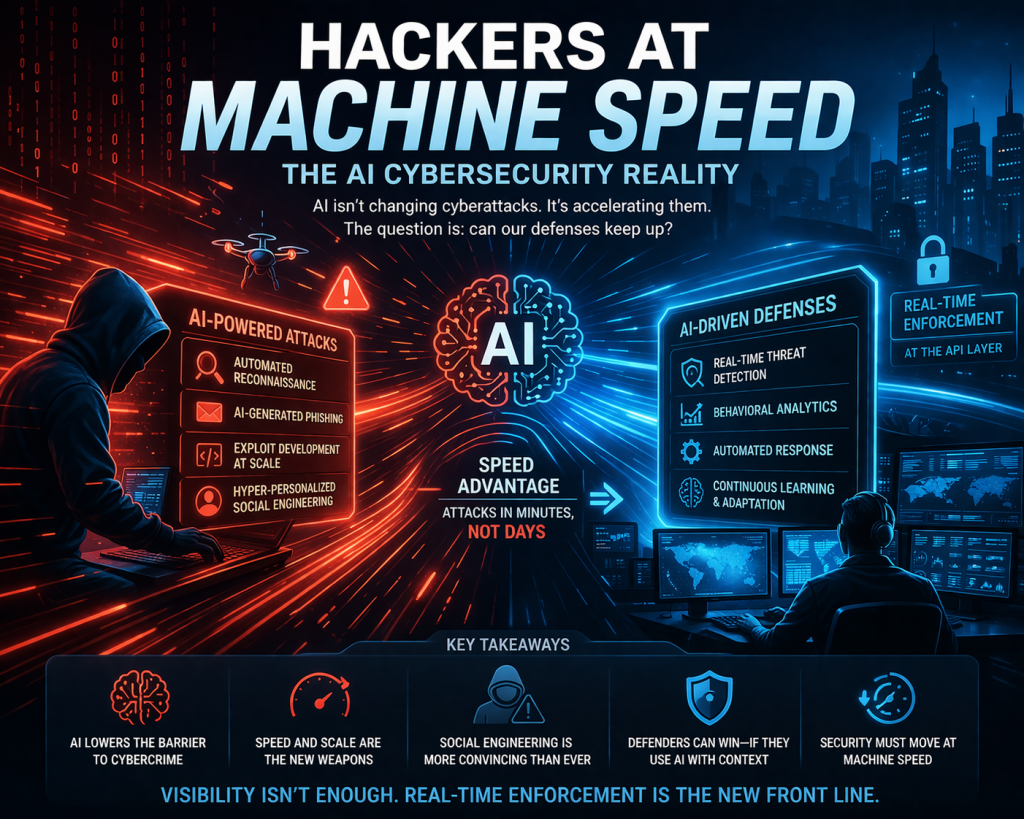

A recent New York Times report highlights how artificial intelligence is rapidly reshaping the cybersecurity landscape, particularly in the hands of hackers. Rather than introducing entirely new attack techniques, AI is acting as a force multiplier, enabling cybercriminals to execute existing methods faster, cheaper, and at a much larger scale.

One of the key themes is the democratization of cybercrime. AI tools are lowering the barrier to entry, allowing less-skilled attackers to perform sophisticated operations that previously required deep technical expertise. Tasks like writing malware, crafting phishing campaigns, and identifying vulnerabilities can now be automated, significantly expanding the pool of potential attackers.

The article also emphasizes the speed advantage AI provides. Cyberattacks that once took days or weeks can now be executed in minutes or hours. AI accelerates reconnaissance, automates exploit development, and enables rapid iteration, making it difficult for traditional security teams to keep up with the pace of modern threats.

Another important shift is the rise of AI-assisted social engineering. Hackers are using AI to generate highly convincing phishing messages, impersonations, and even real-time conversational attacks. This increases the success rate of attacks by making them more personalized, scalable, and harder to detect.

The report also points out that AI-driven attacks are not necessarily more sophisticated—they are simply more efficient and scalable. Attackers are reusing known techniques but executing them with greater precision and automation. This creates a scenario where organizations face a higher volume of attacks, each delivered with improved consistency and timing.

At the same time, defenders are not standing still. The article notes that AI can also be used defensively to analyze large volumes of data, detect anomalies, and respond to threats faster than humans alone. However, the advantage lies with organizations that can effectively apply AI with context and integrate it into their security operations.

Finally, the broader implication is that AI is accelerating an ongoing cybersecurity arms race. It is exposing weaknesses in traditional security models—particularly those reliant on manual processes, static controls, and delayed response mechanisms. Organizations that fail to adapt risk being overwhelmed by the speed and scale of AI-enabled threats.

Perspective:

The most important takeaway is that AI is not changing what attacks look like—it’s changing how fast and how often they happen. This reinforces a critical point: cybersecurity can no longer rely on detection and response alone. If attacks operate at machine speed, then security controls must also operate at machine speed.

This is where the conversation shifts to real-time enforcement, especially at the API layer. AI systems, and increasingly enterprise systems overall, are API-driven. That means the only effective control point is inline, real-time decisioning: each request is evaluated and allowed or blocked before it reaches the model or service, not flagged for review after the fact.
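To make inline, real-time decisioning concrete, here is a minimal Python sketch of a policy check that sits in the request path of an AI API and returns allow or deny before the call is forwarded. Everything in it is hypothetical: the `InlineEnforcer` class, the approved-model list, the rate limit, and the credential pattern are illustrative assumptions, not a DISC InfoSec product or any particular gateway's API. Each decision is also appended to an audit log, so the enforcement leaves evidence behind.

```python
import re
import time
from collections import defaultdict, deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List, Optional, Tuple

# Hypothetical policy settings, chosen only for illustration.
ALLOWED_MODELS = {"internal-llm-v1"}
MAX_REQUESTS_PER_WINDOW = 5
WINDOW_SECONDS = 60.0
# Shape of an AWS access key ID; stands in for any "sensitive data" rule.
CREDENTIAL_PATTERN = re.compile(r"AKIA[0-9A-Z]{16}")


@dataclass
class Decision:
    allowed: bool
    reason: str


@dataclass
class InlineEnforcer:
    """Decides allow/deny for each API call before it is forwarded upstream."""

    # Per-client timestamps of recently allowed requests (sliding window).
    history: Dict[str, Deque[float]] = field(default_factory=lambda: defaultdict(deque))
    # (timestamp, client, model, allowed, reason) for every decision made.
    audit_log: List[Tuple[float, str, str, bool, str]] = field(default_factory=list)

    def check(self, client_id: str, model: str, prompt: str,
              now: Optional[float] = None) -> Decision:
        now = time.monotonic() if now is None else now
        decision = self._evaluate(client_id, model, prompt, now)
        # Record every decision, allowed or not, as evidence of enforcement.
        self.audit_log.append((now, client_id, model, decision.allowed, decision.reason))
        return decision

    def _evaluate(self, client_id: str, model: str, prompt: str, now: float) -> Decision:
        if model not in ALLOWED_MODELS:
            return Decision(False, "model not approved")
        if CREDENTIAL_PATTERN.search(prompt):
            return Decision(False, "prompt contains credential-like string")
        window = self.history[client_id]
        # Forget requests that have aged out of the sliding window.
        while window and now - window[0] >= WINDOW_SECONDS:
            window.popleft()
        if len(window) >= MAX_REQUESTS_PER_WINDOW:
            return Decision(False, "rate limit exceeded")
        window.append(now)
        return Decision(True, "allowed")


if __name__ == "__main__":
    enforcer = InlineEnforcer()
    for model, prompt in [
        ("internal-llm-v1", "Summarize this support ticket"),
        ("public-llm", "Summarize this support ticket"),
        ("internal-llm-v1", "Debug this: AKIAABCDEFGHIJKLMNOP"),
    ]:
        d = enforcer.check("svc-billing", model, prompt)
        print(d.allowed, d.reason)
```

Run as a script, the three calls print `True allowed`, `False model not approved`, and `False prompt contains credential-like string`. Because `check` answers synchronously, a gateway would forward the request only when `allowed` is true. The rate limit counts only allowed requests, and the state lives in memory; a real deployment would need shared state across gateway instances, which this sketch does not attempt.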

In practical terms, the future of cybersecurity will be defined by organizations that can move from visibility to enforcement, from alerts to action, and from reactive defense to proactive control. AI didn’t break security—it simply exposed where it was already too slow.

Bottom line:

If your AI governance strategy cannot demonstrate continuous monitoring, control, and enforcement, it is unlikely to stand up to audit—or real-world threats.

That’s why AI governance enforcement is not just a feature—it’s the foundation for making AI governance actually work at scale.

Ready to Operationalize AI Governance?

If you’re serious about moving from **AI governance theory → real enforcement**, DISC InfoSec can help you build the control layer your AI systems need.

Most organizations have AI governance documents — but auditors now want proof of enforcement.

Policies alone don’t reduce AI risk. Real‑time monitoring, control, and enforcement do.

If your AI governance strategy can’t demonstrate continuous oversight, it won’t stand up to audit or real‑world threats.

DISC InfoSec helps organizations operationalize AI governance with integrated frameworks, runtime controls, and proven certification success.

Move from AI governance theory to enforcement.

Read the full post below: Is Your AI Governance Strategy Audit‑Ready — or Just Documented?

Schedule a consultation or drop a note below: info@deurainfosec.com


Is your AI strategy truly audit-ready today?

AI governance is no longer optional. Frameworks such as the ISO/IEC 42001 AI management system standard and regulations such as the EU AI Act are rapidly reshaping compliance expectations for organizations that use AI.

DISC InfoSec brings deep expertise across AI, cybersecurity, and regulatory compliance to help you build trust, reduce risk, and stay ahead of evolving mandates—with a proven track record of success.

Ready to lead with confidence? Let’s start the conversation.

At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
