Why Cybersecurity Is Critical in the Age of AI

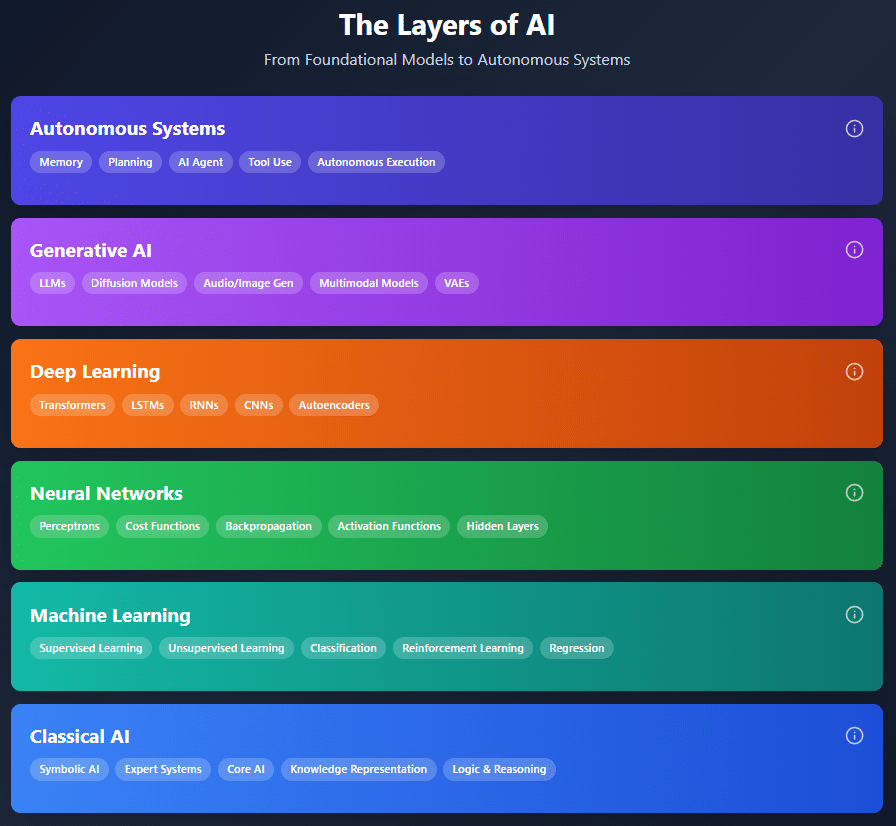

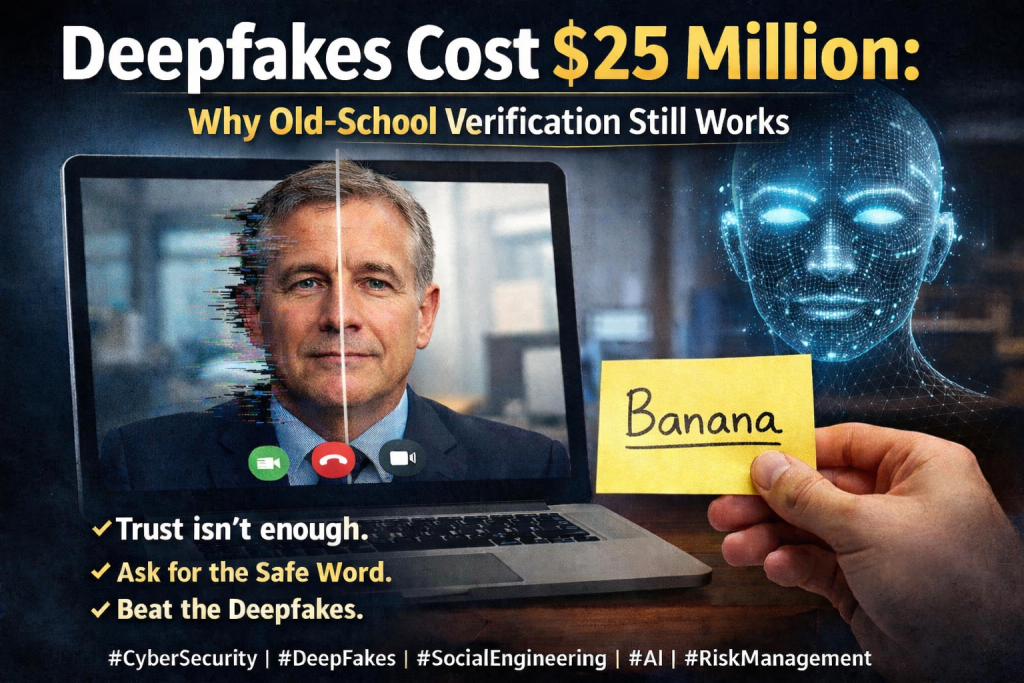

Cybersecurity matters more than ever because artificial intelligence changes both how attacks happen and how defenses must work. AI amplifies scale, speed, and sophistication: attackers can automate phishing, probe systems, and evolve malware far faster than human teams can respond on their own. At the same time, AI can help defenders sift through massive datasets, spot subtle patterns, and automate routine work to reduce alert fatigue. That dual nature makes cybersecurity foundational to protecting organizations’ data, systems, and operations. Without strong security, AI becomes another vulnerability rather than a defensive advantage.

Greater Executive Engagement Meets Growing Workload Pressure

Security teams are now more involved in strategic business discussions than in prior years, particularly around resilience, risk tolerance, and continuity. This elevated visibility brings more board-level support and scrutiny, but it also increases pressure to deliver measurable outcomes such as compliance posture, incident-handling metrics, and vulnerability coverage. Although AI is broadly used, many routine tasks such as evidence collection and ticket coordination remain manual, stretching teams thin and contributing to fatigue.

AI Now Powers Everyday Security Tasks—With New Risks

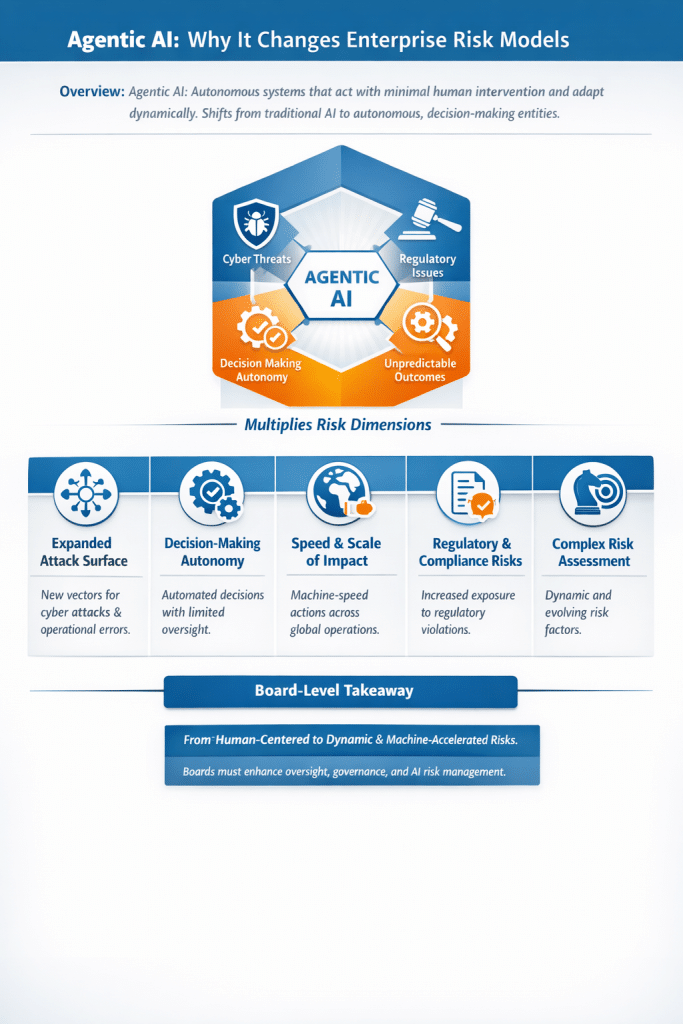

AI isn’t experimental anymore—it’s part of the everyday security toolkit for functions such as threat intelligence, detection, identity monitoring, phishing analysis, ticket triage, and compliance reporting. But as AI becomes integrated into core operations, it brings new attack surfaces and risks. Data leakage through AI copilots, unmanaged internal AI tools, and prompt manipulation are emerging concerns that intersect with sensitive data and access controls. These issues mean security teams must govern how AI is used as much as where it is used.
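One way to picture a control against data leakage through AI copilots is a redaction step that runs before any text leaves the organization. The sketch below is only an illustration: the `redact` function and the three patterns are hypothetical, chosen for this example, and a real deployment would more likely rely on a vetted data loss prevention tool than on a handful of regular expressions.

```python
import re
from typing import Dict, Tuple

# Hypothetical patterns for illustration only; they are far from exhaustive.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "AWS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace sensitive-looking substrings and count what was removed."""
    counts: Dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        # subn returns the new string and the number of substitutions made.
        text, n = pattern.subn(f"[{label}_REDACTED]", text)
        if n:
            counts[label] = n
    return text, counts


if __name__ == "__main__":
    prompt = "Summarize the ticket from alice@example.com about key AKIAABCDEFGHIJKLMNOP"
    safe_prompt, findings = redact(prompt)
    print(safe_prompt)
    print(findings)
```

The returned counts could feed an audit trail, so that the team sees how often sensitive data would otherwise have reached an AI tool.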

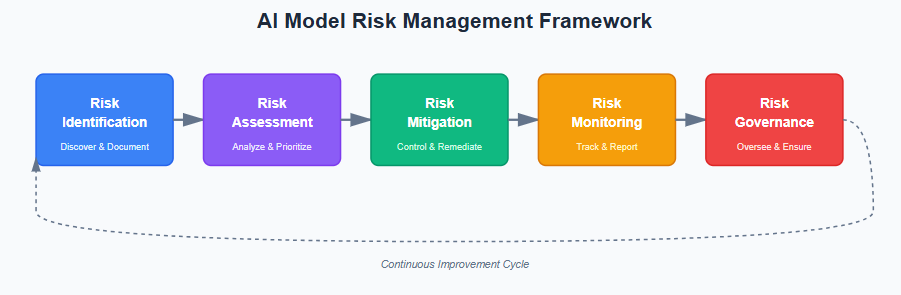

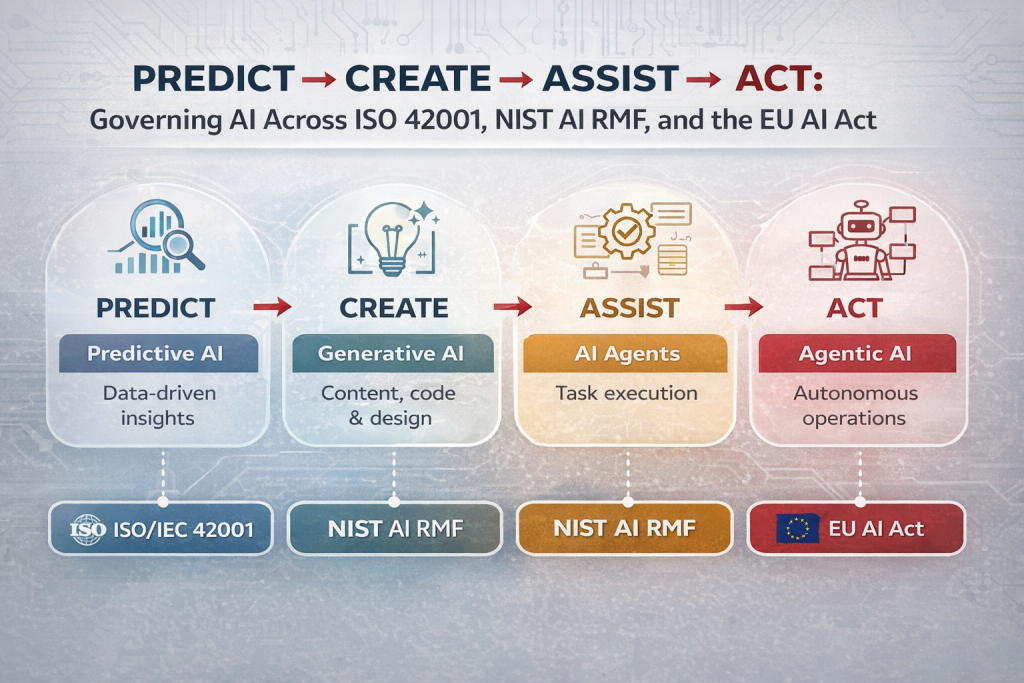

AI Governance Has Become an Operational Imperative

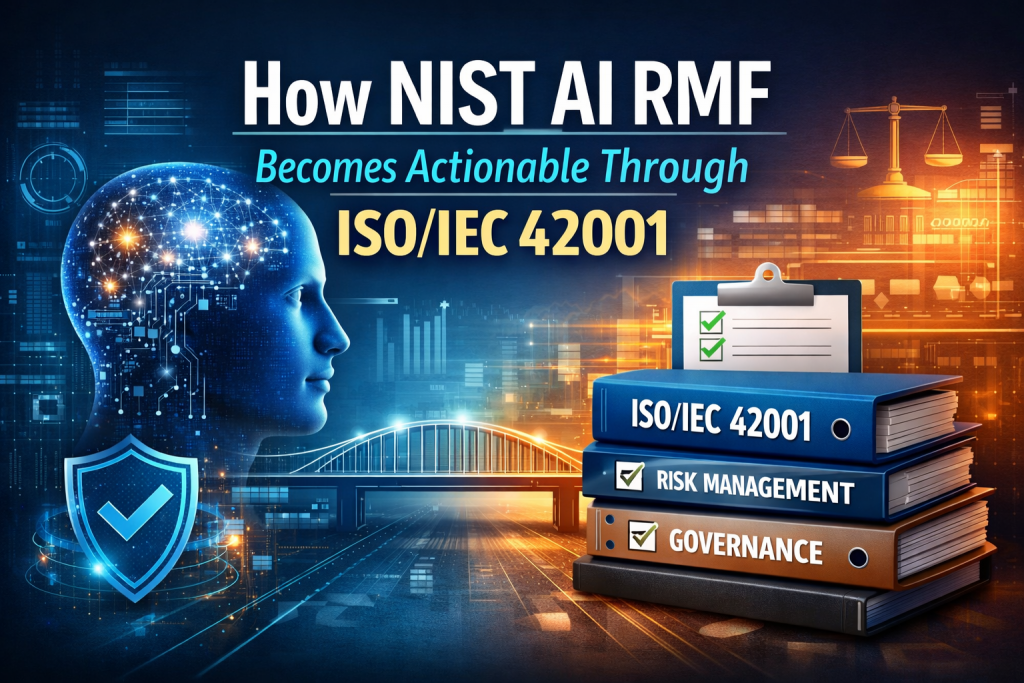

Organizations are increasingly formalizing AI policies and AI governance frameworks. Teams with clear rules and review processes feel more confident that AI outputs are safe and auditable before they influence decisions. Governance now covers data handling, access management, lifecycle oversight of models, and ensuring automation respects compliance obligations. These governance structures aren’t optional—they help balance innovation with risk control and affect how quickly automation can be adopted.

Manual Processes Still Cause Burnout and Risk

Even as AI tools are adopted, many operational workflows remain manual. Frequent context switching between tools and repetitive tasks increase cognitive load and make it harder to retain security practitioners. Manual work also introduces operational risk: human error slows response times and limits scale during incidents. Many teams now see automation and connected workflows as essential for reducing manual burden, improving morale, and stabilizing operations.

Connected, AI-Driven Workflows Are Gaining Traction

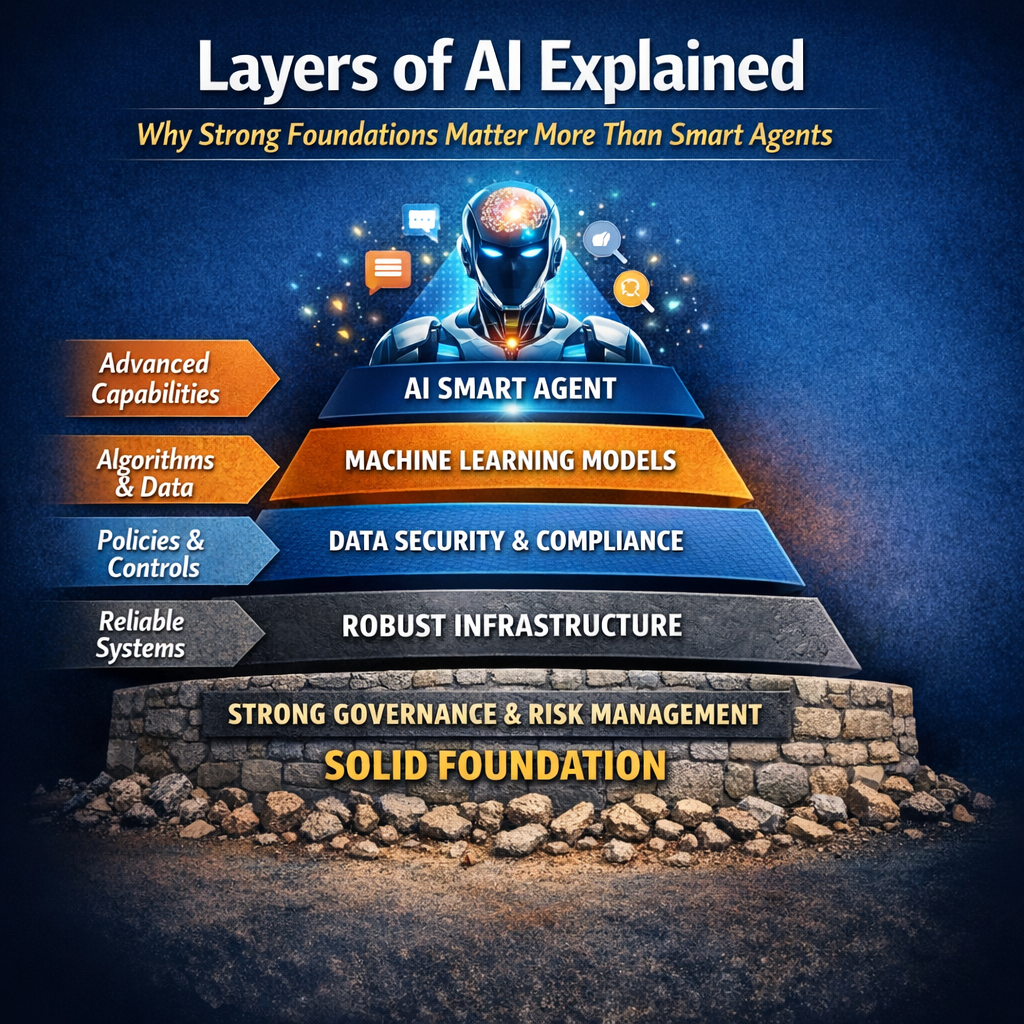

A growing number of teams are exploring platforms that blend automation, AI, and human oversight into seamless workflows. These “intelligent workflow” approaches reduce manual handoffs, speed response times, and improve data accuracy and tracking. Interoperability—standards and APIs that allow AI systems to interact reliably with tools—is becoming more important as organizations seek to embed AI deeply yet safely into core security processes. Teams recognize that AI alone isn’t enough—it must be integrated with governance and strong workflow design to deliver real impact.
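The combination of automation, AI, and human oversight described above can be sketched as a simple routing rule: low-risk, high-confidence actions run automatically, while anything destructive or uncertain waits for a person. Everything in this sketch is an assumption made for illustration, including the `ProposedAction` and `Workflow` names, the fields, and the 0.9 threshold; it does not describe any particular platform.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ProposedAction:
    name: str           # e.g. "isolate_host" (hypothetical action name)
    target: str         # asset or ticket the action applies to
    confidence: float   # AI-reported confidence, 0.0 to 1.0
    destructive: bool   # True if the action is disruptive or hard to undo


@dataclass
class Workflow:
    auto_threshold: float = 0.9  # assumed cutoff for unattended execution
    audit_log: List[str] = field(default_factory=list)
    pending_review: List[ProposedAction] = field(default_factory=list)

    def route(self, action: ProposedAction) -> str:
        """Decide whether an AI-proposed action runs automatically or waits for a human."""
        if action.destructive or action.confidence < self.auto_threshold:
            self.pending_review.append(action)
            decision = "needs_human_review"
        else:
            decision = "auto_execute"
        # Every decision is recorded so the workflow stays auditable.
        self.audit_log.append(f"{action.name} on {action.target}: {decision}")
        return decision


if __name__ == "__main__":
    wf = Workflow()
    print(wf.route(ProposedAction("enrich_alert", "ticket-1", 0.95, False)))
    print(wf.route(ProposedAction("isolate_host", "srv-1", 0.99, True)))
    print(wf.audit_log)
```

Keeping the audit log inside the routing step means governance evidence is produced as a side effect of normal operation rather than collected manually afterward.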

My Perspective: The State of Cybersecurity in the AI Era

Cybersecurity in 2026 stands at a crossroads between risk acceleration and defensive transformation. AI has moved from exploration into everyday operations—but so too have AI-related threats and vulnerabilities. Many organizations are still catching up: only a minority have dedicated AI security protections or teams, and governance remains immature in many environments.

The net effect is that AI amplifies both sides of the equation: attackers can probe and exploit systems at machine speed, while defenders can automate detection and response at volumes humans could never manage alone. The organizations that succeed will be those that treat AI security not as a feature but as an integral part of their cybersecurity strategy, coupling strong AI governance, human-in-the-loop oversight, and well-designed workflows with intelligent automation. Cybersecurity isn’t less important in the age of AI. It is foundational to making AI safe, reliable, and trustworthy.

In a recent interview and accompanying essay, Anthropic CEO Dario Amodei warns that humanity is not prepared for the rapid evolution of artificial intelligence and the profound disruptions it could bring. He argues that existing social, political, and economic systems may lag behind the pace of AI advancements, creating a dangerous mismatch between capability and governance.


At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
