🌐 “Does ISO/IEC 42001 Risk Slowing Down AI Innovation, or Is It the Foundation for Responsible Operations?”

🔍 Overview

The post explores whether ISO/IEC 42001, the standard for Artificial Intelligence Management Systems (AIMS) published in 2023, acts as a barrier to AI innovation or serves as a framework for responsible and sustainable AI deployment.

🚀 AI Opportunities

ISO/IEC 42001 is positioned as a catalyst for AI growth:

- It helps organizations understand their internal and external environments to seize AI opportunities.

- It establishes governance, strategy, and structures that enable responsible AI adoption.

- It prepares organizations to capitalize on future AI advancements.

🧭 AI Adoption Roadmap

A phased roadmap is suggested for strategic AI integration:

- Starts with understanding customer needs through marketing analytics tools (e.g., Hootsuite, Mixpanel).

- Progresses to advanced data analysis and optimization platforms (e.g., Gurobi, IBM CPLEX, Power BI).

- Encourages long-term planning despite the fast-evolving AI landscape.

🛡️ AI Strategic Adoption

Organizations can adopt AI through various strategies:

- Defensive: Mitigate external AI risks and match competitors.

- Adaptive: Modify operations to handle AI-related risks.

- Offensive: Develop proprietary AI solutions to gain a competitive edge.

⚠️ AI Risks and Incidents

ISO/IEC 42001 helps manage risks such as:

- Faulty decisions and operational breakdowns.

- Legal and ethical violations.

- Data privacy breaches and security compromises.

🔐 Security Threats Unique to AI

The presentation highlights specific AI vulnerabilities:

- Data Poisoning: Malicious data corrupts training sets.

- Model Stealing: Unauthorized replication of AI models.

- Model Inversion: Inferring sensitive training data from model outputs.

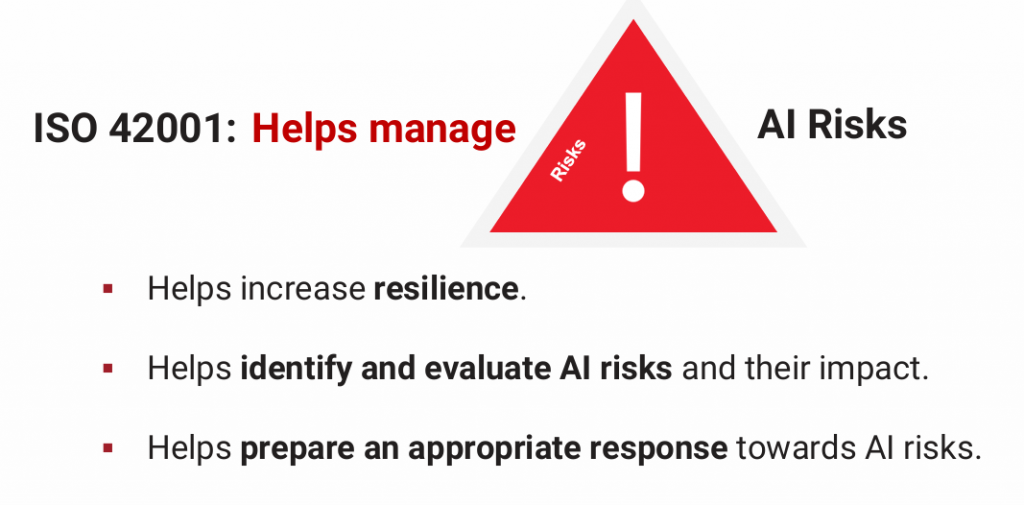

🧩 ISO 42001 as a GRC Framework

The standard supports Governance, Risk Management, and Compliance (GRC) by:

- Increasing organizational resilience.

- Identifying and evaluating AI risks.

- Guiding appropriate responses to those risks.
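To make the identify, evaluate, and respond cycle concrete, here is a minimal sketch of a risk register in Python. The 1 to 5 likelihood and impact scales, the score thresholds, and the sample entries are illustrative assumptions, not requirements taken from ISO/IEC 42001; an organization would substitute its own criteria.

```python
from dataclasses import dataclass


@dataclass
class AIRisk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Simple likelihood x impact scoring (an assumption, not mandated by the standard)
        return self.likelihood * self.impact


def response_for(risk: AIRisk) -> str:
    # Thresholds are hypothetical; tune them to your own risk appetite
    if risk.score >= 15:
        return "mitigate now"
    if risk.score >= 8:
        return "mitigate or transfer"
    return "accept and monitor"


# Sample entries with made-up ratings, for illustration only
register = [
    AIRisk("Data poisoning of training set", likelihood=2, impact=5),
    AIRisk("Model inversion leaks personal data", likelihood=3, impact=4),
    AIRisk("Faulty automated decision", likelihood=4, impact=4),
]

# Highest-scoring risks first
ranked = sorted(register, key=lambda r: r.score, reverse=True)
for risk in ranked:
    print(f"{risk.score:>2}  {risk.name}: {response_for(risk)}")
```

Sorting by score gives a simple priority order for treatment; the value of the register comes from reviewing and re-scoring it regularly, not from the arithmetic itself.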

🔗 ISO 27001 vs ISO 42001

- ISO 27001: Focuses on information security and privacy.

- ISO 42001: Focuses on responsible AI development, monitoring, and deployment.

Together, they offer a comprehensive risk management and compliance structure for organizations using or impacted by AI.

🏗️ Implementing ISO 42001

The standard follows a structured management system:

- Context: Understand stakeholders and external/internal factors, and define the scope of the AI management system.

- Leadership: Set the AI policy and assign internal roles.

- Planning: Assess AI system impacts and risks.

- Support: Allocate resources and inform stakeholders.

- Operations: Ensure responsible use and manage third-party risks.

- Evaluation: Monitor performance and conduct audits.

- Improvement: Drive continual improvement and corrective actions.
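One lightweight way to track progress across the seven areas above is a gap checklist. The sketch below is only an illustration: the clause numbers follow the common ISO management-system structure (clauses 4 to 10), while the `open_clauses` helper and the evidence entries are hypothetical.

```python
# Clause numbers follow the harmonized ISO management-system structure (4-10)
CLAUSES = {
    4: "Context",
    5: "Leadership",
    6: "Planning",
    7: "Support",
    8: "Operations",
    9: "Evaluation",
    10: "Improvement",
}


def open_clauses(evidence: dict[int, list[str]]) -> list[str]:
    """Return the clauses with no recorded evidence, in clause order."""
    return [name for num, name in CLAUSES.items() if not evidence.get(num)]


# Hypothetical evidence log: clause number -> documents collected so far
evidence = {
    4: ["stakeholder register"],
    5: ["AI policy v1"],
    6: ["AI impact assessment"],
    9: [],  # audit planned, nothing recorded yet
}

print(open_clauses(evidence))
# ['Support', 'Operations', 'Evaluation', 'Improvement']
```

A clause with an empty list counts as open, so planned but undocumented work still shows up as a gap.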

💬 My Take

ISO/IEC 42001 doesn’t hinder innovation—it channels it responsibly. In a world where AI can both empower and endanger, this standard offers a much-needed compass. It balances agility with accountability, helping organizations innovate without losing sight of ethics, safety, and trust. Far from being a brake, it’s the steering wheel for AI’s journey forward.


ISO/IEC 42001 can act as a catalyst for AI innovation by providing a clear framework for responsible governance, helping organizations balance creativity with compliance. However, if applied rigidly without alignment to business goals, it could become a constraint that slows decision-making and experimentation.
