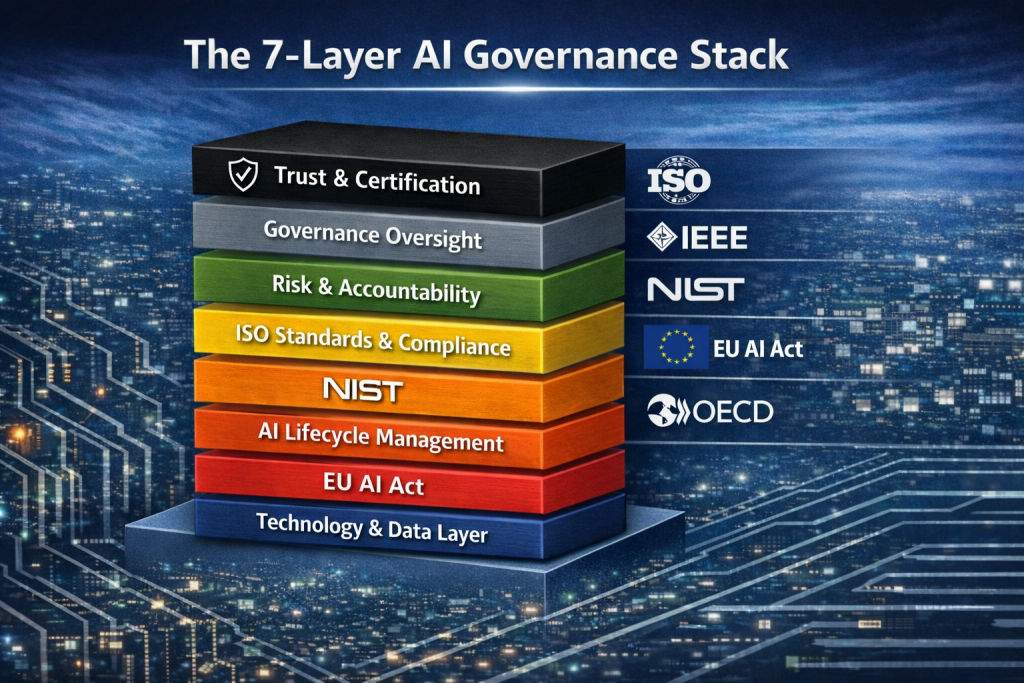

Defining the AI Governance Stack (Layers + Countermeasures)

1. Technology & Data Layer

This is the foundational layer where AI systems are built and operate. It includes infrastructure, datasets, machine learning models, APIs, cloud environments, and development platforms that power AI applications. Risks at this level include data poisoning, model manipulation, unauthorized access, and insecure pipelines.

Countermeasures: Secure data governance, strong access control, encryption, secure MLOps pipelines, dataset validation, and adversarial testing to protect model integrity.
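As one illustration of dataset validation, the sketch below records a SHA-256 digest for each approved dataset file and later flags any file whose content changed or went missing. The function names (`build_manifest`, `find_tampered`) and the in-memory file dictionary are assumptions made for this example, not part of any specific MLOps product.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Return the hex SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(files: dict) -> dict:
    """Record a trusted digest for each dataset file at approval time."""
    return {name: sha256_of(content) for name, content in files.items()}


def find_tampered(files: dict, manifest: dict) -> list:
    """List files whose digest differs from the manifest, is unknown to it,
    or that the manifest expects but are no longer present."""
    changed = {n for n, c in files.items() if manifest.get(n) != sha256_of(c)}
    missing = set(manifest) - set(files)
    return sorted(changed | missing)


# Hypothetical usage: approve a dataset, then re-check it before training.
approved = {"train.csv": b"a,b\n1,2\n", "labels.csv": b"y\n0\n"}
manifest = build_manifest(approved)
current = dict(approved, **{"train.csv": b"a,b\n1,999\n"})  # simulated poisoning
print(find_tampered(current, manifest))
```

A hash manifest only detects changes made after approval. It does not tell you whether the approved data was clean in the first place, which is why it sits alongside adversarial testing rather than replacing it.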

2. AI Lifecycle Management

This layer governs the entire lifecycle of AI systems—from design and training to deployment, monitoring, and retirement. Without lifecycle oversight, models may drift, produce harmful outputs, or operate outside their intended purpose.

Countermeasures: Implement lifecycle governance frameworks such as the AI Risk Management Framework (AI RMF) from the National Institute of Standards and Technology (NIST), together with ISO lifecycle practices for AI systems. Continuous monitoring, model validation, and AI system documentation are essential.
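Continuous monitoring for drift can be made concrete with a simple statistic. The sketch below uses the Population Stability Index (PSI), one common choice among several, to compare a baseline sample of a feature or model score with a live sample. The equal-width binning, the bin count, and the small floor for empty bins are assumptions of this example.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a live sample.

    Bins are equal-width over the baseline range; live values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor keeps log() defined when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical usage: compare training-time scores with production scores.
baseline = [i / 100 for i in range(100)]
live = [x + 0.5 for x in baseline]  # simulated distribution shift
print(round(psi(baseline, baseline), 6), psi(baseline, live) > 0.25)
```

A PSI above roughly 0.25 is a commonly cited rule of thumb for a significant shift, but the right alert threshold depends on the model and should be set as part of lifecycle governance.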

3. Regulation Layer

Regulation defines the legal obligations governing AI development and use. Governments worldwide are establishing regulatory regimes to address safety, privacy, and accountability risks associated with AI technologies.

Countermeasures: Regulatory compliance programs, legal monitoring, AI impact assessments, and alignment with regulations such as the EU AI Act and applicable national laws.

4. Standards & Compliance Layer

Standards translate regulatory expectations into operational requirements and technical practices that organizations can implement. They provide structured guidance for building trustworthy AI systems.

Countermeasures: Adopt international standards such as ISO/IEC 42001 and governance engineering frameworks from the Institute of Electrical and Electronics Engineers (IEEE) to ensure responsible design, transparency, and accountability.

5. Risk & Accountability Layer

This layer focuses on identifying, evaluating, and managing AI-related risks—including bias, privacy violations, security threats, and operational failures. It also defines who is responsible for decisions made by AI systems.

Countermeasures: Enterprise risk management integration, algorithmic risk assessments, impact analysis, internal audit oversight, and adoption of principles such as the OECD AI Principles.
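An algorithmic risk assessment usually ends up in a risk register with a named owner per entry. The sketch below is a minimal version that scores each risk as likelihood times impact and returns the entries that need escalation. The 1 to 5 scales, the threshold of 12, and the example risks and owners are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain); assumed scale
    impact: int      # 1 (negligible) to 5 (severe); assumed scale
    owner: str       # role accountable for the risk

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(risks, threshold=12):
    """Return risks at or above the threshold, highest score first."""
    flagged = [r for r in risks if r.score >= threshold]
    return sorted(flagged, key=lambda r: r.score, reverse=True)


# Hypothetical register entries, for illustration only.
register = [
    Risk("biased credit decisions", 4, 5, "Head of Model Risk"),
    Risk("training data privacy leak", 3, 4, "Data Protection Officer"),
    Risk("chatbot outage", 2, 2, "Platform Lead"),
]
for r in prioritize(register):
    print(f"{r.score:>2}  {r.name}  (owner: {r.owner})")
```

Keeping the owner on the same record as the score is the point of the design: the register answers both "how bad is it" and "who is accountable" in one place.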

6. Governance Oversight Layer

Governance oversight ensures that leadership, ethics boards, and risk committees supervise AI strategy and operations. This layer connects technical implementation with corporate governance and accountability structures.

Countermeasures: Establish AI governance committees, board-level oversight, policy frameworks, and internal controls aligned with organizational governance models.

7. Trust & Certification Layer

The top layer focuses on demonstrating trust externally through certification, assurance, and transparency. Organizations must show regulators, partners, and customers that their AI systems operate responsibly and safely.

Countermeasures: Independent audits, third-party certification programs, transparency reporting, and responsible AI disclosures aligned with global assurance standards.

AI Governance Is Becoming Infrastructure

The real challenge of AI governance has never been simply writing another set of ethical principles. While ethics guidelines and policy statements are valuable, they do not solve the structural problem organizations face: how to manage dozens of overlapping regulations, standards, and governance expectations across the AI lifecycle.

The fundamental issue is governance architecture. Organizations do not need more isolated principles or compliance checklists. What they need is a structured system capable of integrating multiple governance regimes into a single operational framework.

In practical terms, such governance architectures must integrate multiple frameworks simultaneously. These may include regulatory systems like the EU AI Act, governance standards such as ISO/IEC 42001, technical risk frameworks from the National Institute of Standards and Technology, engineering ethics guidance from the Institute of Electrical and Electronics Engineers, and global governance principles like the OECD AI Principles.
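One way to picture this integration is a control crosswalk: each internal control is recorded once and mapped to every framework it helps satisfy. The sketch below is a minimal data structure under that assumption. The framework names come from the paragraph above, while the control names and requirement identifiers are made-up placeholders, not real clause numbers.

```python
FRAMEWORKS = (
    "EU AI Act",
    "ISO/IEC 42001",
    "NIST AI RMF",
    "IEEE ethics guidance",
    "OECD AI Principles",
)

# Internal control -> {framework: [requirement ids]}.
# All requirement ids are placeholders, not real clause references.
CROSSWALK = {
    "model-inventory": {
        "EU AI Act": ["placeholder-1"],
        "ISO/IEC 42001": ["placeholder-2"],
        "NIST AI RMF": ["placeholder-3"],
    },
    "bias-testing": {
        "EU AI Act": ["placeholder-4"],
        "OECD AI Principles": ["placeholder-5"],
    },
    "incident-response": {
        "ISO/IEC 42001": ["placeholder-6"],
    },
}


def controls_by_framework(crosswalk):
    """Invert the crosswalk: framework -> set of controls that address it."""
    out = {}
    for control, refs in crosswalk.items():
        for framework in refs:
            out.setdefault(framework, set()).add(control)
    return out


def uncovered(crosswalk, frameworks):
    """Frameworks in scope that no internal control maps to yet."""
    covered = controls_by_framework(crosswalk)
    return [f for f in frameworks if f not in covered]


print(sorted(controls_by_framework(CROSSWALK)["EU AI Act"]))
print(uncovered(CROSSWALK, FRAMEWORKS))
```

With a structure like this, one piece of evidence (for example, a model inventory) can be reused across several regimes instead of being collected separately for each checklist.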

The complexity of the governance environment is significant. Today, organizations face more than one hundred AI governance frameworks, regulatory initiatives, standards, and guidelines worldwide. These systems frequently overlap, creating fragmentation that traditional compliance approaches struggle to manage.

Historically, global discussions about AI governance focused primarily on ethics principles, isolated compliance frameworks, or individual national regulations. However, the rapid expansion of AI technologies has transformed the governance landscape into a dense ecosystem of interconnected governance regimes.

This shift is reflected in emerging policy guidance, particularly the due diligence frameworks being promoted by international institutions. These approaches emphasize governance processes such as risk identification, mitigation, monitoring, and remediation across the entire lifecycle of AI systems rather than relying on standalone regulatory requirements.
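The due diligence process described here can be sketched as a small state machine that keeps an audit trail per risk. The four stages are taken from the paragraph above. The allowed transitions, including the loop from remediation back to monitoring, are an assumption of this example rather than something prescribed by a particular institution.

```python
from enum import Enum


class Stage(Enum):
    IDENTIFY = "risk identification"
    MITIGATE = "mitigation"
    MONITOR = "monitoring"
    REMEDIATE = "remediation"


# Assumed transition rules: monitoring can trigger remediation or surface
# a new risk; remediation always returns to monitoring.
ALLOWED = {
    Stage.IDENTIFY: {Stage.MITIGATE},
    Stage.MITIGATE: {Stage.MONITOR},
    Stage.MONITOR: {Stage.REMEDIATE, Stage.IDENTIFY},
    Stage.REMEDIATE: {Stage.MONITOR},
}


def advance(history, nxt):
    """Return a new audit trail with nxt appended, or raise if not allowed."""
    if not history:
        if nxt is not Stage.IDENTIFY:
            raise ValueError("a record must start with risk identification")
        return [nxt]
    current = history[-1]
    if nxt not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {nxt.value}")
    return history + [nxt]


# Hypothetical usage: one risk moving through the cycle.
trail = []
for step in (Stage.IDENTIFY, Stage.MITIGATE, Stage.MONITOR,
             Stage.REMEDIATE, Stage.MONITOR):
    trail = advance(trail, step)
print(" -> ".join(s.value for s in trail))
```

Rejecting out-of-order steps is what turns the process into evidence: the trail shows that a risk was mitigated and monitored before anyone claimed it was remediated.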

As a result, organizations are no longer dealing with a single governance framework. They are operating within a layered governance stack where regulations, standards, risk management frameworks, and operational controls must work together simultaneously.

Perspective on the Future of AI Governance

From my perspective, the next phase of AI governance will not be defined by new frameworks alone. The real transformation will occur when governance becomes infrastructure—a structured system capable of integrating regulations, standards, and operational controls at scale.

In other words, AI governance is evolving from policy into governance engineering. Organizations that build governance architectures—rather than simply chasing compliance—will be far better positioned to manage AI risk, demonstrate trust, and adapt to the rapidly expanding global regulatory environment.

For cybersecurity and governance leaders, this means treating AI governance the same way we treat cloud architecture or security architecture: as a foundational system that enables resilience, accountability, and trust in AI-driven organizations. 🔐🤖📊



