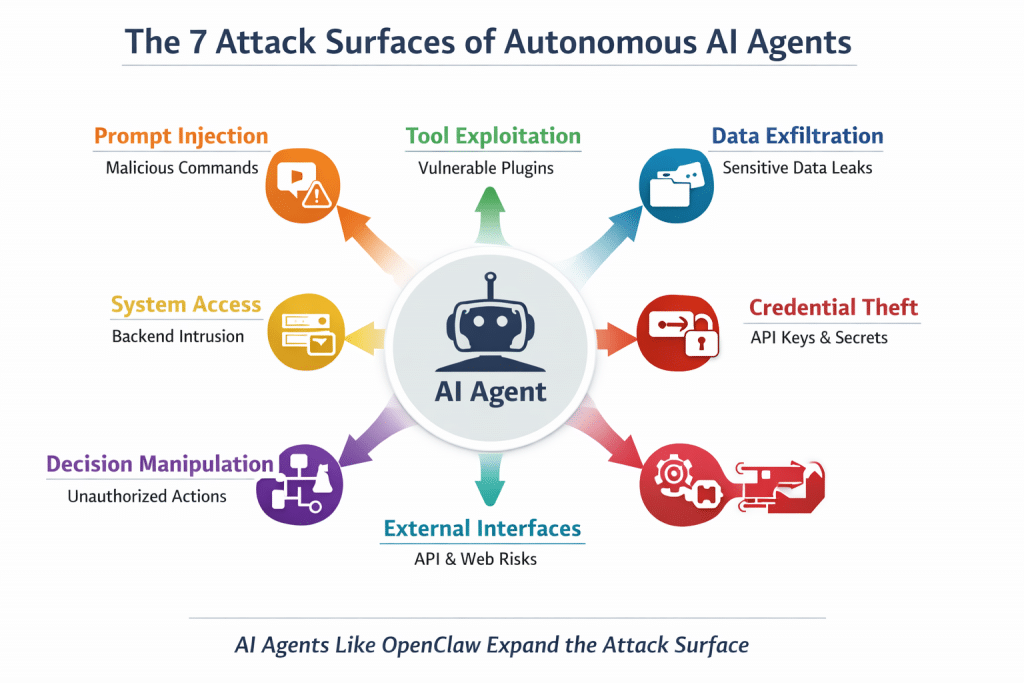

The Security Risks of Autonomous AI Agents Like OpenClaw

The rise of autonomous AI agents is transforming how organizations automate work. Platforms such as OpenClaw allow large language models to connect with real tools, execute commands, interact with APIs, and perform complex workflows on behalf of users.

Unlike traditional chatbots that simply generate responses, AI agents can take actions across enterprise systems—sending emails, querying databases, executing scripts, and interacting with business applications.

While this capability unlocks significant productivity gains, it also introduces a new and largely misunderstood security risk landscape. Autonomous AI agents expand the attack surface in ways that traditional cybersecurity programs were not designed to handle.

Below are the most critical security risks organizations must address when deploying AI agents.

1. Prompt Injection Attacks

One of the most common attack vectors against AI agents is prompt injection. Because large language models interpret natural language as instructions, attackers can craft malicious prompts that override the system’s intended behavior.

For example, a malicious webpage or document could contain hidden instructions that tell the AI agent to ignore its original rules and disclose sensitive data.

If the agent has access to enterprise tools or internal knowledge bases, prompt injection can lead to unauthorized actions, data leaks, or manipulation of automated workflows.

Defending against prompt injection requires input filtering, contextual validation, and strict separation between system instructions and external content.

2. Tool and Plugin Exploitation

AI agents rely on integrations with external tools, APIs, and plugins to perform tasks. These tools extend the capabilities of the AI but also create new opportunities for attackers.

If an attacker can manipulate the AI agent through crafted prompts, they may convince the system to invoke a tool in an unintended way.

For instance, an agent connected to a file system or cloud API could be tricked into downloading malicious files or sending confidential data externally.

This makes tool permission management and plugin security reviews essential components of AI governance.

3. Data Exfiltration Risks

AI agents often have access to enterprise data sources such as internal documents, CRM systems, databases, and knowledge repositories.

If compromised, the agent could inadvertently expose sensitive information through responses or automated workflows.

For example, an attacker could request summaries of internal documents or ask the AI agent to retrieve proprietary information.

Without proper controls, the AI system becomes a high-speed data extraction interface for adversaries.

Organizations must implement data classification, access restrictions, and output monitoring to reduce this risk.

4. Credential and Secret Exposure

Many AI agents store or interact with credentials such as API keys, authentication tokens, and system passwords required to access integrated services.

If these credentials are exposed through prompts or logs, attackers could gain unauthorized access to critical enterprise systems.

This risk is amplified when AI agents operate across multiple platforms and services.

Secure implementations should rely on secret vaults, scoped credentials, and zero-trust authentication models.

5. Autonomous Decision Manipulation

Autonomous AI agents can make decisions and trigger actions automatically based on prompts and data inputs.

This capability introduces the possibility of decision manipulation, where attackers influence the AI to perform harmful or fraudulent actions.

Examples may include approving unauthorized transactions, modifying records, or executing destructive commands.

To mitigate these risks, organizations should implement human-in-the-loop governance models and enforce validation workflows for high-impact actions.

6. Expanded AI Attack Surface

Traditional applications expose well-defined interfaces such as APIs and user portals. AI agents dramatically expand this attack surface by introducing:

- Natural language command interfaces

- External data retrieval pipelines

- Third-party tool integrations

- Autonomous workflow execution

This combination creates a complex and dynamic security environment that requires new monitoring and control mechanisms.

Why AI Governance Is Now Critical

Autonomous AI agents behave less like software tools and more like digital employees with privileged access to enterprise systems.

If compromised, they can move data, execute actions, and interact with infrastructure at machine speed.

This makes AI governance and LLM application security critical components of modern cybersecurity programs.

Organizations adopting AI agents must implement:

- AI risk management frameworks

- Secure LLM application architectures

- Prompt injection defenses

- Tool access controls

- Continuous AI monitoring and audit logging

Without these controls, AI innovation may introduce risks that traditional security models cannot effectively manage.

Final Thoughts

Autonomous AI agents represent the next phase of enterprise automation. Platforms like OpenClaw demonstrate how powerful these systems can become when connected to real-world tools and workflows.

However, with this power comes responsibility.

Organizations that deploy AI agents must ensure that security, governance, and risk management evolve alongside AI adoption. Those that do will unlock the benefits of AI safely, while those that do not may inadvertently expose themselves to a new generation of cyber threats.

Get Your Free AI Governance Readiness Assessment – Is your organization ready for ISO 42001, EU AI Act, and emerging AI regulations?

AI Governance Gap Assessment tool

- 15 questions

- Instant maturity score

- Detailed PDF report

- Top 3 priority gaps

Click below to open an AI Governance Gap Assessment in your browser or click the image to start assessment.

ai_governance_assessment-v1.5Download

Built by AI governance experts. Used by compliance leaders.

InfoSec services | InfoSec books | Follow our blog | DISC llc is listed on The vCISO Directory | ISO 27k Chat bot | Comprehensive vCISO Services | ISMS Services | AIMS Services | Security Risk Assessment Services | Mergers and Acquisition Security

At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.

- When AI Hacks Faster Than Humans: The Coming Collapse of Traditional Cybersecurity Value

- SOC 2 Isn’t Enough: Moving Beyond Compliance Theater to Real Risk Management

- When AI Becomes the Attack Surface: Lessons from the McKinsey Lilli Incident

- Why Every Company Needs a CISO (or at Least vCISO-Level Leadership)

- How ISO 27001 Lead Auditors Should Evaluate AI Risks in an ISMS