How to addresses the complex security challenges introduced by Large Language Models (LLMs) and agentic solutions.

Addressing the security challenges of large language models (LLMs) and agentic AI

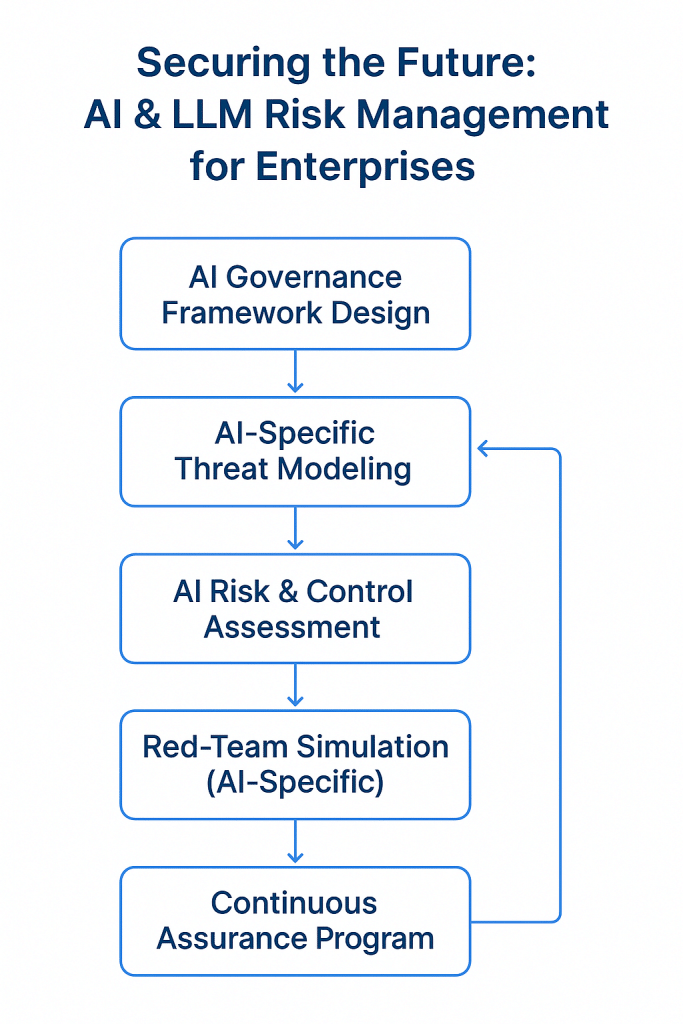

The session (Securing AI Innovation: A Proactive Approach) opens by outlining how the adoption of LLMs and multi-agent AI solutions has introduced new layers of complexity into enterprise security. Traditional governance frameworks, threat models and detection tools often weren’t designed for autonomous, goal-driven AI agents — leaving gaps in how organisations manage risk.

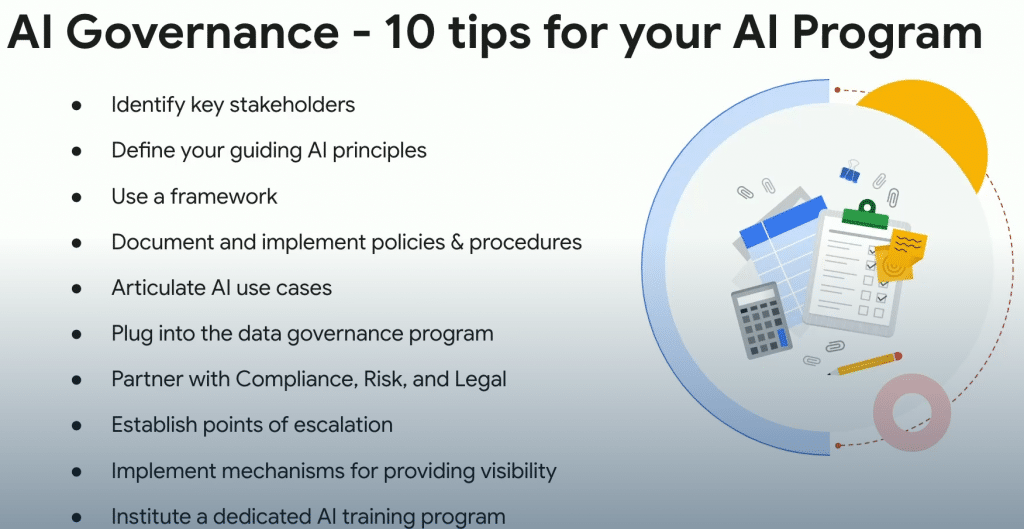

One of the root issues is insufficient integrated governance around AI deployments. While many organisations have policies for traditional IT systems, they lack the tailored rules, roles and oversight needed when an LLM or agentic solution can plan, act and evolve. Without governance aligned to AI’s unique behaviours, control is weak.

The session then shifts to proactive threat modelling for AI systems. It emphasises that effective risk management isn’t just about reacting to incidents but modelling how an AI might be exploited — e.g., via prompt injection, memory poisoning or tool misuse — and embedding those threats into design, before production.

It explains how AI-specific detection mechanisms are becoming essential. Unlike static systems, LLMs and agents have dynamic behaviours, evolving goals, and memory/context mechanisms. Detection therefore needs to be built for anomalies in those agent behaviours — not just standard security events.

The presenters share findings from a year of securing and attacking AI deployments. Lessons include observing how adversaries exploit agent autonomy, memory persistence, and tool chaining in real-world or simulated environments. These insights help shape realistic threat scenarios and red-team exercises.

A key practical takeaway: organisations should run targeted red-team exercises tailored to AI/agentic systems. Rather than generic pentests, these exercises simulate AI-specific attacks (for example manipulations of memory, chaining of agent tools, or goal misalignment) to challenge the control environment.

The discussion also underlines the importance of layered controls: securing the model/foundation layer, data and memory layers, tooling and agent orchestration layers, and the deployment/infrastructure layer — because each presents its own unique vulnerabilities in agentic systems.

Governance, threat modelling and detection must converge into a continuous feedback loop: model → deploy → monitor → learn → adapt. Because agentic AI behaviour can evolve, the risk profile changes post-deployment, so continuous monitoring and periodic re-threat-modelling are essential.

The session encourages organisations — especially those moving beyond single-shot LLM usage into long-horizon or multi-agent deployments — to treat AI not merely as a feature but as a critical system with its own security lifecycle, supply-chain, and auditability requirements.

Finally, it emphasises that while AI and agentic systems bring huge opportunity, the security challenges are real — but manageable. With integrated governance, proactive threat modelling, detection tuned for agent behaviours, and red-teaming tailored to AI, organisations can adopt these technologies with greater confidence and resilience.

AI/LLM Security Governance & Risk Assessment

Click the ISO 42001 Awareness Quiz — it will open in your browser in full-screen mode

Protect your AI systems — make compliance predictable.

Expert ISO-42001 readiness for small & mid-size orgs. Get a AI Risk vCISO-grade program without the full-time cost. Think of AI risk like a fire alarm—our register tracks risks, scores impact, and ensures mitigations are in place before disaster strikes.

Manage Your AI Risks Before They Become Reality.

Problem – AI risks are invisible until it’s too late

Solution – Risk register, scoring, tracking mitigations

Benefits – Protect compliance, avoid reputational loss, make informed AI decisions

We offer free high level AI risk scorecard in exchange of an email. info@deurainfosec.com

Secure Your Business. Simplify Compliance. Gain Peace of Mind

Check out our earlier posts on AI-related topics: AI topic

InfoSec services | InfoSec books | Follow our blog | DISC llc is listed on The vCISO Directory | ISO 27k Chat bot | Comprehensive vCISO Services | ISMS Services | Security Risk Assessment Services | Mergers and Acquisition Security

- AI Vulnerability Scorecard: Discover Your AI Attack Surface Before Attackers Do

- API Security in the Age of AI: Why Vulnerability Assessment Is Non-Negotiable

- AI Attack Surface ScoreCard

- AI-Accelerated Offense: Why Security Programs Must Move Now, Not Later

- AI Governance Explained: Accountability, Trust, and Control in the Age of AI

November 22nd, 2025 1:01 pm

[…] Complete the ISO 42001 Gap Assessment → […]