In today’s threat landscape, where cyber incidents, ransomware, and data breaches are constant rather than rare, organizations must treat information security as a core business priority, not just an IT function. The growing complexity of digital environments, together with cloud adoption and emerging technologies like AI, has made cyber risk a business risk that demands executive-level ownership.

At the center of this shift is the Chief Information Security Officer (CISO)—a role that has evolved far beyond technical oversight. Today’s CISO is responsible for aligning security with business strategy, managing enterprise and third-party risks, ensuring regulatory compliance, and embedding security into every layer of the organization. More importantly, the CISO acts as a bridge between leadership and technical teams, translating complex cyber risks into business decisions that executives can act on.

A critical function of the CISO is leadership during uncertainty. When incidents occur, the CISO leads response efforts, coordinates communication, ensures compliance with regulatory obligations, and drives recovery, all while minimizing financial, operational, and reputational damage. This level of accountability cannot simply be spread across roles like the CIO, CRO, or CPO; it requires a dedicated security leader focused specifically on protecting the organization from evolving cyber threats.

From a governance perspective, frameworks like ISO/IEC 27001 emphasize the need for clearly defined security leadership, accountability, and continuous risk management. While the title “CISO” may not always be explicitly required, the function is essential. Organizations that lack this leadership often struggle with fragmented security efforts, compliance gaps, and misalignment between business objectives and security controls.

At DISC InfoSec, we see this gap every day—especially in small and mid-sized organizations. Not every company needs a full-time CISO, but every company does need CISO-level leadership. That’s where our vCISO and advisory services come in. We help organizations establish strategic security governance, align with ISO 27001 and emerging standards like ISO 42001, and build audit-ready, risk-driven programs that scale with the business.

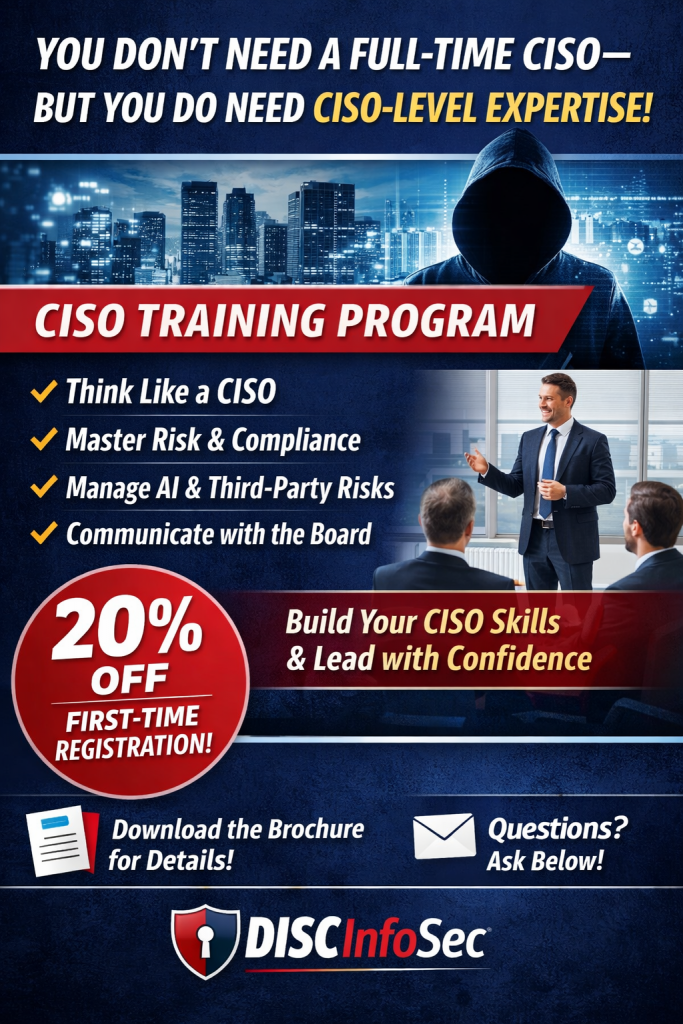

A CISO Training offering by DISC InfoSec:

🚨 You Don’t Need a Full-Time CISO—But You Do Need CISO-Level Expertise

Cyber risk is no longer just an IT problem—it’s a business risk, a compliance risk, and a leadership challenge. Yet many organizations still lack the expertise needed to lead security at the executive level.

That’s where most companies struggle…

Not because they don’t invest in tools—but because they lack trained leadership to govern security effectively.

💡 Introducing DISC InfoSec CISO Training

At DISC InfoSec, we equip professionals with the skills, frameworks, and strategic mindset required to operate at the CISO level—without the trial-and-error.

Our training helps you:

✔ Think like a CISO—align security with business objectives

✔ Master risk management across ISO 27001 and emerging AI standards (ISO 42001)

✔ Lead audits, compliance, and governance programs with confidence

✔ Manage third-party and AI-driven risks effectively

✔ Communicate cyber risk to executives and board members

🎯 Who Should Attend?

• Aspiring CISOs / vCISOs

• GRC & Compliance Professionals

• Security Leaders & Architects

• IT Managers transitioning into leadership roles

• Consultants delivering security advisory services

🔥 Why DISC InfoSec?

We don’t just teach theory—we bring real-world consulting experience into every session. You’ll walk away with practical frameworks, templates, and playbooks you can apply immediately.

📩 Ready to Step Into a CISO Role?

Join our CISO Training Program and start leading security, not just managing it. The program is reasonably priced, includes the exam fee, and awards a certification upon successful completion.

The course can be organized as a self-study or classroom training event. Take advantage of a 20% discount on your first course registration. Review all the course details by downloading the brochure at your convenience. Have a question? Enter it in the message box at the end of this post.

A future-ready CISO training program goes beyond reacting to today’s threats—it develops leaders who can anticipate disruption, align security with business strategy, and confidently navigate uncertainty. It blends strategic thinking, emerging technology awareness, and hands-on leadership skills to prepare CISOs for a rapidly evolving risk landscape.

The top six features of modern CISO training, along with added perspective:

| Feature | Description | Why It Matters (Perspective) |
|---|---|---|
| Strategic Leadership Focus | Training emphasizes business alignment, executive communication, and long-term security vision rather than purely technical depth. | The CISO role has shifted into the boardroom. Success depends on influencing decisions, securing budgets, and tying security to revenue protection and growth. |
| AI & Automation Readiness | Covers AI-powered threats, defensive use of AI, and governance frameworks for responsible AI adoption. | AI is both a weapon and a shield. CISOs who don’t understand AI risk being outpaced by adversaries who already do. |
| Cloud & Identity-Centric Security | Focuses on Zero Trust, multi-cloud environments, and identity as the new perimeter. | Traditional network boundaries are gone. Identity and access control are now the frontline of defense in distributed environments. |
| Cyber Resilience & Crisis Leadership | Prepares leaders for breach inevitability with incident response, crisis management, and recovery planning. | Prevention alone is unrealistic. The real differentiator is how fast and effectively an organization can respond and recover. |
| Risk & Regulatory Intelligence | Builds expertise in global regulations, privacy laws, and third-party risk management. | Compliance is no longer optional; it’s a business enabler. CISOs must translate regulatory pressure into structured risk programs. |
| Human-Centric Security Leadership | Focuses on culture-building, behavioral risk, and stakeholder engagement across the organization. | Technology doesn’t fail; people and processes do. Strong security culture is often the most effective and scalable control. |

Perspective

The biggest shift in CISO training is this: it’s no longer about producing security experts—it’s about producing risk executives.

Future-looking programs should feel closer to an MBA in cyber leadership than a technical certification. The CISOs who will stand out are those who can connect cybersecurity to business value, leverage AI intelligently, and lead through ambiguity—not just manage controls.



At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
