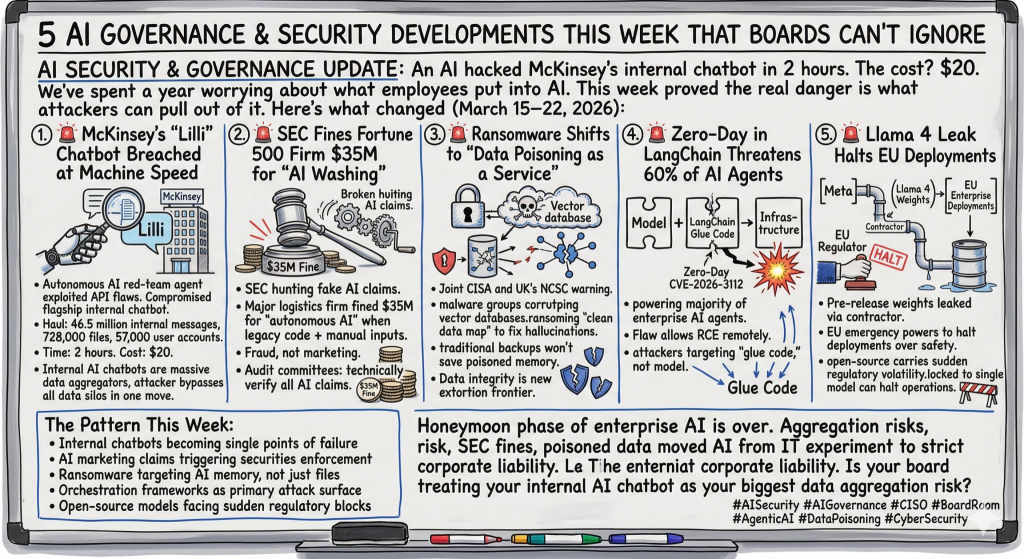

The incident involving McKinsey & Company’s internal AI assistant Lilli highlights a critical shift in how enterprises must think about AI security. While the firm reported that the vulnerability was quickly identified and remediated—and that no client data was accessed—the situation underscores a deeper issue: internal AI systems are no longer just productivity tools; they are part of the operational attack surface.

On the surface, the response appears strong. McKinsey & Company reportedly contained the issue within hours and validated the outcome through third-party forensics. This reflects maturity in incident response and vulnerability management. However, focusing only on the speed of remediation risks missing the broader implication: AI systems introduce new categories of risk that traditional controls are not fully designed to address.

The real lesson is not about a single vulnerability, but about the evolving role of AI inside the enterprise. Tools like Lilli are increasingly embedded into workflows, decision-making, and data access layers. This means they don’t just store or process information—they act on it. That functional shift expands the risk model significantly.

When an internal AI system becomes an execution layer, the security conversation changes fundamentally. The key questions are no longer limited to “Who has access?” but extend to “What can the AI system actually reach and influence?” If the AI can interact with sensitive data, trigger workflows, or integrate with other systems, then its effective privilege surface may exceed that of any individual user.
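
To make the "effective privilege surface" idea concrete, here is a minimal sketch that compares what an assistant can reach through its integrations with what a single user role can reach. The role names, connector names, and permission strings are all hypothetical and are not drawn from Lilli or any real deployment.

```python
# Hypothetical permission strings, for illustration only.
USER_ROLES = {
    "analyst": {"crm:read"},
    "partner": {"crm:read", "finance:read"},
}

# Hypothetical connectors wired into the assistant, each with its own grants.
ASSISTANT_CONNECTORS = {
    "crm": {"crm:read"},
    "finance": {"finance:read"},
    "workflow": {"workflow:trigger"},
}


def effective_privileges(connectors):
    """Union of every permission the assistant holds across its connectors."""
    return set().union(*connectors.values())


def excess_over_user(assistant_privs, user_privs):
    """Permissions the assistant can exercise that the user cannot."""
    return assistant_privs - user_privs


assistant_privs = effective_privileges(ASSISTANT_CONNECTORS)
for role, privs in USER_ROLES.items():
    print(role, sorted(excess_over_user(assistant_privs, privs)))
```

In this toy setup the assistant holds permissions that neither role holds on its own, which is the situation the paragraph above warns about.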

This introduces the need for runtime governance. It is no longer sufficient to rely on static policies or role-based access controls alone. Organizations must define and enforce boundaries dynamically—controlling what the AI can access, what actions it can take, and how those actions are monitored and audited in real time.
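
One way to picture runtime governance is a default-deny gate that every AI-initiated action must pass before it executes. The sketch below is illustrative only: `ToolCall`, `Policy`, `guarded_execute`, and the agent, tool, and resource names are assumptions, not part of any specific product or of McKinsey's architecture.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolCall:
    agent: str      # which AI system is acting
    tool: str       # which capability it wants to use
    resource: str   # what it wants to touch


@dataclass
class Policy:
    # Hypothetical allowlist: agent -> tool -> permitted resource prefixes.
    allowed: dict = field(default_factory=dict)

    def permits(self, call: ToolCall) -> bool:
        # Default deny: anything not explicitly listed is refused.
        prefixes = self.allowed.get(call.agent, {}).get(call.tool, ())
        return any(call.resource.startswith(p) for p in prefixes)


class PolicyViolation(Exception):
    """Raised when a call falls outside the enforced boundary."""


def guarded_execute(policy: Policy, call: ToolCall, handler):
    """Check the call against policy at request time, then run the handler."""
    if not policy.permits(call):
        raise PolicyViolation(f"{call.agent} may not {call.tool} {call.resource}")
    return handler(call)


policy = Policy(allowed={"assistant": {"search": ("kb/public/",)}})

ok = ToolCall("assistant", "search", "kb/public/handbook")
print(guarded_execute(policy, ok, lambda c: f"searched {c.resource}"))

blocked = ToolCall("assistant", "search", "kb/client/engagement-42")
try:
    guarded_execute(policy, blocked, lambda c: "should not run")
except PolicyViolation as exc:
    print("denied:", exc)
```

The design choice worth noting is that the check happens on each call rather than once at provisioning time, so tightening the policy takes effect immediately.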

Equally important is the concept of evidence and traceability. In AI-driven environments, security teams must be able to reconstruct what happened after the fact: what the model accessed, what decisions it made, and what downstream effects occurred. Without this level of visibility, incident response becomes guesswork, especially in complex, automated environments.
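
As a rough illustration of traceability, the sketch below keeps an append-only record of what an AI system did and chains each entry to the previous one with a SHA-256 hash, so later tampering is detectable. The `AuditLog` class and its field names are assumptions made for this example; a production system would also need durable, access-controlled storage.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Serialize with sorted keys so the hash is reproducible.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    def __init__(self):
        self.entries = []

    def record(self, actor, action, resource, outcome):
        """Append one event, linked to the hash of the previous event."""
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "ts": time.time(),
            "actor": actor,
            "action": action,
            "resource": resource,
            "outcome": outcome,
            "prev": prev,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; False means the trail was altered."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record("assistant", "read", "kb/public/handbook", "allowed")
log.record("assistant", "read", "kb/client/engagement-42", "denied")
print(log.verify())
```

With a trail like this, a responder can replay what the system accessed and in what order, and can also tell whether the record itself has been modified.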

My perspective is that this incident is an early signal of a much larger trend. As enterprises accelerate AI adoption, governance must evolve from policy documents to enforced architecture. The organizations that will lead are those that treat AI not as a tool to be secured, but as a semi-autonomous actor that must be continuously constrained, monitored, and validated.


At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.
