Understanding the Evolution of AI: Traditional, Generative, and Agentic

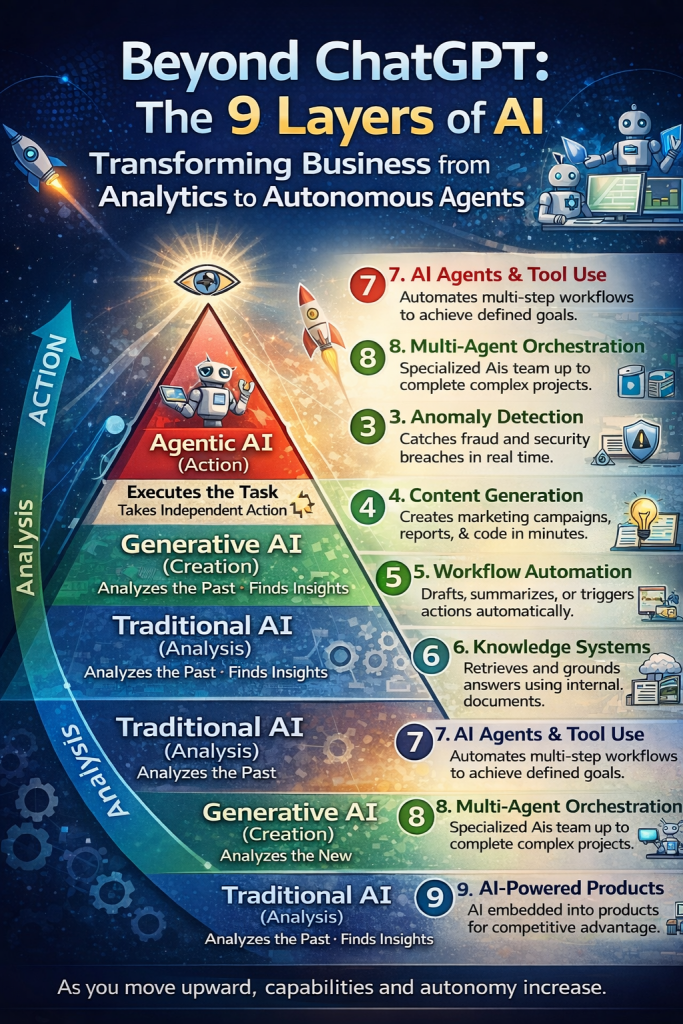

Artificial Intelligence is often associated only with tools like ChatGPT, but AI is much broader. In reality, there are multiple layers of AI capabilities that organizations use to analyze data, generate new information, and increasingly take autonomous action. These capabilities can generally be grouped into three categories: Traditional AI (analysis), Generative AI (creation), and Agentic AI (autonomous execution). As you move up these layers, the level of automation, intelligence, and independence increases.

Traditional AI

Traditional AI focuses primarily on analyzing historical data and recognizing patterns. These systems use statistical models and machine learning algorithms to identify trends, categorize information, and detect irregularities. Traditional AI is commonly used in financial modeling, fraud detection, and operational analytics. It does not create new information or take independent action; instead, it provides insights that humans use to make decisions.

From a security standpoint, organizations should secure Traditional AI systems by implementing data governance, model integrity controls, and monitoring for model drift or adversarial manipulation.

1. Predictive Analytics

Predictive analytics uses historical data and machine learning algorithms to forecast future outcomes. Businesses rely on predictive models to estimate customer churn, forecast demand, predict equipment failures, and anticipate financial risks. By identifying patterns in past behavior, predictive analytics helps organizations make proactive decisions rather than reacting to problems after they occur.

To secure predictive analytics systems, organizations should ensure training data integrity, protect models from data poisoning attacks, and implement strict access controls around model inputs and outputs.

2. Classification Systems

Classification systems automatically categorize data into predefined groups. In business operations, these systems are widely used for sorting customer support tickets, detecting spam emails, routing financial transactions, or labeling large datasets. By automating categorization tasks, classification models significantly improve operational efficiency and reduce manual workloads.

Securing classification systems requires strong data labeling governance, protection against adversarial inputs designed to misclassify data, and continuous monitoring of model accuracy and bias.

3. Anomaly Detection

Anomaly detection systems identify unusual patterns or behaviors that deviate from normal operations. This type of AI is commonly used for fraud detection, cybersecurity monitoring, financial irregularities, and system health monitoring. By identifying anomalies in real time, organizations can detect threats or failures before they cause significant damage.

Security for anomaly detection systems should focus on ensuring reliable baseline data, preventing manipulation of detection thresholds, and integrating alerts with incident response and security monitoring systems.

Generative AI

Generative AI represents the next stage of AI capability. Instead of just analyzing information, these systems create new content, ideas, or outputs based on patterns learned during training. Generative AI models can produce text, images, code, or reports, making them powerful tools for productivity and innovation.

To secure generative AI, organizations must implement AI governance policies, control sensitive data exposure, and monitor outputs to prevent misinformation, data leakage, or malicious prompt manipulation.

4. Content Generation

Content generation AI can automatically produce written reports, marketing copy, emails, code, or visual content. These tools dramatically accelerate creative and operational work by generating drafts within seconds rather than hours or days. Businesses increasingly rely on these systems for marketing, documentation, and customer engagement.

To secure content generation systems, organizations should enforce prompt filtering, data protection policies, and human review mechanisms to prevent sensitive information leakage or harmful outputs.

5. Workflow Automation

Workflow automation integrates AI capabilities into business processes to assist with repetitive operational tasks. AI can summarize meetings, draft responses, process forms, and trigger automated actions across enterprise applications. This type of automation helps streamline workflows and improve operational efficiency.

Securing AI-driven workflows requires strong identity and access management, API security, and logging of AI-driven actions to ensure accountability and prevent unauthorized automation.

6. Knowledge Systems (Retrieval-Augmented Generation)

Knowledge systems combine generative AI with enterprise data retrieval systems to produce context-aware answers. This approach, often called Retrieval-Augmented Generation (RAG), allows AI to access internal company documents, policies, and knowledge bases to generate accurate responses grounded in trusted data sources.

Security for knowledge systems should include strict data access controls, encryption of internal knowledge repositories, and protections against prompt injection attacks that attempt to expose sensitive information.

Agentic AI

Agentic AI represents the most advanced stage in the evolution of AI systems. Instead of simply analyzing or generating information, these systems can take actions and pursue goals autonomously. Agentic AI systems can coordinate tasks, interact with external tools, and execute workflows with minimal human intervention.

To secure Agentic AI systems, organizations must implement robust governance frameworks, permission boundaries, and real-time monitoring to prevent unintended actions or system misuse.

7. AI Agents and Tool Use

AI agents are autonomous systems capable of interacting with software tools, APIs, and enterprise applications to complete tasks. These agents can schedule meetings, update CRM systems, send emails, or perform operational activities within defined permissions. They operate as digital assistants capable of executing tasks rather than just recommending them.

Security for AI agents requires strict role-based permissions, sandboxed execution environments, and approval mechanisms for sensitive actions.

8. Multi-Agent Orchestration

Multi-agent orchestration involves multiple AI agents working together to accomplish complex objectives. Each agent may specialize in a specific task such as research, analysis, decision-making, or execution. These coordinated systems allow organizations to automate entire workflows that previously required multiple human roles.

To secure multi-agent systems, organizations should deploy centralized orchestration governance, communication monitoring between agents, and policy enforcement to prevent cascading failures or unauthorized collaboration between systems.

9. AI-Powered Products

The final layer involves embedding AI directly into products and services. Instead of being used internally, AI becomes part of the product offering itself, providing customers with intelligent features such as recommendations, automation, or decision support. Many modern software platforms now integrate AI to deliver competitive advantage and enhanced user experiences.

Securing AI-powered products requires secure model deployment pipelines, protection of customer data, model lifecycle management, and continuous monitoring for vulnerabilities and misuse.

Key Evolution Across AI Layers

The evolution of AI can be summarized as follows:

- Traditional AI analyzes past data to generate insights.

- Generative AI creates new content and information.

- Agentic AI executes tasks and pursues goals autonomously.

As organizations adopt higher levels of AI capability, they also introduce greater levels of autonomy and risk, making governance and security increasingly important.

Perspective: The Future of Autonomous AI

We are entering an era where AI will increasingly function as digital workers rather than just digital tools. Over the next few years, organizations will move from isolated AI experiments toward AI-driven operational systems that manage workflows, coordinate tasks, and make decisions at scale.

However, the shift toward autonomous AI also introduces new security challenges. AI systems will require strong governance frameworks, accountability mechanisms, and risk management strategies similar to those used for human employees. Organizations that succeed will not simply deploy AI but will integrate AI governance, cybersecurity, and risk management into their AI strategy from the start.

In the near future, most enterprises will operate with a hybrid workforce consisting of humans and AI agents working together. The organizations that gain competitive advantage will be those that combine multiple AI capabilities—analytics, generation, and autonomous execution—while maintaining strong AI security, compliance, and oversight.

InfoSec services | InfoSec books | Follow our blog | DISC llc is listed on The vCISO Directory | ISO 27k Chat bot | Comprehensive vCISO Services | ISMS Services | AIMS Services | Security Risk Assessment Services | Mergers and Acquisition Security

At DISC InfoSec, we help organizations navigate this landscape by aligning AI risk management, governance, security, and compliance into a single, practical roadmap. Whether you are experimenting with AI or deploying it at scale, we help you choose and operationalize the right frameworks to reduce risk and build trust. Learn more at DISC InfoSec.

- SOC 2 Isn’t Enough: Moving Beyond Compliance Theater to Real Risk Management

- When AI Becomes the Attack Surface: Lessons from the McKinsey Lilli Incident

- Why Every Company Needs a CISO (or at Least vCISO-Level Leadership)

- How ISO 27001 Lead Auditors Should Evaluate AI Risks in an ISMS

- Secure Your Web & API Applications Before Attackers Do: Reduce Vulnerabilities